Public cloud and telecommunication service providers are following the lead of hyperscale datacenter operators such as Google and Amazon. They are looking for ways to increase efficiency, flexibility, and agility, and so are turning to disaggregation and server virtualization as critical tenets of their modernization efforts. In doing so, however, they are running into several challenges. First, virtualization imposes significant performance penalties because it uses host CPU cycles for network packet processing. Second, these new datacenter architectures bring considerable security threats, and traditional strategies that rely only on perimeter-based security are no longer sufficient.

The evolution from appliance-centric, centralized networking to open, disaggregated, and distributed networking is happening all around you. Hyperscale datacenters succeeded by simplifying the physical network design and widely distributing networking and security tasks among the thousands of endpoints in the network. This approach enables scale-out growth and keeps the network agile and flexible for future changes. It also allows zero-trust, stateful security to be woven into the fabric of the network by placing security functions at every virtual machine (VM) instance, tenant, and application.

Virtual switching

Public cloud, service providers, and telecommunication providers are looking to adopt this approach by using Open vSwitch (OVS). OVS enables the hypervisor to switch traffic transparently between VMs and the outside world, which is especially important when the security applications themselves are virtualized network functions (VNFs), such as firewalls, DDoS blockers, and VPNs.

Despite the flexibility that virtual switching provides, it also brings challenges: poor I/O throughput, unpredictable application performance, and high CPU overhead. To address them, NVIDIA networking developed ASAP2 (Accelerated Switching and Packet Processing) technology. ASAP2 provides a programmable, high-performance, and highly efficient packet forwarding plane in hardware that works seamlessly with the OpenStack and Kubernetes management planes to overcome the performance and efficiency degradation associated with software virtual switching.

When implementing security services within a scale-out datacenter, zero-trust stateful security is the most effective approach. However, as mentioned earlier, the security services must then compete with applications for the same server resources. They risk performance penalties in the form of lost efficiency and high CPU utilization, which can erase many of the benefits of cloud-scale networking.

Running security features on NVIDIA ConnectX SmartNICs or BlueField data processing units (DPUs), which are designed to accelerate networking functions, can restore that efficiency and let the compute resources focus on application processing. In effect, this scales up each server by increasing its VM capacity, which increases the return on investment for every server deployed.

Efficient networking means efficient scaling

With efficient networking, a datacenter’s service capacity can grow at the same linear pace as its server footprint. An often-overlooked assumption behind this approach is that most, if not all, of the CPU resources in each server are available to run application workloads. To scale efficiently, datacenter security tasks are broken up into small, manageable workloads and distributed across the compute power of many (hundreds to thousands of) servers. However, deploying security components on the servers once again puts security services in competition with applications for CPU compute power, which is counterproductive to the revenue goals of the datacenter.

Security processing functions are a fundamental technology in building secure datacenters. However, without SmartNIC or DPU acceleration and offloads, IT applications can be adversely affected as server-based virtual switching chews up processing power. Customers are forced to choose between strong security and fast, efficient servers.

Some of the security functions that should be deployed at every endpoint are as follows:

- Security groups and virtual private clouds (VPCs): A VPC is a logically isolated virtual network within a shared cloud. A security group is a collection of rules that specify whether to allow traffic for an associated virtual server; it acts as a virtual firewall for one or more servers. When a virtual server is created, it can be assigned to one or more security groups that limit its traffic so that only authorized users can reach it.

- Firewalls: Stateful firewalls rely on connection tracking information to precisely block or allow incoming traffic, using a defined list of approved or blocked addresses. This allows administrators to define network policies that allow certain types of connections to selected remote IP addresses without needing to block or allow that traffic for all servers.

- DDoS protection: A distributed denial-of-service (DDoS) attack occurs when many sources flood a targeted system with useless traffic that consumes its bandwidth or all its other available resources, rendering it unusable.

- Network address translation: NAT shields the inside network from the outside world. NAT relies on connection tracking information so that it can translate every packet in a flow the same way.

- VPN: A virtual private network (VPN) routes network traffic through an encrypted tunnel, providing secure access while hiding the endpoint’s real IP address. Encrypting the traffic protects the connection from man-in-the-middle attacks, and hiding the real address makes the endpoint harder to target with a DDoS attack.

- Compliance: Tracking and managing connections to servers helps meet specific regulatory guidelines and legal requirements.
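
As an illustration of the security-group semantics described above, the following Python sketch assumes allow-only rules with an implicit default deny, which is how OpenStack and AWS security groups behave. The `Rule` and `SecurityGroup` types and the `is_allowed` helper are hypothetical names chosen for this example. Traffic is permitted if any rule in any group attached to the server matches its direction, protocol, port, and remote address.

```python
import ipaddress
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Rule:
    """One allow rule (hypothetical model of a security-group rule)."""
    direction: str    # "ingress" or "egress"
    protocol: str     # "tcp", "udp", or "any"
    port_min: int
    port_max: int
    remote_cidr: str  # e.g. "10.0.0.0/8"

    def matches(self, direction: str, protocol: str, port: int, remote_ip: str) -> bool:
        if self.direction != direction:
            return False
        if self.protocol not in ("any", protocol):
            return False
        if not self.port_min <= port <= self.port_max:
            return False
        return ipaddress.ip_address(remote_ip) in ipaddress.ip_network(self.remote_cidr)


@dataclass
class SecurityGroup:
    name: str
    rules: list = field(default_factory=list)


def is_allowed(groups, direction, protocol, port, remote_ip) -> bool:
    """Allow-only semantics: permit if any rule in any attached group matches, else deny."""
    return any(rule.matches(direction, protocol, port, remote_ip)
               for group in groups for rule in group.rules)


if __name__ == "__main__":
    web = SecurityGroup("web", [Rule("ingress", "tcp", 443, 443, "0.0.0.0/0")])
    admin = SecurityGroup("admin", [Rule("ingress", "tcp", 22, 22, "10.0.0.0/8")])
    vm_groups = [web, admin]
    print(is_allowed(vm_groups, "ingress", "tcp", 443, "203.0.113.7"))  # True
    print(is_allowed(vm_groups, "ingress", "tcp", 22, "203.0.113.7"))   # False
    print(is_allowed(vm_groups, "ingress", "tcp", 22, "10.1.2.3"))      # True
```

Because a server can belong to several groups, the check is a union: under allow-only semantics, attaching another group can only open access, never restrict it.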

Connection tracking for stateful security

In today’s multi-tenant datacenters, there is significant potential for threats to move into and out of the datacenter. To adequately protect tenants and application workloads in these zero-trust environments, security functions must be distributed on a per-application or per-VM basis and associated directly with the tenants and workloads.

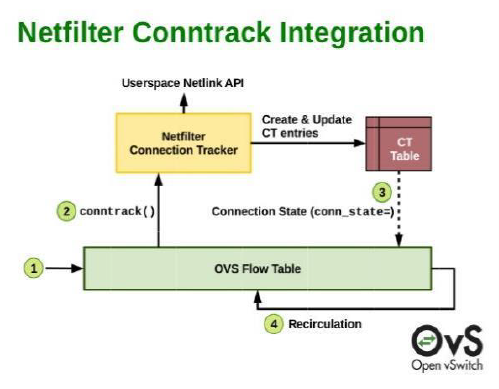

Netfilter is the packet-filtering framework in the Linux kernel, and OpenStack relies on it to implement security groups. Built on top of Netfilter, the connection tracking (conntrack) module provides a mechanism for implementing stateful security on a per-VM basis. Conntrack runs in software within the Linux kernel, and it is CPU-intensive, significantly degrading the performance of the server networking datapath. Running OVS over DPDK can help accelerate stateful connection tracking, but it still does so at the expense of consuming CPU cores.
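
For reference, OVS expresses per-port conntrack policy with its `ct` action and `ct_state` match field. The Python sketch below only generates `ovs-ofctl` flow rules in the style of the OVS conntrack tutorial; the function name and the port numbering (VM on OpenFlow port 1, uplink on port 2) are illustrative assumptions. Untracked IP packets are sent through conntrack, connections opened by the VM are committed, established traffic is allowed in both directions, and new inbound connections are dropped.

```python
from __future__ import annotations


def stateful_flows(vm_port: int, uplink_port: int) -> list[str]:
    """Return ovs-ofctl flow lines that admit only connections the VM opens."""
    return [
        # Table 0: let ARP through with normal L2 processing.
        "table=0,priority=10,arp,actions=normal",
        # Table 0: send untracked IP packets through conntrack, then on to table 1.
        "table=0,priority=100,ip,ct_state=-trk,actions=ct(table=1)",
        # Table 0: drop anything else.
        "table=0,priority=1,actions=drop",
        # Table 1: a new connection from the VM is committed to conntrack and forwarded.
        f"table=1,priority=100,in_port={vm_port},ip,ct_state=+trk+new,"
        f"actions=ct(commit),output:{uplink_port}",
        # Table 1: established traffic is forwarded in both directions.
        f"table=1,priority=100,in_port={vm_port},ip,ct_state=+trk+est,"
        f"actions=output:{uplink_port}",
        f"table=1,priority=100,in_port={uplink_port},ip,ct_state=+trk+est,"
        f"actions=output:{vm_port}",
        # Table 1: new connections from the network are dropped, as is anything
        # else (for example, packets that conntrack marks as invalid).
        f"table=1,priority=100,in_port={uplink_port},ip,ct_state=+trk+new,actions=drop",
        "table=1,priority=1,actions=drop",
    ]


if __name__ == "__main__":
    # Hypothetical numbering: VM on OpenFlow port 1, uplink on port 2.
    print("\n".join(stateful_flows(vm_port=1, uplink_port=2)))
```

The output could be loaded with something like `python3 flows.py | ovs-ofctl add-flows br0 -`, where `flows.py` and `br0` are placeholder names. Whether the resulting `ct` actions execute in the kernel datapath, in OVS-DPDK, or in NIC hardware is a property of the datapath configuration (for example, OVS’s `other_config:hw-offload` option), not of the rules themselves.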

Distributing stateful connection tracking can improve security application performance, but only at the expense of server computing efficiency. The goal is to pack as many applications as possible onto each server without increasing the capital cost of server deployment. The need for a better solution is evident!

ASAP2 to the rescue

The ASAP2 vSwitch/vRouter offload solution is well proven. It offloads the host CPU by performing policy-driven packet forwarding and traffic steering in hardware. ASAP2 delivers its efficiency gains by speeding up packet forwarding rates by up to 10X while freeing up all the CPU cores previously used to process datapath networking traffic. Further, ASAP2 retains the SDN principles of a software-driven control plane and policy management without sacrificing performance or efficiency.

However, the industry-standard OVS hardware offload architecture that ASAP2 adheres to did not originally account for the statefulness of connections. Every TCP packet that an application sends must be looked up and handled according to the provisioned policy as if it were a new packet seen for the first time. This works well enough for connectionless traffic such as UDP, but not for connection-oriented protocols like TCP. Making all packet handling stateless results in inefficient forwarding.

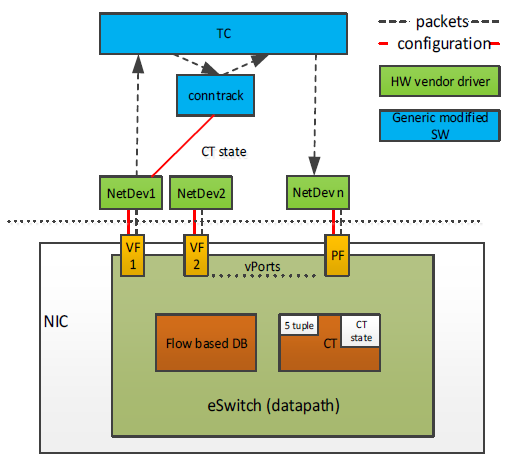

That’s changed now. ConnectX SmartNICs and BlueField DPUs offload stateful connection tracking by augmenting the Linux and OVS conntrack software modules and maintaining connection-state information in hardware. The eSwitch embedded in NVIDIA SmartNICs and DPUs offloads both the compute-intensive work of maintaining and reporting connection-state information and the packet forwarding itself, so that traffic for stateful applications such as firewalls, VPNs, DDoS blockers, and web servers can be swiftly directed, blocked, or reported according to the provisioned policy. This is a software-defined security makeover.
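
The split described above, where software decides and hardware executes, can be illustrated with a toy Python model. It is not NVIDIA’s implementation: the `OffloadedSwitch` class, the callable policy, and the rule of offloading a connection after its first reply (that is, once it is established) are assumptions made for illustration. The counters show how few packets of a long-lived connection need the host CPU once its state lives in the stand-in "hardware" table.

```python
from typing import Callable, Set, Tuple

Flow = Tuple[str, int, str, int]  # (src_ip, src_port, dst_ip, dst_port)


class OffloadedSwitch:
    """Toy model: software conntrack on the slow path, offloaded state on the fast path."""

    def __init__(self, allow_new: Callable[[str, int, str, int], bool]):
        self.allow_new = allow_new        # software policy for NEW connections
        self.pending: Set[Flow] = set()   # tracked in software, no reply seen yet
        self.hw_table: Set[Flow] = set()  # stands in for connection state held in the NIC
        self.sw_packets = 0               # packets that needed the host CPU
        self.hw_packets = 0               # packets handled entirely by the "NIC"

    def process(self, src: str, sport: int, dst: str, dport: int) -> str:
        fwd, rev = (src, sport, dst, dport), (dst, dport, src, sport)
        if fwd in self.hw_table or rev in self.hw_table:
            self.hw_packets += 1          # fast path: no software lookup at all
            return "allow"
        self.sw_packets += 1              # slow path: conntrack and policy in software
        if rev in self.pending:
            # First reply: the connection is now established, so offload it.
            self.pending.discard(rev)
            self.hw_table.add(rev)
            return "allow"
        if fwd in self.pending or self.allow_new(src, sport, dst, dport):
            self.pending.add(fwd)
            return "allow"
        return "drop"


if __name__ == "__main__":
    sw = OffloadedSwitch(allow_new=lambda s, sp, d, dp: dp == 443)
    client, server = ("10.0.0.5", 40000), ("198.51.100.10", 443)
    sw.process(*client, *server)          # SYN: NEW, handled in software
    sw.process(*server, *client)          # SYN-ACK: reply seen, connection offloaded
    for _ in range(998):                  # rest of the transfer
        sw.process(*client, *server)
    print(sw.sw_packets, sw.hw_packets)   # 2 998
```

If the policy instead had to be re-evaluated for every packet, as in purely stateless handling, all 1,000 packets in this example would need a full software lookup.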

When you design workload- and application-level security, connection-based stateful security requires that every connection passing through the firewall be tracked, so that the state of each connection can be used to enforce policies. This information is stored in the conntrack table. Each packet is reported as NEW, ESTABLISHED, RELATED, or INVALID, and the stateful firewall uses that state to determine how to treat the packet. This type of bidirectional connection tracking is critical to adequately protecting many server applications: a traffic type might be perfectly fine on connection A but indicative of a cyberattack if found on connection B.
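
To make the state model concrete, here is a deliberately simplified Python sketch of a bidirectional connection table feeding a stateful firewall policy. All class and function names are hypothetical, and this is not the Linux nf_conntrack API. It tracks only NEW, ESTABLISHED, and INVALID; RELATED (which real conntrack assigns to traffic such as ICMP errors tied to an existing connection), TCP sequence checks, and timeouts are omitted, and treating an unknown mid-stream TCP segment as INVALID is a simplification of what real conntrack does.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Packet:
    src: str
    sport: int
    dst: str
    dport: int
    proto: str = "tcp"
    syn: bool = False  # TCP SYN flag (opens a connection)


class ConnTrack:
    """Toy connection table (hypothetical model, not the Linux nf_conntrack API)."""

    def __init__(self):
        # Original-direction 5-tuple -> True once a reply has been seen.
        self.table = {}

    def classify(self, pkt: Packet) -> str:
        fwd = (pkt.proto, pkt.src, pkt.sport, pkt.dst, pkt.dport)
        rev = (pkt.proto, pkt.dst, pkt.dport, pkt.src, pkt.sport)
        if fwd in self.table:
            # Original direction: stays NEW until the peer has replied.
            return "ESTABLISHED" if self.table[fwd] else "NEW"
        if rev in self.table:
            # Reply direction: traffic has now been seen both ways.
            self.table[rev] = True
            return "ESTABLISHED"
        if pkt.proto == "tcp" and not pkt.syn:
            # Mid-stream TCP segment for a connection never seen opening.
            return "INVALID"
        return "NEW"

    def commit(self, pkt: Packet) -> None:
        self.table.setdefault((pkt.proto, pkt.src, pkt.sport, pkt.dst, pkt.dport), False)


def firewall(ct: ConnTrack, pkt: Packet, from_vm: bool) -> str:
    """Stateful policy: the VM may open connections; only their replies may come back."""
    state = ct.classify(pkt)
    if state == "ESTABLISHED":
        return "allow"
    if state == "NEW" and from_vm:
        ct.commit(pkt)
        return "allow"
    return "drop"  # inbound NEW and all INVALID packets


if __name__ == "__main__":
    ct = ConnTrack()
    vm, server = "10.0.0.5", "198.51.100.10"
    print(firewall(ct, Packet(vm, 40000, server, 443, syn=True), from_vm=True))  # allow (NEW, outbound)
    print(firewall(ct, Packet(server, 443, vm, 40000), from_vm=False))           # allow (ESTABLISHED)
    print(firewall(ct, Packet(server, 443, vm, 40001), from_vm=False))           # drop (INVALID)
    print(firewall(ct, Packet(server, 5555, vm, 22, syn=True), from_vm=False))   # drop (NEW, inbound)
```

The second and third packets illustrate the connection A versus connection B point: the same kind of reply segment is allowed on the connection the VM opened, but dropped when it refers to a connection (port 40001) that was never opened.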

The NVIDIA ASAP2 solution offloads virtual switch datapath operations to ConnectX SmartNICs and BlueField DPUs. Both product families have pipelined, programmable hardware accelerators that perform intelligent flow processing and connection tracking at scale, so the connection-tracking security that protects the host from attacks runs at wire speed. Furthermore, to simplify deployment, the acceleration is transparent: the virtual switch control plane, SDN controller interface, and cloud management platforms all remain unchanged. ASAP2 optimizes network application performance by streamlining I/O operations cost-effectively and with minimal CPU impact. This enables you to devote server resources to running application workloads instead of processing network I/O.

ASAP2 delivers predictable performance for an ecommerce giant

JD.com, an ecommerce giant and China’s largest online retailer, adopted ConnectX adapters to take advantage of ASAP2 and hardware offloads to improve performance, efficiency, and security. JD.com worked closely with NVIDIA and the Linux kernel community to develop pioneering new capabilities based on ASAP2, supported by the ConnectX series of Ethernet network adapters. By using ConnectX adapters with ASAP2, JD.com was able to develop cloud-native applications, network function virtualization, and distributed security with unmatched performance and efficiency.

JD.com and NVIDIA worked closely to co-design and implement connection tracking features based on ASAP2 technology. The results were better than expected, delivering excellent network performance and a better user experience. By offloading network packet processing through ASAP2, JD.com freed the CPU from handling every data packet, so almost all CPU cycles became available for applications. The net result is better and more predictable performance for application workloads, along with better security, scalability, efficiency, and cost savings. For more information about JD.com’s deployment, see the Open Infrastructure Summit presentation on Software Defined Security for Cloud Data Centers, which covers a JD.com case study.

ASAP2 performance benchmark

The new generation of SDN, NFV, and cloud-native computing technologies is pushing the limits of how many application instances can be packed efficiently, securely, and quickly onto virtualized and containerized server infrastructure. This demands an efficient underlying network fabric and hardware offloads.

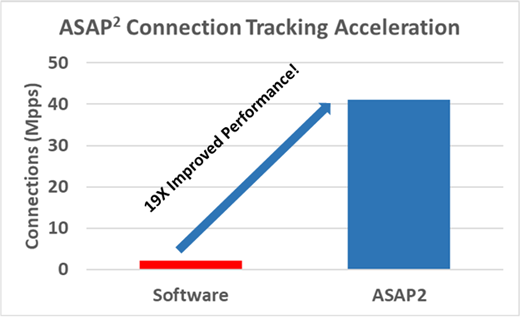

With high-speed server I/O, multiple packets can arrive every microsecond, and OVS cannot keep pace. Without acceleration, OVS achieves about 1.25 million packets per second (pps) on a 25 Gb/s link, where the theoretical maximum for minimum-size (64-byte) frames is roughly 37 million pps. In real-world scenarios where telco VNFs are deployed and traffic is dominated by small packets, OVS achieves only a fraction of the bare-metal I/O performance of a 25 Gb/s interface. With ASAP2, performance improves 19X over the software implementation.
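
The packet-rate figures above follow from simple arithmetic. The Python sketch below assumes back-to-back Ethernet frames, each carrying 20 extra bytes on the wire for the preamble, start-of-frame delimiter, and inter-frame gap. It computes the line rate for minimum-size 64-byte frames, the per-packet time budget that implies, and the share of line rate that 1.25 million pps represents; the 1518-byte case shows why small packets are the hard case for software switching.

```python
"""Back-of-the-envelope Ethernet packet-rate arithmetic (assumptions noted inline)."""

# Per-frame overhead on the wire that is not part of the Ethernet frame itself:
# 7-byte preamble + 1-byte start-of-frame delimiter + 12-byte inter-frame gap.
WIRE_OVERHEAD_BYTES = 8 + 12


def line_rate_pps(link_bps: float, frame_bytes: int) -> float:
    """Maximum packets per second for back-to-back frames of a single size."""
    return link_bps / ((frame_bytes + WIRE_OVERHEAD_BYTES) * 8)


if __name__ == "__main__":
    link = 25e9                        # 25 Gb/s
    small = line_rate_pps(link, 64)    # minimum-size Ethernet frame
    large = line_rate_pps(link, 1518)  # maximum standard (non-jumbo) frame
    print(f"64-byte frames:   {small / 1e6:.1f} Mpps")            # 37.2 Mpps
    print(f"1518-byte frames: {large / 1e6:.2f} Mpps")            # 2.03 Mpps
    print(f"time budget per small packet: {1e9 / small:.1f} ns")  # 26.9 ns
    # 1.25 Mpps is the unaccelerated OVS figure quoted in the text.
    print(f"unaccelerated OVS share of line rate: {1.25e6 / small:.1%}")  # 3.4%
```

At under 27 ns per minimum-size packet, there is room for only a few dozen CPU cycles per packet on a single core, which is why per-packet software lookups fall so far short of line rate.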

Conclusion

To properly modernize datacenters with disaggregation and virtualization technologies without compromising performance, the underlying hardware must support an advanced network infrastructure, one that provides virtualization efficiencies, accelerates network packet processing, and provides adequate security offloads. Conntrack is a vital kernel feature and is good at what it does, but when it runs in software, its overhead can easily outweigh its benefits.

In these scenarios, NVIDIA ConnectX SmartNICs and BlueField DPUs can take stateful connection-tracking datapath lookups out of software while keeping policy provisioning under software control, enforcing network security quickly and efficiently. That is the best of both worlds, and it extends the software-defined, hardware-accelerated NVIDIA ASAP2 SDN architecture to security.