Three trends continue to drive the AI inference market for both training and inference: growing data sets, increasingly complex and diverse networks, and real-time AI services. MLPerf Inference 0.7, the most recent version of the industry-standard AI benchmark, addresses these three trends, giving developers and organizations useful data to inform platform choices, both in the datacenter and at the edge.

The benchmark has expanded the usages covered to include recommender systems, speech recognition, and medical imaging systems. It has upgraded its natural language processing (NLP) workloads to further challenge systems under test. The following table shows the current set of tests. For more information about these workloads, see the MLPerf GitHub repo.

| Application | Network Name |

| Recommendation* | DLRM (99% and 99.9% accuracy targets) |

| NLP* | BERT (99% and 99.9% accuracy targets) |

| Speech Recognition* | RNN-T |

| Medical Imaging* | 3D U-Net (99% and 99.9% accuracy targets) |

| Image Classification | ResNet-50 v1.5 |

| Object Detection | Single-Shot Detector with MobileNet-v1 |

| Objection Detection | Single-Shot Detector with ResNet-34 |

*New workload

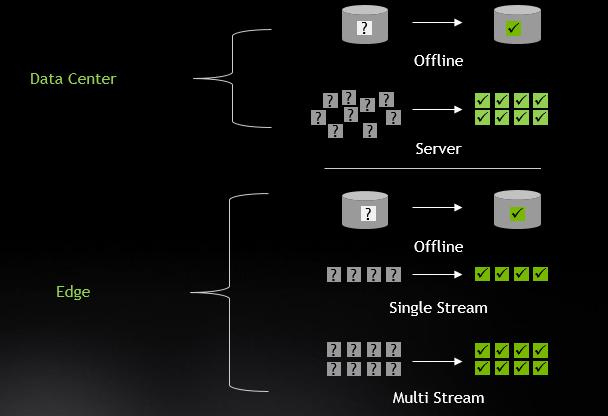

NVIDIA handily won across all tests and scenarios in both the Data Center and Edge categories. While much of this great performance traces back to our GPU architectures, more has to do with the great optimization work by our engineers, which is now available to the developer community.

In this post, we drill down into the ingredients that led to these excellent results, including software optimization to improve efficiency of execution, Multi-Instance GPU (MIG) to enable one A100 GPU to operate as up to seven independent GPUs, and the Triton Inference Server to support easy deployment of inference applications at datacenter scale.

Read the full blog on the NVIDIA Developer Blog.