ONNX

May 12, 2026

How to Eliminate Pipeline Friction in AI Model Serving

The path from a trained AI model to production should be smooth, but rarely is. Many teams invest weeks fine-tuning models, only to discover that exporting to...

10 MIN READ

Nov 19, 2024

Llama 3.2 Full-Stack Optimizations Unlock High Performance on NVIDIA GPUs

Meta recently released its Llama 3.2 series of vision language models (VLMs), which come in 11B parameter and 90B parameter variants. These models are...

6 MIN READ

Oct 07, 2024

Optimizing Microsoft Bing Visual Search with NVIDIA Accelerated Libraries

Microsoft Bing Visual Search enables people around the world to find content using photographs as queries. The heart of this capability is Microsoft's TuringMM...

11 MIN READ

Jun 11, 2024

Maximum Performance and Minimum Footprint for AI Apps with NVIDIA TensorRT Weight-Stripped Engines

NVIDIA TensorRT, an established inference library for data centers, has rapidly emerged as a desirable inference backend for NVIDIA GeForce RTX and NVIDIA RTX...

8 MIN READ

Jan 16, 2024

Robust Scene Text Detection and Recognition: Inference Optimization

In this post, we delve deeper into the inference optimization process to improve the performance and efficiency of our machine learning models during the...

9 MIN READ

Jan 16, 2024

Robust Scene Text Detection and Recognition: Implementation

To make scene text detection and recognition work on irregular text or for specific use cases, you must have full control of your model so that you can do...

6 MIN READ

Jan 16, 2024

Robust Scene Text Detection and Recognition: Introduction

Identifying and recognizing text in natural scenes and images has become important for use cases like video caption text recognition, detecting signboards...

8 MIN READ

Apr 27, 2023

End-to-End AI for NVIDIA-Based PCs: Optimizing AI by Transitioning from FP32 to FP16

This post is part of a series about optimizing end-to-end AI. The performance of AI models is heavily influenced by the precision of the computational...

4 MIN READ

Apr 25, 2023

End-to-End AI for NVIDIA-Based PCs: ONNX and DirectML

This post is part of a series about optimizing end-to-end AI. While NVIDIA hardware can process the individual operations that constitute a neural network...

14 MIN READ

Mar 15, 2023

End-to-End AI for NVIDIA-Based PCs: NVIDIA TensorRT Deployment

This post is the fifth in a series about optimizing end-to-end AI. NVIDIA TensorRT is a solution for speed-of-light inference deployment on NVIDIA hardware....

10 MIN READ

Mar 14, 2023

Top AI for Creative Applications Sessions at NVIDIA GTC 2023

Learn how AI is boosting creative applications for creators during NVIDIA GTC 2023, March 20-23.

1 MIN READ

Feb 08, 2023

End-to-End AI for NVIDIA-Based PCs: CUDA and TensorRT Execution Providers in ONNX Runtime

This post is the fourth in a series about optimizing end-to-end AI. As explained in the previous post in the End-to-End AI for NVIDIA-Based PCs series, there...

9 MIN READ

Dec 15, 2022

End-to-End AI for NVIDIA-Based PCs: ONNX Runtime and Optimization

This post is the third in a series about optimizing end-to-end AI. When your model has been converted to the ONNX format, there are several ways to deploy it,...

8 MIN READ

Dec 15, 2022

End-to-End AI for NVIDIA-Based PCs: Transitioning AI Models with ONNX

This post is the second in a series about optimizing end-to-end AI. In this post, I discuss how to use ONNX to transition your AI models from research to...

7 MIN READ

Dec 15, 2022

End-to-End AI for NVIDIA-Based PCs: An Introduction to Optimization

This post is the first in a series about optimizing end-to-end AI. The great thing about the GPU is that it offers tremendous parallelism; it allows you to...

9 MIN READ

Aug 29, 2022

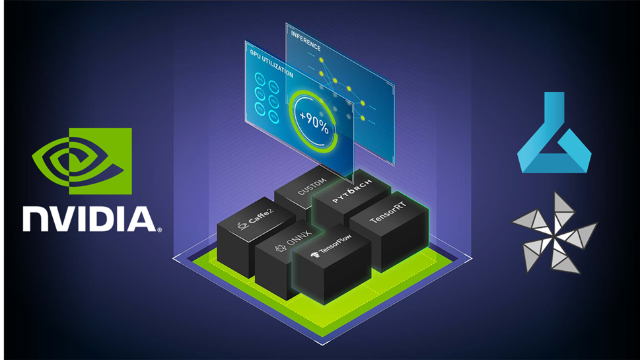

Boosting AI Model Inference Performance on Azure Machine Learning

Every AI application needs a strong inference engine. Whether you’re deploying an image recognition service, intelligent virtual assistant, or a fraud...

15 MIN READ