Ampere

Mar 26, 2025

Boosting Q&A Accuracy with GraphRAG Using PyG and Graph Databases

Large language models (LLMs) often struggle with accuracy when handling domain-specific questions, especially those requiring multi-hop reasoning or access to...

9 MIN READ

Sep 20, 2024

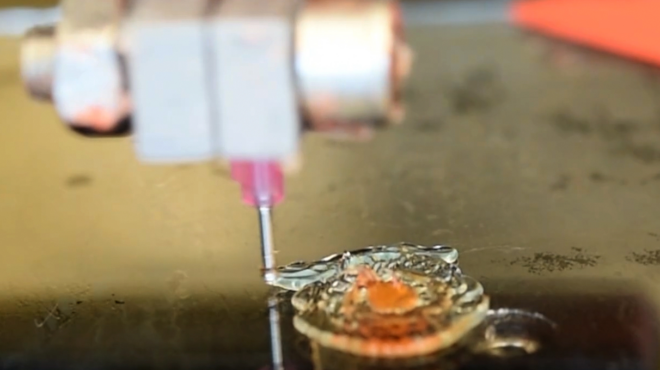

New AI-Powered 3D Printing Can Help Surgeons Rehearse Procedures

Researchers at Washington State University (WSU) unveiled a new AI-guided 3D printing technique that can help physicians print intricate replicas of human...

3 MIN READ

Jun 12, 2024

Introducing Grouped GEMM APIs in cuBLAS and More Performance Updates

The latest release of the NVIDIA cuBLAS library, version 12.5, continues to deliver functionality and performance to deep learning (DL) and high-performance...

7 MIN READ

Mar 11, 2024

Advancing GPU-Driven Rendering with Work Graphs in Direct3D 12

GPU-driven rendering has long been a major goal for many game applications. It enables better scalability for handling large virtual scenes and reduces cases...

12 MIN READ

Dec 12, 2023

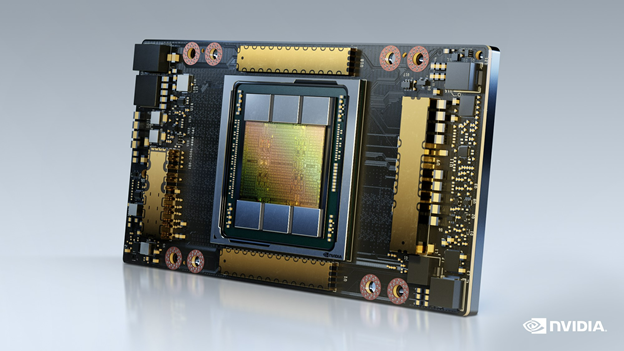

Oracle Cloud Infrastructure Sets Quantitative Financial HPC Calculations Record with NVIDIA GPUs

NVIDIA A100 Tensor Core GPUs were featured in a stack that set several records in a recent STAC-A2™ benchmark, a standard based on financial market risk analysis.

1 MIN READ

Sep 14, 2023

Software-Defined Broadcast with NVIDIA Holoscan for Media

The broadcast industry is undergoing a transformation in how content is created, managed, distributed, and consumed. This transformation includes a shift from...

5 MIN READ

Jul 03, 2023

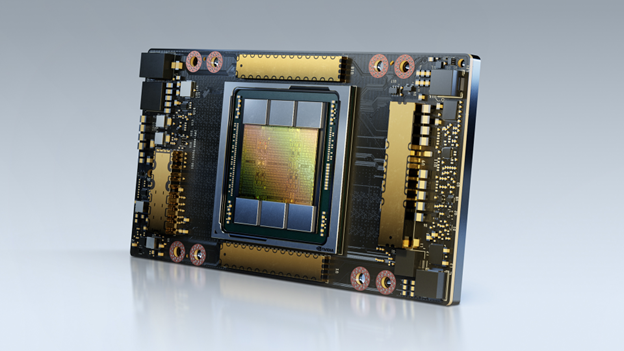

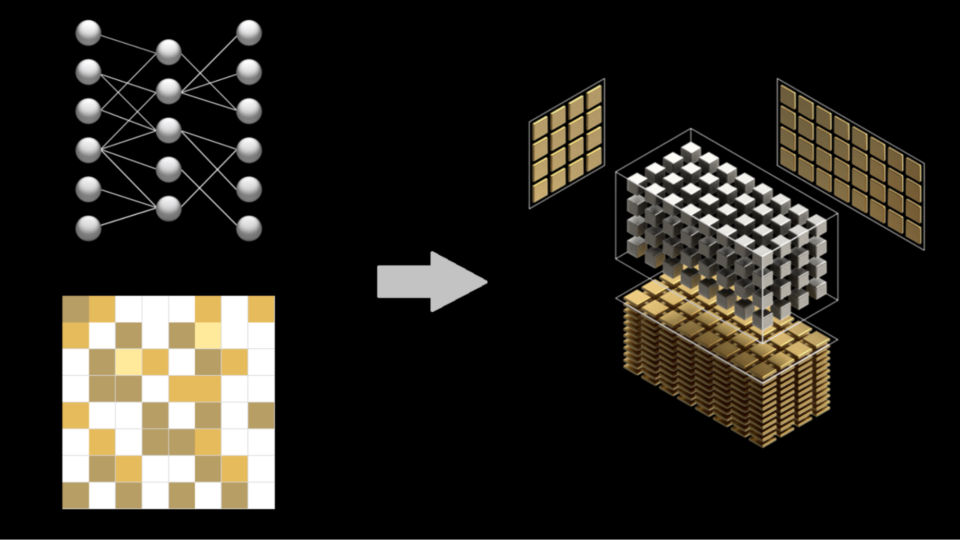

Structured Sparsity in the NVIDIA Ampere Architecture and Applications in Search Engines

Deep learning is achieving significant success in a wide range of fields, as it has revolutionized the way we analyze, understand, and manipulate data. There...

13 MIN READ

May 28, 2023

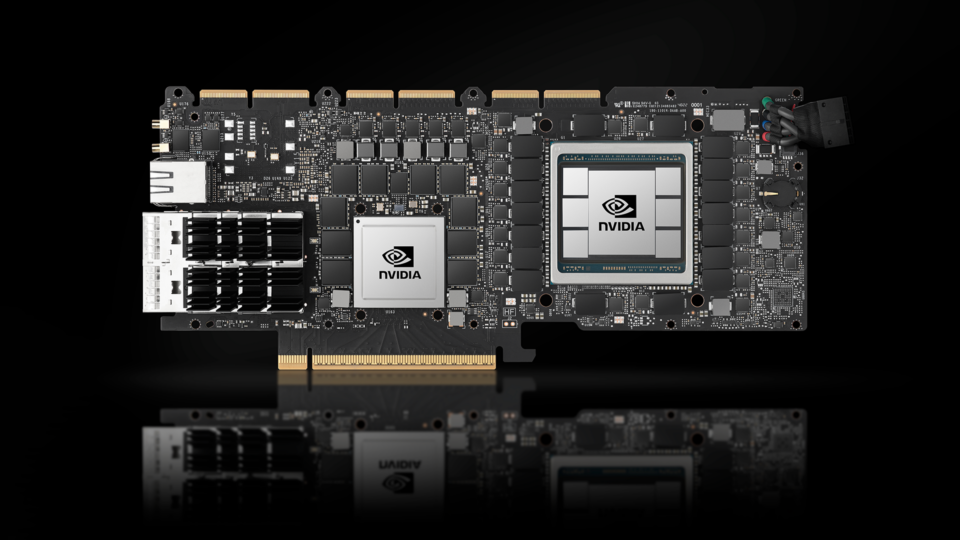

NVIDIA AX800 Delivers High-Performance 5G vRAN and AI Services on One Common Cloud Infrastructure

The pace of 5G investment and adoption is accelerating. According to the GSMA Mobile Economy 2023 report, nearly $1.4 trillion will be spent on 5G CapEx,...

11 MIN READ

Feb 02, 2023

Benchmarking Deep Neural Networks for Low-Latency Trading and Rapid Backtesting on NVIDIA GPUs

Lowering response times to new market events is a driving force in algorithmic trading. Latency-sensitive trading firms keep up with the ever-increasing pace...

8 MIN READ

Aug 30, 2022

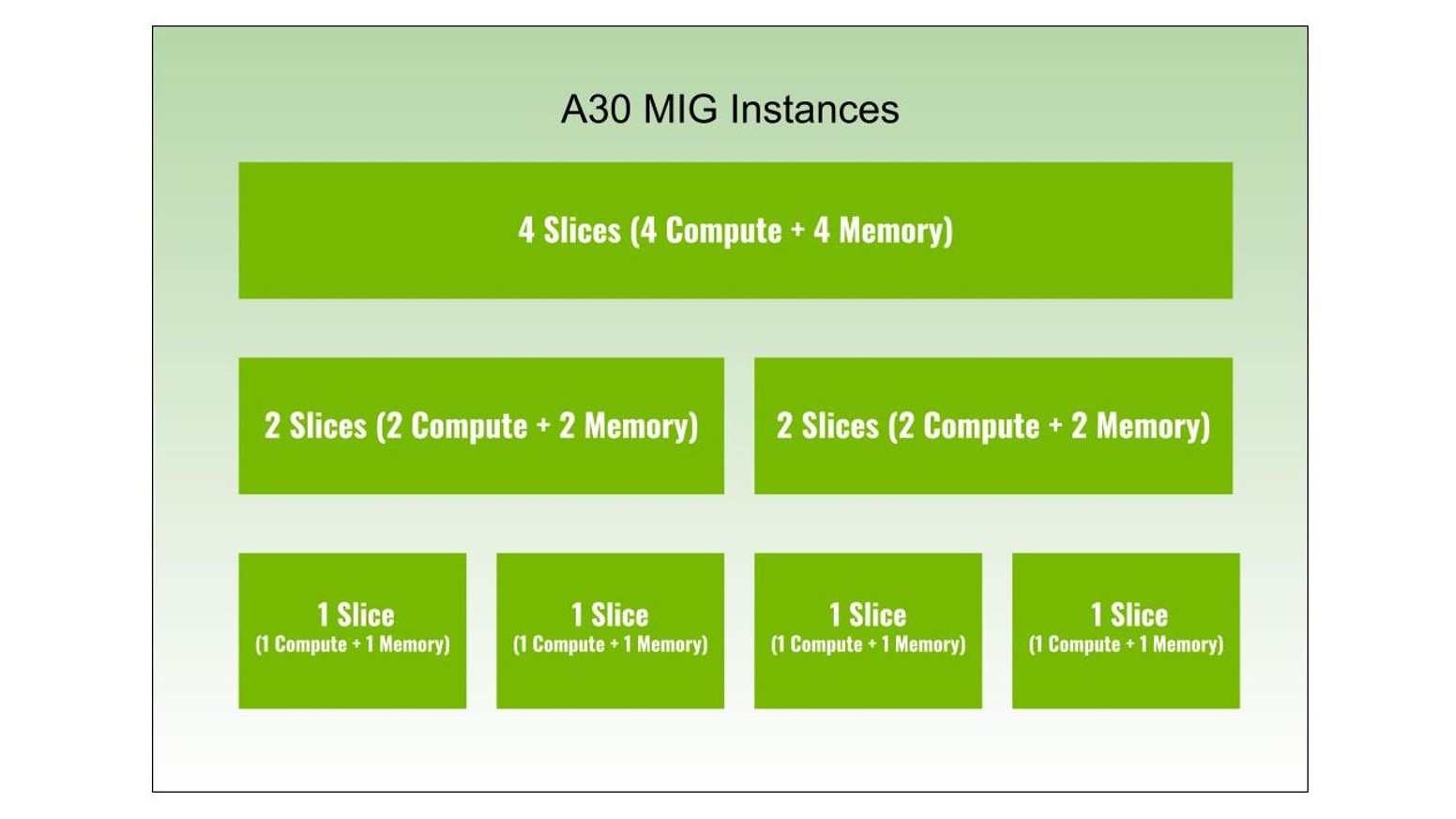

Dividing NVIDIA A30 GPUs and Conquering Multiple Workloads

Multi-Instance GPU (MIG) is an important feature of NVIDIA H100, A100, and A30 Tensor Core GPUs, as it can partition a GPU into multiple instances. Each...

9 MIN READ

Jun 16, 2022

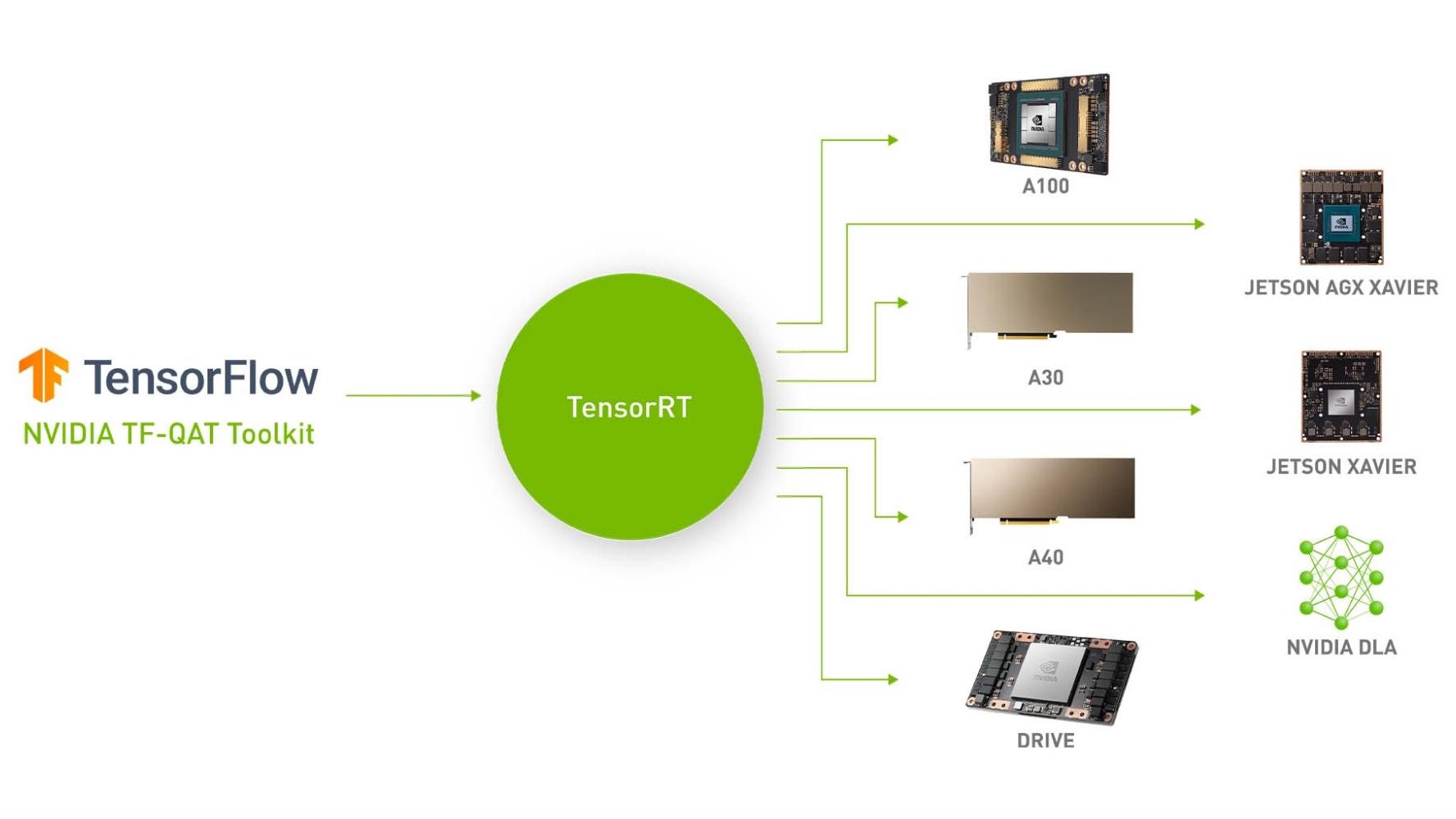

Accelerating Quantized Networks with the NVIDIA QAT Toolkit for TensorFlow and NVIDIA TensorRT

We’re excited to announce the NVIDIA Quantization-Aware Training (QAT) Toolkit for TensorFlow 2 with the goal of accelerating quantized networks with...

9 MIN READ

Jun 02, 2022

Fueling High-Performance Computing with Full-Stack Innovation

High-performance computing (HPC) has become the essential instrument of scientific discovery. Whether it is discovering new, life-saving drugs, battling...

8 MIN READ

May 25, 2022

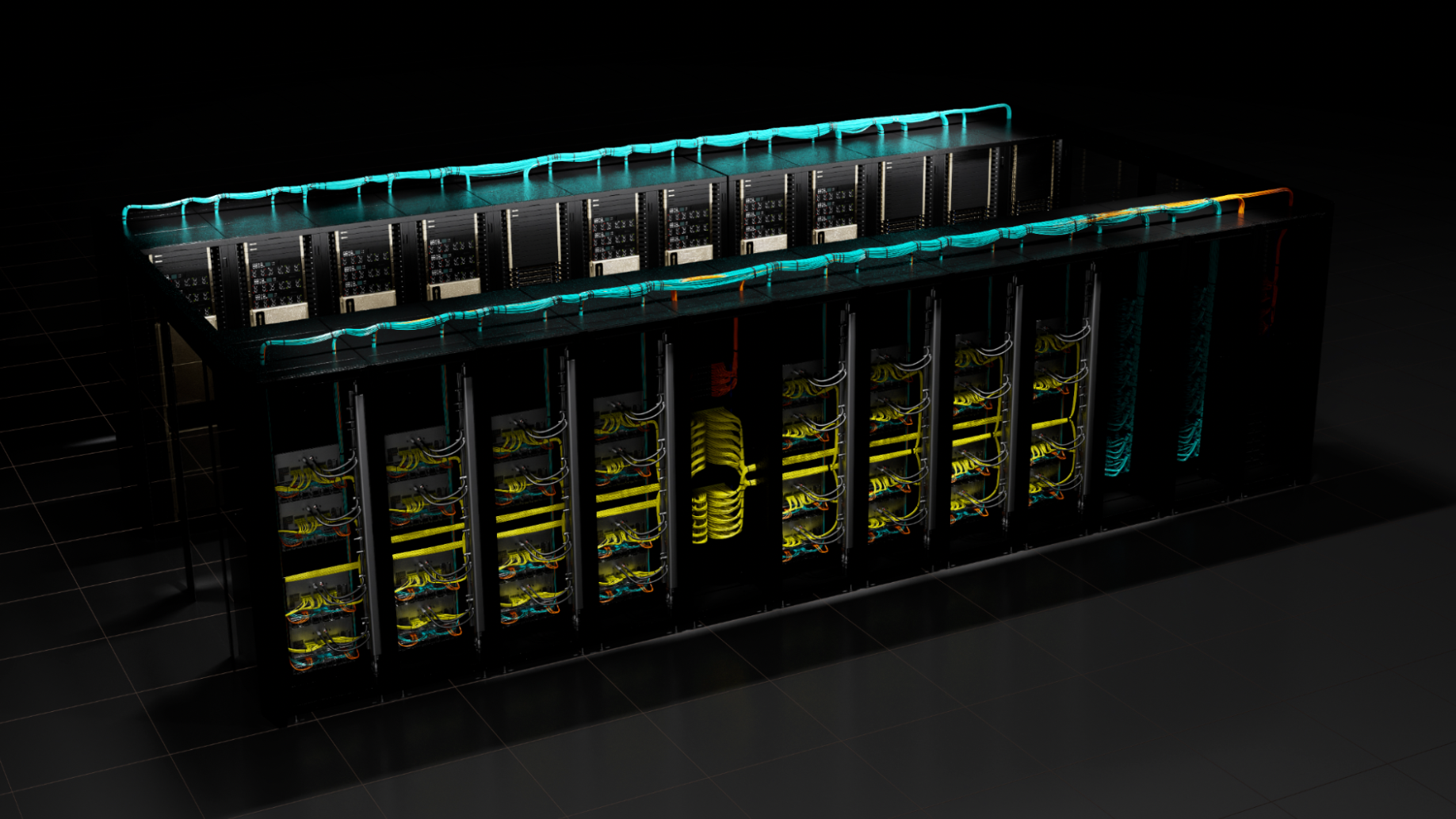

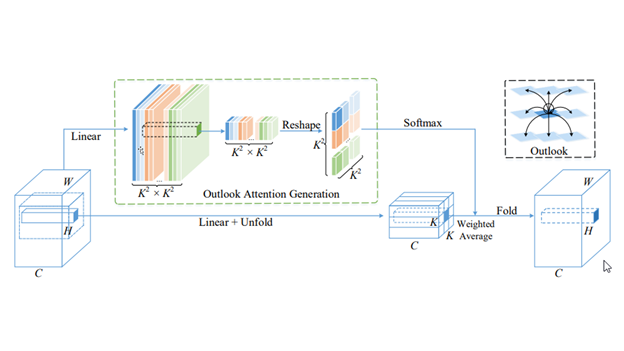

Training a State-of-the-Art ImageNet-1K Visual Transformer Model using NVIDIA DGX SuperPOD

Recent work has demonstrated that large transformer models can achieve or advance the SOTA in computer vision tasks such as semantic segmentation and object...

9 MIN READ

May 11, 2022

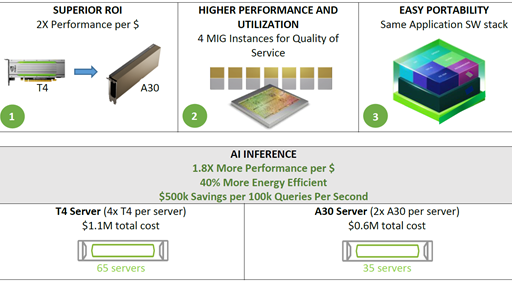

Accelerating AI Inference Workloads with NVIDIA A30 GPU

The NVIDIA A30 GPU is built on the latest NVIDIA Ampere Architecture to accelerate diverse workloads like AI inference at scale, enterprise training, and HPC...

6 MIN READ

Sep 08, 2021

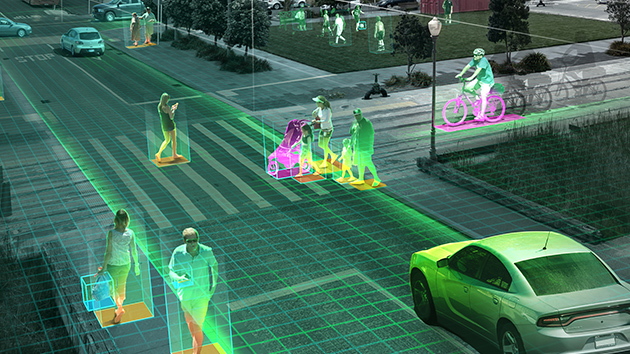

Register for the NVIDIA Metropolis Developer Webinars on Sept. 22

Join NVIDIA experts and Metropolis partners on Sept. 22 for webinars exploring developer SDKs, GPUs, go-to-market opportunities, and more. All three sessions,...

2 MIN READ

Aug 25, 2021

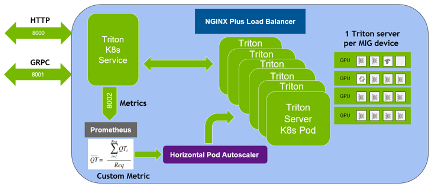

Deploying NVIDIA Triton at Scale with MIG and Kubernetes

NVIDIA Triton Inference Server is open-source AI model serving software that simplifies the deployment of trained AI models at scale in production. Clients...

24 MIN READ