MPI

Sep 10, 2024

Accelerating the HPCG Benchmark with NVIDIA Math Sparse Libraries

In high-performance computing (HPC), NVIDIA has continually advanced the field by offering its highly optimized NVIDIA High-Performance Conjugate...

9 MIN READ

Nov 15, 2022

Scaling VASP with NVIDIA Magnum IO

You could make an argument that the history of civilization and technological advancement is the history of the search for and discovery of materials. Ages are...

22 MIN READ

Jun 28, 2021

Accelerating Scientific Applications in HPC Clusters with NVIDIA DPUs Using the MVAPICH2-DPU MPI Library

High-performance computing (HPC) and AI have driven supercomputers into wide commercial use as the primary data processing engines enabling research, scientific...

7 MIN READ

Jun 23, 2021

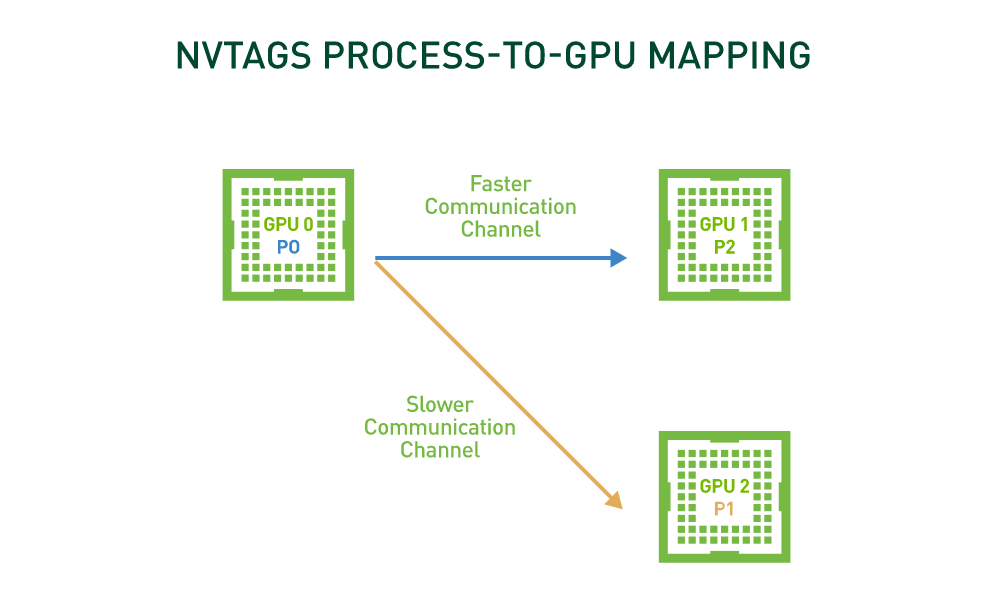

Achieve up to 75% Performance Improvement for Communication-Intensive HPC Applications with NVTAGS

Many GPU-accelerated HPC applications spend a substantial portion of their time in non-uniform GPU-to-GPU communication. Additionally, in many HPC systems,...

2 MIN READ

Apr 07, 2016

Fast Multi-GPU Collectives with NCCL

Today, many servers contain eight or more GPUs. In principle, then, scaling an application from one to many GPUs should provide a tremendous performance boost. But in...

10 MIN READ

May 05, 2015

GPU Pro Tip: Track MPI Calls in the NVIDIA Visual Profiler

Often when profiling GPU-accelerated applications that run on clusters, one needs to visualize MPI (Message Passing Interface) calls on the GPU timeline in the...

5 MIN READ

Oct 07, 2014

Benchmarking GPUDirect RDMA on Modern Server Platforms

NVIDIA GPUDirect RDMA is a technology that enables a direct path for data exchange between the GPU and third-party peer devices using standard features of PCI...

13 MIN READ

Jun 19, 2014

CUDA Pro Tip: Profiling MPI Applications

When I profile MPI+CUDA applications, performance issues sometimes occur only for certain MPI ranks. To fix these, it's necessary to identify the MPI rank where...

4 MIN READ

Mar 27, 2013

Benchmarking CUDA-Aware MPI

I introduced CUDA-aware MPI in my last post, with an introduction to MPI and a description of the functionality and benefits of CUDA-aware MPI. In this post, I...

8 MIN READ

Mar 13, 2013

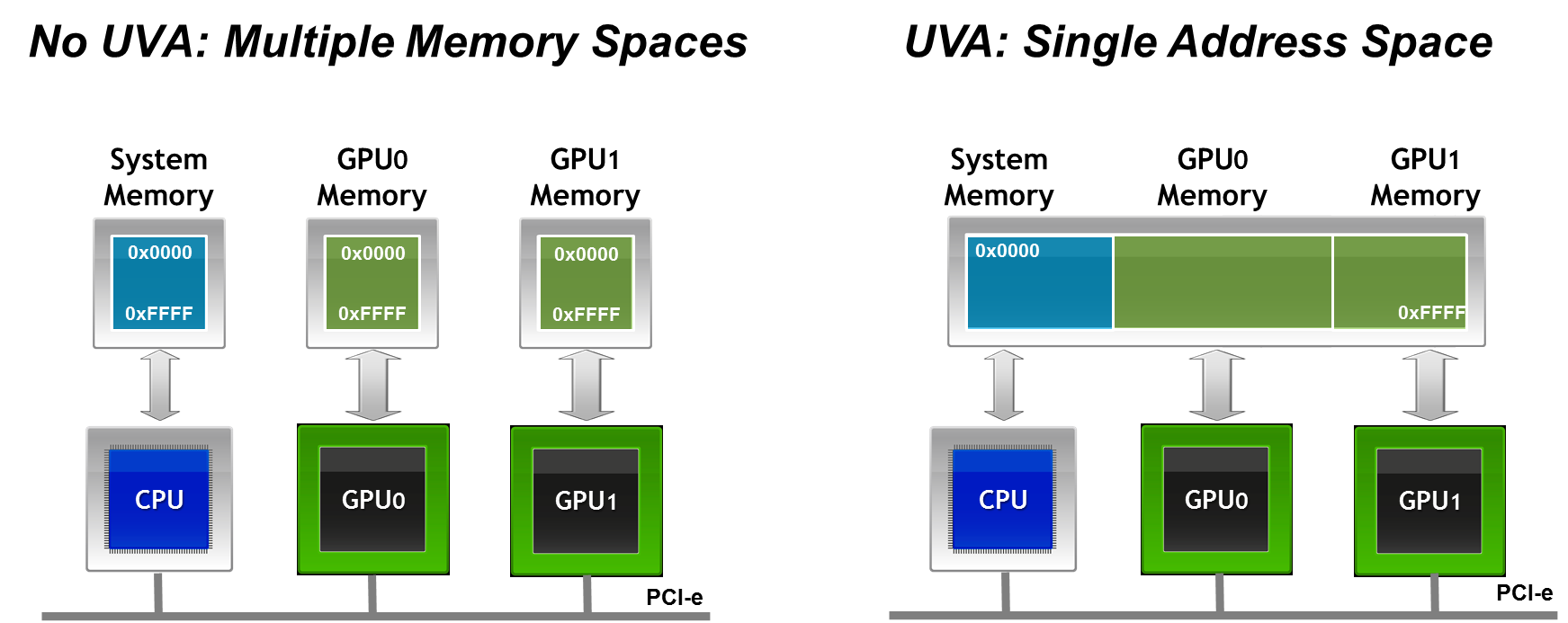

An Introduction to CUDA-Aware MPI

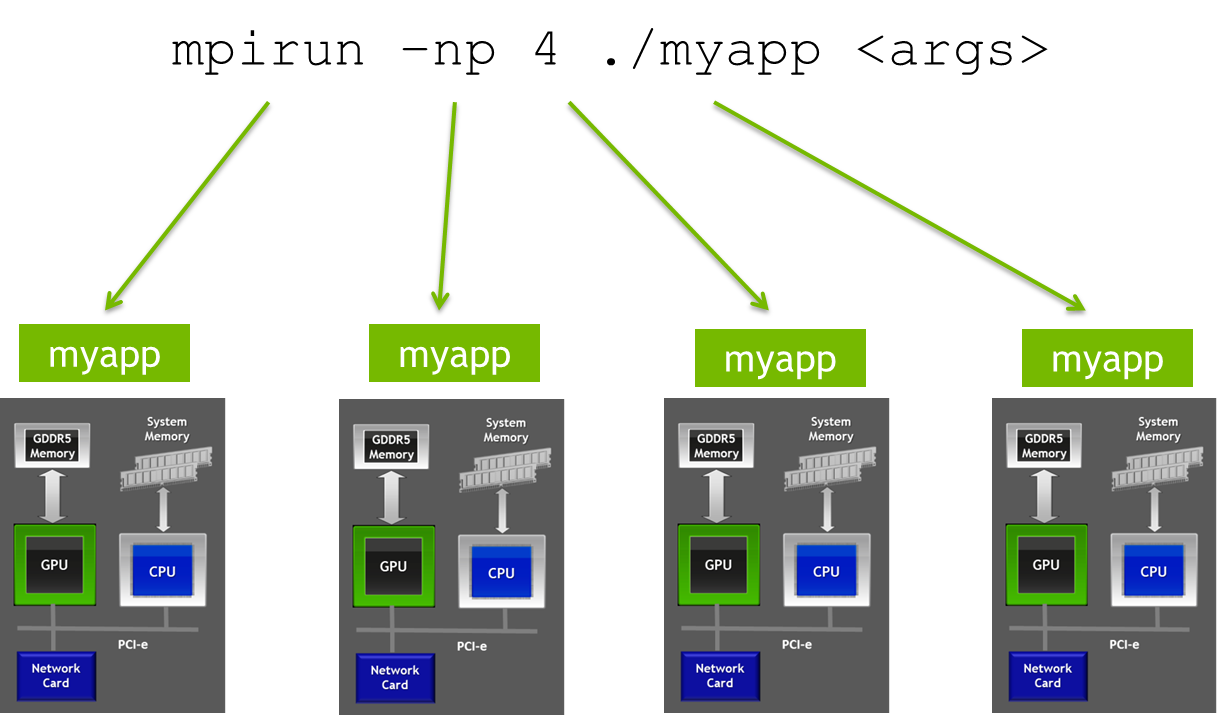

MPI, the Message Passing Interface, is a standard API for communicating data via messages between distributed processes that is commonly used in HPC to build...

11 MIN READ