NVIDIA Zero Touch RoCE (ZTR) enables data centers to seamlessly deploy RDMA over Converged Ethernet (RoCE) without requiring any special switch configuration. Until recently, ZTR was optimal for only small to medium-sized data centers. Meanwhile, large-scale deployments have traditionally relied on Explicit Congestion Notification (ECN) to enable RoCE network transport, which requires switch configuration.

The new NVIDIA congestion control algorithm, Round-Trip Time Congestion Control (RTTCC), allows ZTR to scale to thousands of servers without compromising performance. With ZTR and RTTCC, data center operators get ease of deployment and operation together with the performance of Remote Direct Memory Access (RDMA) at massive scale, without any switch configuration.

This post describes the previously recommended RoCE congestion control in large and small-scale RoCE deployments. It then introduces a new congestion control algorithm that allows configuration-free, large-scale implementations of ZTR, which perform like ECN-enabled RoCE.

RoCE deployments with Data Center Quantized Congestion Notification

In a typical TCP-based environment, distributed memory requests require many steps and CPU cycles, negatively impacting application performance. RDMA eliminates all CPU involvement in memory data transfers between servers, significantly accelerating both access to stored data and application performance.

RoCE provides RDMA in Ethernet environments, the primary network fabric in data centers. Ethernet requires an advanced congestion control mechanism to support RDMA network transports. Data Center Quantized Congestion Notification (DCQCN) is a congestion control algorithm that responds to congestion notifications by dynamically adjusting traffic transmit rates.

The implementation of DCQCN requires enabling Explicit Congestion Notification (ECN), which entails configuring network switches. With ECN enabled, switches set the Congestion Experienced (CE) bit in packets to indicate the imminent onset of congestion.
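To make the CE marking concrete, here is a minimal sketch of how an ECN-enabled switch might mark a packet. The ECN codepoints are those defined in RFC 3168 (the two least-significant bits of the IPv4 ToS or IPv6 Traffic Class byte). The function names, the single queue-depth threshold, and its 150 KB default are assumptions made for illustration, not the behavior of any particular switch.

```python
# ECN codepoints occupy the two least-significant bits of the IPv4 ToS /
# IPv6 Traffic Class byte (RFC 3168).
NOT_ECT = 0b00  # sender is not ECN-capable
ECT_1 = 0b01    # ECN-capable transport, codepoint 1
ECT_0 = 0b10    # ECN-capable transport, codepoint 0
CE = 0b11       # Congestion Experienced

ECN_MASK = 0b11


def ecn_field(tos: int) -> int:
    """Extract the 2-bit ECN field from a ToS / Traffic Class byte."""
    return tos & ECN_MASK


def mark_if_congested(tos: int, queue_depth_kb: int, threshold_kb: int = 150) -> int:
    """Return the ToS byte the switch would forward.

    Hypothetical single-threshold policy for illustration; real switches
    typically apply a probabilistic marking profile between a minimum and
    a maximum queue threshold.
    """
    if ecn_field(tos) == NOT_ECT:
        return tos  # packet is not ECN-capable, so it cannot be marked
    if queue_depth_kb > threshold_kb:
        return (tos & ~ECN_MASK) | CE  # keep the DSCP bits, set ECN to CE
    return tos
```

For example, a packet with DSCP 26 and ECT(0) has a ToS byte of `(26 << 2) | ECT_0`. Passing it through `mark_if_congested` with a queue depth above the threshold changes only the ECN field to CE, and it is this per-switch marking configuration that ZTR is designed to avoid.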

Zero Touch RoCE with reactive congestion control

The NVIDIA-developed ZTR technology enables RoCE deployments that don’t require configuring the switch infrastructure. Built according to the InfiniBand Trade Association (IBTA) RDMA standard and fully compliant with the RoCE specifications, ZTR enables seamless deployment of RoCE. ZTR also delivers performance equivalent to traditional switch-enabled RoCE and significantly better than traditional TCP-based memory access. Moreover, with ZTR, RoCE network transport services operate side by side with non-RoCE communications in ordinary TCP/IP environments.

As noted in the NVIDIA Zero-Touch RoCE Technology Enables Cloud Economics for Microsoft Azure Stack HCI post, Microsoft has validated ZTR for its Azure Stack HCI platform, which typically scales to a few dozen nodes. In such environments, ZTR relies on implicit packet loss notification, which is sufficient for small-scale deployments. With the addition of a new round-trip time (RTT)-based congestion control algorithm, ZTR becomes even more robust and scalable, and no longer relies on packet loss to notify the server of network congestion.

Introducing round-trip time congestion control

The new NVIDIA congestion control algorithm, RTTCC, actively monitors network RTT to detect and adapt to the onset of congestion before packets are dropped. RTTCC enables dynamic congestion control using a hardware-based feedback loop that provides dramatically superior performance compared to software-based congestion control algorithms. RTTCC also supports faster transmission rates and allows ZTR to be deployed at a larger scale. ZTR with RTTCC is now available as a beta feature, with GA planned for the second half of 2022.

How ZTR-RTTCC works

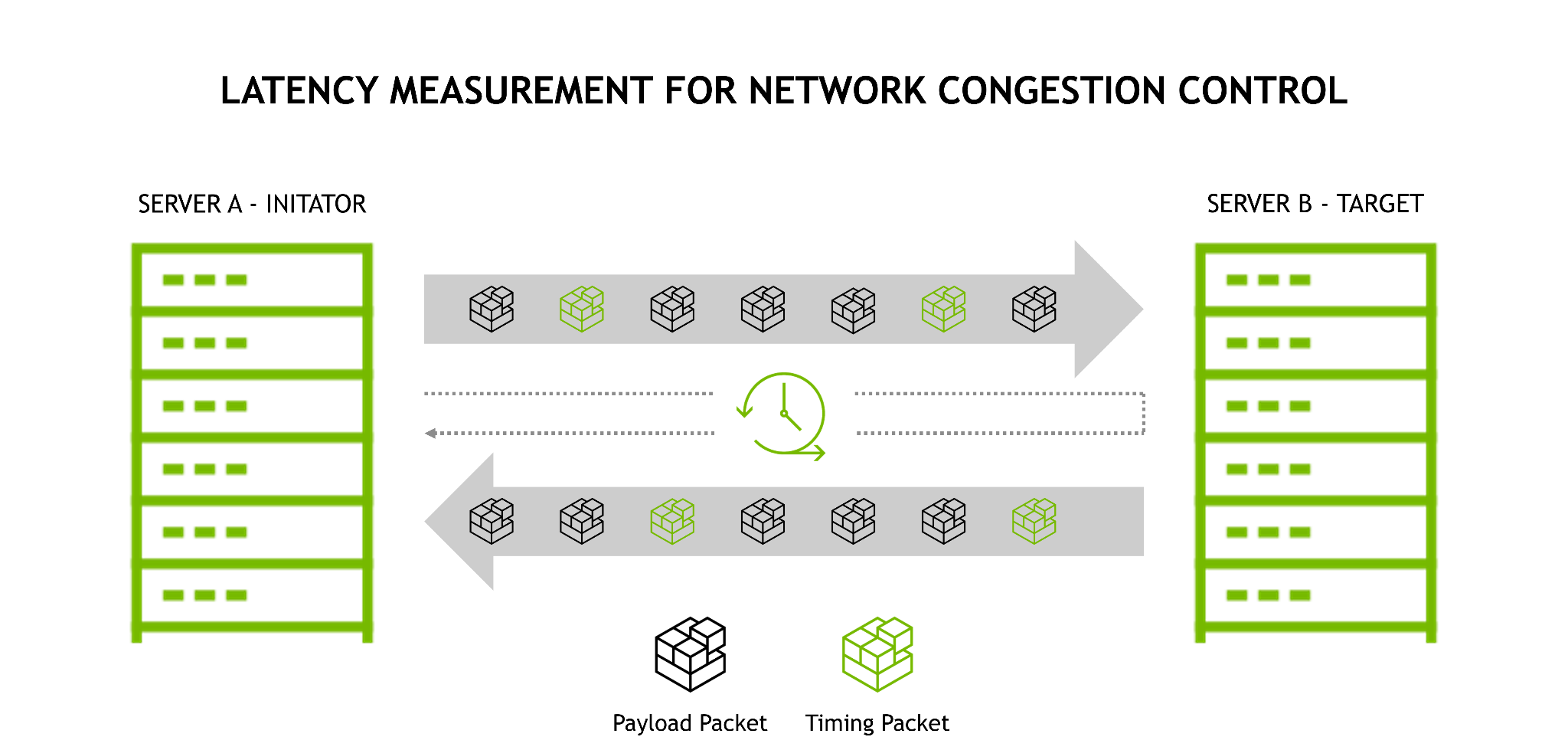

Figure 1. ZTR-RTTCC extends DCQCN in RoCE networks with a hardware RTT-based congestion control algorithm.

Timing packets (green network packets in the preceding figure) are periodically sent from the initiator to the target. The target returns them immediately, enabling measurement of round-trip latency. RTTCC measures the interval between when the initiator sent a timing packet and when it received that packet back. This difference (Time Received – Time Sent) is the round-trip latency, which indicates path congestion. Uncongested flows continue to transmit packets and make the best use of the available network path bandwidth. Increasing latency on a flow implies path congestion, so RTTCC throttles that flow’s traffic to avoid buffer overflow and packet drops.

Network traffic can be adjusted up or down in real time as congestion decreases or increases. The ability to actively monitor and react to congestion is critical to enabling ZTR to manage congestion proactively. This proactive rate control also reduces packet retransmission and improves RoCE performance. With ZTR-RTTCC, data center nodes do not wait to be notified of packet loss; instead, they actively identify congestion before packets are lost and react accordingly, notifying initiators to adjust transmission rates.

As noted earlier, one of the key benefits of ZTR is the ability to provide RoCE functionality alongside non-RoCE communications in ordinary TCP/IP environments. ZTR provides seamless deployment of RoCE network capabilities. With the addition of RTTCC actively monitoring congestion, ZTR provides data center-wide operation without switch configuration. Read on to see how it performs.
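The feedback loop described above can be sketched as a small rate controller. This is a toy model for illustration only: the actual RTTCC algorithm runs in NIC hardware and its internals are not described in this post. The class name, the additive-increase/multiplicative-decrease policy, and every parameter value below are assumptions.

```python
from dataclasses import dataclass


@dataclass
class RttRateController:
    """Toy RTT-driven rate controller (illustrative, not the NVIDIA algorithm)."""

    line_rate_gbps: float = 100.0
    base_rtt_us: float = 10.0      # assumed round-trip time of an uncongested path
    tolerance: float = 1.5         # RTT above tolerance * base counts as congestion
    increase_gbps: float = 5.0     # additive step while the path looks clear
    decrease_factor: float = 0.8   # multiplicative cut when the RTT inflates
    min_rate_gbps: float = 1.0
    rate_gbps: float = 100.0       # current transmit rate

    def on_timing_packet(self, sent_us: float, received_us: float) -> float:
        """Update the transmit rate from one returned timing packet."""
        rtt_us = received_us - sent_us  # Time Received - Time Sent
        if rtt_us > self.tolerance * self.base_rtt_us:
            # Rising latency implies queues are building: throttle before loss.
            self.rate_gbps = max(self.min_rate_gbps,
                                 self.rate_gbps * self.decrease_factor)
        else:
            # Path looks uncongested: ramp back toward line rate.
            self.rate_gbps = min(self.line_rate_gbps,
                                 self.rate_gbps + self.increase_gbps)
        return self.rate_gbps


if __name__ == "__main__":
    cc = RttRateController()
    # (sent, received) timestamps in microseconds for successive timing packets.
    for sent, received in [(0, 10), (100, 112), (200, 230), (300, 340), (400, 411)]:
        print(f"rtt={received - sent}us -> rate={cc.on_timing_packet(sent, received):.1f} Gb/s")
```

In this sketch, the rate stays at line rate while the measured RTT is near the baseline, drops when the RTT inflates, and climbs again once latency recovers. The point is only that the signal is latency rather than packet loss or switch-set ECN marks.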

ZTR with RTTCC performance

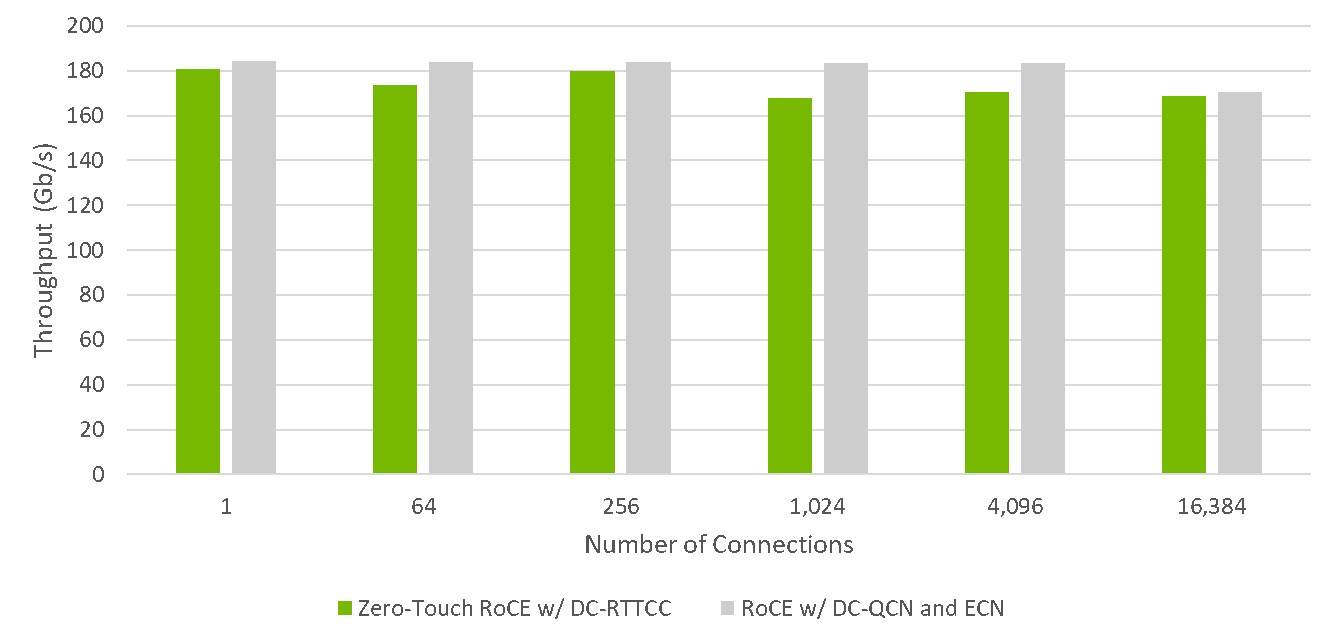

As shown in Figure 2, ZTR with RTTCC provides application performance comparable to RoCE with ECN and Priority Flow Control (PFC) configured across the network fabric. These tests were performed under worst-case many-to-one (incast) scenarios to simulate throughput under congested conditions.

The results indicate that not only does ZTR with RTTCC scale to thousands of nodes, but it also performs comparably to the fastest RoCE solution currently available.

- At small scale (256 connections and below), ZTR with RTTCC reaches 99% of the performance of RoCE with ECN congestion control enabled (conventional RoCE).

- With over 16,000 connections, ZTR with RTTCC throughput is 98% of conventional RoCE throughput.

ZTR with RTTCC provides near-equivalent performance to conventional RoCE without requiring any switch configuration.

Configuring ZTR

To configure ZTR with the new RTTCC algorithm, download and install the latest firmware and tools for your NVIDIA network interface card and perform the following steps.

Enable programmable congestion control using mlxconfig (persistent configuration):

mlxconfig -d /dev/mst/mt4125_pciconf0 -y s ROCE_CC_LEGACY_DCQCN=0

Reset the device using mlxfwreset or reboot the host:

mlxfwreset -d /dev/mst/mt4125_pciconf0 -l 3 -y r

After you complete these steps, ZTR-RTTCC is used whenever connections are established through RDMA-CM with Enhanced Connection Establishment (ECE, supported with MLNX_OFED version 5.1).

If there’s an error, you can force ZTR-RTTCC usage regardless of RDMA-CM synchronization status:

mlxreg -d /dev/mst/mt4125_pciconf0 --reg_id 0x506e --reg_len 0x40 --set "0x0.0:8=2,0x4.0:4=15" -y
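If you need to apply these steps across many hosts, the commands above can be wrapped in a small script. The following is a minimal sketch that builds exactly the three command lines shown in this section and, by default, only prints them. The function names and the dry-run behavior are assumptions for illustration, and the device path is the example from this post, which may differ on your system.

```python
import shlex
import subprocess
from typing import List

# Example device path from the commands above; adjust for your adapter.
DEVICE = "/dev/mst/mt4125_pciconf0"


def ztr_rttcc_commands(device: str = DEVICE, force: bool = False) -> List[List[str]]:
    """Build the configuration commands from this section as argument lists."""
    cmds = [
        # Enable programmable congestion control (persistent configuration).
        ["mlxconfig", "-d", device, "-y", "s", "ROCE_CC_LEGACY_DCQCN=0"],
        # Reset the device so the new configuration takes effect.
        ["mlxfwreset", "-d", device, "-l", "3", "-y", "r"],
    ]
    if force:
        # Optional: force ZTR-RTTCC regardless of RDMA-CM synchronization status.
        cmds.append([
            "mlxreg", "-d", device, "--reg_id", "0x506e", "--reg_len", "0x40",
            "--set", "0x0.0:8=2,0x4.0:4=15", "-y",
        ])
    return cmds


def apply(cmds: List[List[str]], dry_run: bool = True) -> None:
    """Print each command and, unless dry_run is set, execute it."""
    for cmd in cmds:
        print(shlex.join(cmd))
        if not dry_run:
            subprocess.run(cmd, check=True)  # stop at the first failing step


if __name__ == "__main__":
    apply(ztr_rttcc_commands(force=False))  # dry run: prints the commands only
```

Keeping the dry run as the default lets you review the exact command lines before running anything that resets the adapter.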

Summary

NVIDIA RTTCC, the new congestion control algorithm for ZTR, delivers superb RoCE performance at data center scale, without any special configuration of the switch infrastructure. This enhancement allows data centers to enable RoCE seamlessly in both existing and new data center infrastructure and benefit from immediate application performance improvements.

We encourage you to test ZTR with RTTCC for your application use cases by downloading the latest NVIDIA software.