Over the past decade, the rapid development of deep learning, and of convolutional neural networks in particular, has revolutionized how computer vision tasks are performed. Improvements in algorithms, software, and hardware now allow a single computer vision model to run in real time. This real-time performance opens up new possibilities for a wide range of applications, such as digital surgery.

A new frontier in computer vision is quickly approaching, where not just one deep learning model will be used to drive a decision or outcome, but potentially dozens of models running concurrently. This will give rise to even more powerful and sophisticated computer vision applications.

NVIDIA Inception member iCardio.ai is pushing this new frontier. Their Ultrasound Cardiography Brain platform uses over 60 machine learning algorithms to help make cardiovascular diagnoses more accurate. These models can perform tasks such as automatically classifying a cardiac view, identifying crucial linear measurements of the heart, and evaluating various cardiomyopathies and heart valve abnormalities, like aortic stenosis.

Making the iCardio.ai Brain AI processing pipeline run in real time is no easy computational task. To run their multi-AI pipelines in real time, iCardio.ai is leveraging NVIDIA Clara Holoscan, a platform enabling medical device developers to accelerate development and deployment of AI applications at the edge.

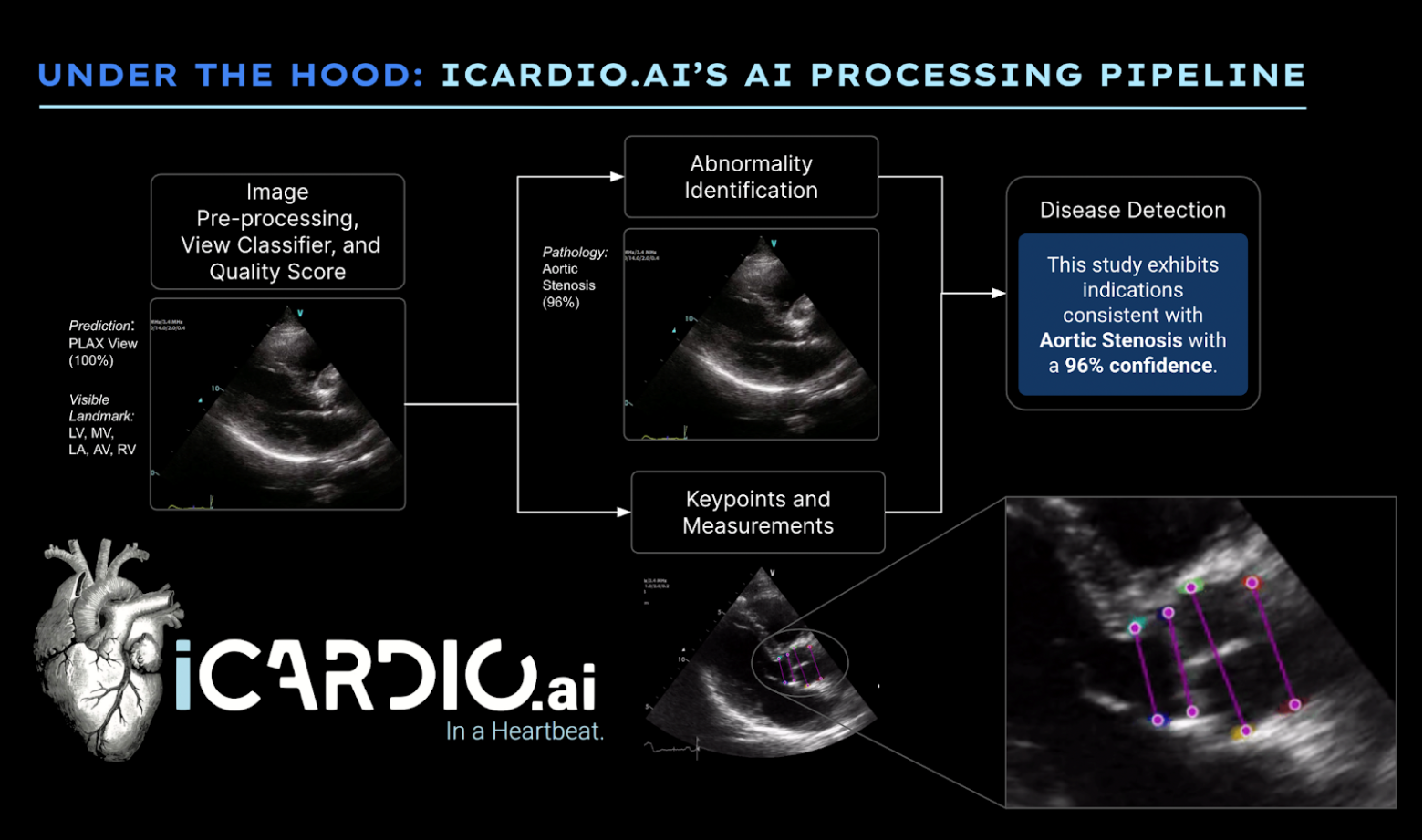

iCardio.ai implements comprehensive AI processing pipelines that attempt to mimic the reasoning of a cardiologist. Following standard clinical exclusion criteria, the AI first assesses image quality. It then attempts to detect the anatomical components of the heart before predicting measurements or abnormalities. This ordering increases trust in the measurement and abnormality predictions, because those models run only on images that closely resemble real-world images of acceptable clinical quality.
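The gating order described above can be sketched as a small Python class. This is a minimal illustration under stated assumptions, not iCardio.ai's implementation: the model callables, the `min_quality` threshold, and the view-to-model mapping are all hypothetical placeholders.

```python
from dataclasses import dataclass, field
from statistics import fmean
from typing import Any, Callable, Dict, Optional, Sequence

# Hypothetical frame type; real models would consume image tensors.
Frame = Sequence[float]


@dataclass
class GatedPipeline:
    quality_model: Callable[[Frame], float]  # returns an image-quality score
    view_model: Callable[[Frame], str]  # returns a view label, e.g. "PLAX"
    # Hypothetical mapping: view label -> {result name: downstream model}
    downstream: Dict[str, Dict[str, Callable[[Frame], Any]]] = field(default_factory=dict)
    min_quality: float = 0.5  # hypothetical exclusion threshold

    def run(self, frame: Frame) -> Optional[Dict[str, Any]]:
        # Stage 1: apply the exclusion criterion on image quality.
        quality = self.quality_model(frame)
        if quality < self.min_quality:
            return None  # excluded: no measurements or abnormality predictions
        # Stage 2: identify the view, and therefore the visible anatomy.
        view = self.view_model(frame)
        results: Dict[str, Any] = {"quality": quality, "view": view}
        # Stage 3: run only the models that are valid for this view.
        for name, model in self.downstream.get(view, {}).items():
            results[name] = model(frame)
        return results


# Usage with toy stand-ins: mean pixel intensity plays the role of "quality".
pipeline = GatedPipeline(
    quality_model=fmean,
    view_model=lambda frame: "PLAX",
    downstream={"PLAX": {"n_pixels": len}},
)
print(pipeline.run([0.1, 0.2, 0.3]))  # None: below the quality threshold
print(pipeline.run([0.9, 0.8, 0.7]))
```

Returning `None` for excluded frames keeps the exclusion decision explicit, so no downstream model ever sees an image that failed the quality gate.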

NVIDIA Holoscan SDK v0.4

The NVIDIA Holoscan software development kit provides developers with acceleration libraries, pretrained AI models, and reference applications to build high-performance streaming AI applications.

The NVIDIA Holoscan SDK v0.4 release enables rapid development of software-defined medical applications with C++ and new Python APIs. It also introduces a new Holoscan Inference module, along with a multi-AI demo application featuring three iCardio.ai models.

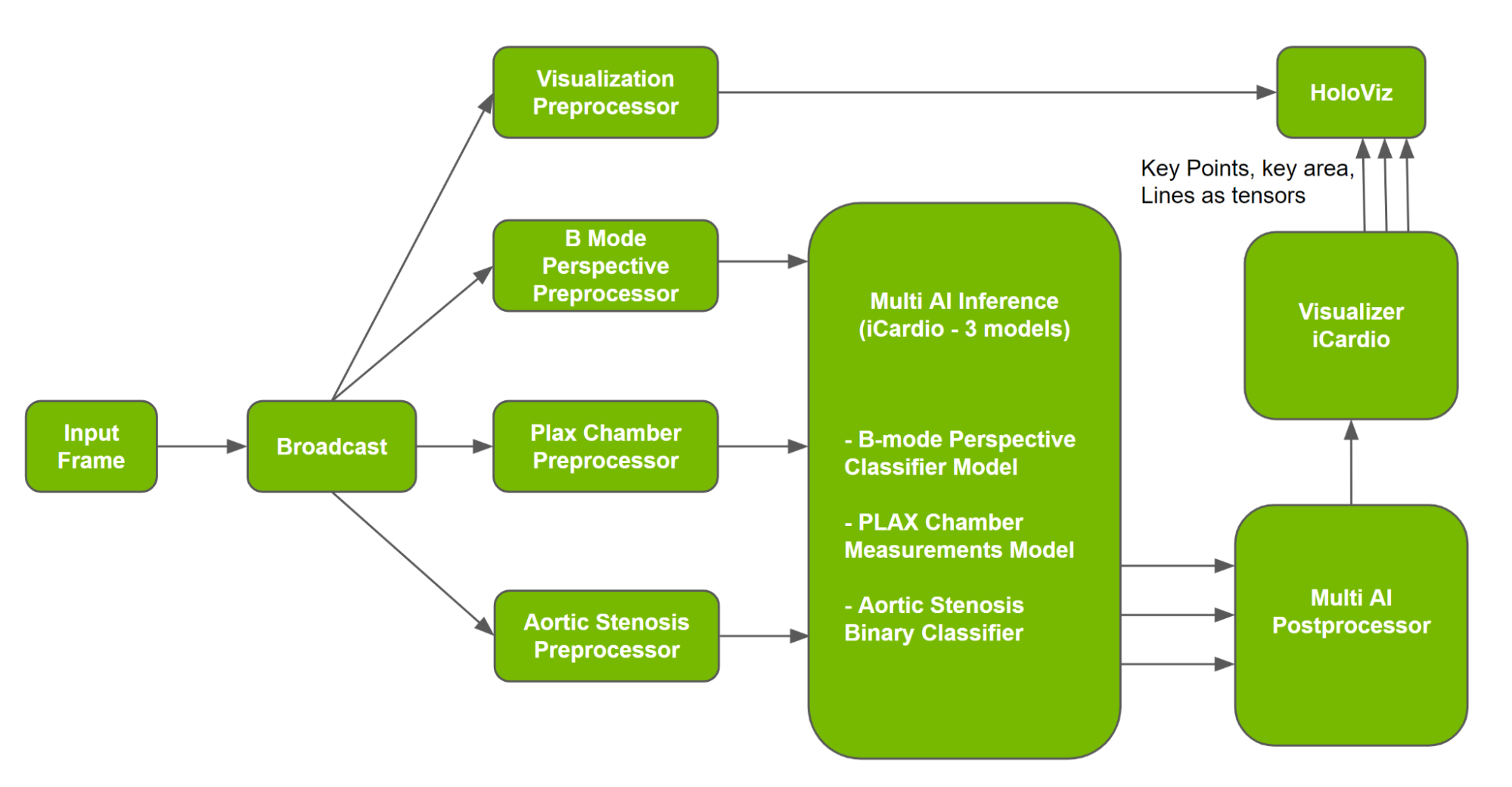

The Holoscan Inference module in the Holoscan SDK is a framework for designing and executing complex, parallel AI inference applications. Running inference in parallel through the multi-AI inference module can improve performance by approximately 30%, so you can fit more models into the same time constraints. To build an AI application, a user initializes the required operators in the Holoscan SDK, specifies the flow between those operators, and configures the operators' parameters.
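To see why running independent models concurrently helps, the following toy Python sketch compares sequential and parallel execution of several models on the same input frame. The models are hypothetical stand-ins that sleep to mimic inference latency; the sketch does not reproduce the ~30% figure above, and real speedups depend on how much the inference backends can actually overlap work on the GPU.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from typing import Any, Callable, Dict, Sequence

Model = Callable[[Sequence[float]], Any]


def fake_model(latency_s: float, label: str) -> Model:
    """Hypothetical model stand-in that sleeps to simulate inference latency."""
    def infer(frame: Sequence[float]) -> str:
        time.sleep(latency_s)
        return f"{label}:{len(frame)}"
    return infer


def run_sequential(models: Dict[str, Model], frame: Sequence[float]) -> Dict[str, Any]:
    # Each model waits for the previous one to finish.
    return {name: model(frame) for name, model in models.items()}


def run_parallel(models: Dict[str, Model], frame: Sequence[float]) -> Dict[str, Any]:
    # Submit all models on the same frame at once, then gather results by name.
    with ThreadPoolExecutor(max_workers=len(models)) as pool:
        futures = {name: pool.submit(model, frame) for name, model in models.items()}
        return {name: future.result() for name, future in futures.items()}


models = {
    "view_classifier": fake_model(0.05, "view"),
    "plax_chamber": fake_model(0.05, "plax"),
    "aortic_stenosis": fake_model(0.05, "as"),
}
frame = [0.0] * 16

for runner in (run_sequential, run_parallel):
    start = time.perf_counter()
    outputs = runner(models, frame)
    print(f"{runner.__name__}: {time.perf_counter() - start:.3f} s")
```

Threads suffice in this toy because `time.sleep` releases the GIL; both runners return identical results, and only the wall-clock time differs.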

The sample multi-AI application in the Holoscan SDK is built from iCardio.ai models and data, a multi-AI Inference operator, and a multi-AI postprocessor operator, among other components. The multi-AI operators (inference and postprocessor) use APIs from the Holoscan Inference component to extract data, initialize and execute the inference workflow, and process and transmit data for visualization. A sample multi-AI pipeline is shown in Figure 2 and is covered in more detail in the Holoscan SDK User Guide.

In the pipeline, Multi AI Inference takes the outputs of three preprocessors and runs inference on them. Multi AI Postprocessor then processes the inferred output according to its configured specifications. iCardio.ai uses the deep network outputs to create visualizations that help clinicians understand the AI measurements and build trust in the system. The Holoscan Visualizer operator generates visualization components for the PLAX chamber output, which are then fed into the Holoviz operator to render the final visualization.
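The flow described above (three preprocessors feeding one inference operator, followed by a postprocessor and a visualizer) can be modeled as a small dataflow graph. The `MiniGraph` class below is a hypothetical toy that only mirrors the "initialize operators, then connect them" pattern; it is not the Holoscan SDK API, and the operator names and lambdas are placeholders.

```python
from graphlib import TopologicalSorter
from typing import Any, Callable, Dict, List


class MiniGraph:
    """Toy dataflow graph (hypothetical; not the Holoscan API)."""

    def __init__(self) -> None:
        self.ops: Dict[str, Callable[..., Any]] = {}
        self.upstream: Dict[str, List[str]] = {}

    def add_operator(self, name: str, fn: Callable[..., Any]) -> None:
        self.ops[name] = fn
        self.upstream.setdefault(name, [])

    def connect(self, src: str, dst: str) -> None:
        # Both operators must already be registered.
        if src not in self.ops or dst not in self.ops:
            raise KeyError(f"unknown operator in {src} -> {dst}")
        self.upstream[dst].append(src)

    def run(self, source_value: Any) -> Dict[str, Any]:
        graph = {name: set(preds) for name, preds in self.upstream.items()}
        outputs: Dict[str, Any] = {}
        for name in TopologicalSorter(graph).static_order():
            preds = self.upstream[name]
            # Root operators receive the source value; others receive upstream
            # outputs in the order their connections were declared.
            args = [outputs[p] for p in preds] if preds else [source_value]
            outputs[name] = self.ops[name](*args)
        return outputs


g = MiniGraph()
g.add_operator("source", lambda frame: frame)
g.add_operator("multi_ai_inference", lambda a, b, c: {"view": a, "plax": b, "as": c})
for pre in ("pre_view", "pre_plax", "pre_as"):
    g.add_operator(pre, lambda x, tag=pre: f"{tag}({x})")
    g.connect("source", pre)
    g.connect(pre, "multi_ai_inference")
g.add_operator("postprocessor", lambda results: results["plax"].upper())
g.add_operator("visualizer", lambda plax: f"overlay[{plax}]")
g.connect("multi_ai_inference", "postprocessor")
g.connect("postprocessor", "visualizer")

print(g.run("frame0")["visualizer"])  # overlay[PRE_PLAX(FRAME0)]
```

Separating operator registration from connection makes the graph easy to rewire, for example to add a fourth preprocessor, without touching the operators themselves.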

About the iCardio.ai models

When choosing models for the first Holoscan Multi AI SDK reference application, the Holoscan team reached out to numerous NVIDIA Inception members building products at the edge of what is technically possible. iCardio.ai’s models were a good match: they must run concurrently, in real time, on the same input, and they produce varied output types. Details about each model are provided below.

Viewpoint Classifier

In clinical practice, echocardiograms are produced by placing a transducer at various points along a patient’s body, at different axes of rotation and degrees of skew. This standard procedure, used around the world, produces over 20 “views” of the heart. Each view shows the heart from a different angle and contains an expected set of cardiac anatomical components.

This model automatically determines which view a given video shows, and therefore which cardiac anatomical components it contains. Determining the view is the first step in downstream processing for both humans and machines.

For each frame, the model outputs a confidence for each of 28 cardiac views, as defined by the guidelines of the American Society of Echocardiography. The confidence of the most prominent class in each frame is averaged over the length of the video to arrive at a definitive view classification. For instance, a confidence of 98% for PLAX Standard indicates that, based on the individual frames in that video, the video most likely shows the PLAX Standard view of the heart.
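A minimal sketch of the video-level aggregation, assuming the model emits one confidence per view for every frame: it averages each view's confidence over all frames and reports the top view. This is one plausible reading of the averaging step, not iCardio.ai's exact rule; the three view names and the confidence values are made up (the real model covers 28 views).

```python
from typing import Sequence, Tuple


def classify_clip(
    frame_confidences: Sequence[Sequence[float]], view_names: Sequence[str]
) -> Tuple[str, float]:
    """Average per-frame view confidences over a clip and return the top view."""
    if not frame_confidences:
        raise ValueError("clip must contain at least one frame")
    n_frames = len(frame_confidences)
    mean_conf = [
        sum(frame[i] for frame in frame_confidences) / n_frames
        for i in range(len(view_names))
    ]
    best = max(range(len(view_names)), key=lambda i: mean_conf[i])
    return view_names[best], mean_conf[best]


views = ["PLAX Standard", "A4C", "PSAX"]  # hypothetical subset of the 28 views
clip = [
    [0.97, 0.02, 0.01],
    [0.99, 0.005, 0.005],
    [0.98, 0.01, 0.01],
]
view, confidence = classify_clip(clip, views)
print(f"{view}: {confidence:.0%}")  # PLAX Standard: 98%
```

Averaging over the whole video smooths out individual frames where the probe briefly drifts toward a neighboring view.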

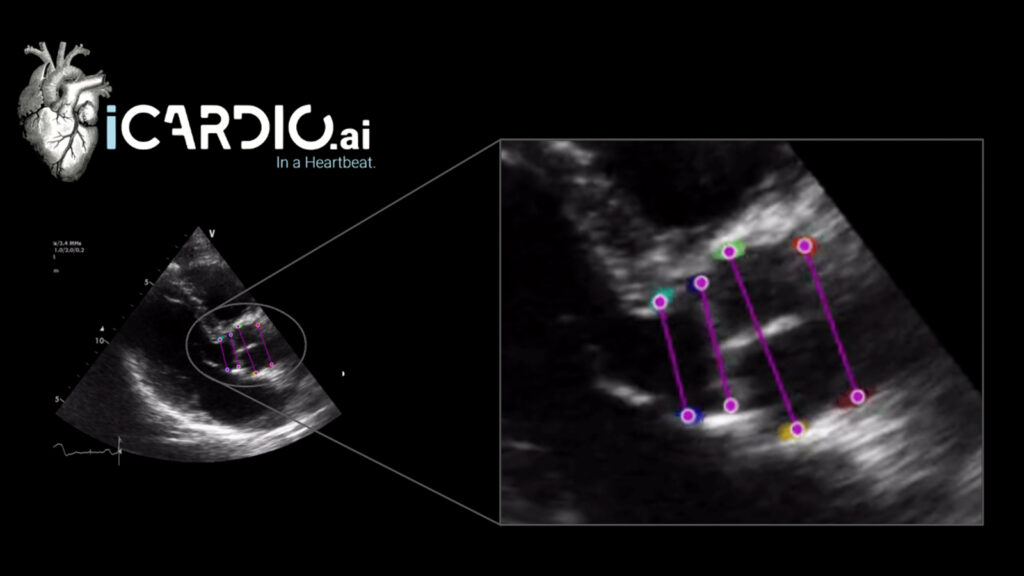

PLAX Chamber Measurements

This model identifies four crucial linear measurements of the heart. From top to bottom, the chamber model automatically generates an estimated caliper placement to measure the diameter of the right ventricle, the thickness of the interventricular septum, the diameter of the left ventricle, and the thickness of the posterior wall.

These measurements are crucial for diagnosing the most common cardiac abnormalities and diseases. For instance, finding that the diameter of the left ventricle is larger than expected for that patient (after accounting for sex and body size) can be a telling sign of diastolic dysfunction or other forms of heart failure.

The model predicts the most likely pixel location of each landmark class. The distance between paired points can then be taken as the length of the underlying anatomical component. By tracking these distances frame by frame, the model calculates the left and right ventricular chamber sizes throughout the cardiac cycle.
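The landmark-to-length step can be sketched as follows, assuming the model outputs one heatmap per landmark and that the pixel spacing (millimeters per pixel) is known from the ultrasound metadata. The landmark names, the heatmap representation, and `mm_per_pixel` are hypothetical; applying the same calculation to every frame gives the range of each measurement across the cardiac cycle.

```python
import math
from typing import Dict, List, Sequence, Tuple

Heatmap = Sequence[Sequence[float]]  # rows x columns of per-pixel scores
Point = Tuple[int, int]  # (row, column)


def peak_location(heatmap: Heatmap) -> Point:
    """Return the (row, column) of the highest-scoring pixel."""
    return max(
        ((r, c) for r, row in enumerate(heatmap) for c in range(len(row))),
        key=lambda rc: heatmap[rc[0]][rc[1]],
    )


def caliper_lengths(
    heatmaps: Dict[str, Heatmap],
    pairs: Dict[str, Tuple[str, str]],
    mm_per_pixel: float,
) -> Dict[str, float]:
    """Measure each caliper as the distance between two landmark peaks."""
    points = {name: peak_location(h) for name, h in heatmaps.items()}
    return {
        measure: math.dist(points[a], points[b]) * mm_per_pixel
        for measure, (a, b) in pairs.items()
    }


def range_over_cycle(
    frames: List[Dict[str, Heatmap]],
    pairs: Dict[str, Tuple[str, str]],
    mm_per_pixel: float,
) -> Dict[str, Tuple[float, float]]:
    """Return (min, max) of each measurement across all frames of a cycle."""
    per_frame = [caliper_lengths(f, pairs, mm_per_pixel) for f in frames]
    return {m: (min(f[m] for f in per_frame), max(f[m] for f in per_frame)) for m in pairs}


def one_hot(rows: int, cols: int, peak: Point) -> List[List[float]]:
    """Toy heatmap with a single hot pixel."""
    return [[1.0 if (r, c) == peak else 0.0 for c in range(cols)] for r in range(rows)]


pairs = {"LVID": ("lv_anterior", "lv_posterior")}  # hypothetical landmark names
frames = [
    {"lv_anterior": one_hot(5, 5, (0, 0)), "lv_posterior": one_hot(5, 5, (3, 4))},
    {"lv_anterior": one_hot(5, 5, (0, 0)), "lv_posterior": one_hot(5, 5, (0, 4))},
]
print(caliper_lengths(frames[0], pairs, mm_per_pixel=0.5))  # {'LVID': 2.5}
print(range_over_cycle(frames, pairs, mm_per_pixel=0.5))  # {'LVID': (2.0, 2.5)}
```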

Aortic Stenosis

Aortic stenosis is a well-studied heart disease affecting the function of the aortic valve. It can restrict the flow of blood from the left ventricle to the rest of the body, and a patient with severe aortic stenosis may suffer from cardiac dysfunction. Early detection of this disease is therefore crucial. Aortic stenosis is known to be undertreated, especially in minorities and people living in rural areas, resulting in increased mortality for these patients.

Diagnosing aortic stenosis can involve measuring multiple parameters, which makes diagnosis by traditional means potentially challenging. This model estimates the propensity for aortic stenosis directly from standard ultrasound B-mode images. This makes diagnosis more accessible for clinicians with less ultrasound experience and for those using less capable probes, such as mobile point-of-care ultrasound devices.
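The article does not describe how the aortic stenosis model turns B-mode frames into a propensity. As a purely illustrative sketch, assuming a binary classifier that emits one logit per frame, a video-level propensity could be computed by converting each logit to a probability and averaging; the function names and the aggregation rule are hypothetical.

```python
import math
from typing import Sequence


def sigmoid(x: float) -> float:
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)


def clip_propensity(frame_logits: Sequence[float]) -> float:
    """Average per-frame probabilities into one clip-level score in [0, 1]."""
    if not frame_logits:
        raise ValueError("clip must contain at least one frame")
    return sum(sigmoid(z) for z in frame_logits) / len(frame_logits)


print(clip_propensity([0.0, 0.0]))  # 0.5
print(round(clip_propensity([2.0, 1.5, 2.5]), 3))
```

Averaging probabilities rather than logits caps the influence of any single frame at 1/n of the score, so one extreme frame cannot dominate; averaging logits would be an equally valid design choice with different behavior.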

Build your own multi-AI medical applications

We hope the iCardio.ai multi-AI reference application has given you an idea of what is possible with NVIDIA Clara Holoscan. To get started building next-generation software-defined medical applications, visit the NVIDIA Holoscan SDK page.

About iCardio.ai

iCardio.ai is a Los Angeles-based company that develops machine learning and deep learning algorithms for the analysis of echocardiograms (heart ultrasounds). iCardio.ai’s core AI product, the iCardio.ai Brain, was trained on its internal dataset of more than 200 million images, making it the largest-scale radiological AI product in the world. iCardio.ai has teamed with some of the largest ultrasound vendors to deliver its technology to clinicians around the globe.