Deep neural networks (DNNs) have been successfully applied to volume segmentation and other medical imaging tasks. They are capable of achieving state-of-the-art accuracy and can augment the medical imaging workflow with AI-powered insights.

However, training robust AI models for medical imaging analysis is time-consuming and tedious, and it requires iterative experimentation with parameter tuning. Automated machine learning (AutoML), which has been studied and developed for years in academia and industry, targets exactly this problem: its objective is to construct AI models without relying on human heuristics.

The latest release of the Clara Train SDK, v3.0, introduced the AutoML module, with the mission to equip researchers and data scientists with cutting-edge tools for AI development in medical imaging analysis.

The Clara Train AutoML functionality makes hyperparameter tuning seamless by automatically searching for the optimal parameter settings to train models. The underlying system is exposed through the MMAR (medical model archive) interface, which gives data scientists a configurable environment for defining training workflow experiments. It provides a standard way to implement state-of-the-art deep learning (DL) solutions.

Research behind the Clara Train AutoML implementation

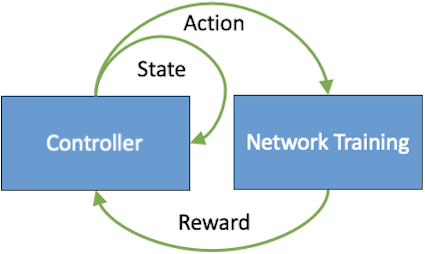

Figure 1 shows that conventional DL pipelines for 3D medical image analysis contain several components (data pre- and post-processing, neural networks, loss functions, and so on). Each of these plays an important role in the efficiency and effectiveness of the model. The optimal DL solution requires finding the best setting among the many combinations of these parameters. AutoML aims to find such globally optimal parameters with little reliance on human heuristics.

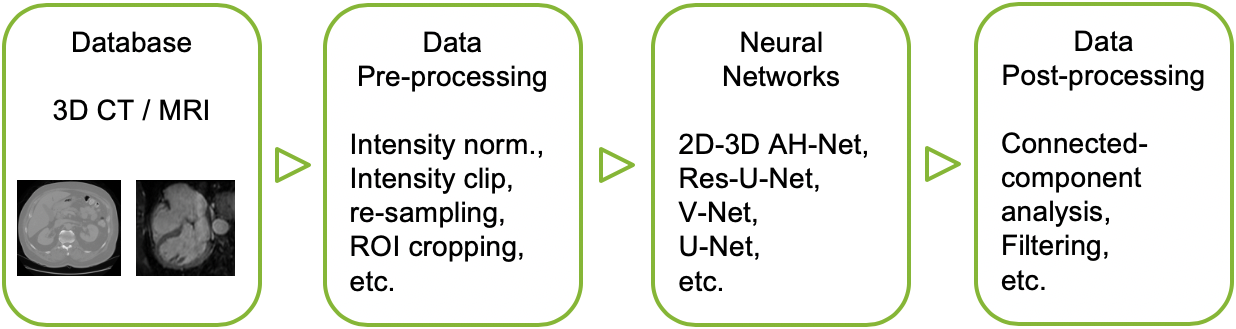

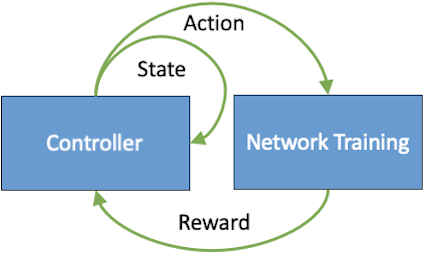

Figure 2 summarizes the most popular AutoML procedure. The “smart” controller manages the entire search procedure. It not only launches training jobs with different parameter settings, but also collects the search history to recommend parameters for the next round of training jobs. The controller can be implemented with deep reinforcement learning (RL), evolutionary, or greedy search algorithms. Depending on the real-world application, you can apply the most suitable algorithm.

AutoML for data augmentation, pre-processing, and hyperparameter optimization

To fully exploit the potential of neural networks, the NVIDIA Medical Imaging Research team proposed an automated approach that uses RL to search for the optimal training strategy. The approach can be used to tune hyperparameters and to select the necessary data augmentations along with their probabilities. It has been validated on several 3D medical image segmentation tasks. The performance of the baseline model is boosted after searching, and it achieves accuracy comparable to other manually tuned state-of-the-art segmentation approaches.

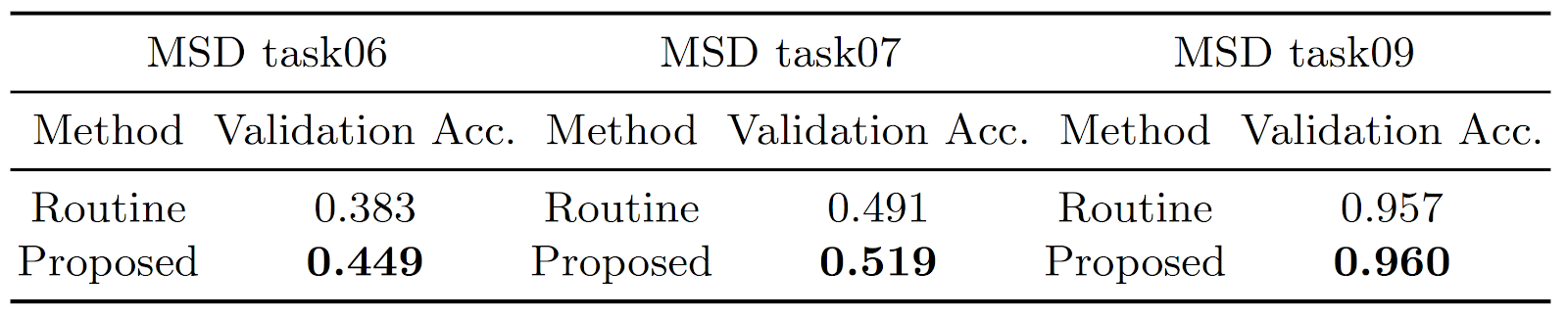

Figure 3 compares the performance of models trained from scratch with and without the proposed AutoML approach. The validation accuracy is the overall average Dice Score (DSC) across subjects and classes, measured on three tasks from the Medical Segmentation Decathlon challenge. For more information, see Searching Learning Strategy with Reinforcement Learning for 3D Medical Image Segmentation.
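For readers unfamiliar with the metric, the Dice score between a predicted mask P and a ground-truth mask G is 2|P ∩ G| / (|P| + |G|). The following is a minimal sketch that computes it for flattened binary masks and averages it over several mask pairs. The function names are illustrative and not part of Clara Train, and the handling of two empty masks is an assumed convention.

```python
def dice_score(pred, truth):
    """Dice coefficient for two equal-length binary masks (sequences of 0/1)."""
    if len(pred) != len(truth):
        raise ValueError("masks must have the same length")
    intersection = sum(1 for p, t in zip(pred, truth) if p and t)
    total = sum(pred) + sum(truth)
    # Assumed convention: two empty masks count as a perfect match.
    return 1.0 if total == 0 else 2.0 * intersection / total


def mean_dice(pairs):
    """Average Dice over (pred, truth) pairs, e.g. one pair per class or subject."""
    scores = [dice_score(pred, truth) for pred, truth in pairs]
    return sum(scores) / len(scores)


# One voxel overlaps out of 2 predicted and 1 true voxel: 2 * 1 / (2 + 1).
example = dice_score([1, 1, 0, 0], [1, 0, 0, 0])
average = mean_dice([([1, 1], [1, 1]), ([1, 0], [0, 1])])
```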

Clara Train v3.0 provides an implementation of this approach as the first iteration of its AutoML module. The goal is to give you a starting point for faster, iterative experimentation, and to let you bring your own implementations of the controller, executor, and handler to the configurable Clara Train MMAR interface.

System architecture of Clara Train AutoML

The key component of the AutoML architecture is the controller, which implements a parameter search strategy that gradually finds the optimal parameter settings within the defined search space. This is a try-and-tune process. The controller first randomly generates an initial set of parameter settings, called recommendations, which are given to the executor to try.

The executor translates recommendations into concrete training parameter settings and performs model training. The result of the training is then fed back to the controller. The controller examines the training result and, based on its parameter search strategy, produces a new set of zero or more recommendations. This interaction pattern is repeated until all recommendations are finished.
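The try-and-tune interaction can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not the Clara Train controller: the class and method names are hypothetical, the strategy is a simple greedy refinement rather than RL, and the executor is a stand-in function that returns a made-up score instead of training a model.

```python
import random


class GreedyController:
    """Toy controller: random start, then local refinement around the best result."""

    def __init__(self, low, high, max_trials=20, seed=0):
        self.low, self.high = low, high
        self.remaining = max_trials  # total number of recommendations allowed
        self.rng = random.Random(seed)
        self.best_value, self.best_score = None, float("-inf")

    def initial_recommendations(self, count):
        count = min(count, self.remaining)
        self.remaining -= count
        return [self.rng.uniform(self.low, self.high) for _ in range(count)]

    def refine(self, value, score):
        """Record one result and return zero or more new recommendations."""
        if score > self.best_score:
            self.best_value, self.best_score = value, score
        if self.remaining <= 0:
            return []  # budget exhausted: no further recommendations
        self.remaining -= 1
        step = 0.1 * (self.high - self.low)
        candidate = self.best_value + self.rng.uniform(-step, step)
        return [min(self.high, max(self.low, candidate))]


def toy_executor(probability):
    """Stand-in for a training job: a made-up score that peaks at 0.3."""
    return 1.0 - (probability - 0.3) ** 2


controller = GreedyController(low=0.0, high=1.0)
pending = controller.initial_recommendations(4)
while pending:  # repeat until all recommendations are finished
    value = pending.pop(0)
    pending.extend(controller.refine(value, toy_executor(value)))
print(controller.best_value, controller.best_score)
```

The loop ends on its own because the controller stops producing recommendations once its trial budget is used up.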

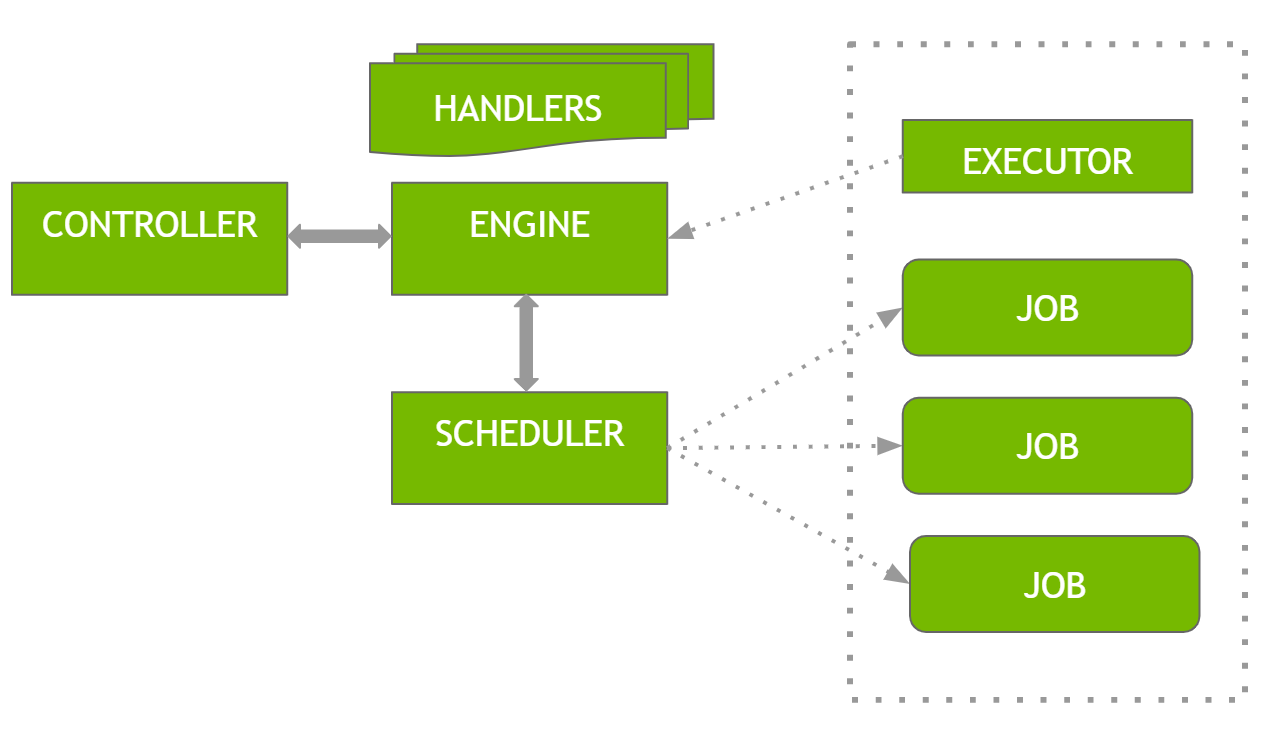

Besides the controller and executor, the architecture has two more components: the engine and the scheduler.

The engine is responsible for the orderly interactions among the other components. All interactions between the executor and controller go through the engine, so that additional processing can be performed by the engine or by handlers.

The following is the system’s logical workflow:

1. The engine calls the executor for the search space definition.

2. The engine calls the controller to set the search space.

3. The engine calls the controller for the initial set of recommendations.

4. The engine calls the scheduler to schedule executions of the recommendations.

5. The scheduler accepts recommendations and schedules them for parallel job executions based on its available resources. If not enough workers are available, jobs are queued up for execution. Each job is executed by the executor in its own thread.

6. As soon as a job is finished, the scheduler reports the result to the engine.

7. Upon receiving a job result, the engine sends the result to the controller and requests the next set of recommendations.

8. Steps 4 to 7 are repeated until all recommendations are executed and no more recommendations are produced by the controller.
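The workflow above can be approximated with Python's standard thread pool, which queues jobs when all workers are busy. This is only a sketch: the stub classes, method names, and scoring function are hypothetical and do not reflect the actual Clara Train interfaces.

```python
from concurrent.futures import FIRST_COMPLETED, ThreadPoolExecutor, wait


class StubExecutor:
    def get_search_space(self):
        return {"probability": (0.0, 1.0)}

    def execute(self, recommendation):
        # Stand-in for model training; returns a made-up score.
        return 1.0 - abs(recommendation["probability"] - 0.5)


class StubController:
    def set_search_space(self, space):
        self.space = space
        self.followups = 2  # number of extra recommendations still to issue

    def initial_recommendations(self):
        low, high = self.space["probability"]
        return [{"probability": low}, {"probability": high}]

    def refine(self, recommendation, score):
        if self.followups == 0:
            return []
        self.followups -= 1
        low, high = self.space["probability"]
        return [{"probability": (low + high) / 2 + 0.1 * self.followups}]


def run_engine(executor, controller, max_workers=2):
    controller.set_search_space(executor.get_search_space())  # steps 1 and 2
    pending = controller.initial_recommendations()            # step 3
    results, running = [], {}
    with ThreadPoolExecutor(max_workers=max_workers) as pool:  # the scheduler
        while pending or running:
            while pending:                                     # steps 4 and 5
                rec = pending.pop(0)
                running[pool.submit(executor.execute, rec)] = rec
            done, _ = wait(list(running), return_when=FIRST_COMPLETED)  # step 6
            for future in done:
                rec = running.pop(future)
                score = future.result()
                results.append((rec, score))
                pending.extend(controller.refine(rec, score))  # step 7
    return results


results = run_engine(StubExecutor(), StubController())
```

Keeping every controller call on the engine's own thread, as done here, means the controller does not have to be thread-safe even though jobs run in parallel.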

This basic processing logic can be extended by the handler mechanism. A handler is a Python class that implements methods to listen to and handle certain events during the AutoML processing. Examples of such events include the following:

- AutoML is about to start.

- AutoML has completed.

- Search space is available.

- Recommendations are available.

- A job is about to start.

- A job has finished.

The possibilities of handlers are limitless. For example, you can use handlers to collect and analyze search space and recommendations, generate reports, and even alter training behavior. For more information, see AutoML workflow.
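As an illustration of the idea, the following sketch shows a handler that records events and remembers the best-scoring job. The method names, the `fire_event` dispatcher, and the event payloads are all hypothetical; the actual base class and signatures are defined by the Clara Train SDK and may differ.

```python
class ReportHandler:
    """Hypothetical handler: logs events and remembers the best-scoring job."""

    def __init__(self):
        self.log = []
        self.best = None  # (job_name, score) of the best job seen so far

    def automl_starting(self):
        self.log.append("AutoML starting")

    def search_space_available(self, search_space):
        self.log.append(f"search space: {sorted(search_space)}")

    def recommendations_available(self, recommendations):
        self.log.append(f"{len(recommendations)} recommendation(s) available")

    def job_starting(self, job_name):
        self.log.append(f"{job_name} starting")

    def job_finished(self, job_name, score):
        self.log.append(f"{job_name} finished with score {score:.3f}")
        if self.best is None or score > self.best[1]:
            self.best = (job_name, score)

    def automl_completed(self):
        self.log.append(f"AutoML completed, best job: {self.best}")


def fire_event(handlers, event, *args):
    """Call the method named `event` on every handler that defines it."""
    for handler in handlers:
        method = getattr(handler, event, None)
        if callable(method):
            method(*args)


handler = ReportHandler()
fire_event([handler], "automl_starting")
fire_event([handler], "search_space_available", {"probability": (0.0, 1.0)})
fire_event([handler], "recommendations_available", [{"probability": 0.2}])
fire_event([handler], "job_starting", "job_1")
fire_event([handler], "job_finished", "job_1", 0.81)
fire_event([handler], "automl_completed")
print("\n".join(handler.log))
```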

Configuring an AutoML experiment with the Clara Train MMAR

The Clara Train MMAR includes a config_train.json file that defines a training configuration. This JSON format is extended with parameters that define the AutoML search space. A training config file is defined by a combination of components, including model, loss, optimizer, transforms, metrics, and image pipelines.

The general format of the component config is shown in the following code example:

    {
        "name/path": "Class name or path",
        "args": {
            Component init args
        },
        attributes
    },

To fully describe a component, you must specify the following:

- Class information: Python objects are instantiated from classes. You can specify the class path through either the name or the path element. The path element enables you to bring your own components to the Clara Training framework.

- Args: Specifies the values of the initial args for the Python object.

- Attributes: Additional attributes can be specified. Currently, the only supported attribute is disabled, which allows you to control the execution of individual components.
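To make the three parts concrete, here is a minimal sketch of how a component config of this shape could be turned into a Python object. It is not the Clara Train loader: `build_component`, the `registry` lookup for named classes, and the `Flip` class are assumptions made for this example, and `fractions.Fraction` is used only because it is a standard-library class that can be imported by path.

```python
import importlib


def build_component(config, registry=None):
    """Instantiate a component from a config dict, or return None if disabled."""
    if config.get("disabled", False):
        return None  # a disabled component is skipped entirely
    args = config.get("args", {})
    if "path" in config:
        # "path" is a dotted class path, which allows bring-your-own classes.
        module_name, _, class_name = config["path"].rpartition(".")
        cls = getattr(importlib.import_module(module_name), class_name)
    else:
        # "name" is resolved against a table of known classes (assumed here).
        cls = (registry or {})[config["name"]]
    return cls(**args)


class Flip:
    """Stand-in for a built-in transform, used only in this example."""

    def __init__(self, probability=0.0):
        self.probability = probability


registry = {"Flip": Flip}
by_name = build_component({"name": "Flip", "args": {"probability": 0.5}}, registry)
by_path = build_component(
    {"path": "fractions.Fraction", "args": {"numerator": 3, "denominator": 4}}
)
skipped = build_component({"name": "Flip", "disabled": True}, registry)
```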

Define a search space

You can define a search over the args and attributes of any component by adding a search element to the component definition, as shown in the following code example:

    {
        "name": "RandomAxisFlip",
        "args": {
            "fields": [
                "image",
                "label"
            ],
            "probability": 0.0
        },
        "search": [
            {
                "domain": "transform",
                "type": "float",
                "args": ["probability"],
                "targets": [0.0, 1.0]
            }
        ]
    }
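One way to picture what happens to such a definition is the sketch below, which turns the RandomAxisFlip example into a concrete component config by drawing a value for each searched arg. It assumes that targets gives the lower and upper bounds of a float range, which is a reading of the example rather than a documented rule, and it samples uniformly at random instead of using the RL controller. The function name is hypothetical.

```python
import copy
import random


def apply_random_recommendation(component, rng):
    """Return a copy of the component with every searched float arg sampled."""
    concrete = copy.deepcopy(component)  # leave the original definition intact
    for entry in concrete.pop("search", []):
        if entry["type"] != "float":
            raise NotImplementedError("this sketch only handles float searches")
        low, high = entry["targets"]  # assumed to be [lower bound, upper bound]
        for arg in entry["args"]:
            concrete["args"][arg] = rng.uniform(low, high)
    return concrete


component = {
    "name": "RandomAxisFlip",
    "args": {"fields": ["image", "label"], "probability": 0.0},
    "search": [
        {
            "domain": "transform",
            "type": "float",
            "args": ["probability"],
            "targets": [0.0, 1.0],
        }
    ],
}

concrete = apply_random_recommendation(component, random.Random(0))
print(concrete["args"]["probability"])
```

The returned config no longer contains the search element, so it has the same shape as an ordinary training component.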

You can assign an alias to any searched arg and reuse the alias in other components. All first-level params can be searched. The search is not limited to transforms: it can also be applied to optimizers, models, losses, and learning rate policies.

With the search element, you can use the Clara Train MMAR to define experiments, refine the training configs, and converge on an optimal recipe. When you have an initial configuration running, you can make use of the Enum feature of AutoML, which enables you to run a scheduler for the search space.

AutoML can search floating-point parameters using RL alongside the grid search approach. With this capability, available GPUs are never left idle, because more refined recommendation experiments keep getting scheduled. The AutoML engine is always at work augmenting your experiments.
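For intuition, a grid search over enumerated choices simply tries every combination of the listed values. The sketch below shows that enumeration in plain Python. It does not use the AutoML Enum syntax, and the parameter names and values are hypothetical.

```python
from itertools import product


def enumerate_grid(choices):
    """Yield one dict per combination of the enumerated parameter values."""
    names = list(choices)
    for values in product(*(choices[name] for name in names)):
        yield dict(zip(names, values))


grid = list(
    enumerate_grid(
        {
            "optimizer": ["Adam", "SGD"],           # hypothetical choices
            "learning_rate": [1e-4, 1e-3, 1e-2],    # hypothetical choices
        }
    )
)
print(len(grid))
```

The number of jobs grows as the product of the list lengths, which is why continuous parameters are better left to a guided search.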

As AutoML runs, it creates a separate MMAR folder for each run. You can then track convergence using TensorBoard. AutoML can be configured to run hundreds of experiments to find the best parameter settings. Furthermore, you can configure how many configurations to search and when to stop the search.

Next steps

Download the SDK and the AutoML MMAR, and see the Clara Training Framework. To read about the latest developments, visit the Clara Train SDK forum.