At NVIDIA GTC last week, Jensen Huang laid out the vision for realizing multi-Million-X speedups in computational performance. The breakthrough could solve the challenge of computational requirements faced in data-intensive research, helping scientists further their work.

Solving challenges with Million-X computing speedups

Million-X unlocks new worlds of potential and the applications are vast. Current examples from NVIDIA include accelerating drug discovery, accurately simulating climate change, and driving the future of manufacturing.

Drug discovery

Researchers at NVIDIA, Caltech, and the startup Entos blended machine learning and physics to create OrbNet, speeding up molecular simulations by many orders of magnitude. As a result, Entos can accelerate its drug discovery simulations by 1,000x, finishing in 3 hours what would have taken more than 3 months.
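
As a quick sanity check on those figures, the short Python sketch below works backward from the 3-hour accelerated run and the 1,000x speedup to the implied baseline runtime. The 30-day month is an assumption made only for this illustration.

```python
HOURS_PER_DAY = 24
DAYS_PER_MONTH = 30  # assumption: a rough average month length


def baseline_days(accelerated_hours, speedup):
    """Runtime in days that the same job would need without the speedup."""
    return accelerated_hours * speedup / HOURS_PER_DAY


days = baseline_days(accelerated_hours=3, speedup=1000)
months = days / DAYS_PER_MONTH
print(f"{days:.0f} days, about {months:.1f} months")
```

A 1,000x speedup on a 3-hour run implies a baseline of 3,000 hours, which is consistent with the "more than 3 months" quoted above.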

Climate change

Last week, Jensen Huang announced plans to build Earth 2, a digital twin of the Earth in Omniverse. The world’s most powerful AI supercomputer will be dedicated to simulating climate models that predict the impacts of global warming in different places across the globe. Understanding these changes over time can help humanity plan for and mitigate them at a regional level.

Future manufacturing

Earth 2 is not the first digital twin project enabled by NVIDIA. Researchers are already building physically accurate digital twins of cities and factories. The simulation frontier is still young and full of potential, waiting for the catalyst that massive increases in computing will provide.

Share your Million-X challenge

Share how you are using Million-X computing on Facebook, LinkedIn, or Twitter using #MyMillionX and tagging @NVIDIAHPCDev.

The NVIDIA developer community is already changing the world, using technology to solve difficult challenges. Join the community.

Below are a handful of notable examples.

The community that is changing the world

Smart waterways, safer public transit, and eco-monitoring in Antarctica

The work of Johan Barthelemy is interdisciplinary and covers a variety of industries. As the head of the University of Wollongong’s Digital Living Lab, he aims to deliver innovative AIoT solutions that champion ethical and privacy-compliant AI.

Currently, Barthelemy is working on an assortment of projects, including a smart waterways computer vision application that detects stormwater blockage in real time, helping cities prevent city-wide issues.

Another project, currently being deployed in multiple cities, is AI camera software that detects and reports violence on Sydney trains through aggressive stance modeling.

An AIoT platform for remotely monitoring Antarctica’s terrestrial environment is also in the works. Built around an NVIDIA Jetson Xavier NX edge computer, the platform will be used to monitor the evolution of moss beds—their health being an early indicator of the impact of climate change. The data collected will also inform a variety of models developed by the Securing Antarctica’s Environmental Future community of researchers, in particular hydrology and microclimate models.

Connect: LinkedIn | Twitter | Digital Living Lab

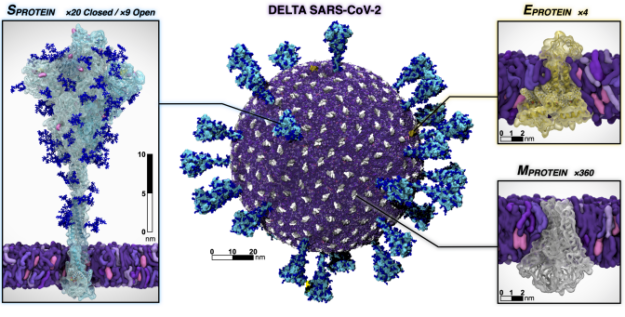

Never-before-seen views of SARS-CoV-2

NVIDIA researchers and 14 partners successfully developed a platform to explore the composition, structure, and dynamics of aerosols and aerosolized viruses at the atomic level.

This work overcomes the previously limited ability to examine aerosols at the atomic and molecular level, a limitation that had obscured our understanding of airborne transmission. Leveraging the platform, the team produced a series of novel discoveries regarding the SARS-CoV-2 Delta variant.

These breakthroughs dramatically extend the capabilities of multiscale computational microscopy in experimental methods. The full impact of the project has yet to be realized.

Species recognition, environmental monitoring, and adaptive streaming

Dr. Albert Bifet is the Director of Te Ipu o te Mahara, the Artificial Intelligence Institute at the University of Waikato, and Professor of Big Data at Télécom Paris, Institute.

Bifet also leads the TAIAO project, a data science program using an NVIDIA DGX A100 to build deep learning models on species recognition. He is codeveloping a new machine-learning library in Python called River for online/streaming machine learning, and building a new data repository to improve reproducibility in environmental data science.
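
To illustrate what online/streaming machine learning means in practice, here is a minimal pure-Python sketch of a model that sees one example at a time and is scored before it learns from each example (often called prequential evaluation). It does not use River. The class, the method names `predict_proba_one` and `learn_one`, and the synthetic data stream are all assumptions made for this illustration.

```python
import math
import random


class OnlineLogisticRegression:
    """Logistic regression updated one example at a time with SGD."""

    def __init__(self, lr=0.1):
        self.lr = lr
        self.weights = {}  # feature name -> weight, grown lazily
        self.bias = 0.0

    def predict_proba_one(self, x):
        z = self.bias + sum(self.weights.get(k, 0.0) * v for k, v in x.items())
        z = max(-30.0, min(30.0, z))  # clamp to keep exp() well-behaved
        return 1.0 / (1.0 + math.exp(-z))

    def learn_one(self, x, y):
        # Gradient of the log loss for a single example.
        error = self.predict_proba_one(x) - y
        for k, v in x.items():
            self.weights[k] = self.weights.get(k, 0.0) - self.lr * error * v
        self.bias -= self.lr * error


def synthetic_stream(n, seed=0):
    """Hypothetical stream: label is 1 when the two features sum above zero."""
    rng = random.Random(seed)
    for _ in range(n):
        x = {"a": rng.uniform(-1, 1), "b": rng.uniform(-1, 1)}
        yield x, int(x["a"] + x["b"] > 0)


def prequential_accuracy(model, stream):
    """Test-then-train: predict on each example first, then learn from it."""
    correct = total = 0
    for x, y in stream:
        y_pred = int(model.predict_proba_one(x) >= 0.5)
        correct += int(y_pred == y)
        total += 1
        model.learn_one(x, y)
    return correct / total


accuracy = prequential_accuracy(OnlineLogisticRegression(), synthetic_stream(2000))
print(f"prequential accuracy: {accuracy:.3f}")
```

The point of the design is that the model never holds the full dataset in memory, which is what makes this style a fit for continuous environmental sensor data.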

Additionally, researchers at TAIAO are building new approaches to compute GPU-based SHAP values for XGBoost, and developing a new adaptive streaming XGBoost.
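
For readers unfamiliar with SHAP, the values are based on Shapley values from cooperative game theory: each feature is credited with its average marginal contribution to a prediction. The sketch below computes them by brute force over every feature ordering for a toy function. It is not the TAIAO method, not a GPU implementation, and not tied to XGBoost. The toy model and the choice of an all-zero baseline to stand in for "missing" features are assumptions made for this illustration.

```python
from itertools import permutations


def shapley_values(predict, instance, baseline):
    """Exact Shapley values by averaging marginal contributions over all orderings.

    Features not yet 'revealed' take their baseline value (an assumption;
    other definitions of a missing feature are possible).
    """
    names = list(instance)
    totals = {name: 0.0 for name in names}
    orderings = list(permutations(names))
    for order in orderings:
        current = dict(baseline)
        previous = predict(current)
        for name in order:
            current[name] = instance[name]
            value = predict(current)
            totals[name] += value - previous
            previous = value
    return {name: total / len(orderings) for name, total in totals.items()}


def toy_model(x):
    """Hypothetical model with one additive term and one interaction term."""
    return 2.0 * x["a"] + x["b"] * x["c"]


instance = {"a": 1.0, "b": 2.0, "c": 3.0}
baseline = {"a": 0.0, "b": 0.0, "c": 0.0}
phi = shapley_values(toy_model, instance, baseline)
print(phi)
```

The cost grows factorially with the number of features, which is one reason faster, tree-specific, and GPU-based approaches are an active area of work.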

Connect: Website | LinkedIn | Twitter

Medical imaging, therapy robots, and NLP depression detection

The current interests of Dr. Ekapol Chuangsuwanich fall within the medical imaging domain, including chest x-ray and histopathology technology. Over the past few years, however, his work has spanned many fields, including natural language processing (NLP), automatic speech recognition (ASR), and medical imaging.

Last year, Chuangsuwanich and his team developed the PYLON architecture, which can learn precise pixel-level object location with only image-level annotation. This is deployed across hospitals in Thailand to provide rapid COVID-19 severity assessments and to facilitate screening of tuberculosis in high-risk communities.

Additionally, he is working on NLP and ASR robots for medical use, including a speech therapy helper and call center robot with depression detection functionality. His startup, Gowajee, is also providing state-of-the-art ASR and TTS for the Thai language. These projects have been created using the NVIDIA NeMo framework and deployed on NVIDIA Jetson Nano devices.

Connect: Website | Org | Facebook

Trillion-atom quantum-accurate molecular dynamics simulations

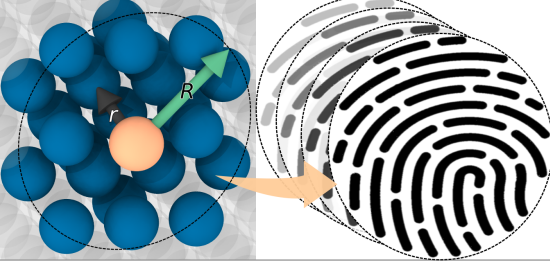

Figure 5. Atomic radial cutoff used to generate descriptors, represented as fingerprints.

Researchers from the University of South Florida, NVIDIA, Sandia National Labs, NERSC, and the Royal Institute of Technology collaborated to produce a LAMMPS-trained machine learning kernel with interatomic potentials named SNAP (Spectral Neighbor Analysis Potential).

SNAP was found to be accurate across a huge pressure-temperature range, from 0 to 50 Mbar and from 300 to 20,000 K. Peak molecular dynamics (MD) performance was more than 22x the previous record, achieved on a 20-billion-atom system simulated on Summit at a rate of 1 ns per day.

The project qualified as a Gordon Bell Prize finalist, and the near-perfect weak scaling of SNAP MD highlights the potential to launch quantum-accurate MD to trillion-atom simulations on upcoming exascale platforms. This dramatically expands the scientific return of X-ray free electron laser diffraction experiments.
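
Weak scaling means the problem grows in proportion to the number of nodes, so an ideal run takes the same wall-clock time at every scale. The sketch below shows how weak scaling efficiency is commonly computed as the baseline time divided by the time at each larger scale. The timings are invented for illustration and are not measurements from the SNAP project.

```python
def weak_scaling_efficiency(timings):
    """Map node count -> efficiency relative to the smallest run.

    `timings` maps node count to wall-clock seconds, with work per node
    held constant across runs.
    """
    base_nodes = min(timings)
    base_time = timings[base_nodes]
    return {nodes: base_time / seconds for nodes, seconds in sorted(timings.items())}


# Hypothetical timings, made up for this example only.
hypothetical_timings = {1: 10.0, 8: 10.2, 64: 10.5, 512: 11.0}
efficiency = weak_scaling_efficiency(hypothetical_timings)
for nodes, value in efficiency.items():
    print(f"{nodes:>4} nodes: {value:.1%}")
```

An efficiency that stays close to 100% as nodes are added is what suggests a larger machine can take on a proportionally larger system.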

Bioinformatics, smart cities, and translational research

Dr. Ng See-Kiong is constantly in search of big data. A practicing data scientist, See-Kiong is also a Professor of Practice and Director of Translational Research at the National University of Singapore.

Current projects on his desk leverage the NVIDIA NeMo framework for NLP in indigenous and vernacular languages across Singapore and New Zealand. See-Kiong is also working on intelligent COVID-19 contact tracing and outbreak, intelligent social event sensing, and assessing the credibility of information in new media.

Connect: Website