Today, NVIDIA is releasing a SIGGRAPH 2021 technical paper, “Real-time Neural Radiance Caching for Path Tracing,” that introduces another leap forward in real-time global illumination: Neural Radiance Caching.

Global illumination, that is, illumination due to light bouncing around in a scene, is essential for rich, realistic visuals. Computing it is challenging, even in cinematic rendering, because it is difficult to find all the paths of light that contribute meaningfully to an image. Solving this problem through brute force requires hundreds, sometimes thousands, of paths per pixel, which is far too expensive for real-time rendering.
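To give a feel for why brute force needs so many paths, here is a minimal sketch of a Monte Carlo pixel estimate. It is not a path tracer: the integrand is a hypothetical stand-in in which only 5% of random paths reach a bright light, and the names `estimate_pixel` and `rms_error` are made up for this illustration. Under those assumptions, the error shrinks only with the square root of the path count.

```python
import math
import random

def estimate_pixel(num_paths, rng):
    """Average num_paths random samples of a toy 'radiance' integrand."""
    total = 0.0
    for _ in range(num_paths):
        # Hypothetical integrand: 5% of paths find a bright light (value 20),
        # the rest contribute nothing. The true pixel value is 0.05 * 20 = 1.
        total += 20.0 if rng.random() < 0.05 else 0.0
    return total / num_paths

def rms_error(num_paths, trials=500, seed=0):
    """Root-mean-square error of the estimate over many independent pixels."""
    rng = random.Random(seed)
    true_value = 1.0
    sq = sum((estimate_pixel(num_paths, rng) - true_value) ** 2
             for _ in range(trials))
    return math.sqrt(sq / trials)

for n in (1, 16, 256):
    print(n, "paths per pixel -> RMS error", round(rms_error(n), 3))
```

In this toy setting, using 16 times as many paths cuts the error by only about a factor of 4, which is why a noise-free image by brute force is so costly.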

Before NVIDIA RTX introduced real-time ray tracing to games, global illumination in games was largely static. Since then, technologies such as RTXGI have brought dynamic global illumination to life. They overcome the limited realism of pre-computed “baked” lighting in dynamic worlds and simplify an otherwise tedious lighting design process.

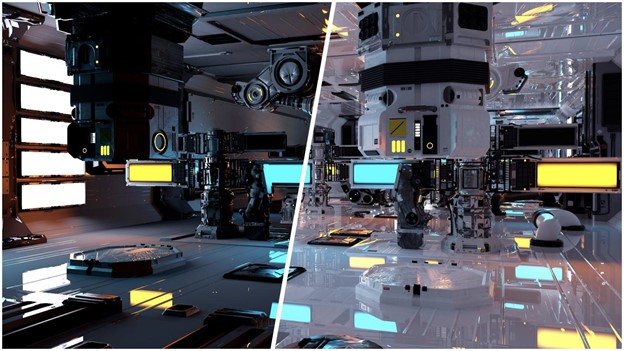

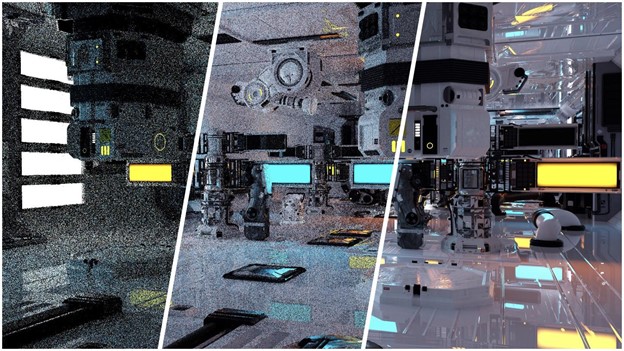

Neural Radiance Caching combines RTX’s neural network acceleration hardware (NVIDIA Tensor Cores) and ray tracing hardware (NVIDIA RT Cores) to create a system capable of fully dynamic global illumination that works with all kinds of materials, be they diffuse, glossy, or volumetric. It handles fine-scale textures such as albedo, roughness, or bump maps, and scales to large outdoor environments, requiring neither auxiliary data structures nor scene parameterizations.

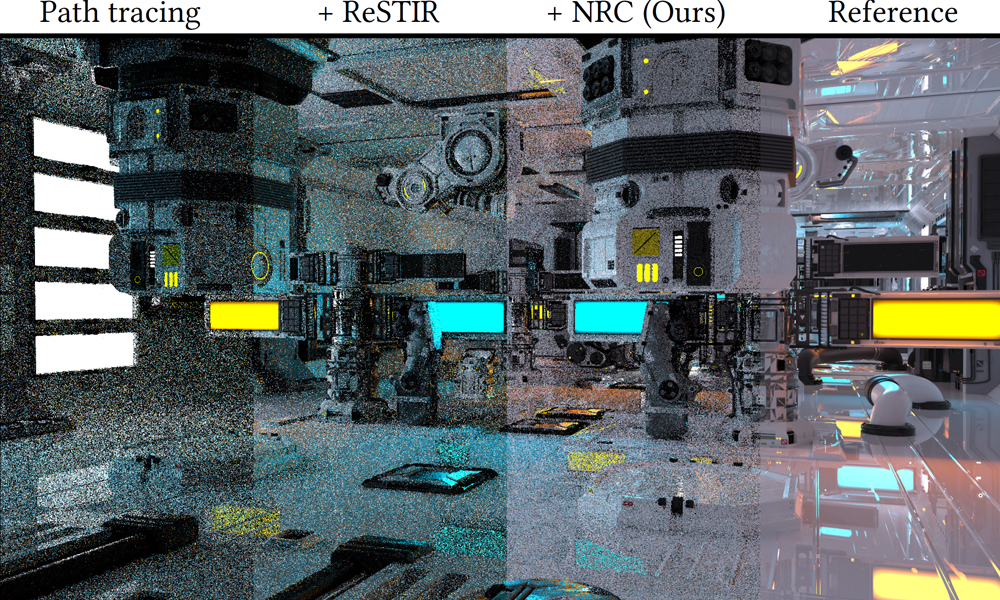

Combined with NVIDIA’s state-of-the-art direct lighting algorithm, ReSTIR, Neural Radiance Caching can improve the rendering efficiency of global illumination by up to a factor of 100, or two orders of magnitude.

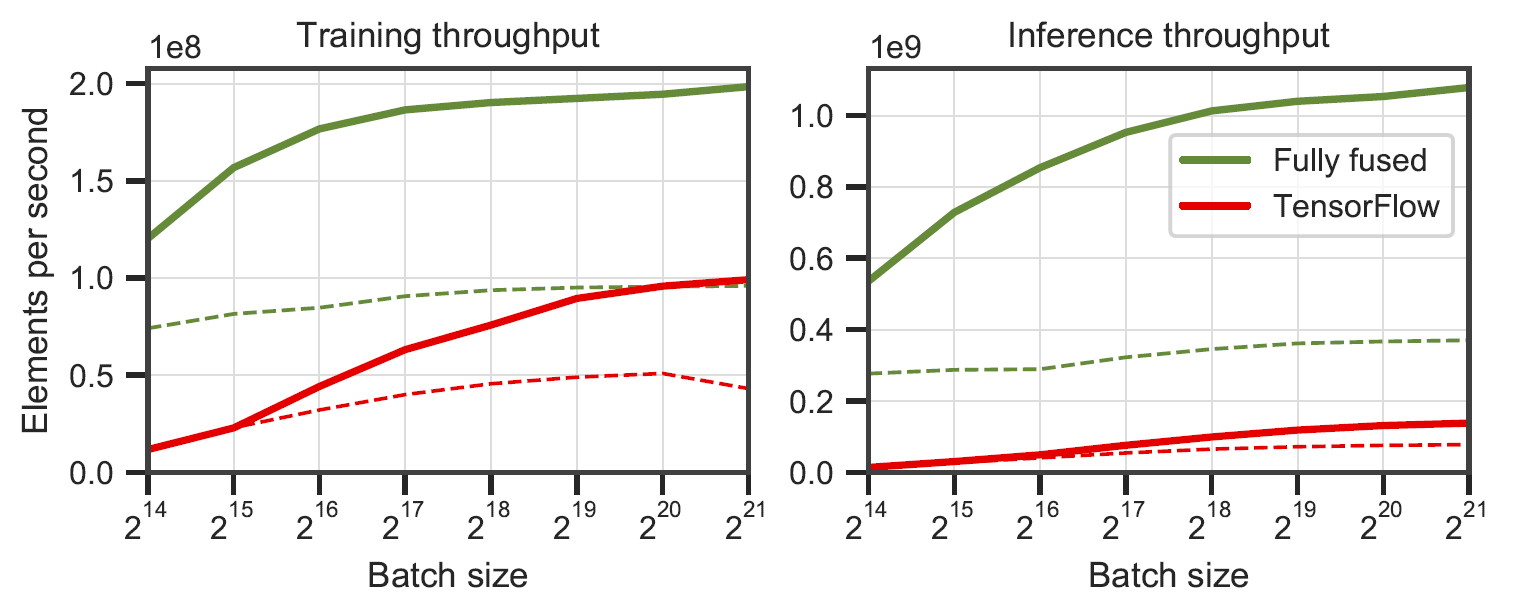

At the heart of the technology is a single tiny neural network that runs up to 9x faster than TensorFlow v2.5.0. Its speed makes it possible to train the network live during gameplay in order to keep up with arbitrary dynamic content. On an NVIDIA RTX 3090 graphics card, Neural Radiance Caching can provide over 1 billion global illumination queries per second.

Training is often considered an offline process with only inference occurring at runtime. In contrast, Neural Radiance Caching performs both training and inference at runtime, showing that real-time training of neural networks is practical.
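The idea of training and querying in the same frame can be sketched in a few lines. The following is a toy illustration, not the paper's implementation: `TinyMLP`, `scene_radiance`, and `simulate` are hypothetical names, the "scene" is a one-dimensional function whose lighting drifts over time, and the network is a plain one-hidden-layer MLP trained with SGD on the CPU. It compares a network that keeps training every frame against a copy that is frozen after the first frame.

```python
import copy
import math
import random

class TinyMLP:
    """One hidden ReLU layer, scalar input and output (toy stand-in for a cache)."""
    def __init__(self, hidden=16, seed=0):
        rng = random.Random(seed)
        self.w1 = [rng.uniform(-1, 1) for _ in range(hidden)]
        self.b1 = [rng.uniform(-1, 1) for _ in range(hidden)]
        self.w2 = [rng.uniform(-0.5, 0.5) for _ in range(hidden)]
        self.b2 = 0.0

    def query(self, x):
        """Inference: predict the cached value at x."""
        h = [max(0.0, w * x + b) for w, b in zip(self.w1, self.b1)]
        return sum(w * a for w, a in zip(self.w2, h)) + self.b2

    def train_step(self, x, target, lr=0.02):
        """One SGD step on the squared error of a single (x, target) sample."""
        pre = [w * x + b for w, b in zip(self.w1, self.b1)]
        h = [max(0.0, p) for p in pre]
        err = sum(w * a for w, a in zip(self.w2, h)) + self.b2 - target
        for i in range(len(h)):
            # Gradient through the ReLU, using the weight before it is updated
            grad_h = err * self.w2[i] if pre[i] > 0 else 0.0
            self.w2[i] -= lr * err * h[i]
            self.w1[i] -= lr * grad_h * x
            self.b1[i] -= lr * grad_h
        self.b2 -= lr * err

def scene_radiance(x, frame):
    """Hypothetical dynamic scene: the lighting pattern drifts every frame."""
    return 0.5 + 0.5 * math.sin(3.0 * x + 0.01 * frame)

def mse(net, frame):
    xs = [i / 20 for i in range(21)]
    return sum((net.query(x) - scene_radiance(x, frame)) ** 2 for x in xs) / len(xs)

def simulate(frames=628, samples_per_frame=32, seed=1):
    rng = random.Random(seed)
    online, frozen = TinyMLP(), None
    online_err = frozen_err = 0.0
    for frame in range(frames):
        # Train on a few fresh samples from the current frame...
        for _ in range(samples_per_frame):
            x = rng.random()
            online.train_step(x, scene_radiance(x, frame))
        if frozen is None:
            frozen = copy.deepcopy(online)  # trained on the first frame only
        # ...then answer queries in the same frame.
        online_err += mse(online, frame)
        frozen_err += mse(frozen, frame)
    return online_err / frames, frozen_err / frames

online_err, frozen_err = simulate()
print(f"online: {online_err:.4f}  frozen: {frozen_err:.4f}")
```

In this sketch the frozen copy cannot follow the drifting lighting, while the continuously trained network adapts as the scene changes. The real system differs in many ways (inputs, loss, network size, GPU execution), so treat this only as an intuition aid.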

This paper paves the way for using dynamic neural networks in various other areas of computer graphics and possibly other real-time fields, such as wireless communication and reinforcement learning.

“Our work represents a paradigm shift from complex, memory-intensive auxiliary data structures to generic, compute-intensive neural representations,” says Thomas Müller, one of the paper’s authors. “Computation is significantly cheaper than memory transfers, which is why real-time trained neural networks make sense despite their massive number of operations.”

We are excited about future applications enabled by tiny real-time-trained neural networks and look forward to further research into real-time machine learning in computer graphics and beyond. To help researchers and developers adopt the technology, NVIDIA is releasing the CUDA source code of its tiny neural networks.

Learn more: Check out the project website.