Are you a fan of synthesizer-driven bands like Depeche Mode, Erasure, or Kraftwerk? Have you ever thought about how cool it would be to create your own music with a synthesizer at home? And what if that process could be enhanced with the help of an NVIDIA Jetson Nano?

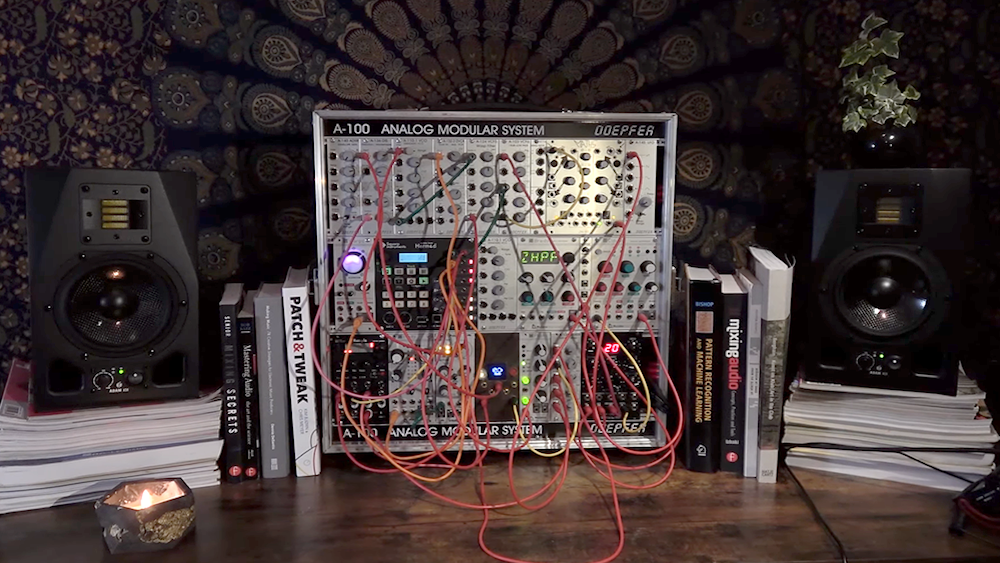

The latest Jetson Project of the Month has found a way to do just that, combining a Eurorack synthesizer with a Jetson Nano to create the Neurorack. According to its developers, this audio synthesizer is the first to combine the power of deep generative models with the compactness of a Eurorack module.

“The goal of this project is to design the next generation of musical instruments, providing a new tool for musicians while enhancing the musician’s creativity. It proposes a novel approach to think [about] and compose music,” noted the developers, who are members of the Artificial Creative Intelligence and Data Science (ACIDS) group, based at the IRCAM Laboratory in Paris, France. “We deeply think that AI can be used to achieve this quest.”

The real-time capabilities of the Neurorack rely on Jetson Nano’s processing power and Ninon Devis’ research into crafting trained models that are lightweight in both computation and memory footprint.

“Our original dream was to find a way to miniaturize deep models and allow them inside embedded audio hardware and synthesizers. As we are passionate about all forms of synthesizers, and especially Eurorack, we thought that it would make sense to go directly for this format as it was more fun! The Jetson Nano was our go-to choice right at the onset … It allowed us to rely on deep models without losing audio quality, while maintaining real-time constraints,” said Devis.


The developers had several key design considerations as they approached this project, including:

- Musicality: the generative model chosen can produce sounds that are impossible to synthesize without using samples.

- Controllability: the interface that they picked is handy and easy to manipulate.

- Real-time: the hardware behaves like a traditional synthesizer and is equally reactive.

- Standalone operation: it can be played without a computer.

As the developers note in their NVIDIA Developer Forum post about this project: “The model is based on a modified Neural-Source Filter architecture, which allows real-time descriptor-based synthesis of percussive sounds.”

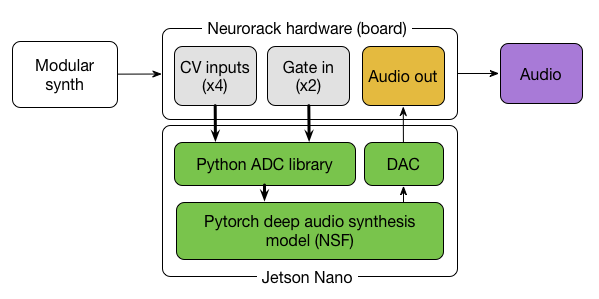

Neurorack uses PyTorch deep audio synthesis models (see Figure 1) to produce sounds that typically require samples. It is easy to manipulate and doesn’t require a separate computer.

The hardware features four control voltage (CV) inputs and two gates (along with a screen, rotary, and button for handling the menus), which all communicate with specific Python libraries. The behavior of these controls (and the module itself) is highly dependent on the type of deep model embedded. For this first version of the Neurorack, the developers implemented a descriptor-based impact sounds generator, described in their GitHub documentation.
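To make the control path a little more concrete, here is a minimal Python sketch of how four CV readings and a gate signal might be turned into inputs for a descriptor-based model. It is not taken from the Neurorack code: the voltage range, the gate threshold, the descriptor names, and the helpers `normalize_cv`, `GateInput`, and `read_controls` are all assumptions made for illustration, and the actual hardware reads its inputs through its own Python libraries.

```python
from dataclasses import dataclass
from typing import Dict, Sequence

# Assumed CV range in volts (hypothetical; the real range depends on the
# module's input circuitry and ADC).
CV_MIN_V = 0.0
CV_MAX_V = 5.0

# Assumed voltage above which a gate counts as "high" (hypothetical).
GATE_THRESHOLD_V = 2.5

# Hypothetical descriptor names, one per CV input. The real model's
# descriptors may differ.
DESCRIPTORS = ("loudness", "brightness", "noisiness", "decay")


def normalize_cv(volts: float) -> float:
    """Clamp a CV reading to the assumed range and scale it to [0, 1]."""
    clamped = min(max(volts, CV_MIN_V), CV_MAX_V)
    return (clamped - CV_MIN_V) / (CV_MAX_V - CV_MIN_V)


@dataclass
class GateInput:
    """Tracks one gate input and reports low-to-high transitions."""

    high: bool = False

    def rising_edge(self, volts: float) -> bool:
        now_high = volts >= GATE_THRESHOLD_V
        fired = now_high and not self.high
        self.high = now_high
        return fired


def read_controls(cv_volts: Sequence[float]) -> Dict[str, float]:
    """Map four raw CV voltages to named descriptor values in [0, 1]."""
    if len(cv_volts) != len(DESCRIPTORS):
        raise ValueError(f"expected {len(DESCRIPTORS)} CV values")
    return {name: normalize_cv(v) for name, v in zip(DESCRIPTORS, cv_volts)}


# Simulated readings: (four CV voltages, gate voltage) per control tick.
gate = GateInput()
ticks = [
    ([1.0, 2.5, 5.0, 7.0], 0.0),
    ([1.0, 2.5, 5.0, 7.0], 5.0),  # gate goes high: trigger a sound
    ([1.0, 2.5, 5.0, 7.0], 5.0),  # gate held high: no new trigger
]
for cv_volts, gate_volts in ticks:
    if gate.rising_edge(gate_volts):
        # In a real module, these values would condition the generative model.
        print(read_controls(cv_volts))
```

Triggering only on the rising edge of the gate mirrors how a conventional drum module responds to a trigger pulse, which fits the goal of behaving like a traditional synthesizer.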

The Eurorack hardware and software were developed with equal contributions from Ninon Devis, Philippe Esling, and Martin Vert on the ACIDS team. According to their website, ACIDS is “hell-bent on trying to model musical creativity by developing innovative artificial intelligence models.”

The project code and hardware design are free, open-source, and available in their GitHub repository.

The team hopes to make the project accessible both to musicians and to people interested in AI and embedded computing.

“We hope that this project will raise the interest of both communities! Right now reproducing the project is slightly technical, but we will be working on simplifying the deployment and hopefully finding other weird people like us,” Devis said. “We strongly believe that one of the key aspects in developing machine learning models for music will lead to the empowerment of creative expression, even for nonexperts.”

More detail on the science behind this project is available on their website and in their academic paper.

Two of the team members, Devis and Esling, have formed a band using the instruments they developed. They are currently working on a full-length live act that will feature the Neurorack, and they plan to perform at the next SynthFest in France this April.

Sign up now for Jetson Developer Day, taking place at NVIDIA GTC on March 21. This full-day event, led by world-renowned experts in robotics, edge AI, and deep learning, will give you a unique deep dive into building next-generation AI-powered applications and autonomous machines.