How can you tell if your Jupyter instance is secure? The NVIDIA AI Red Team has developed a JupyterLab extension to automatically assess the security of Jupyter environments. jupysec evaluates the user’s environment against almost 100 rules. These rules detect configurations and artifacts that the AI Red Team has identified as potential vulnerabilities, attack vectors, or indicators of compromise.

NVIDIA AI Red Team and Jupyter

The NVIDIA AI Red Team proactively assesses the security of NVIDIA AI products and development pipelines. During its operations, the team frequently encounters software from the Jupyter ecosystem, a powerful and flexible set of tools used by many machine learning (ML) researchers and engineers. The AI Red Team identified Jupyter configurations and functionality that could be used to extend access, gain persistence, or manipulate development runtime and artifacts.

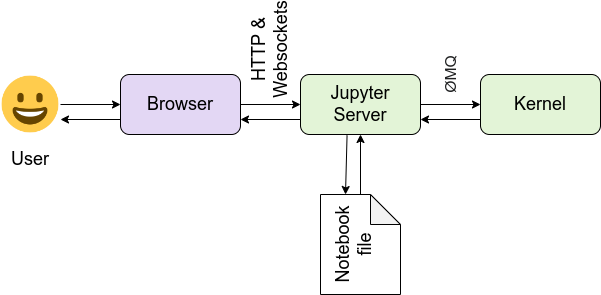

The Jupyter ecosystem consists of many interconnected components designed to execute Julia, Python, or R code in a client-server model. Usually, users interact with code in a browser-based interactive development environment. That code is dispatched over HTTP/S and WebSockets to a Jupyter server that may be running locally, remotely on premises, or in the cloud. The Jupyter server then dispatches code for execution in kernels through a message queue. See Figure 1 and the Jupyter architecture documentation for more information.

While extremely flexible, this modular architecture provides threat actors with multiple opportunities to impact the machine learning development cycle and derivative systems. For instance, with access to a client application like JupyterLab, they may be able to dispatch commands to the server in the context of the authenticated user. In the words of the Jupyter team, when multiple users access the same notebook server, their “commands may collide, clobber, and overwrite each other.” For more details, see Running a Notebook Server.

Similarly, with access to servers and kernels, threat actors may be able to interact with user runtimes without any access to the user’s host machine. Note that JupyterLab is only intended for one user. The official multi-user solution is JupyterHub, according to Running a Notebook Server.

With its modularity and wide range of use cases, the Jupyter ecosystem has several configuration files and values to enable customization. Jupyter developers and contributors have worked to balance security and usability with default values and security-related functionality and configurations.

However, it is possible for users to inadvertently introduce security vulnerabilities. Moreover, with sufficient access these configurations can be maliciously changed by threat actors to impact the ML process. Some examples of techniques from the NVIDIA AI Red Team include:

1. By default, when starting Jupyter Server (either independently or as part of a JupyterLab instantiation), the server only listens for requests from localhost. However, by modifying either a configuration value or the command-line arguments used to launch the process, the server can be made to listen on other interfaces, making it reachable from the rest of the broadcast domain. Users may do this deliberately to reach their server over the network and, in doing so, unintentionally expose it to malicious access.

2. Jupyter has a check to prevent cross-site request forgeries (CSRF) enabled by default. This prevents users from unknowingly submitting attacker-controlled code to the Jupyter server. However, a threat actor can disable this check in the configuration file, exposing the user to CSRF.

3. With sufficient access and credential material, an attacker can attach their own Jupyter client to a user’s running kernel. This means that they could create variables and functions, overwrite imports with malicious clones, and otherwise run arbitrary code in the same context as the user. Threat actors successfully targeting Jupyter deployments are likely to have training-time access and could therefore significantly impact the efficacy of the final AI system.
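The first two techniques above come down to single configuration values, so they can be spotted with a simple text scan. The following is a minimal sketch, not the actual jupysec rule set: the pattern names and the `scan_config_text` helper are hypothetical, and it assumes Python-style config files that set the `ip` and `disable_check_xsrf` traits on `ServerApp` or the older `NotebookApp`.

```python
import re

# Illustrative patterns only; these are NOT the rules shipped with jupysec.
RISKY_PATTERNS = {
    "listens on all interfaces": re.compile(
        r"^\s*c\.(?:ServerApp|NotebookApp)\.ip\s*=\s*['\"](?:0\.0\.0\.0|\*|::)['\"]"
    ),
    "XSRF check disabled": re.compile(
        r"^\s*c\.(?:ServerApp|NotebookApp)\.disable_check_xsrf\s*=\s*True\b"
    ),
}


def scan_config_text(text):
    """Return (line_number, label, line) tuples for lines matching a risky pattern."""
    findings = []
    for number, line in enumerate(text.splitlines(), start=1):
        for label, pattern in RISKY_PATTERNS.items():
            # Patterns are anchored at line start, so commented-out lines are skipped.
            if pattern.search(line):
                findings.append((number, label, line.strip()))
    return findings


if __name__ == "__main__":
    sample = "\n".join([
        "c = get_config()",
        "c.ServerApp.ip = '0.0.0.0'",
        "# c.ServerApp.disable_check_xsrf = True",
        "c.ServerApp.disable_check_xsrf = True",
    ])
    for number, label, line in scan_config_text(sample):
        print(f"line {number}: {label}: {line}")
```

A text scan like this only sees values written literally in a config file. Values passed as command-line arguments or computed at load time would need a different check.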

To summarize, risky configuration values could be intentional, unintentional, or the result of malicious activity. With the modular nature of the Jupyter ecosystem, the values controlling the application’s behavior may be dispersed across a dozen configuration files and command-line utilities. How can you identify and triage them, and take action if necessary?
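As a first triage step, it can help to enumerate which configuration files exist at all. The sketch below assumes the caller supplies the directories to search (the `jupyter --paths` command prints the ones Jupyter actually uses). The `find_config_files` helper and the file name patterns are illustrative assumptions, not part of jupysec.

```python
from pathlib import Path

# File name patterns are an assumption for illustration; Jupyter applications
# conventionally read files such as jupyter_server_config.py or
# jupyter_notebook_config.json from each config directory.
CONFIG_GLOBS = ("jupyter_*config.py", "jupyter_*config.json")


def find_config_files(config_dirs):
    """Return sorted config file paths found directly inside the given directories."""
    found = set()
    for directory in map(Path, config_dirs):
        if not directory.is_dir():
            continue  # Search paths often include directories that do not exist.
        for pattern in CONFIG_GLOBS:
            found.update(p for p in directory.glob(pattern) if p.is_file())
    return sorted(found)


if __name__ == "__main__":
    # Typical Unix locations, used here only as an example.
    for path in find_config_files([Path.home() / ".jupyter", "/etc/jupyter"]):
        print(path)
```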

Using jupysec

jupysec is a set of Jupyter security rules and a JupyterLab extension designed to audit Jupyter environments against known security risks. It is available as a standalone script or JupyterLab extension to maximize the user’s ability to integrate the tool into existing workflows.

As shown in Figure 2, the extension adds a Security Report widget to the Launcher. This is the client-side component of the extension running in a user’s browser. When users click on this widget, the Jupyter client dispatches an HTTP GET request to the Jupyter server. The server validates that the request is coming from an authenticated user, executes the jupysec rules, and renders and returns any findings to the user.

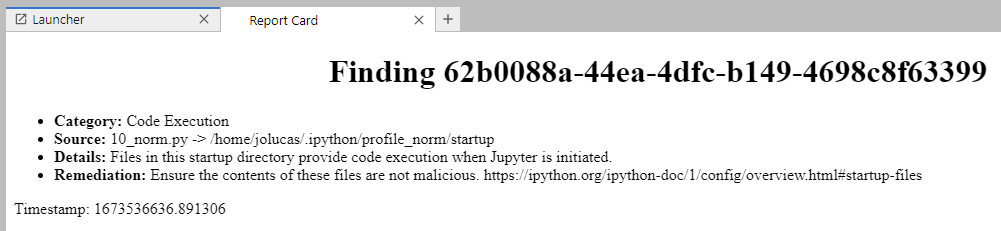

Each jupysec finding consists of a category, the file in which the issue was found, the offending line, additional details about why that configuration represents a security risk, and a recommended remediation. Each finding is given a UUID and rendered to the user through a Jinja template. jupysec does not maintain state, so each execution re-evaluates the entire rule set.
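The shape of a finding described above could be modeled roughly as follows. This is a sketch under stated assumptions: the `Finding` class, its field names, and the `render_text` helper are hypothetical and do not reflect jupysec’s internal data model, and plain-text rendering stands in for the Jinja template.

```python
import uuid
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Finding:
    """One audit result; field names are hypothetical, not jupysec's own."""
    category: str
    source_file: str
    line: str
    details: str
    remediation: str
    # A fresh identifier per finding, generated when the object is created.
    finding_id: str = field(default_factory=lambda: str(uuid.uuid4()))


def render_text(finding):
    """Render a finding as plain text (jupysec itself uses a Jinja template)."""
    return (
        f"[{finding.category}] {finding.source_file}\n"
        f"  line: {finding.line}\n"
        f"  why: {finding.details}\n"
        f"  fix: {finding.remediation}\n"
        f"  id: {finding.finding_id}"
    )
```

Because nothing is persisted between runs, identifiers generated this way differ on every execution, which matches the stateless behavior described above.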

Figure 3 shows a jupysec finding for potential code execution: a file called 10_norm.py in an IPython startup directory. This script will run whenever a kernel is started with that profile, as documented by IPython and demonstrated in IPython Profiles: Big Bag o’ Functionality. The finding was generated from a specific jupysec rule.
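A check in the spirit of this rule can be sketched in a few lines. The `list_startup_scripts` helper below is hypothetical and is not the jupysec rule itself. It assumes the standard IPython layout, in which startup files live under `profile_<name>/startup` inside the IPython directory (`~/.ipython` by default).

```python
from pathlib import Path


def list_startup_scripts(ipython_dir, profile="default"):
    """Return startup scripts for a profile in the order IPython would run them."""
    startup_dir = Path(ipython_dir) / f"profile_{profile}" / "startup"
    if not startup_dir.is_dir():
        return []
    scripts = [
        p for p in startup_dir.iterdir()
        if p.is_file() and p.suffix in {".py", ".ipy"}
    ]
    # IPython runs startup files in lexicographical order of their names.
    return sorted(scripts, key=lambda p: p.name)


if __name__ == "__main__":
    for script in list_startup_scripts(Path.home() / ".ipython"):
        print(f"runs at kernel start: {script}")
```

Any file this returns deserves a manual review, since its presence alone does not indicate whether it is benign.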

jupysec will not remediate the findings for the user, as this would almost certainly introduce a breaking change to the environment. Like any automated security tool, jupysec may produce false positives. For example, in the finding shown in Figure 3, the startup directory could be used to improve the developer experience by automatically connecting to remote data storage.

However, that same configuration change could also be used to exfiltrate sensitive information. jupysec cannot tell the difference, but can alert you to the potential issue. After eliminating false positives, users and administrators should take the recommended steps to harden the environment or investigate anomalous indicators.

Summary

The Jupyter ecosystem is extremely powerful and configurable, which makes it an attractive tool for researchers and developers as well as threat actors. jupysec can automatically assess the security of your Jupyter environments.

The NVIDIA AI Red Team will continue researching Jupyter security and encoding knowledge and techniques in the jupysec rules. Whether your Jupyter deployment is local or hosted in the cloud, give jupysec a try using `pip install jupysec[jupyterlab]`. Visit jupysec on GitHub to share your feedback and contributions as issues.
