NVIDIA Isaac GEMs for ROS provides a set of GPU-accelerated packages for your ROS2 application, improving throughput on image processing and DNN-based perception models. These ROS2 packages are built for ROS2 Foxy, a Long-Term Support (LTS) release from the robotics community.

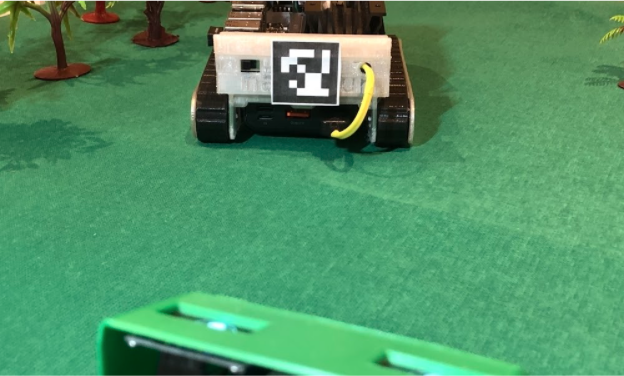

This post investigates how you can accelerate your robot’s deployment by implementing NVIDIA Isaac ROS GEMs. The focus of this post is AprilTags detection using nanosaur, a simple open-source robot based on the NVIDIA Jetson platform.

Before going into detail on this application, here is the history of ROS, NVIDIA Isaac GEMs, and how nanosaur is built.

History of ROS and ROS2

Willow Garage developed the Robot Operating System (ROS) in 2007. In 2012, the framework was handed over to the newly founded Open Robotics foundation, which continues to maintain its development. At first, this framework was primarily used by the robotics research community. Eventually, it gained popularity among the broader developer community, including robotics makers and companies.

In 2015, the ROS community recognized the framework's weaknesses for production use: a design centered on a single master process (roscore), a lack of security, weak real-time support, and other core issues. At that point, the community started to lay the foundation of the second generation of ROS, redesigning it for both the research community and companies with an eye on security, intra-communication, and reliability.

ROS2 is becoming the standard robotics distribution now that the last official ROS release (Noetic) is out, and support from the community has been growing since the first LTS release.

nanosaur

nanosaur is a simple open-source robot based on NVIDIA Jetson. The robot is fully 3D printable, can wander around your desk autonomously, and uses a simple camera and two OLED displays that act as a pair of eyes. It measures a compact 10 x 12 x 6 cm and weighs only 500 g.

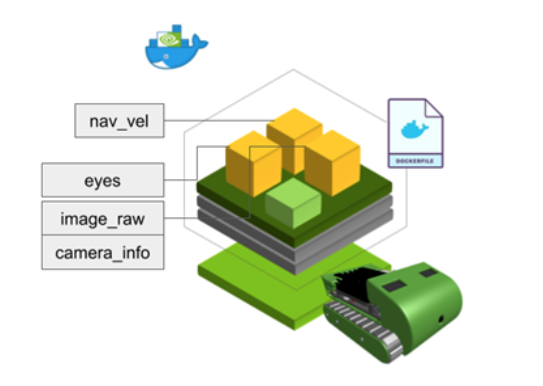

nanosaur’s hardware is similar to that of NVIDIA JetBot, using two I2C OLED displays and sharing the same I2C motor driver. However, nanosaur’s software is developed directly on ROS2 and is entirely GPU accelerated and Docker based.

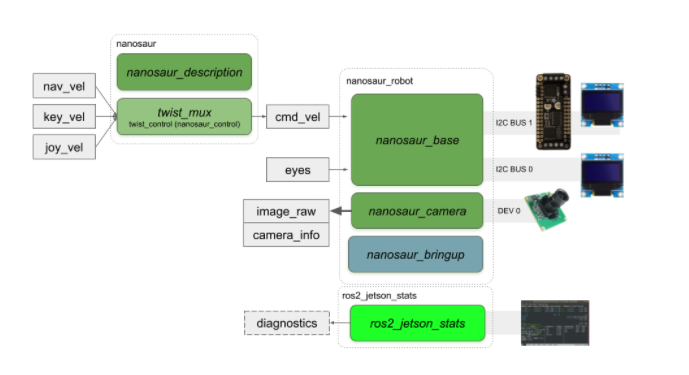

nanosaur has many nodes to drive and show the status of the robot. All nodes are arranged by package.

- nanosaur_base
  - nanosaur_base enables the motor controller and the displays.
  - joy2eyes converts a joystick message to an eyes topic. This node is useful when you want to test the eyes topic.

- nanosaur_camera
  - nanosaur_camera runs the camera streamer from the MIPI camera to a ROS2 topic.

- ros2_jetson_stats
  - ros2_jetson_stats is a wrapper of the jetson-stats package for monitoring and controlling your NVIDIA Jetson (Xavier NX, Jetson AGX Xavier, Nano, TX1, or TX2).

For more information, see the main nanosaur GitHub repository.

Here is the usual ROS2 graph when you start up the nanosaur.

nanosaur is built on top of the NVIDIA Jetson ROS Foxy Docker image. There is also support for ROS2 Galactic, ROS2 Eloquent, ROS Melodic, and ROS Noetic, with AI frameworks such as PyTorch, TensorRT, and DeepStream SDK.

ROS2 Foxy is compiled and used in nanosaur_camera together with jetson-utils to speed up camera access.

A set of topics is available when nanosaur is running, such as the image_raw topic, the eyes topic to move the eyes drawn on the displays, and the navigation command to drive the robot.

NVIDIA Isaac GEMs for ROS

Simplifying the deployment of GPU-accelerated algorithms is the core purpose of these new ROS2 packages. These packages are open source for the robotics community, making it possible to offload the CPU and improve your robot's capabilities by running AI and robotics perception directly on the GPU. All these GEMs target ROS2 Foxy and run on NVIDIA GPUs.

NVIDIA Isaac GEMs provide hardware-accelerated robotics capabilities as ROS packages and integrate with the ROS2 middleware as native nodes that can be combined with other ROS packages. NVIDIA Isaac GEMs for ROS are distributed for x86_64/dGPU (Ubuntu 20.04) and Jetson Xavier NX/AGX Xavier with the latest NVIDIA JetPack 4.6 distribution.

The new NVIDIA Isaac GEMs for ROS include:

- isaac_ros_common

- isaac_ros_image_pipeline

- isaac_ros_apriltag

- isaac_ros_dnn_inference (new)

- isaac_ros_visual_odometry (new)

- isaac_ros_argus_camera (new)

AprilTag

An AprilTag is a visual fiducial marker, similar in appearance to a QR code, that is optimized for a camera to decode quickly and read from a long distance. These tags serve as fiducials that guide a robot or a manipulator to start an action or complete a job from a specific point. They are also used in augmented reality to calibrate the odometry of the headset. These tags are available in many families, and all are simple to print with a desktop printer.

The ROS2 AprilTag package uses the NVIDIA GPU to accelerate detection in an image and publishes the pose, ID, and other metadata. This package is comparable to the ROS2 node for CPU AprilTag detection.

Package dependencies are:

- isaac_ros_common

- isaac_ros_image_pipeline

- image_common

- vision_cv

- OpenCV 4.5+

After getting familiar with the tutorial available in the repository, you can define and configure the package in your ROS2 robotics project.

Usually, a pipeline starts from the stream coming out of a camera or a stereo camera, which publishes two topics: the raw camera stream and camera_info, which carries the calibration and configuration of that stream.

After this step, you can accelerate your ROS2 application using ros_image_proc to rectify the image and, optionally, to estimate the pose of the tag and its corners.

isaac_ros_apriltag publishes a ROS2 topic with an array of the AprilTags detected in the stream. Each detection carries several fields, such as the center in the camera image, all corners, the ID, and the pose. By default, the topic is called /tag_detections. Here is an example of a tag_detections message.

    ---
    header:
      stamp:
        sec: 1631573373
        nanosec: 24552192
      frame_id: camera_color_optical_frame
    detections:
    - family: 36h11
      id: 0
      center:
        x: 779.4064331054688
        y: 789.7901000976562
        z: 0.0
      corners:
      - x: 614.0
        y: 592.0
        z: 0.0
      - x: 971.0
        y: 628.0
        z: 0.0
      - x: 946.0
        y: 989.0
        z: 0.0
      - x: 566.0
        y: 970.0
        z: 0.0
      pose:
        header:
          stamp:
            sec: 0
            nanosec: 0
          frame_id: ''
        pose:
          pose:
            position:
              x: -0.08404197543859482
              y: 0.11455488204956055
              z: 0.6107800006866455
            orientation:
              x: -0.10551299154758453
              y: -0.10030339658260345
              z: 0.04563025385141373
              w: 0.9882935285568237
          covariance: [0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0]
    ---

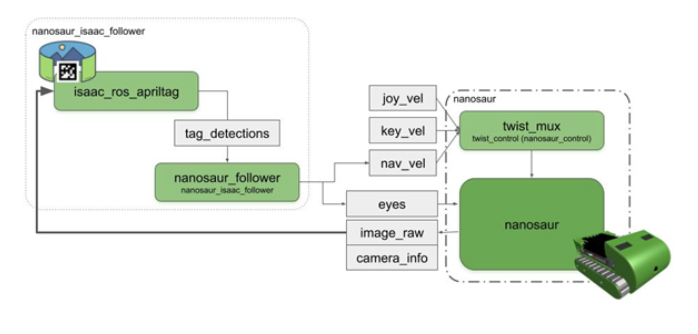
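To make the message layout more concrete, here is a minimal Python sketch that takes the position reported in the example message and derives the straight-line distance and the horizontal bearing of the tag. The dictionary and the `distance_and_bearing` helper are illustrative and not part of isaac_ros_apriltag. The sketch also assumes that the pose is expressed in meters in the camera optical frame (x to the right, y down, z forward), as the top-level frame_id suggests.

```python
import math

# Subset of one entry of the detections array, copied from the example message.
detection = {
    "family": "36h11",
    "id": 0,
    "position": {
        "x": -0.08404197543859482,
        "y": 0.11455488204956055,
        "z": 0.6107800006866455,
    },
}


def distance_and_bearing(position):
    """Return (distance in meters, bearing in radians) of the tag.

    Assumes the optical-frame convention: x right, y down, z forward.
    A negative bearing means the tag is to the left of the optical axis.
    """
    x, y, z = position["x"], position["y"], position["z"]
    distance = math.sqrt(x * x + y * y + z * z)
    bearing = math.atan2(x, z)
    return distance, bearing


distance, bearing = distance_and_bearing(detection["position"])
print(
    f"tag {detection['id']}: {distance:.2f} m away, "
    f"bearing {math.degrees(bearing):.1f} deg"
)
```

In a real node, the same fields would be read from the ROS2 message object instead of a dictionary.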

nanosaur and AprilTag detection

The nanosaur_follower node is initialized with a configuration file that sets the PID gains, the AprilTag ID to follow, and other parameters. In the main loop, this node decodes the messages coming from isaac_ros_apriltag and, when the selected tag appears in the camera stream, begins to follow it, generating a linear speed and a twist.

In Figure 8, for each frame, isaac_ros_apriltag generates a new tag detection output and the nanosaur_follower node uses it to drive the robot.
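The tag selection step of the main loop described above can be sketched in plain Python as follows. The `FollowerConfig` fields, the `select_target` helper, and the dictionary layout are hypothetical stand-ins for the real configuration file and ROS2 message. Only the idea of filtering the detection array by the configured tag ID comes from the description.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class FollowerConfig:
    """Hypothetical follower parameters; names and values are illustrative."""

    tag_id: int = 0          # AprilTag ID the robot should follow
    kp_angular: float = 1.0  # example gain for the steering controller
    kp_linear: float = 0.5   # example gain for the speed controller


def select_target(detections: list, config: FollowerConfig) -> Optional[dict]:
    """Return the detection whose ID matches the configured tag, if any."""
    for detection in detections:
        if detection["id"] == config.tag_id:
            return detection
    return None


config = FollowerConfig(tag_id=0)
frame_with_tag = [{"id": 3}, {"id": 0}]
frame_without_tag = [{"id": 3}]

print(select_target(frame_with_tag, config))     # detection with id 0
print(select_target(frame_without_tag, config))  # None: no tag to follow
```

Returning `None` when the tag is absent keeps the decision of what to do without a target, such as stopping the motors, in the caller.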

In this case, nanosaur, whose kinematic model can be approximated to first order by a unicycle, can follow a tag using two decoupled PID controllers. In Figure 9, the first controller (A) drives to zero the error between the center of the AprilTag and the vertical center line of the image. This error drives the angular part of the ROS2 twist message. At the same time, a second error, derived from the distance between the camera and the tag, drives the robot's linear speed (B).

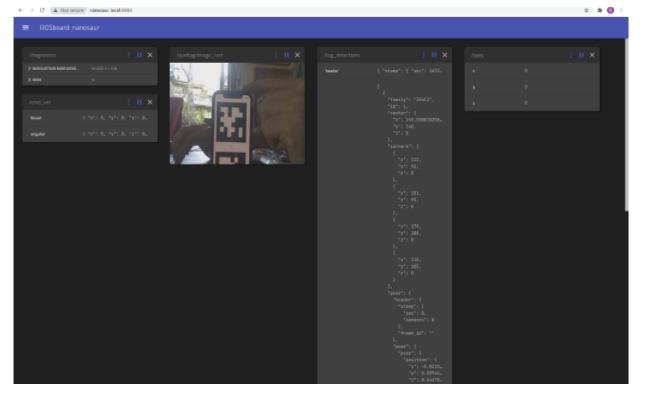
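The two decoupled controllers can be sketched as below. This is a minimal illustration, not the nanosaur_follower implementation: the `PID` class, the gains, the image width, and the target distance are all assumptions chosen for the example. Controller A turns the horizontal pixel error into an angular speed, and controller B turns the distance error into a linear speed.

```python
class PID:
    """Minimal textbook PID controller."""

    def __init__(self, kp, ki=0.0, kd=0.0):
        self.kp, self.ki, self.kd = kp, ki, kd
        self.integral = 0.0
        self.previous_error = None

    def update(self, error, dt):
        """Return the control output for the given error and time step."""
        self.integral += error * dt
        derivative = 0.0
        if self.previous_error is not None:
            derivative = (error - self.previous_error) / dt
        self.previous_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative


# Assumed values, for illustration only.
IMAGE_WIDTH = 1280.0    # pixels
TARGET_DISTANCE = 0.5   # meters to keep between the camera and the tag
steering = PID(kp=1.5)  # controller A: centers the tag horizontally
speed = PID(kp=0.8)     # controller B: holds the target distance


def follow_step(tag_center_x, tag_distance, dt=0.1):
    """Return (linear, angular) speeds for one camera frame."""
    # Error A: offset from the vertical center line, normalized to [-1, 1].
    # A tag on the right gives a negative error, that is, a clockwise turn,
    # because positive angular z is counter-clockwise in ROS.
    half_width = IMAGE_WIDTH / 2.0
    angular_error = (half_width - tag_center_x) / half_width
    # Error B: positive when the tag is farther away than desired.
    linear_error = tag_distance - TARGET_DISTANCE
    return speed.update(linear_error, dt), steering.update(angular_error, dt)


linear, angular = follow_step(tag_center_x=960.0, tag_distance=0.61)
print(f"linear={linear:.3f} m/s, angular={angular:.3f} rad/s")
```

Keeping the two loops independent means each gain set can be tuned separately, which fits the unicycle approximation where forward speed and turn rate are separate inputs.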

Real-time web interface

nanosaur offers a second Docker image in which all topics and the camera stream are available through a web interface, so you can see in real time what is happening while the robot is moving. Figure 10 shows an example of the user interface.

Summary

In this post, I discussed how to accelerate robot deployment with NVIDIA Isaac ROS GEMs. The solution focused on AprilTags detection using nanosaur, a simple open-source robot based on the NVIDIA Jetson platform.

For more information, download the Isaac ROS nanosaur integration from GitHub.