Deploying AI-powered services like voice-based assistants, e-commerce product recommendations, and contact-center automation into production at scale is challenging. Delivering the best end-user experience while reducing operational costs requires accounting for multiple factors. These include the composition and performance of the underlying infrastructure, the flexibility to scale resources based on user demand, cluster management overhead, and security.

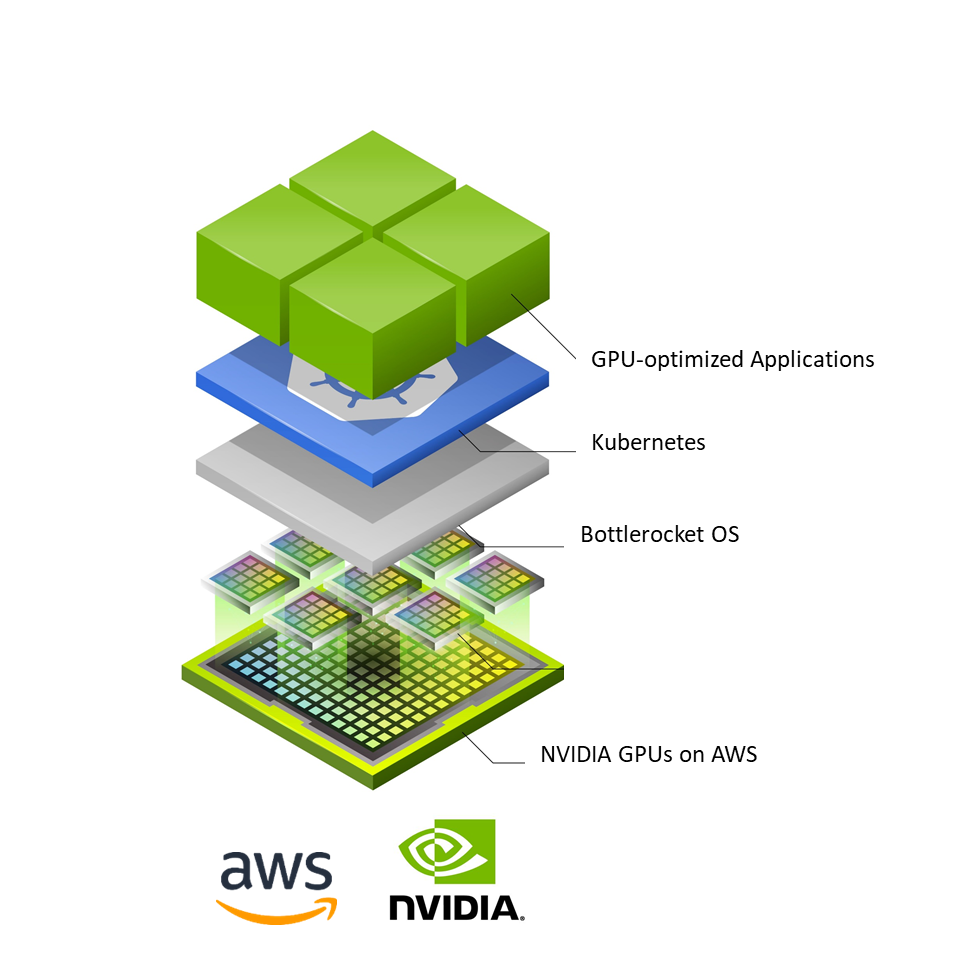

To address the challenges of deploying AI at scale, enterprise IT teams have adopted Kubernetes (K8s) for container orchestration and NVIDIA accelerated computing to meet the performance needs of production AI deployments. In addition, there’s a growing focus on the role of the operating system (OS) in production infrastructure. The host OS of the production environment directly affects security, resource utilization, and the time it takes to provision and scale additional resources. This in turn influences the user experience and the cost of deployments as user demand increases.

Bottlerocket: a Linux-based container-optimized OS

Bottlerocket is a minimal, Linux-based, open-source OS developed by AWS that is purpose-built for running containers. With a strong emphasis on security, it includes only the software essential for running containers.

This reduces the attack surface and impact of vulnerabilities, requiring less effort to meet node compliance requirements. In addition, the minimal host footprint of Bottlerocket helps improve node resource usage and boot times.

Updates to Bottlerocket are applied in a single step and can be rolled back if necessary. This results in lower error rates and improved uptime for container applications. Updates can also be automated using container orchestration services such as Amazon Elastic Kubernetes Service (EKS) and Amazon Elastic Container Service (ECS).

Use Bottlerocket with Amazon EC2 instances powered by NVIDIA GPUs

AWS and NVIDIA have collaborated to enable Bottlerocket to support all NVIDIA-powered Amazon EC2 instances including P4d, P3, G4dn, and G5. This support combines the computational power of NVIDIA-powered GPU instances with the benefits of a container-optimized OS for deploying AI models on K8s clusters at scale.

The result is enhanced security and faster boot times, which matter most when AI workloads scale out to additional GPU-based instances in real time.

Support for NVIDIA GPUs is delivered in the form of the Bottlerocket GPU-optimized AMI. This includes NVIDIA drivers, a K8s GPU device plugin, and the containerd runtime, all built into the base image.
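As a minimal sketch of how the GPU-optimized AMI might be located programmatically: Bottlerocket publishes AMI IDs as public AWS Systems Manager (SSM) parameters. The example assumes the `aws-k8s-<version>-nvidia` variant naming used by the Bottlerocket project; the K8s version and Region shown are placeholders, and the available variants should be checked against the current Bottlerocket documentation. The function names are hypothetical helpers, not part of any AWS or NVIDIA library.

```python
def bottlerocket_nvidia_ami_parameter(k8s_version: str, arch: str = "x86_64") -> str:
    """Build the SSM parameter name for the latest Bottlerocket NVIDIA AMI.

    Assumes the variant naming pattern aws-k8s-<version>-nvidia.
    """
    return (
        f"/aws/service/bottlerocket/aws-k8s-{k8s_version}-nvidia/"
        f"{arch}/latest/image_id"
    )


def lookup_ami_id(k8s_version: str, region: str, arch: str = "x86_64") -> str:
    """Resolve the AMI ID from SSM. Requires boto3 and AWS credentials."""
    import boto3  # imported lazily so the helper above works without boto3

    ssm = boto3.client("ssm", region_name=region)
    name = bottlerocket_nvidia_ami_parameter(k8s_version, arch)
    return ssm.get_parameter(Name=name)["Parameter"]["Value"]


if __name__ == "__main__":
    # Placeholder version; prints the parameter name without calling AWS.
    print(bottlerocket_nvidia_ami_parameter("1.21"))
```

Calling `lookup_ami_id("1.21", "us-west-2")` with valid credentials would return the AMI ID for that Region, which can then be used in a launch template for GPU instances.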

The AMI provides everything needed to provision self-managed nodes running Bottlerocket on NVIDIA-powered GPU instances and register them with an Amazon EKS cluster.
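To illustrate the registration step, a self-managed Bottlerocket node is typically told which cluster to join through TOML user data supplied at instance launch. The sketch below renders that user data, assuming the `settings.kubernetes` keys described in the Bottlerocket documentation. The function name and all values are hypothetical placeholders; the real endpoint and certificate come from your own EKS cluster.

```python
def bottlerocket_user_data(cluster_name: str, api_server: str, cluster_ca_b64: str) -> str:
    """Render Bottlerocket TOML user data for joining an EKS cluster.

    cluster_ca_b64 is the base64-encoded cluster certificate authority data.
    """
    return (
        "[settings.kubernetes]\n"
        f'cluster-name = "{cluster_name}"\n'
        f'api-server = "{api_server}"\n'
        f'cluster-certificate = "{cluster_ca_b64}"\n'
    )


if __name__ == "__main__":
    # Placeholder values for illustration only.
    print(
        bottlerocket_user_data(
            cluster_name="my-gpu-cluster",
            api_server="https://EXAMPLE.eks.amazonaws.com",
            cluster_ca_b64="BASE64_CA_DATA",
        )
    )
```

The rendered string would be passed as the instance user data when launching the GPU node, for example through a launch template.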

You can also use NVIDIA-optimized software from the NVIDIA NGC Catalog on AWS Marketplace, a hub for pretrained models, scripts, Helm charts, and a wide array of AI and HPC software.

For AI inference deployments on AWS, you can leverage the NVIDIA Triton Inference Server. Use the open-source inference serving software to deploy trained AI models from many frameworks including TensorFlow, TensorRT, PyTorch, ONNX, XGBoost, and Python on any GPU or CPU infrastructure.
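As a rough sketch of what calling a deployed model can look like, Triton exposes an HTTP/REST inference endpoint of the form `/v2/models/<model>/infer` that accepts a JSON body describing the input tensors. The model name, input name, shape, and server URL below are hypothetical and depend on the model's own configuration; the helper functions are illustrative, not part of the Triton client library.

```python
import json
import urllib.request


def build_infer_request(input_name: str, shape, datatype: str, data) -> dict:
    """Build a JSON-serializable inference request with a single input tensor."""
    return {
        "inputs": [
            {
                "name": input_name,
                "shape": list(shape),
                "datatype": datatype,
                "data": list(data),
            }
        ]
    }


def infer(base_url: str, model_name: str, payload: dict) -> dict:
    """POST the request to a running Triton server and return the parsed reply."""
    request = urllib.request.Request(
        f"{base_url}/v2/models/{model_name}/infer",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read())


if __name__ == "__main__":
    # Hypothetical tensor; only builds and prints the payload, no server needed.
    payload = build_infer_request("INPUT0", [1, 4], "FP32", [1.0, 2.0, 3.0, 4.0])
    print(json.dumps(payload, indent=2))
```

With a server reachable at, say, `http://localhost:8000`, `infer("http://localhost:8000", "my_model", payload)` would send the request; the response format depends on the model's declared outputs.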

Learn more about the Bottlerocket support for NVIDIA GPUs from AWS.