To help developers and AI researchers use mixed precision in their deep learning training workflows, NVIDIA researchers will present a hands-on workshop at the International Conference on Computer Vision (ICCV) on combining single- and half-precision arithmetic during training.

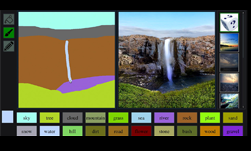

NVIDIA researcher Ming-Yu Liu, one of the developers behind NVIDIA GauGAN, the viral AI tool that uses GANs to convert segmentation maps into lifelike images, will share how he and his team used automatic mixed precision to train their model on millions of images, cutting training time from 21 days to 13 days, a reduction of nearly 40 percent.

Mixed precision training uses half-precision (FP16) arithmetic to speed up training and can match the accuracy of single-precision (FP32) training with the same hyperparameters. Because an FP16 value takes half the memory of an FP32 value, mixed precision also reduces memory requirements, allowing larger models and minibatches.
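As a rough illustration of the memory savings, the sketch below computes the size of one hypothetical activation tensor (batch 32, 256 channels, a 128×128 feature map; the shape is an assumption, not taken from GauGAN) at 4 bytes per FP32 element versus 2 bytes per FP16 element. How much memory a real training run saves depends on which tensors a given mixed precision mode keeps in FP32.

```python
import struct
from math import prod


def tensor_mib(shape, fmt):
    """Memory in MiB for a dense tensor whose elements use struct format `fmt`."""
    return prod(shape) * struct.calcsize(fmt) / 2**20


# Hypothetical activation tensor: batch 32, 256 channels, 128x128 feature map.
shape = (32, 256, 128, 128)
fp32 = tensor_mib(shape, "f")  # 4 bytes per element -> 512.0 MiB
fp16 = tensor_mib(shape, "e")  # 2 bytes per element -> 256.0 MiB
print(fp32, fp16)
```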

Enabling mixed precision involves two steps: porting the model to use the half-precision data type where appropriate, and using loss scaling to preserve small gradient values that would otherwise underflow to zero in FP16.
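Scaling the loss by a factor S multiplies every gradient by S through the chain rule, which shifts tiny gradients into the range FP16 can represent. The minimal sketch below applies that idea directly to a single hypothetical gradient value of 1e-8 with an assumed scale of 1024, using the standard library's `struct` half-precision format (`"e"`) to round values to FP16.

```python
import struct


def to_fp16(x: float) -> float:
    """Round x to the nearest IEEE 754 half-precision (FP16) value."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


grad = 1e-8     # hypothetical small gradient value
scale = 1024.0  # hypothetical static loss scale (a power of two)

# Without scaling, the gradient is too small for FP16 and becomes zero.
unscaled = to_fp16(grad)

# With scaling, the value survives in FP16; dividing by the scale in
# higher precision recovers (approximately) the original gradient.
recovered = to_fp16(grad * scale) / scale
print(unscaled, recovered)
```

Powers of two are a common choice for the scale because multiplying and dividing by them changes only the exponent, so the scaling itself adds no rounding error.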

At GTC 2019, we introduced an Automatic Mixed Precision feature for TensorFlow, which has already helped deep learning researchers and engineers speed up their training workflows. To learn more, this developer blog will help you get started and walk you through a ResNet-50 example in TensorFlow.

Automatic mixed precision is also available in PyTorch and MXNet. This developer blog will help you get started with PyTorch, and this page on NVIDIA's Developer Zone covers MXNet and the other supported frameworks.

In the case of GauGAN, Ming-Yu and his colleagues trained their model using mixed precision with PyTorch. Typically, this requires changing only two lines of code after you have imported the APEX library, which is available on GitHub.

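```python
from apex import amp

# `model`, `optimizer` and `loss` come from your existing training script.
# Prepare the model and optimizer for mixed precision; "O1" enables it.
model, optimizer = amp.initialize(model, optimizer, opt_level="O1")

# Replace loss.backward() with a backward pass on the scaled loss.
with amp.scale_loss(loss, optimizer) as scaled_loss:
    scaled_loss.backward()
```

Setting `opt_level="O1"` enables mixed precision. To compare mixed precision against FP32 results, set `opt_level="O0"`, which trains in pure FP32 and serves as the accuracy baseline.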
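`amp.scale_loss` multiplies the loss by a scale factor before backpropagation, and APEX can adjust that factor automatically. The sketch below shows the general idea of dynamic loss scaling in plain Python: skip the step and shrink the scale when gradients overflow, and grow the scale after a run of overflow-free steps. It is a simplified illustration, not APEX's implementation; the class `DynamicLossScaler`, the method `unscale_or_skip`, the initial scale of 2^16 and the 2,000-step growth interval are all hypothetical choices made for this example.

```python
import math
from dataclasses import dataclass, field


@dataclass
class DynamicLossScaler:
    """Illustrative dynamic loss scaling; a simplified sketch, not APEX's code."""

    scale: float = 2.0 ** 16     # hypothetical initial loss scale
    growth_interval: int = 2000  # hypothetical overflow-free steps before growing
    _clean_steps: int = field(default=0, init=False, repr=False)

    def unscale_or_skip(self, scaled_grads):
        """Return unscaled gradients, or None if this step must be skipped."""
        if not all(math.isfinite(g) for g in scaled_grads):
            # Overflow (inf/NaN): skip the update and halve the scale.
            self.scale /= 2.0
            self._clean_steps = 0
            return None
        # Undo the scaling before the optimizer uses the gradients.
        grads = [g / self.scale for g in scaled_grads]
        self._clean_steps += 1
        if self._clean_steps == self.growth_interval:
            # A run of overflow-free steps: try a larger scale next time.
            self.scale *= 2.0
            self._clean_steps = 0
        return grads


scaler = DynamicLossScaler(scale=8.0, growth_interval=2)
print(scaler.unscale_or_skip([float("inf"), 1.0]))  # None (scale drops to 4.0)
print(scaler.unscale_or_skip([2.0, 4.0]))           # [0.5, 1.0]
print(scaler.unscale_or_skip([2.0, 4.0]))           # [0.5, 1.0] (scale grows to 8.0)
```

Unscaling happens before the scale is grown, so each step divides by the same factor that was used to scale its loss.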

Ming-Yu and his team trained on NVIDIA V100 GPUs, which are designed to accelerate deep learning training.

On NVIDIA Volta and Turing GPUs, Tensor Cores multiply FP16 matrices and can accumulate the results in FP32, dramatically increasing throughput and reducing AI training times.
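Why accumulating in FP32 matters can be seen without a GPU. The sketch below is a scalar emulation in plain Python, not Tensor Core code: it rounds the inputs of a hypothetical 10,000-element dot product to FP16 with the standard library's `struct` module, then keeps the running sum either in FP16 or in FP32.

```python
import struct


def to_fp16(x: float) -> float:
    """Round x to the nearest IEEE 754 half-precision (FP16) value."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


def to_fp32(x: float) -> float:
    """Round x to the nearest IEEE 754 single-precision (FP32) value."""
    return struct.unpack("<f", struct.pack("<f", x))[0]


def dot_fp16_inputs(a, b, accumulate):
    """Dot product of FP16-rounded inputs; `accumulate` rounds the running sum."""
    total = 0.0
    for x, y in zip(a, b):
        # The product of two FP16 values is exact in Python's double precision.
        product = to_fp16(x) * to_fp16(y)
        total = accumulate(total + product)
    return total


# Hypothetical inputs: 10,000 products of 0.01 * 1.0 (true sum is about 100).
a = [0.01] * 10_000
b = [1.0] * 10_000

fp16_acc = dot_fp16_inputs(a, b, to_fp16)  # running sum kept in FP16
fp32_acc = dot_fp16_inputs(a, b, to_fp32)  # running sum kept in FP32
print(fp16_acc, fp32_acc)
```

The FP16 running sum stops growing once it reaches 32, where the gap between adjacent FP16 values (1/32) is more than twice the 0.01 being added, so each addition rounds back down. The FP32 sum stays close to the true value of about 100.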

If you are attending the conference, you can sign up for the workshop here.