Microsoft and NVIDIA have collaborated to build, validate and publish the ONNX Runtime Python package and Docker container for the NVIDIA Jetson platform, now available on the Jetson Zoo.

Today’s release of ONNX Runtime for Jetson extends the performance and portability benefits of ONNX Runtime to Jetson edge AI systems, allowing models from many different frameworks to run faster, using less power. You can convert models from PyTorch, TensorFlow, Scikit-Learn, and others to perform inference on the Jetson platform with ONNX Runtime.

ONNX Runtime optimizes models to take advantage of the accelerator that is present on the device. This capability delivers the best possible inference throughput across different hardware configurations using the same API surface for the application code to manage and control the inference sessions.

ONNX Runtime runs on hundreds of millions of devices, delivering over 20 billion inference requests daily.

Benefits of ONNX Runtime on Jetson

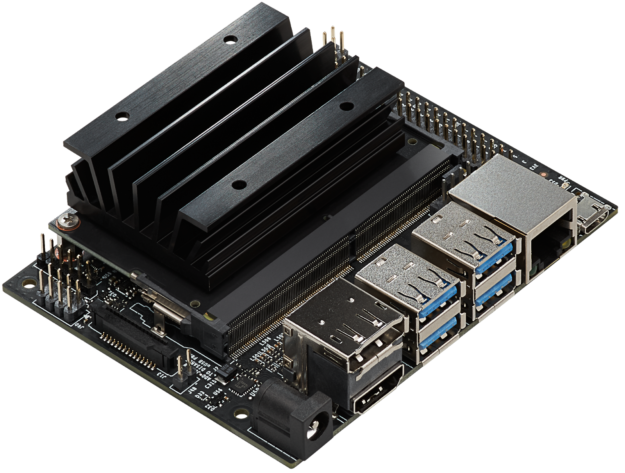

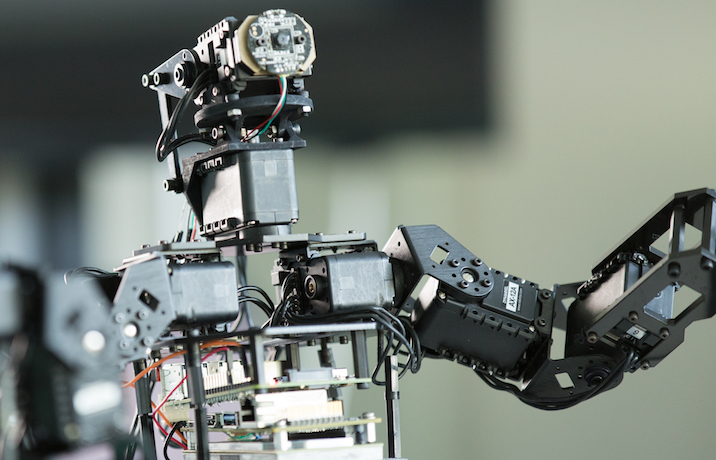

The full line-up of Jetson System-on-Modules (SOM) offers cloud-native support with unbeatable performance and power efficiency in a tiny form factor, effectively bringing the power of modern AI, deep learning, and inference to embedded systems at the edge. Jetson powers a range of applications from AI-powered network video recorders (NVRs) and automated optical inspection (AOI) in high-precision manufacturing to autonomous mobile robots (AMRs).

The full Jetson line-up is powered by the same software stack, supported by the NVIDIA JetPack SDK which includes a board support package (BSP), the Linux operating system, and user-level libraries for end-to-end AI pipeline acceleration:

- CUDA

- cudDNN

- TensorRT for accelerating AI inferencing

- cuBlas, cuFFT, and so on for accelerated computing

- Visionworks, OpenCV, and VPI for computer vision and image processing

- Libraries for camera ISP processing, multimedia, and sensor processing

This ONNX Runtime package takes advantage of the integrated GPU in the Jetson edge AI platform to deliver accelerated inferencing for ONNX models using CUDA and cuDNN libraries. You can also use ONNX Runtime with the TensorRT libraries by building the Python package from the source.

Focusing on developers

This release enables an easy integration path for you to use ONNX Runtime on the Jetson platform. You can integrate ONNX Runtime in your application code to run inference for the AI application on edge devices.

ML developers and IoT solution makers can use the pre-built Docker image to deploy AI applications on the edge or use the standalone Python package. The Jetson Zoo includes pointers to the ONNX Runtime packages and samples to get started.

The Docker image for ONNX Runtime on Jetpack4.4 is available in the Microsoft Container Registry:

docker pull mcr.microsoft.com/azureml/onnxruntime:v.1.4.0-jetpack4.4-l4t-base-r32.4.3

Alternatively, to use the Python package directly in your application, download and install it on your Jetson SOM:

wget https://nvidia.box.com/shared/static/8sc6j25orjcpl6vhq3a4ir8v219fglng.whl \ -O onnxruntime_gpu-1.4.0-cp36-cp36m-linux_aarch64.whl pip3 install onnxruntime_gpu-1.4.0-cp36-cp36m-linux_aarch64.whl

Inference applications with ONNX Runtime on Jetson

The Integrate Azure with machine learning execution on the NVIDIA Jetson platform (an ARM64 device) tutorial shows you how to develop an object detection application on your Jetson device, using the TinyYOLO model, Azure IoT Edge, and ONNX Runtime.

The IoT edge application running on the Jetson platform has a digital twin in the Azure cloud. The inference application code runs in a Docker container built from the integrated Jetson ONNX Runtime base image. The application reads frames from the camera, performs object detection, and sends the detected object results to cloud storage. From there, they can be visualized and further processed.

Sample objection detection code

You can develop your own application using the pre-built ONNX Runtime Docker image for Jetson.

Create a Dockerfile using the Jetson ONNX Runtime Docker image and add the application dependencies:

FROM mcr.microsoft.com/azureml/onnxruntime:v.1.4.0-jetpack4.4-l4t-base-r32.4.3 WORKDIR . RUN apt-get update && apt-get install -y python3-pip libprotobuf-dev protobuf-compiler python-scipy RUN python3 -m pip install onnx==1.6.0 easydict matplotlib CMD ["/bin/bash"]

Build a new image from the Dockerfile:

docker build -t jetson-onnxruntime-yolov4 .

Download the Yolov4 model, object detection anchor locations, and class names from the ONNX model zoo:

wget https://github.com/onnx/models/blob/master/vision/object_detection_segmentation/yolov4/model/yolov4.onnx?raw=true -O yolov4.onnx wget https://raw.githubusercontent.com/onnx/models/master/vision/object_detection_segmentation/yolov4/dependencies/yolov4_anchors.txt wget https://raw.githubusercontent.com/natke/onnxruntime-jetson/master/coco.names

Download the Yolov4 object detection pre- and post-processing code:

wget https://raw.githubusercontent.com/natke/onnxruntime-jetson/master/preprocess_yolov4.py wget https://raw.githubusercontent.com/natke/onnxruntime-jetson/master/postprocess_yolov4.py

Download one or more test images:

wget https://raw.githubusercontent.com/SoloSynth1/tensorflow-yolov4/master/data/kite.jpg

Create an application, main.py, to preprocess an image, run object detection, and save the original image with the detected objects:

import cv2

import numpy as np

import preprocess_yolov4 as pre

import postprocess_yolov4 as post

from PIL import Image

input_size = 416

original_image = cv2.imread("kite.jpg")

original_image = cv2.cvtColor(original_image, cv2.COLOR_BGR2RGB)

original_image_size = original_image.shape[:2]

image_data = pre.image_preprocess(np.copy(original_image), [input_size, input_size])

image_data = image_data[np.newaxis, ...].astype(np.float32)

print("Preprocessed image shape:",image_data.shape) # shape of the preprocessed input

import onnxruntime as rt

sess = rt.InferenceSession("yolov4.onnx")

output_name = sess.get_outputs()[0].name

input_name = sess.get_inputs()[0].name

detections = sess.run([output_name], {input_name: image_data})[0]

print("Output shape:", detections.shape)

image = post.image_postprocess(original_image, input_size, detections)

image = Image.fromarray(image)

image.save("kite-with-objects.jpg")

Run the application:

nvidia-docker run -it --rm -v $PWD:/workspace/ --workdir=/workspace/ jetson-onnxruntime-yolov4 python3 main.py

The application reads in the kite image and locates all the objects in the image. You can try it with different images and extend the application to use a video stream, as shown in the Azure IoT edge application earlier.

ONNX Runtime v1.4 updates

This package is based on the latest ONNX Runtime v1.4 release from July 2020. This latest release provides many updates focused on the popular Transformer models (GPT2, BERT), including performance optimizations, improved quantization support with new operators, and optimization techniques. The release also expands the ONNX Runtime hardware ecosystem compatibility with preview releases for new hardware accelerators including support for ARM-NN and Python package for NVIDIA Jetpack 4.4.

Along with these accelerated inferencing updates, the 1.4 release continues to build upon the innovation introduced in the prior release on the accelerated training front, including expanded operator support with a new sample using the Huggingface GPT-2 model.