With the convergence of IoT and AI, organizations are evaluating new ways of computing to keep up with larger data loads and more complicated use cases. For many, edge computing provides the right environment to successfully operate AI applications ingesting data from distributed IoT devices.

But many organizations are still grappling with understanding edge computing. Partners and customers often ask about edge computing, reasons for its popularity in the AI space, and use cases compared to cloud computing.

NVIDIA recently hosted an Edge Computing 101: An Introduction to the Edge webinar. The event provided an introduction to edge computing, outlined different types of edge, the benefits of edge computing, when to use it and why, and more.

During the webinar, we surveyed the audience to understand their biggest questions about edge computing and how we could help.

Below we provide answers to those questions, along with resources that could help you along your edge computing journey.

What stage are you in, on your edge computing journey?

About 51% of the audience answered that they are in the “learning” phase of their journey. At face value, this is not surprising: the webinar was an introductory session, so most attendees would be expected to be learning rather than implementing or scaling. It is also consistent with the fact that many of the tools in the edge market are still new, meaning many vendors also have much to gain from learning more.

To help in the learning journey, refer to Considerations for Deploying AI at the Edge. This overview covers the major decision points for choosing the right components for an edge solution, security tips on edge deployments, and how to evaluate where edge computing fits into your existing environment.

What is the top benefit you hope to gain by deploying applications at the edge?

There are many benefits to deploying AI applications in edge computing environments, including real-time insights, reduced bandwidth, data privacy, and improved efficiency. For the participants in the session, 42% responded that latency (or real-time insights) was the top differentiator they were hoping to gain from deploying applications at the edge.

Improving latency is a major benefit of edge computing since the processing power for an application sits physically closer to where data is collected. For many use cases, the low latency provided by edge computing is essential for success.

For example, an autonomous forklift operating in a manufacturing environment has to be able to react instantaneously to its dynamic environment. It needs to be able to turn around tight corners, lift and deliver heavy loads, and stop in time to avoid colliding with moving workers in the facility. If the forklift is unable to make decisions with ultra-low latency, there is no guarantee it will operate effectively. For safety reasons, organizations must know that AI applications powering that autonomous forklift are able to return insights fast enough to keep the environment safe.
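To make the latency argument concrete, the following minimal sketch estimates how far a vehicle travels while it waits for an inference result. The forklift speed and both latency figures are illustrative assumptions, not measurements from the webinar, and the function name `distance_before_reaction` is hypothetical.

```python
def distance_before_reaction(speed_m_per_s: float, latency_ms: float) -> float:
    """Distance in meters covered while the vehicle waits for a decision."""
    if speed_m_per_s < 0 or latency_ms < 0:
        raise ValueError("speed and latency must be non-negative")
    # Convert milliseconds to seconds, then apply distance = speed * time.
    return speed_m_per_s * (latency_ms / 1000.0)


# Assumed values for illustration only; real figures depend on the
# vehicle, the network, and the model being served.
FORKLIFT_SPEED_M_PER_S = 3.0
ASSUMED_LATENCIES_MS = {"remote data center": 200.0, "on-site edge": 20.0}

for location, latency_ms in ASSUMED_LATENCIES_MS.items():
    meters = distance_before_reaction(FORKLIFT_SPEED_M_PER_S, latency_ms)
    print(f"{location}: {meters:.2f} m traveled before the forklift can react")
```

Under these assumed figures, the distance traveled before a reaction shrinks in direct proportion to the latency, which is why moving processing closer to the data source matters for safety-critical use cases.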

Learn more about latency and the other benefits of edge AI.

What is your biggest challenge designing an edge computing solution?

There are challenges associated with implementing any new technology. This audience gave an even spread of answers across the choices given, which is not surprising given the early nature of the edge computing market. Many organizations are still investigating how edge computing will work for them, and they are experiencing a variety of different challenges.

The following are six common challenges for this audience, along with resources that can help.

1. Unsure what components are needed

The three major components needed for any edge deployment are an application, infrastructure (including tools to manage applications remotely), and security protocols.

Edge Computing 201: How to Build an Edge Solution will dive deep into each of these topics. The webinar will also provide specifics for what is needed to build an edge deployment, repurpose existing technology to be optimized for an edge deployment, and best practices for getting started.

2. Implementation challenges

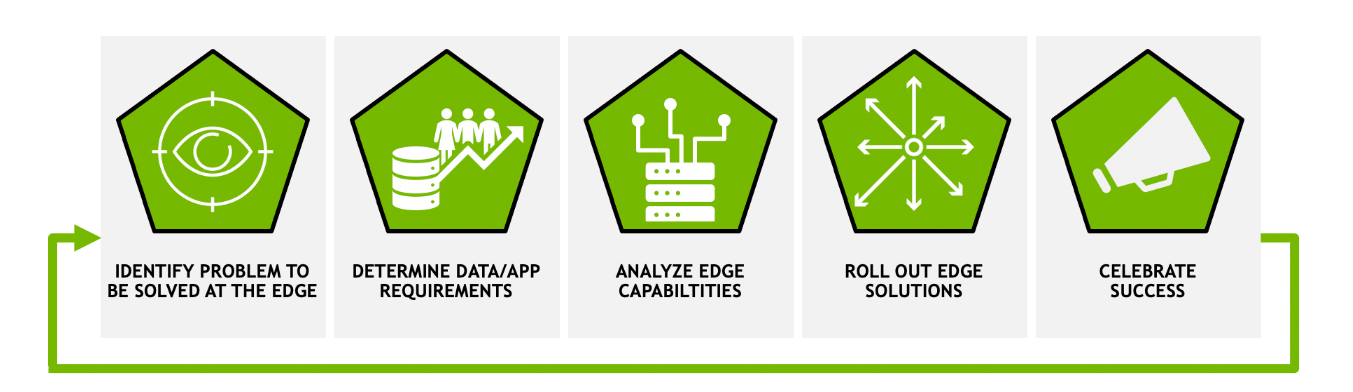

Many organizations are starting to implement edge AI, so it is important to understand the process and challenges involved. There are five main steps to implementing any edge AI solution:

- Identify a use case or challenge to be solved.

- Determine what data and application requirements exist.

- Evaluate existing edge infrastructure and what pieces must be added.

- Test the solution and then roll it out at scale.

- Share success with other groups to promote additional use cases.

Understanding these five steps is key to overcoming challenges that arise during implementation.

Steps to Get Started With Edge AI dives into each of these steps, outlining best practices and pitfalls to avoid along the way.

3. Tuning an application for edge use cases

The most important aspects of an edge application are flexibility and performance. Organizations need to be able to deploy an application to edge sites that have specific requirements and sometimes different tools than other sites. They need an application that can handle volatile situations. Additionally, ensuring an application can provide the performance needed for ultra-low latency situations is critical to success.

Cloud-native technology fulfills both of those requirements, and has many other added benefits.

4. Scaling a solution across multiple sites

Seamlessly scaling one deployment to multiple deployments (sometimes thousands) can be easy with the right technology. Tools to manage application deployments across distributed edge sites are critical for any organization looking to scale edge AI across their entire organization. Some examples of tools are Red Hat OpenShift, VMware Tanzu, and NVIDIA Fleet Command.

Fleet Command is turnkey, secure, and can scale to thousands of devices. Check out the demo to learn more.

5. Security of edge environments

Edge computing environments are very different from cloud computing environments, and have different security considerations. For instance, physical security of data and hardware is a consideration for edge sites that is not generally a consideration when deploying in the cloud. It is essential to find the right protocols to provide multilayer security for edge deployments that protects the entire workflow from cloud to edge.

Check out Edge Computing: Considerations for Security Architects to learn more about how to secure edge environments.

6. Justifying the cost of an edge solution

Justifying the cost of any technology boils down to understanding all of the cost factors and the value of the solution. For an edge computing solution, there are three main cost factors: the infrastructure costs, the application costs, and the management costs. The value of edge computing will vary by use case, and depends a lot on the ROI of the AI application deployed.
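As a rough illustration of this reasoning, the sketch below sums the three cost factors and computes a simple ROI as (value − total cost) / total cost. The class and function names are hypothetical, the ROI formula is a common simplification rather than a method prescribed by the webinar, and all figures are made up for the example.

```python
from dataclasses import dataclass


@dataclass
class EdgeCosts:
    """The three cost factors, in the same currency and time period."""
    infrastructure: float
    application: float
    management: float

    def total(self) -> float:
        return self.infrastructure + self.application + self.management


def simple_roi(value: float, costs: EdgeCosts) -> float:
    """Return (value - total cost) / total cost as a fraction."""
    total = costs.total()
    if total <= 0:
        raise ValueError("total cost must be positive")
    return (value - total) / total


# Hypothetical annual figures for a single edge site.
costs = EdgeCosts(infrastructure=40_000, application=25_000, management=15_000)
estimated_value = 100_000  # assumed value delivered by the AI application

print(f"Total cost: {costs.total():,.0f}")
print(f"ROI: {simple_roi(estimated_value, costs):.0%}")
```

In practice, the value term is the hardest to estimate, since it depends on the specific use case and the AI application deployed.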

Learn more about the costs associated with an edge deployment with Building an Edge Strategy: Cost Factors.

What is the next step in your edge computing journey?

After the session, 49% responded that “learning more about edge AI use cases” was the next step in their edge computing journey. Many leading edge computing applications use computer vision to perceive objects in an environment, from pedestrians in a crosswalk to objects on a shelf at a retail store. Organizations rely on edge computing for computer vision because of the ultra-fast performance that edge computing delivers. This ensures objects are detected instantaneously.

The NVIDIA AI for Smart Spaces Ebook covers several major vision AI use cases, all of which could be used in edge computing deployments.

If you’re ready to get started working with edge computing solutions, check out NVIDIA LaunchPad. With LaunchPad, organizations can get immediate, short-term access to the necessary hardware and software stacks for an entire end-to-end flow deploying, managing, and validating an application at the edge. Hands-on labs walk users through the same workflow on the same technology that can be deployed in production, ensuring more confident software and infrastructure decisions can be made. With this free trial, organizations can see for themselves the types of use cases and applications that will work best in their environment to meet their goals.

The edge computing industry is exciting and new. There are many emerging technologies that have a clear path to changing the way that organizations deploy and operate AI throughout their entire business. As organizations continue to adopt AI, infrastructure choices will continue to be paramount to innovative use cases.

You can take a deep dive into how to assemble the components of an edge computing solution, including application, infrastructure, and security protocols, in the Edge Computing 201 webinar: How to Build an Edge Solution.