Hybrid cloud refers to a mix of computing and storage services that spans on-premises infrastructure, such as Dell EMC VxRail hyperconverged infrastructure (HCI), and multiple public cloud services, such as Amazon Web Services or Microsoft Azure. Hybrid cloud architecture gives you the flexibility to keep traditional on-premises IT deployments for running business-critical applications or protecting sensitive data while still offering the agility of a cloud architecture.

VMware enables multicloud architecture and HCI through VMware Cloud Foundation (VCF). VCF consists of VMware vSphere, VMware NSX-T, and VMware vSAN, the three core technologies of a software-defined data center: software-defined compute (or server virtualization), software-defined networking, and software-defined storage.

VxRail, the only jointly engineered HCI solution with VMware, consolidates compute, storage, and networking resources into a unified system. You can choose to implement VxRail, VCF on VxRail, or VCF on VxRail with Tanzu, enabling you to run traditional and containerized workloads side-by-side while automating lifecycle management, creating a central point of administration, and simplifying operations.

RDMA support

Traditionally, vSAN has relied on TCP to move data between hosts reliably and consistently. Earlier this year, with the release of vSAN 7.0 Update 2, VMware introduced remote direct memory access (RDMA) support for clusters configured for RDMA-based networking.

RDMA is a technology that allows systems to bypass the CPU when transferring data between hosts, which lowers both latency and compute overhead and improves storage performance. A hyperconverged architecture using vSAN is ideal for RDMA. Clusters automatically detect RDMA support and deliver improved application performance and ROI through increased consolidation ratios.

By moving the core of vSAN and NSX-T communication (the storage and network virtualization components) to the NVIDIA ConnectX SmartNIC, VMware offloads processing tasks that the server CPU would typically handle. This lets applications make full use of the available network bandwidth, saves server CPU cycles, and improves application performance.

Performance results

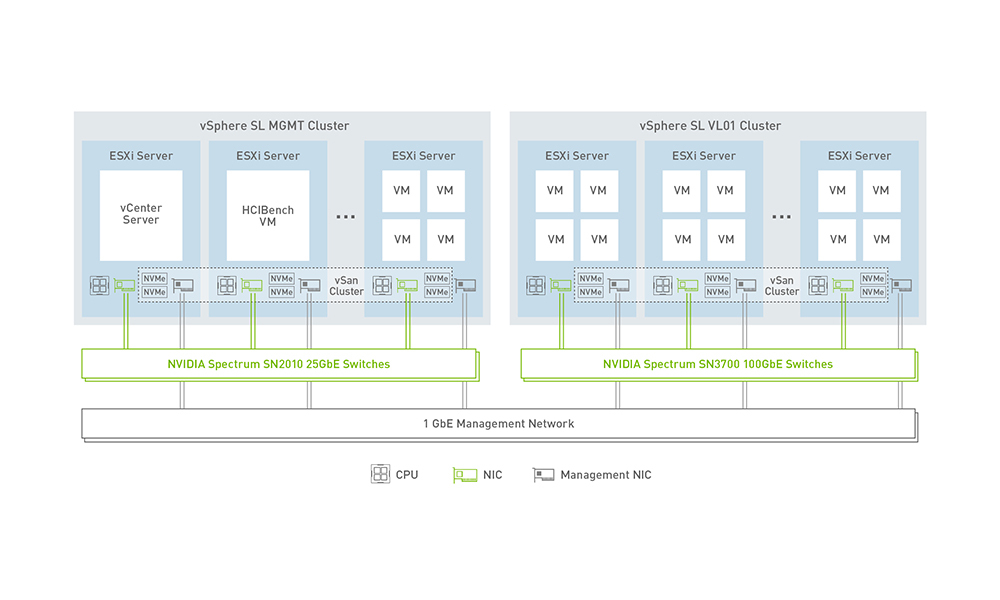

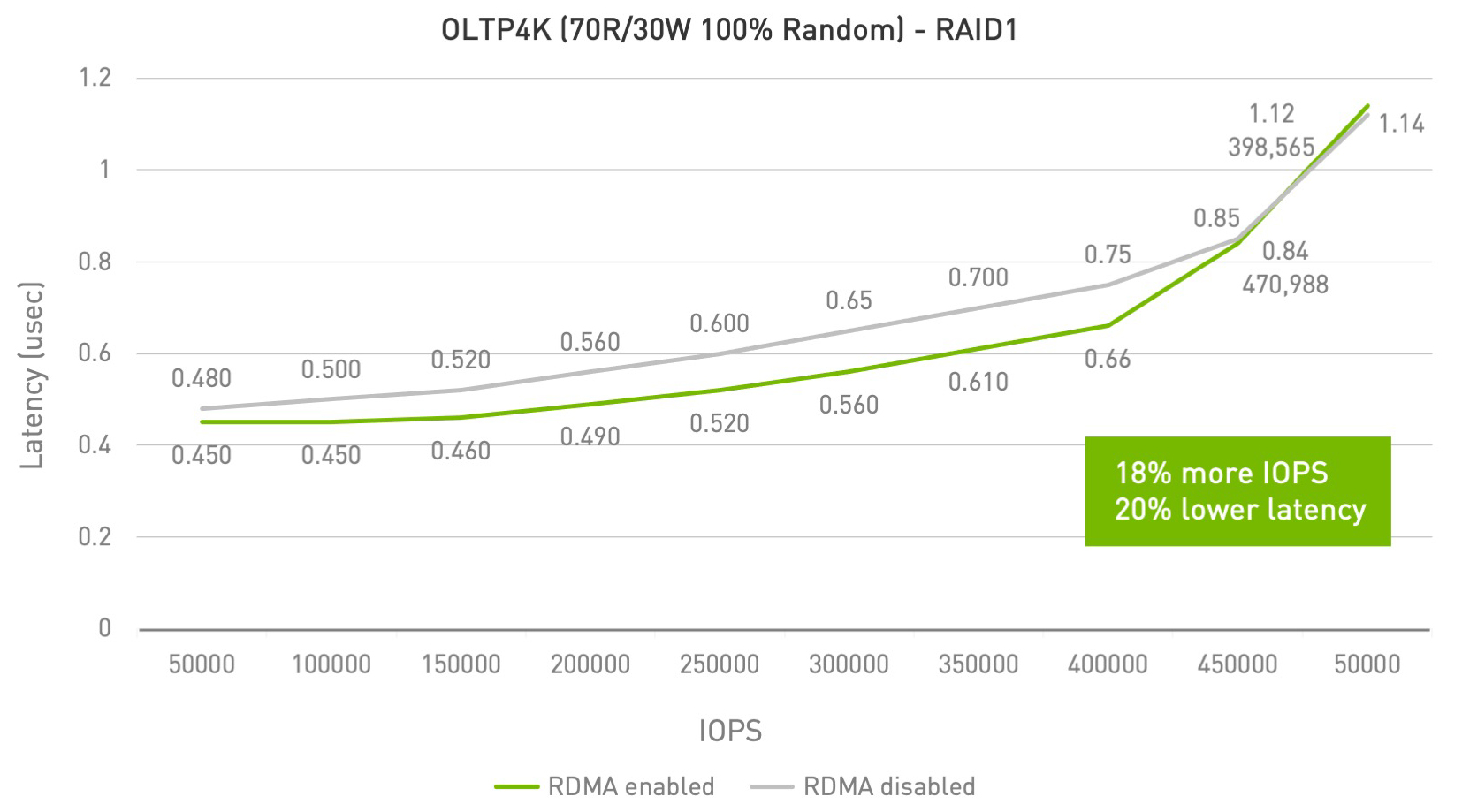

Recent testing showed that VMware vSAN over RoCE, running on an NVIDIA end-to-end 100 Gb/s Ethernet solution, consistently outperformed vSAN over TCP on the same hardware. The tests compared vSAN performance over TCP and RDMA on the latest 15th-generation PowerEdge servers with the latest Intel Ice Lake processors running VxRail. With RDMA, vSAN was able to deliver:

- 155K IOPS per host on OLTP 4K workloads, with latency under 1 ms

- Almost 20% increase in IOPS with a 20% reduction in latency for small-block I/O

- Over 3X increase in read throughput for 4K and 8K block sizes (128% for 16K)

- Up to 2.46X increase in write throughput for the 16K block size
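
To put the per-host IOPS figure in context, the short Python sketch below converts an IOPS rate and block size into payload bandwidth and estimates how much of a 100 Gb/s link that represents. It rests on assumptions that are not part of the test report: "4K" is taken to mean 4,096 bytes, only payload bytes are counted (replication traffic and protocol headers are ignored), and the function names are illustrative rather than part of any VMware or Dell tool. It also applies Little's law (concurrency = throughput x latency) to bound the number of in-flight I/Os implied by a sub-millisecond latency.

```python
def iops_to_gbps(iops: float, block_bytes: int) -> float:
    """Payload bandwidth in gigabits per second (decimal: 1 Gb = 1e9 bits)."""
    return iops * block_bytes * 8 / 1e9


def link_utilization(gbps: float, link_gbps: float) -> float:
    """Fraction of the link consumed by the given payload bandwidth."""
    return gbps / link_gbps


def max_outstanding_ios(iops: float, latency_s: float) -> float:
    """Little's law: average I/Os in flight = throughput x latency."""
    return iops * latency_s


if __name__ == "__main__":
    # Figures quoted above: 155K IOPS per host, 4K blocks, under 1 ms.
    # 4096 bytes per block and payload-only accounting are assumptions.
    gbps = iops_to_gbps(155_000, 4096)
    share = link_utilization(gbps, 100.0)
    in_flight = max_outstanding_ios(155_000, 0.001)
    print(f"payload bandwidth: {gbps:.2f} Gb/s")
    print(f"share of a 100 Gb/s link: {share:.1%}")
    print(f"upper bound on I/Os in flight: {in_flight:.0f}")
```

Under these assumptions, 155K IOPS at 4K works out to roughly 5.1 Gb/s of payload per host, or about 5% of a 100 Gb/s link. That suggests small-block workloads of this kind are limited by latency and per-I/O processing cost rather than by raw link bandwidth, which is where a CPU-bypassing transport would be expected to help.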

vSAN over RDMA generates a significant performance boost that is critical in the new era of accelerated computing associated with massive data transfers. As a result, vSAN over RoCE v2 is expected to replace vSAN over TCP to become the leading transport technology in vSphere-enabled data centers.

Dell EMC already offers this as a solution with VxRail. It’s a fully integrated, preconfigured, and pretested hyperconverged infrastructure appliance that allows you to extend your VMware ecosystem, eliminate complexity, and deliver IT services quickly and simply while modernizing the data center.

Shift to hybrid cloud

The re-architecture of VMware Cloud Foundation is leading the shift to hybrid-cloud data center architectures. These architectures rely on a hypervisor and software-defined networking and storage, enable server disaggregation, and include support for bare-metal servers.

Support for RDMA and the ability to shift workloads to specialized adapters, such as ConnectX Ethernet adapters, enable applications running on one physical server to consume hardware accelerator resources from other physical servers. They also enable physical resources to be dynamically accessed based on policy or software APIs tailored to the application’s needs. The combination is poised to reshape the entire virtualized data center by enabling VMware.