James McClure, a Computational Scientist with Advanced Research Computing at Virginia Tech, shares how his group uses the NVIDIA Tesla GPU-accelerated Titan Supercomputer at Oak Ridge National Laboratory to combine mathematical models with 3D visualization to provide insight into how fluids move below the surface of the earth.

McClure spoke with us about his research at the 2015 Supercomputing Conference.

Brad Nemire: Can you talk a bit about your current research?

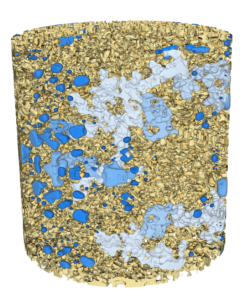

James McClure: Digital Rock Physics is a relatively new computational discipline that relies on high-performance computing to study the behavior of fluids within rock and other geologic materials. Understanding how fluids move within rock is essential for applications like geologic carbon sequestration, oil and gas recovery, and environmental contaminant transport. New technologies such as synchrotron-based x-ray micro-computed tomography enable the collection of 3D images that reveal the structure of rocks at the micron scale. Using these images, we can make predictions about the complex rock-fluid interactions that take place within natural systems.

BN: When and why did you start looking at using NVIDIA GPUs?

JM: We started looking at NVIDIA GPUs in 2007-2008, not long after CUDA became available. We were aware that some of our methods could be efficiently parallelized and that we would likely benefit from GPU implementation. Things really took off after Tesla GPUs arrived, when it became a lot easier to implement massively parallel, high-performance GPU code using MPI.

BN: What is your GPU computing experience, and how have GPUs impacted your research?

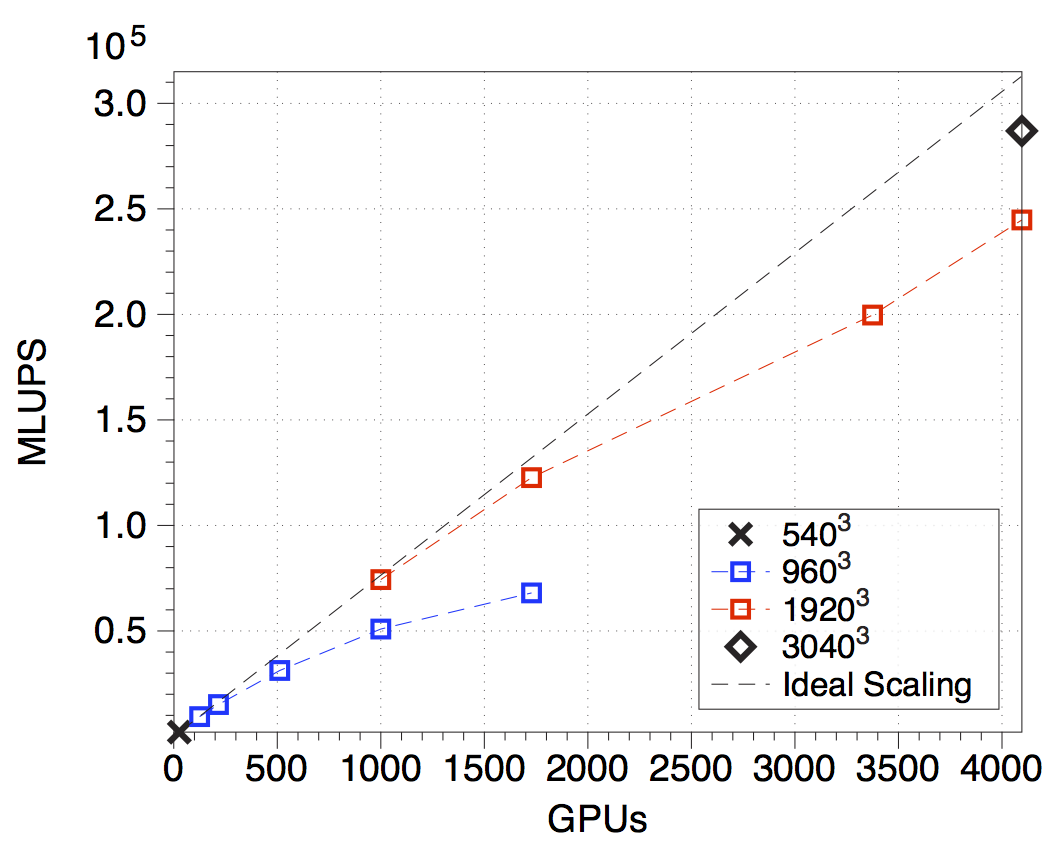

JM: I’ve been programming GPUs for almost ten years, and have used GPUs for most of our large-scale multiphase flow simulations. We rely heavily on Department of Energy (DOE) resources, including the Titan Supercomputer; we could not otherwise afford to do the calculations that we perform. Heterogeneous node architectures are also important to us. While we rely on GPUs for our simulations, we use the CPU to perform in situ data analysis using tools that we have developed based on multiscale averaging theory. Having both CPUs and GPUs in the system is therefore valuable and important to us.

BN: What are some of the biggest challenges in this project?

JM: The US DOE projects that synchrotron light sources will generate about 1 PB of data per hour by the year 2020. We apply simulation as a way to extract additional information from the data sets that these light sources produce. Figuring out how to keep up with these data rates is a big challenge.

BN: In terms of technology advances, what are you looking forward to in the next five years?

JM: The amount of data that we are able to generate is both an opportunity and a challenge. Depending on the particular scientific questions, we will have some mix of data-bound and compute-bound problems. We need technologies that enable both, so I am looking forward to seeing these mature.

BN: What’s next?

JM: We are working to automate workflows so that various multiscale quantities can be studied more easily by different research groups in a reproducible way. We view simulations as a way to access information that otherwise cannot be measured from experimental data sets, and as a way to make the results of multiscale averaging theories more accessible. Our goal is to help the community access and explore different kinds of information.

BN: What impact do you think your research will have on this scientific domain?

JM: Multiscale information from experimental images and simulation will provide a mechanism to better understand the complexities of the subsurface, which is composed of many different materials.

For further information about McClure’s research, you can watch his SC15 presentation on “Embracing Heterogeneous Simulation of Complex Fluid Flows”.