In the old days of 10 Mbps Ethernet, long before Time-Sensitive Networking became a thing, state-of-the-art shared networks basically required that packets would collide. For the primitive technology of the time, this was eminently practical… computationally preferable to any solution that would require carefully managed access to the medium.

After mangling each other’s data, the two competing stations would each wait a random interval (wasting even more time) before trying to transmit again. This was deemed acceptable because the minimum frame size was 64 bytes (512 bits), and the time that such a frame occupied the wire followed directly from the network speed: at 10 million bits per second, each bit takes 0.1 microseconds, so 512 bits occupy the wire for at least 51.2 microseconds.
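The arithmetic above can be checked with a few lines of Python. This is only an illustrative sketch; the function names are made up for this example and do not come from any Ethernet library.

```python
def bit_time_us(rate_bps: float) -> float:
    """Time that one bit occupies the wire, in microseconds."""
    return 1e6 / rate_bps


def frame_time_us(frame_bytes: int, rate_bps: float) -> float:
    """Time that a frame of the given size occupies the wire, in microseconds."""
    return frame_bytes * 8 * bit_time_us(rate_bps)


MIN_FRAME_BYTES = 64       # minimum Ethernet frame size (512 bits)
RATE_10_MBPS = 10_000_000  # classic 10 Mbps Ethernet

print(f"bit time:       {bit_time_us(RATE_10_MBPS):.1f} us")
print(f"64-byte frame:  {frame_time_us(MIN_FRAME_BYTES, RATE_10_MBPS):.1f} us")
```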

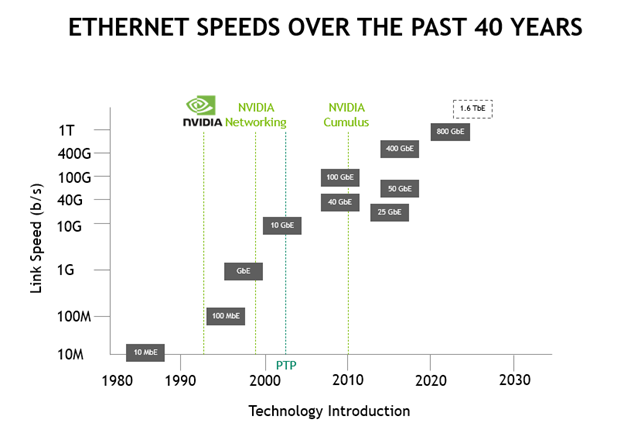

Ethernet technology has evolved from 10 Mbps in the early 80s to 400 Gbps as of today, with future plans for 800 Gbps and 1.6 Tbps (Figure 1).

It should be clear that wanting your networks to go faster is an ongoing trend! As such, any application that must manage events across those networks requires a well-synchronized, commonly understood, network-spanning sense of time, at time resolutions that get progressively narrower as networks become faster.

This is why the IEEE has been investigating how to support time-sensitive network applications since at least 2008, initially for audio and video applications but now for a much richer set of much more important applications.

Three use cases for time-sensitive networking

The requirements for precise and accurate timing extend beyond the physical and data link layers to certain applications that are highly dependent on predictable, reliable service from the network. These new and emerging applications leverage the availability of a precise, accurate, and high-resolution understanding of time.

5G, 6G, and beyond

Starting with the 5G family of protocols from 3GPP, some applications such as IoT or IIoT do not necessarily require extremely high bandwidth. They do require tight control over access to the wireless medium to achieve predictable access with low latency and low jitter. This is achieved by the delivery of precise, accurate, and high-resolution time to all participating stations.

In the time-domain style of access, each station asks for and is granted permission to use the medium, after which a network scheduler informs the station when and for how long it may use the medium.

5G and future networks deliver this accurate, precise, and high-resolution time to all participating stations to enable this kind of new high-value application. Previously, the most desirable attribute of new networks was speed. These new applications actually need control rather than speed.

Successfully enabling these applications requires that participating stations have the same understanding of the time in absolute terms, so that they do not start transmitting too soon or too late, or transmit for the wrong amount of time.

If a station were to transmit too soon or for too long, it may interfere with another station. If it were to begin transmitting too late, it may waste some of its precious opportunity to use the medium, as in situations where it might transmit for less time than it had been granted permission to transmit.
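To make the two failure modes concrete, here is a minimal sketch that compares a scheduler grant with the window a station actually used. The `Grant` class, the `evaluate` function, and all of the numbers are hypothetical and are not taken from any 3GPP specification.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Grant:
    """A scheduler grant, expressed in true (reference) time."""
    start_us: int
    duration_us: int

    @property
    def end_us(self) -> int:
        return self.start_us + self.duration_us


def evaluate(grant: Grant, actual_start_us: int, actual_end_us: int) -> dict:
    """Compare what a station actually did with what it was granted."""
    # Time spent transmitting outside the grant may collide with a neighbor.
    early = max(0, grant.start_us - actual_start_us)
    overrun = max(0, actual_end_us - grant.end_us)
    # Time inside the grant that the station did not use is wasted.
    used = max(0, min(actual_end_us, grant.end_us)
               - max(actual_start_us, grant.start_us))
    return {"interference_us": early + overrun,
            "wasted_us": grant.duration_us - used}


grant = Grant(start_us=1000, duration_us=200)
print(evaluate(grant, 1000, 1200))  # on time: nothing lost
print(evaluate(grant, 995, 1195))   # clock 5 us ahead: interferes and wastes
print(evaluate(grant, 1010, 1200))  # starts 10 us late: wastes its own slot
```

Even a few microseconds of clock error show up directly as either interference or lost airtime, which is why the stations need a shared, accurate sense of time.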

I should state that 5G clearly isn’t Ethernet, but Ethernet technology is how the 5G radio access network is tied together, through backhaul networks extending out from the metropolitan area data centers. The time-critical portion of the network extends from this Ethernet backhaul domain, both into the data centers and out into the radio access network.

What kinds of applications need this level of precision?

Applications such as telemetry do not need this precision. A missed reading is implicitly recovered just by waiting for the next one. For example, a meter reading might be generated once every 30 minutes.

What about robots that must have their position understood with submillisecond resolution? Missing a few position reports could lead to damaging the robot, damaging nearby or connected equipment, damaging the materials that the robot is handling, or even the death of a nearby human.

You might think this has nothing to do with 5G, as it’s clearly a manufacturing use case. However, this is a situation where 5G might be the better solution, because the Precision Time Protocol (PTP) is built into the protocol stack from the get-go.

PTP (IEEE 1588-2008) is the foundation of a suite of protocols and profiles that enable highly accurate time to be synchronized across networked devices to high precision and at high resolution.
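At the heart of PTP is a simple timestamp exchange. The sketch below shows the well-known delay request-response calculation, assuming a symmetric path (the same delay in both directions). The function name and the example timestamps are illustrative only; real implementations add hardware timestamping, filtering, and clock servo logic on top of this.

```python
def ptp_offset_and_delay(t1: int, t2: int, t3: int, t4: int) -> tuple[float, float]:
    """Estimate clock offset and path delay from four timestamps (in ns).

    t1: time server sends Sync            (server clock)
    t2: client receives Sync              (client clock)
    t3: client sends Delay_Req            (client clock)
    t4: time server receives Delay_Req    (server clock)
    """
    server_to_client = t2 - t1  # path delay + client offset
    client_to_server = t4 - t3  # path delay - client offset
    offset_from_server = (server_to_client - client_to_server) / 2
    mean_path_delay = (server_to_client + client_to_server) / 2
    return offset_from_server, mean_path_delay


# Made-up example: the client clock is 1500 ns ahead and the one-way delay is 400 ns.
t1 = 1_000_000
t2 = t1 + 400 + 1500  # arrival stamped by the fast client clock
t3 = 1_010_000
t4 = t3 - 1500 + 400  # arrival stamped by the server clock

offset, delay = ptp_offset_and_delay(t1, t2, t3, t4)
print(f"offset: {offset} ns, path delay: {delay} ns")
```

The client then corrects its clock by the computed offset. If the path is not symmetric, half of the asymmetry appears as an error in the offset, which is one reason PTP-aware switches matter.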

Time-sensitive networking technology enables 5G (or subsequent) networks to serve thousands or tens of thousands of nodes. It delivers an ever-shifting mix of high-speed, predictable latency, or low-jitter services, according to the demands of the connected devices.

These devices might be average users’ mobile phones, industrial robots, or medical instruments. The key thing is that with time-sensitive networking built in, the network can satisfy a variety of use cases as long as bandwidth (and time) is available.

PTP implementations in products running NVIDIA Cumulus Linux 5.0 and higher regularly deliver deep submillisecond (even submicrosecond) precision, supporting the diverse requirements of 5G applications.

Media and entertainment

The majority of the video content in the television industry currently exists in serial digital interface (SDI) format. However, the industry is transitioning to an Internet Protocol (IP) model.

In the media and entertainment industry, there are several scenarios to consider: studio work (for example, combining multiple camera feeds and overlays), video production, video broadcast (from a single point to multiple users), and multiscreen.

Time synchronization is critical for these types of activities.

In the media and broadcast world, consistent time synchronization is of the utmost importance to provide the best viewing experience and to prevent frame alignment, lip syncing, and video and audio syncing issues.

In the baseband world, reference black or genlock was used to keep camera and other video source frames in sync and to avoid introducing nasty artifacts when switching from one source to another.

However, with IP adoption and, more specifically, SMPTE-2110 (or SMPTE-2022-6 with AES67), you need a different way to provide timing. Along came PTP, also referred to as IEEE 1588 (PTP V2).

PTP is fully network-based and can travel over the same data network connections that are already being used to transmit and receive essence streams. Various profiles, such as SMPTE 2059-2 and AES67, provide a standardized set of configurations and rules that meet the requirements for the different types of packet networks.
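One way to picture how a shared PTP clock can stand in for genlock: if every device treats frame boundaries as integer multiples of the frame period counted from a common epoch, then devices that agree on the time also agree on where the next frame starts. The sketch below illustrates that general idea only. It is not an implementation of SMPTE 2059, and the function names are hypothetical.

```python
import math
from fractions import Fraction

NS_PER_SECOND = 1_000_000_000


def frame_period_ns(frame_rate: Fraction) -> Fraction:
    """Exact frame period in nanoseconds (kept as a fraction to avoid drift)."""
    return Fraction(NS_PER_SECOND) / frame_rate


def next_frame_boundary_ns(ptp_time_ns: int, frame_rate: Fraction) -> int:
    """First frame boundary at or after ptp_time_ns, counted from the epoch."""
    period = frame_period_ns(frame_rate)
    frames_elapsed = math.ceil(Fraction(ptp_time_ns) / period)
    return round(frames_elapsed * period)


# Two cameras read slightly different times but compute the same boundary.
rate_25 = Fraction(25)
print(next_frame_boundary_ns(100_000_001, rate_25))
print(next_frame_boundary_ns(119_999_999, rate_25))

# Fractional rates such as 30000/1001 (29.97 fps) work the same way.
rate_2997 = Fraction(30000, 1001)
print(next_frame_boundary_ns(100_000_000, rate_2997))
```

Keeping the frame period as an exact fraction matters for rates like 29.97 fps, where a rounded period would accumulate error over millions of frames.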

Spectrum fully supports PTP 1588 under SMPTE 2059-2 and other profiles.

Automotive applications

New generations of car-area networks (CANs) have evolved from shared/bus architectures toward architectures that you might find in 5G radio-access networks (RANs) or in IT environments: switched topologies.

When switches are involved, there is an opportunity for packet loss or delay variability, arising from contention or buffering, which limits or eliminates predictable access to the network that might be needed for various applications in the automobile.

Self-driving cars must regularly, at a fairly high frequency, process video and other sensor inputs to determine a safe path forward for the vehicle. The guiding intelligence in the vehicle depends on regularly accessing its sensors, so the network must be able to guarantee that access to the sensors is frequent enough to support the inputs to the algorithms that must interpret them.

For instance, the steering wheel and brakes are reading friction, engaging antilock and antislip functions, and trading off regenerative energy capture against friction braking. The video inputs, and possibly radar and lidar (light detection and ranging), are constantly scanning the road ahead. They enable the interpretation algorithms to determine if new obstacles have become visible that would require steering, braking, or stopping the vehicle.

All this is happening while the vehicle’s navigation subsystem uses GPS to receive and align coarse position data against a map, which is combined with the visual inputs from the cameras to establish accurate positioning information over time, to determine the maximum legally allowed speed and to combine legal limits with local conditions to determine a safe speed.

These varied sensors and associated independent subsystems must be able to deliver their inputs to the main processors and their self-driving algorithms on a predictable-latency/low-jitter basis, while the network is also supporting non-latency-critical applications. The correct, predictable operation of this overall system is life-critical for the passengers (and for pedestrians!).

Beyond the sensors and software that support the safe operation of the vehicle, other applications running on the CAN are still important to the passengers, while clearly not life-critical:

- Operating the ventilation or climate-control system to maintain a desirable temperature at each seat (including air motion, seat heating or cooling, and so on)

- Delivering multiple streams of audio or video content to various passengers

- Gaming with other passengers or passengers in nearby vehicles

- Important mundane maintenance activities like measuring the inflation pressure of the tires, level of battery charge, braking efficiency (which could indicate excessive wear), and so on

Other low-frequency yet also time-critical sensor inputs provide necessary inputs to the vehicle’s self-diagnostics that determine when it should take itself back to the maintenance depot for service, or just to recharge its batteries.

The requirement for all these diverse applications to share the same physical network in the vehicle (to operate over the same CAN) is the reason why PTP is required.

Engineers will design the CAN to have sufficient instantaneous bandwidth to support the worst-case demand from all critical devices (such that contention is either rare or impossible), while dynamically permitting all devices to request access in the amounts and with the latency bounds that each requires, which can change over time. Pun intended.
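The worst-case sizing described above amounts to a simple budget check. The sketch below is only an illustration: the flow names, peak rates, and link speed are invented for this example and do not describe any real vehicle.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Flow:
    name: str
    peak_mbps: int   # worst-case instantaneous demand
    critical: bool   # True for life-critical traffic


def worst_case_critical_mbps(flows: list[Flow]) -> int:
    """Sum of the peak demand of every critical flow, as if all peak at once."""
    return sum(f.peak_mbps for f in flows if f.critical)


def headroom_mbps(flows: list[Flow], link_mbps: int) -> int:
    """Bandwidth left for noncritical traffic in the worst case."""
    return link_mbps - worst_case_critical_mbps(flows)


# Hypothetical in-vehicle flows.
flows = [
    Flow("front camera", 400, critical=True),
    Flow("lidar", 150, critical=True),
    Flow("brake telemetry", 10, critical=True),
    Flow("rear-seat video", 50, critical=False),
    Flow("climate control", 1, critical=False),
]

LINK_MBPS = 1000
print(f"critical worst case: {worst_case_critical_mbps(flows)} Mbps")
print(f"headroom for the rest: {headroom_mbps(flows, LINK_MBPS)} Mbps")
```

A negative headroom would mean that critical devices could contend with each other, which is exactly what the design is meant to rule out.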

In a world of autonomous vehicles, PTP is the key to enabling in-car technology, supporting the safe operation of vehicles while delivering rich entertainment and comfort.

Conclusion

You’ve seen three examples of applications where control over access to the network is as important as raw speed. In each case, the application defines the requirements for precise/accurate/high-resolution timing, but the network uses common mechanisms to deliver the required service.

As networks continue to get faster, the time resolution needed to discriminate events scales as the reciprocal of the bandwidth.
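A quick calculation shows how steep this scaling is. The sketch below uses the link speeds mentioned earlier in this post and asks how many bits pass a point on the wire during one microsecond of clock error. The helper names are illustrative.

```python
LINK_RATES_BPS = {
    "10 Mbps": 10 * 10**6,
    "400 Gbps": 400 * 10**9,
    "800 Gbps": 800 * 10**9,
    "1.6 Tbps": 1600 * 10**9,
}


def bit_time_ps(rate_bps: int) -> float:
    """Duration of one bit in picoseconds: the reciprocal of the bit rate."""
    return 10**12 / rate_bps


def bits_during(rate_bps: int, interval_ns: int) -> int:
    """Number of whole bits that pass during interval_ns nanoseconds."""
    return rate_bps * interval_ns // 10**9


for name, rate in LINK_RATES_BPS.items():
    print(f"{name:>8}: bit time {bit_time_ps(rate):>11.3f} ps, "
          f"{bits_during(rate, 1000):>9,} bits pass in 1 microsecond")
```

A timing error that spans only 10 bits at 10 Mbps spans hundreds of thousands of bits at 400 Gbps, so the same application tolerance demands a far more precise clock.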

Powerful PTP implementations, such as that in NVIDIA Cumulus Linux 5.0-powered devices, embody scalable protocol mechanisms that will adapt to the faster networks of the future. They will deliver timing accuracy and precision that adjusts to the increasing speeds of these networks.

Future applications can expect to continue to receive the predictable time-dependent services that they need. This will be true even though the networks continue to become more capable of supporting more users, at faster speeds, with even finer-grained time resolution.

For more information, see the following resources:

- NVIDIA Cumulus Linux 5.1 User Guide

- NVIDIA Cumulus Linux datasheet

- NVIDIA Cumulus Linux page

- NVIDIA Air Infrastructure Simulation Platform

- NVIDIA Announces Spectrum High-Performance Data Center Networking Infrastructure Platform

- Precision Time Protocol standard (2008, updated 2019)

- Time Appliances Project

- Time Synchronization in Distributed Data Centers