Digitizing millions of historical documents and newspapers is a challenging task.

To help speed up the process, the U.S. Library of Congress developed a GPU-accelerated deep learning model that automatically extracts, categorizes, and captions visual content from more than 16 million pages of historic American newspapers published between 1789 and 1963.

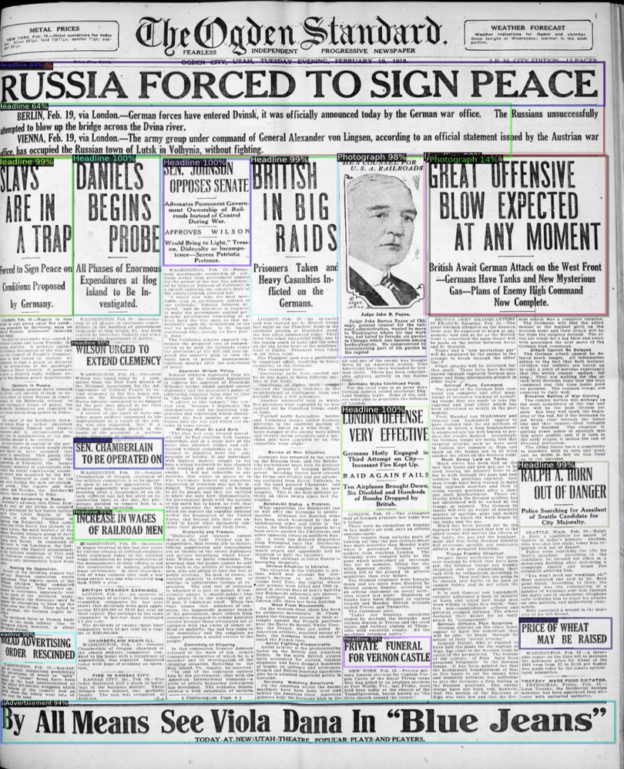

The work, which is being made publicly available for unrestricted reuse, includes headlines, photographs, illustrations, maps, comics, editorial cartoons, and advertisements extracted from the newspapers.

According to the researchers, it is the largest dataset of its kind ever produced.

The work is part of the organization’s Chronicling America initiative, which stems from a partnership between the Library of Congress and the National Endowment for the Humanities.

“Over 16 million pages of historic American newspapers have been digitized for Chronicling America to date, complete with high-resolution images and machine-readable METS/ALTO and optical character recognition (OCR),” the researchers stated in their paper, The Newspaper Navigator Dataset: Extracting And Analyzing Visual Content from 16 Million Historic Newspaper Pages in Chronicling America. METS and ALTO are XML standards maintained by the Library of Congress; ALTO records where each piece of recognized text sits on the page.
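
To make the idea of text localization concrete, the following is a minimal sketch, not the researchers' code, of reading word positions from an ALTO file with Python's standard library. The XML fragment is hand-written for illustration, and the helper names `words_with_boxes` and `text_in_region` are hypothetical; the `String` element and its `CONTENT`, `HPOS`, `VPOS`, `WIDTH`, and `HEIGHT` attributes come from the ALTO schema.

```python
import xml.etree.ElementTree as ET

# Hypothetical, hand-written ALTO fragment (illustration only, not real data).
SAMPLE_ALTO = """<alto xmlns="http://www.loc.gov/standards/alto/ns-v2#">
  <Layout>
    <Page ID="P1" WIDTH="1000" HEIGHT="1500">
      <PrintSpace>
        <TextBlock ID="TB1">
          <TextLine>
            <String CONTENT="EXTRA" HPOS="100" VPOS="50" WIDTH="120" HEIGHT="40"/>
            <SP/>
            <String CONTENT="EDITION" HPOS="240" VPOS="50" WIDTH="180" HEIGHT="40"/>
          </TextLine>
        </TextBlock>
      </PrintSpace>
    </Page>
  </Layout>
</alto>"""


def local_name(tag):
    """Drop the '{namespace}' prefix so any ALTO version is handled alike."""
    return tag.rsplit("}", 1)[-1]


def words_with_boxes(alto_xml):
    """Return a list of (word, (x, y, width, height)) in document order."""
    root = ET.fromstring(alto_xml)
    words = []
    for elem in root.iter():
        if local_name(elem.tag) != "String":
            continue
        box = tuple(float(elem.get(k)) for k in ("HPOS", "VPOS", "WIDTH", "HEIGHT"))
        words.append((elem.get("CONTENT"), box))
    return words


def text_in_region(words, region):
    """Join the words whose box center lies inside region = (x0, y0, x1, y1)."""
    x0, y0, x1, y1 = region
    kept = []
    for word, (x, y, w, h) in words:
        cx, cy = x + w / 2, y + h / 2
        if x0 <= cx <= x1 and y0 <= cy <= y1:
            kept.append(word)
    return " ".join(kept)


words = words_with_boxes(SAMPLE_ALTO)
print(text_in_region(words, (0, 0, 1000, 1500)))
```

Because every word carries its own coordinates, the OCR text that falls inside any rectangular region of a page can be recovered with a simple geometric filter like `text_in_region`.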

In addition to releasing the new dataset, the researchers have published their visual content recognition model for historic newspapers, as well as a new training dataset for this task. The models are based on the Library’s crowdsourcing initiatives for annotating and captioning visual content in World War I-era newspapers.

Training and inference were performed on NVIDIA GPUs with the cuDNN-accelerated PyTorch deep learning framework. All fine-tuning was performed using NVIDIA T4 GPUs on the Amazon Web Services cloud, using the R50-FPN backbone. This backbone was selected because it has the fastest inference time of the Faster R-CNN backbones, the researchers explained.

“Our work is also a case study in partnering machine learning projects with volunteer crowdsourcing initiatives, a promising paradigm in which annotators are volunteers who learn about a new topic by participating,” the researchers explained. “These partnerships also have the potential to provide insight into project design, decisions, workflows, and the context of the materials for which crowdsourcing contributions are sought.”

A GitHub repository, Newspaper-Navigator, includes the datasets and detection models.