Modern deep neural networks, such as those used in self-driving vehicles, require a mind boggling amount of computational power. Today a single computer, like NVIDIA DGX-1, can achieve computational performance on par with the world’s biggest supercomputers in the year 2010 (“Top 500”, 2010). Even though this technological advance is unprecedented, it is being dwarfed by the computational hunger of modern neural networks and the dataset sizes they require for training.

This is especially true with safety critical systems, like self-driving cars, where detection accuracy requirements are much higher than in the internet industry. These systems are expected to operate flawlessly irrespective of weather conditions, visibility, or road surface quality.

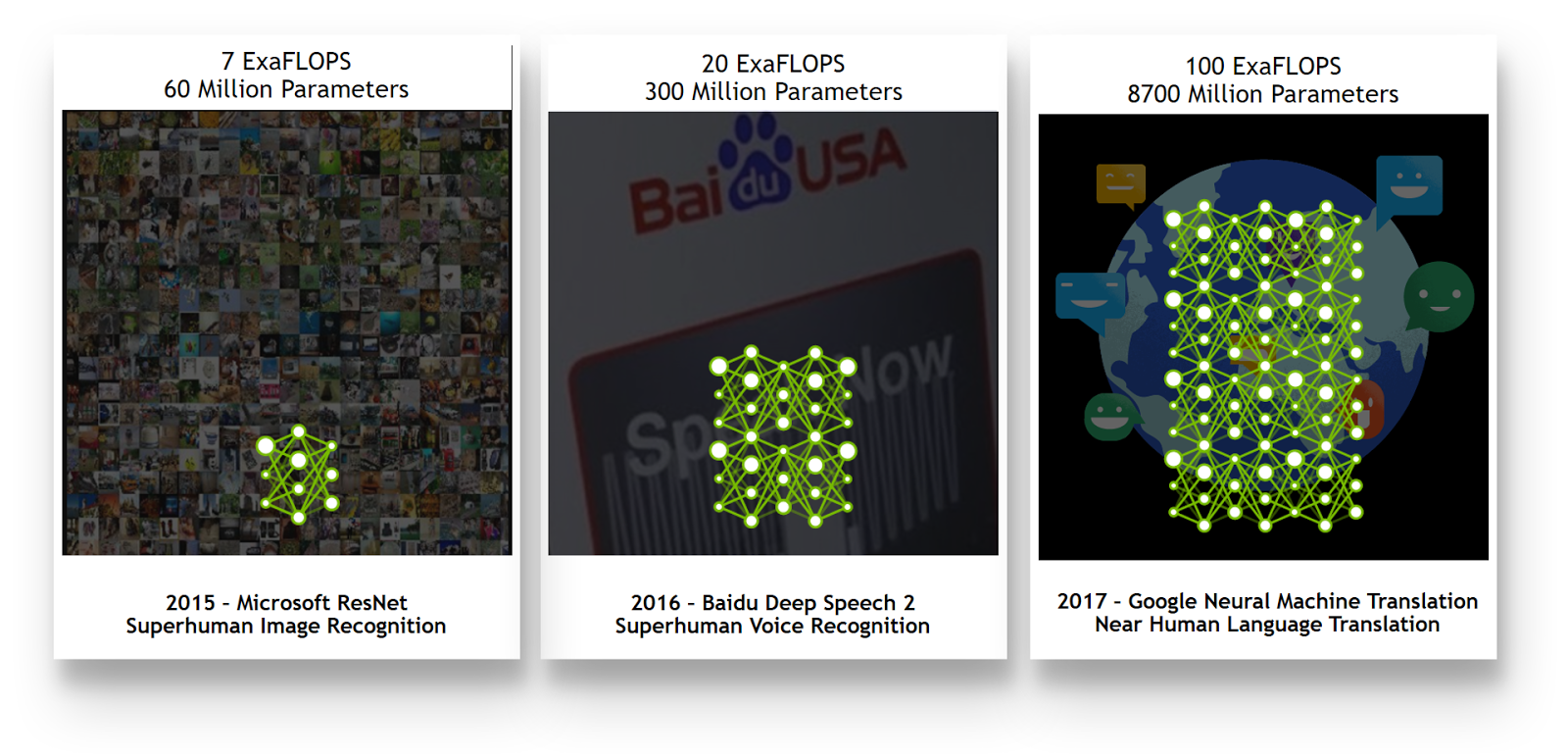

To achieve this level of performance, neural networks need to be trained on representative datasets that include examples of all possible driving, weather, and situational conditions. In practice this translates into Petabytes of training data. Moreover, the neural networks themselves need to be complex enough (have sufficient number of parameters) to learn from such vast datasets without forgetting past experience (Goodfellow et al., 2016). In this scenario, as you increase the dataset size by a factor of n, the computational requirements increase by a factor of n2, creating a complex engineering challenge. Training on a single GPU could take years—if not decades—to complete, depending on the network architecture. And the challenge extends beyond hardware, to networking, storage and algorithm considerations.

A new NVIDIA Developer Blog post by NVIDIA deep learning solution architect Adam Grzywaczewski, investigates the computational complexity associated with the problem of self-driving cars to help new AI teams (and their IT colleagues) to plan the resources required to support the research / engineering process. Adam makes very conservative estimates of the training data and training time required that illustrate the massive scale of this problem.

Read more >

Training AI for Self-Driving Vehicles: The Challenge of Scale

Oct 10, 2017

Discuss (0)

AI-Generated Summary

- Modern deep neural networks, such as those used in self-driving vehicles, require a vast amount of computational power, with a single NVIDIA DGX-1 achieving performance comparable to the world's top supercomputers in 2010.

- The computational complexity of neural networks is increasing rapidly, driven by the need for high detection accuracy in safety-critical systems like self-driving cars, which must operate flawlessly in various conditions.

- As dataset sizes grow, computational requirements increase exponentially, making it a complex engineering challenge that involves not just hardware, but also networking, storage, and algorithm considerations.

AI-generated content may summarize information incompletely. Verify important information. Learn more