NVIDIA CUDA-X AI is a deep learning software stack that researchers and software developers use to build high-performance GPU-accelerated applications for conversational AI, recommendation systems, and computer vision.

Learn what’s new in the latest releases of the CUDA-X AI tools and libraries. For more information on NVIDIA’s developer tools, join live webinars, training, and “Connect with the Experts” sessions at NVIDIA GTC.

Refer to the release notes in each package’s documentation for additional information.

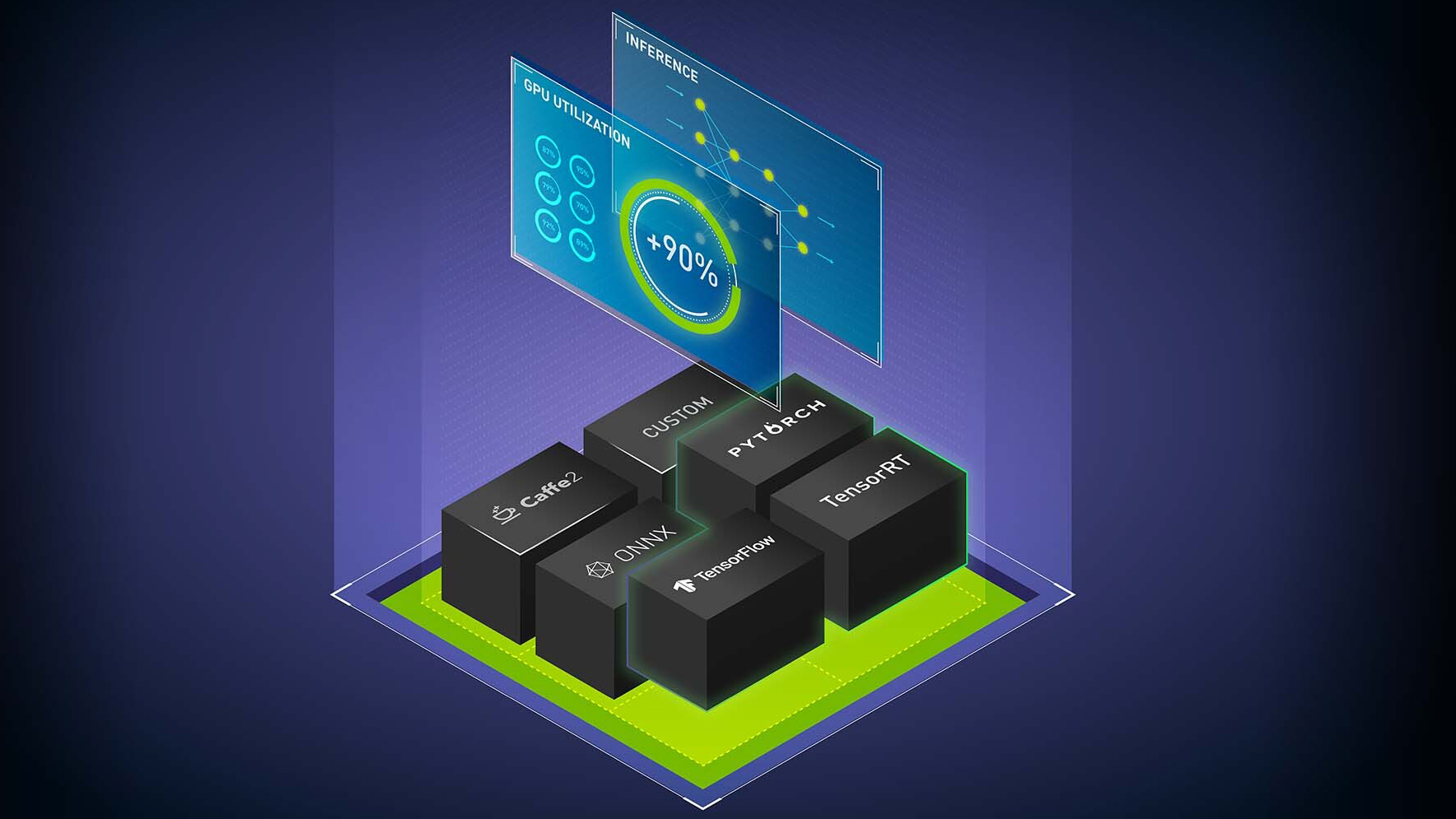

NVIDIA Triton Inference Server

NVIDIA Triton™ Inference Server is open-source inference serving software that brings fast and scalable AI models to applications in production. It supports every framework and runs on any GPU- or CPU-based infrastructure, whether on-premises, in the cloud, or at the edge.

Updates include:

- Business Logic Scripting (Beta): functions to call other models within an executing Python model.

- Container Composition Utility: create custom Triton containers with specific backends and repository agents.

- Two new containers on NGC, starting with Triton 21.08:

  - nvcr.io/nvidia/tritonserver:21.08-tf-python-py3 – GPU-enabled Triton server with only the TensorFlow 2.x and Python backends.

  - nvcr.io/nvidia/tritonserver:21.08-pyt-python-py3 – GPU-enabled Triton server with only the PyTorch and Python backends.

TensorRT 8.0

TensorRT is a platform for high-performance deep learning inference. This version includes:

- BERT-Large inference in 1.2 ms with new transformer optimizations.

- Achieve accuracy equivalent to FP32 with INT8 precision using Quantization Aware Training.

- Sparsity support for faster inference on Ampere GPUs.
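The last two items rest on simple numerical ideas that a few lines of plain Python can illustrate. Quantization-aware training inserts a quantize-then-dequantize step into the forward pass so the network learns weights that tolerate INT8 rounding, and the sparsity feature targets the 2:4 structured pattern of Ampere GPUs, where two of every four consecutive weights are zero. The sketch below is an illustration only, not TensorRT code: the function names, the per-tensor `scale`, the symmetric clamp range of -127 to 127, and the flat weight layout are all assumptions made for the example.

```python
def fake_quantize_int8(values, scale):
    """Quantize floats to symmetric INT8 steps, then dequantize them.

    `scale` is a hypothetical per-tensor step size chosen by the caller.
    """
    out = []
    for v in values:
        q = round(v / scale)          # nearest integer step
        q = max(-127, min(127, q))    # clamp to the assumed symmetric range
        out.append(q * scale)         # back to float for the next layer
    return out


def prune_2_of_4(weights):
    """Zero the two smallest-magnitude weights in each group of four."""
    if len(weights) % 4 != 0:
        raise ValueError("length must be a multiple of 4")
    pruned = list(weights)
    for start in range(0, len(weights), 4):
        group = range(start, start + 4)
        # Indices of the two smallest magnitudes within this group.
        drop = sorted(group, key=lambda i: abs(weights[i]))[:2]
        for i in drop:
            pruned[i] = 0.0
    return pruned


if __name__ == "__main__":
    print(fake_quantize_int8([0.26, -1.1, 100.0], scale=0.5))
    print(prune_2_of_4([0.1, -0.9, 0.5, 0.05, 1.0, 2.0, -3.0, 0.2]))
```

In practice the scale comes from training or calibration, and pruning is applied along a specific weight dimension and followed by fine-tuning; the sketch skips both to keep the arithmetic visible.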

NVIDIA NeMo

NVIDIA NeMo is an open-source toolkit for developing state-of-the-art conversational AI models. NVIDIA shared new speech processing research and tutorials using NeMo at Interspeech 2021, including:

- Building Speech Recognition Models for Global Languages with the Mozilla Common Voice Dataset and NVIDIA NeMo

- Text-to-Speech Inference Prosody Control

- Six accepted papers

For links to all accepted research, visit the NVIDIA Interspeech event page.

Access additional tutorials from the NeMo GitHub repository and the NVIDIA Developer Blog.

NVIDIA Maxine

Maxine provides accelerated real-time video effects (VFX), audio effects (AFX), and augmented reality (AR) SDKs with state-of-the-art AI-based features for building virtual collaboration and content creation applications.

Highlights from this release include:

- Virtual Background (VFX) provides higher stream quality through segmentation of a wider variety of objects (chairs, clothes, microphones), robustness to motion and lighting changes, and an option to enable CUDA graphs to reduce latency under GPU-intensive workloads.

- Super Resolution (VFX) adds support for video input resolutions up to 4K, with a 2x upscale factor.

- Noise Removal (AFX) preserves speech better, particularly when the input speech is emotive. Room Echo Cancellation (AFX) improves overall quality when used together with Noise Removal.

- 3D Body Pose Estimation (AR) improves the accuracy and temporal stability of body joint locations and angles. It also tracks limb keypoints more robustly when the arms reach forward or out to the side.

- All Linux SDKs support A100, A30, and A10 MIG to ensure consistent performance across GPU partitions.

NGC Updates

The NGC catalog is a hub of GPU-optimized containers, pretrained models, SDKs, and Helm charts designed to accelerate end-to-end AI workflows. Updates include:

Deep Learning Frameworks

- 21.08 containers for TensorFlow, PyTorch, and MXNet

- Support for CUDA 11.4, DALI 1.4, and Ubuntu 20.04

Clara AGX Collection

- This growing collection of AI frameworks, reference applications, and AI models is built for the Clara AGX Developer Kit and real-time medical instrument development.

- Includes containers for metagenomics, dermatology, ultrasound, and streaming video.

New and Updated Partner Software

- Autovox Hindi ASR Container – Autovox, by Cogknit Semantics, is a voice OS platform that provides multiple language models: ASR, MT, and TTS. This limited-access ASR container converts Hindi audio to Hindi text.

- Plexus Satellite Container – Provides a rich set of tools for setting up and managing an isolated, networked Kubernetes cluster on the Core Scientific Plexus software stack. A new feature allows the Plexus platform to manage single nodes over an SSH connection, without requiring a resource manager.