Deep learning research requires working at scale. Training on massive data sets or very deep networks is computationally intensive and can take an impractically long time, because deep learning models are often bound by memory. The key is to structure deep learning code so that the model is decoupled from the engineering and data handling, which lets researchers iterate quickly.

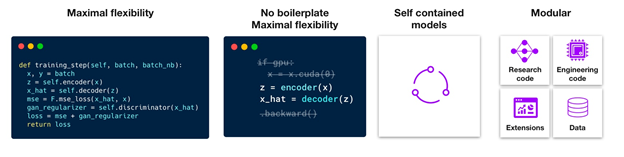

PyTorch Lightning, developed by Grid.AI, is now available as a container on the NGC catalog, NVIDIA’s hub of GPU-optimized AI and HPC software. PyTorch Lightning was designed to remove the roadblocks in deep learning research and let researchers focus on science. Lightning is more of a style guide than a framework: it helps you structure and organize your code while providing utilities for common functions. With PyTorch Lightning, you can scale your models to multiple GPUs and use state-of-the-art training features such as 16-bit precision, early stopping, logging, pruning, and quantization, while iterating faster and keeping experiments reproducible.

A Lightning model is composed of the following:

- A LightningModule that encapsulates the model code

- A Lightning DataModule that encapsulates transforms, dataset, and DataLoaders

- A Lightning Trainer that automates the training routine, with 70+ flags that make advanced features trivial to enable

- Callbacks for users to customize Lightning using hooks

Lightning objects expose their behavior through hooks that can be overridden, making every aspect of deep learning training highly configurable. With Lightning, you have full control over every detail:

- Change how the backward step is done.

- Change how 16-bit is initialized.

- Add your own way of doing distributed training.

- Add learning rate schedulers.

- Use multiple optimizers.

- Change the frequency of optimizer updates.

Get started today with the PyTorch Lightning Docker container from the NGC catalog.