Video conferencing is at the heart of several streaming use cases, such as vlogging, vtubing, webcasting, and even video streaming for remote work. To create an increased sense of presence and pick up on both verbal and non-verbal cues, video conferencing technology must enable users to see and hear clearly.

Eye contact plays a key role in establishing social connections, and in face-to-face conversations it signifies confidence, connection, and attention. However, maintaining continuous eye contact is rarely feasible in video conferencing, because it requires users to look directly at their cameras rather than at their computer displays. This is difficult when reading from a script or reviewing data on a computer screen.

Maintaining eye contact is also sometimes challenging for various physiological reasons. Many children and adults struggle to make and maintain eye contact.

To improve the user experience, we have developed NVIDIA Maxine Eye Contact. This feature uses AI to apply a filter to the user’s webcam feed in real time, redirecting their eye gaze toward the camera.

This state-of-the-art AI-based algorithm for gaze estimation and redirection is fully integrated into the NVIDIA AR SDK, which gains new gaze estimation, gaze redirection, and 6DOF head pose estimation capabilities.

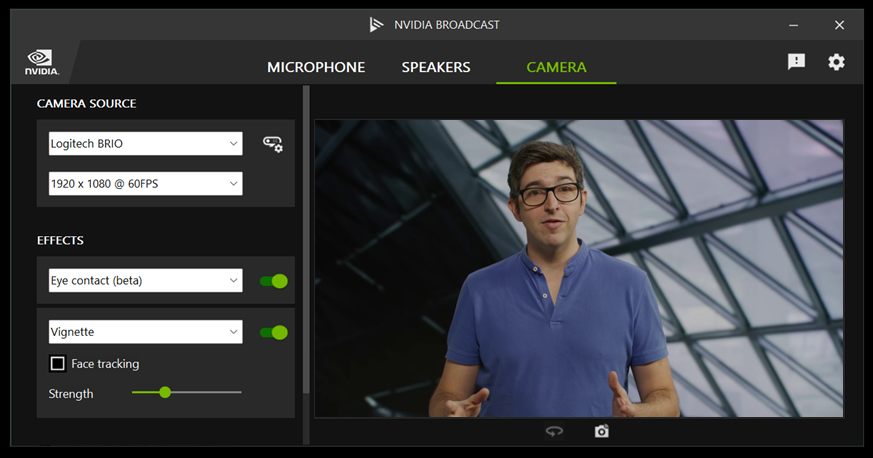

NVIDIA Maxine Eye Contact is also integrated into the NVIDIA Broadcast App, a free software download for NVIDIA RTX and GeForce RTX GPU owners that transforms any room into a home studio. Try the new Eye Contact effect in version 1.4.

Creating an eye contact pipeline

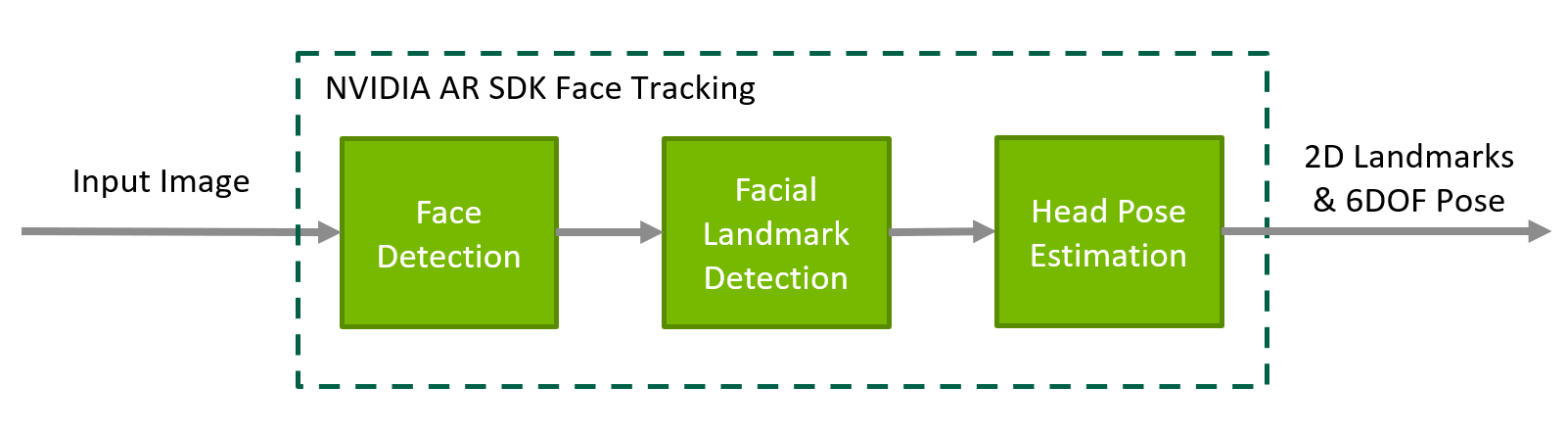

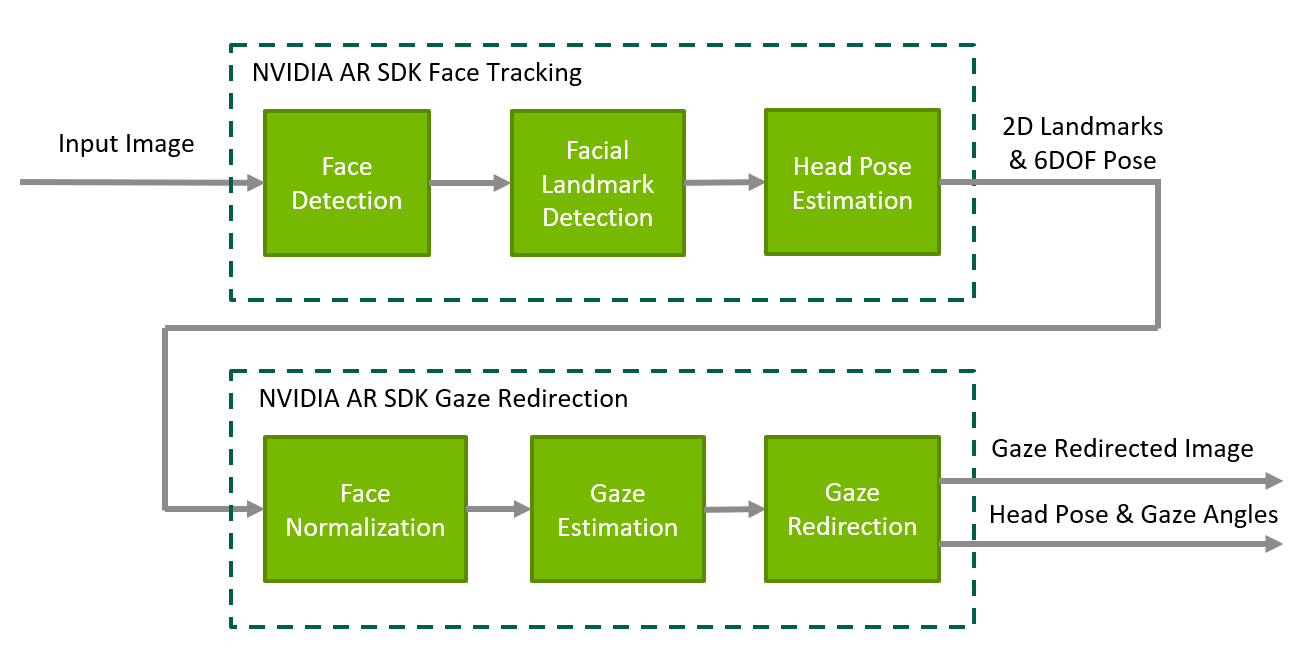

NVIDIA Maxine Eye Contact operates on a region of interest around the eyes, known as the eye patch. The eye patch is extracted from a video frame using the NVIDIA Maxine face tracking pipeline, which computes the 2D facial landmarks and the 6DOF head pose from the frame.

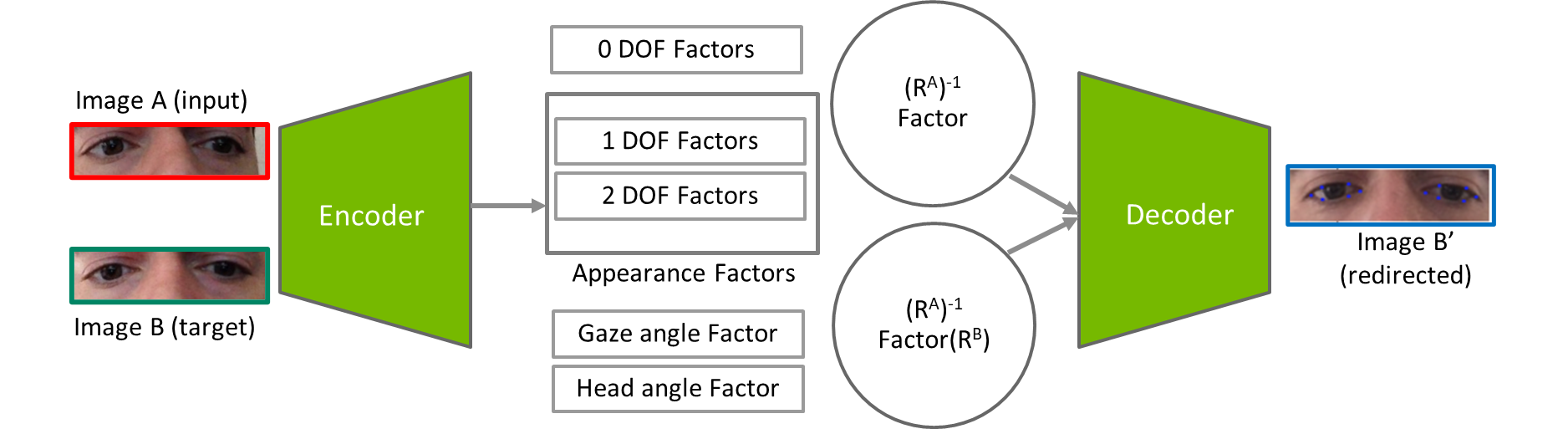

This head pose is then used to normalize the face in the video frame. A 256 × 64 px eye patch is cropped from the normalized frame and fed into the eye contact network. The eye contact network has a disentangled encoder-decoder architecture. The encoder estimates the gaze angle from the input eye patch, along with a set of features known as embeddings.
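As a rough illustration of the cropping step, the following sketch computes a 4:1 crop box around the eye landmarks of an already normalized frame, which would then be resized to 256 × 64 px. It is not the SDK implementation: the function name `eye_patch_box`, the landmark format, and the `margin` padding factor are assumptions made for this example.

```python
from typing import List, Tuple

PATCH_W, PATCH_H = 256, 64  # eye patch size stated in the text


def eye_patch_box(eye_landmarks: List[Tuple[float, float]],
                  margin: float = 1.5) -> Tuple[float, float, float, float]:
    """Return (left, top, width, height) of a 4:1 box around the eye landmarks.

    `margin` is a hypothetical padding factor, not an SDK parameter.
    The landmarks are assumed to come from a pose-normalized frame.
    """
    xs = [x for x, _ in eye_landmarks]
    ys = [y for _, y in eye_landmarks]
    # Center the box on the bounding box of the landmarks.
    cx = (min(xs) + max(xs)) / 2.0
    cy = (min(ys) + max(ys)) / 2.0
    # Pad the horizontal extent, then derive the height from the 256:64 ratio.
    width = (max(xs) - min(xs)) * margin
    height = width * PATCH_H / PATCH_W
    return (cx - width / 2.0, cy - height / 2.0, width, height)
```

Fixing the aspect ratio before resizing avoids distorting the eyes when the crop is scaled to the network input size.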

Based on these embeddings, the decoder redirects the gaze in the input patch so that the eyes appear to look forward. The last stage of the pipeline blends the eye patch back into the original video frame with an inverse transformation.

The outputs of the pipeline are the head pose, the gaze angles, and the image with redirected eye gaze. The pipeline can also be used in a gaze-estimation-only mode, in which redirection is turned off.

The technology redirects a user’s gaze to the front and center. To keep the experience natural, the algorithm reduces the redirection effect as the original eye gaze drifts farther from the center. Redirection is also turned off when the head is rotated beyond a predetermined threshold, outside of which natural-looking redirection is not feasible.
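One way to picture this behavior is as a blend weight that falls from 1 to 0 as the gaze moves away from the camera, and that is forced to 0 when the head is turned too far. The sketch below is only an illustration under assumed values: the linear falloff, the thresholds `full_until`, `off_at`, and `head_limit`, and both function names are hypothetical and are not taken from the SDK.

```python
import math


def redirection_strength(gaze_pitch: float, gaze_yaw: float,
                         head_pitch: float, head_yaw: float,
                         full_until: float = 10.0,
                         off_at: float = 20.0,
                         head_limit: float = 30.0) -> float:
    """Return a blend weight in [0, 1]; all angles are in degrees.

    All three thresholds are illustrative assumptions.
    """
    # Head rotated too far: redirection is switched off entirely.
    if max(abs(head_pitch), abs(head_yaw)) > head_limit:
        return 0.0
    # Angular distance of the original gaze from the camera direction.
    offset = math.hypot(gaze_pitch, gaze_yaw)
    if offset <= full_until:
        return 1.0
    if offset >= off_at:
        return 0.0
    # Linear falloff between full redirection and no redirection.
    return 1.0 - (offset - full_until) / (off_at - full_until)


def blend_gaze(original_deg: float, strength: float) -> float:
    """Pull a gaze angle toward the camera (0 degrees) by `strength`."""
    return original_deg * (1.0 - strength)
```

With these assumed thresholds, a gaze 15 degrees off center receives half strength, so `blend_gaze(15.0, 0.5)` leaves the displayed gaze at 7.5 degrees rather than snapping it to the camera.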

NVIDIA Maxine Eye Contact model architecture

The architecture of the eye contact network consists of a transforming encoder and decoder structure. The encoder encodes the image content into latent representations of the following factors:

- Non-subject-related factors such as environmental lighting, shadows, white balance and hue, and blurriness.

- Subject-related factors such as skin color, face and eye shape, eyeglasses, and eye gaze.

- Head pose.

Additionally, the encoder predicts the “state” \(R_i\) of each of these latent factors \(z_i\), codified by one- or two-dimensional rotation angles.

In our design, a rotation applied to an individual latent factor produces a corresponding monotonic change in the appearance of the image. For example, to alter the gaze, the latent factor related to the gaze needs to be transformed. We then input all the internal representations (original and transformed) into a decoder network to create the final redirected eye image.
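The idea of transforming a latent factor by a rotation can be sketched in a few lines. This toy example assumes the simplest case: a factor whose state is a single angle and whose embedding is a list of 2D vectors. The names `rotate` and `redirect_factor` are hypothetical, and the real network's embedding layout is not described in this post.

```python
import math
from typing import List, Tuple

Vec2 = Tuple[float, float]


def rotate(embedding: List[Vec2], angle_deg: float) -> List[Vec2]:
    """Rotate every 2D vector of a latent embedding by the same angle."""
    a = math.radians(angle_deg)
    c, s = math.cos(a), math.sin(a)
    return [(c * x - s * y, s * x + c * y) for x, y in embedding]


def redirect_factor(embedding: List[Vec2], estimated_deg: float,
                    target_deg: float = 0.0) -> List[Vec2]:
    """Move a factor from its estimated state to the target state.

    Undoing the estimated rotation and applying the target rotation is
    the single rotation by (target - estimated) for one-angle states.
    """
    return rotate(embedding, target_deg - estimated_deg)
```

Only the gaze factor would be transformed in this way; the other factors would be passed to the decoder unchanged, which is why disentanglement between factors matters.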

Compared with existing state-of-the-art approaches, our algorithm provides the best tradeoff between accuracy and inference time, where accuracy is measured in terms of gaze redirection, gaze disentanglement from other factors, and perceptual image quality.

Maintaining eye color

Preservation of eye color is one of the key challenges for any gaze redirection algorithm. Our eye contact network has been trained on a large and diverse dataset of about 4 million images. Roughly 25% are synthetically generated to add diversity in eye color and shape.

In addition, our network is trained using several loss functions, which contribute to accurate redirection of the eyes. The prominent loss functions used in redirection are:

- Reconstruction loss: We guide the generation of the redirected images with a pixel-wise L1 reconstruction loss between the generated image and the target image.

\(L_R(\tilde{X_t}, X_t) = \frac{1}{|X_t|} \|\tilde{X_t} - X_t\|_1\)

- Functional loss: We use a functional loss, which prioritizes the minimization of task-relevant inconsistencies between the generated and target images, such as the mismatch in iris positions.

This is defined through an L2 loss between the features of the generated and target images.

\(L_{F_{feature}}(\tilde{X_t}, X_t) = \sum_{i=1}^{5} \frac{1}{|\psi_i(X_t)|} \|\psi_i(\tilde{X_t}) - \psi_i(X_t)\|_2\)

- Disentanglement loss: Individual environmental and physical factors should ideally be disentangled, so that changing a subset of the factors does not alter any of the others in the redirected image.

We encourage the disentanglement between the encoded factors by first randomly transforming a subset of the factors to create a mixed-factor representation. This is formulated as:

\(f_{mix} = \{f_{mix}^1, f_{mix}^2, \ldots, f_{mix}^N\}, \quad f_{mix}^j = s f_{i}^j + (1-s)\tilde{f_{t}^{j}}, \quad s \sim \{0,1\}\)

The full disentanglement loss is defined as the discrepancy between the mixed and recovered embeddings, as well as the error between the gaze and head labels before and after the process.
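The loss terms above can be written out on plain Python lists to make the formulas concrete. This is a minimal sketch, not the training code: images and features are flattened lists of floats, the feature extractors \(\psi_i\) are passed in as arbitrary callables, and the names `l1_reconstruction_loss`, `feature_loss`, and `mix_factors` are chosen for this example only.

```python
import math
import random
from typing import Callable, List, Sequence

Vector = Sequence[float]


def l1_reconstruction_loss(generated: Vector, target: Vector) -> float:
    """Pixel-wise L1 distance, normalized by the number of target pixels."""
    return sum(abs(g - t) for g, t in zip(generated, target)) / len(target)


def feature_loss(generated: Vector, target: Vector,
                 extractors: List[Callable[[Vector], Vector]]) -> float:
    """Sum over extractors of the L2 feature distance, each normalized
    by the size of the target feature vector."""
    total = 0.0
    for psi in extractors:
        fg, ft = psi(generated), psi(target)
        l2 = math.sqrt(sum((a - b) ** 2 for a, b in zip(fg, ft)))
        total += l2 / len(ft)
    return total


def mix_factors(input_factors: List[Vector], target_factors: List[Vector],
                rng: random.Random) -> List[Vector]:
    """Build f_mix: for each factor j, draw s from {0, 1} and keep the
    input factor when s = 1, otherwise the transformed target factor."""
    mixed = []
    for f_i, f_t in zip(input_factors, target_factors):
        s = rng.randint(0, 1)
        mixed.append(f_i if s == 1 else f_t)
    return mixed
```

Because \(s\) is binary, the weighted sum in the mixing formula reduces to selecting one of the two factors, which is what `mix_factors` does.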

Setting a working range

As described earlier, the input to the eye contact network is a scale-normalized eye patch. We observed that redirection is reliable and looks most natural within a cone of around 20 degrees in pitch and yaw. This is the recommended working range of the feature.

The following are examples of successful gaze redirection for eye contact.

Addressing transitional drop-off

It is common, and often reflexive, for the eyes to make rapid movements called saccades.

Beyond the working range, which the gaze may leave during a saccade, for instance, gaze redirection appears less natural and is shut off.

However, abruptly turning off the feature results in a sudden shift in the iris, which is not desirable. To solve this problem, we introduced a transition region where the eyes are redirected to go from looking at the camera to the actual gaze angle in a smooth manner.

This drop-off is performed incrementally as a function of the gradient between the current angle of redirection and the actual gaze direction. The speed of this transition is set to mimic the typical motion of human eyes. When the angle of redirection is sufficiently close to the estimated gaze angle, the feature is turned off completely.
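A per-frame update along these lines could look like the following sketch. It reads the "gradient" as the difference between the displayed gaze angle and the actual gaze angle, and moves the displayed angle toward the actual one by a clamped, proportional step. The function name `drop_off_step` and the values of `gain`, `max_step_deg`, and `off_eps_deg` are assumptions for illustration, not SDK parameters.

```python
from typing import Tuple


def drop_off_step(displayed_deg: float, actual_deg: float,
                  gain: float = 0.5,
                  max_step_deg: float = 3.0,
                  off_eps_deg: float = 0.5) -> Tuple[float, bool]:
    """Advance the transition by one frame.

    Returns (new displayed angle, whether redirection is still active).
    `max_step_deg` caps the per-frame change so the motion stays at a
    plausible eye speed; all three constants are hypothetical.
    """
    gap = actual_deg - displayed_deg
    # Close enough to the real gaze: show it as-is and turn the effect off.
    if abs(gap) <= off_eps_deg:
        return actual_deg, False
    # Proportional step toward the real gaze, clamped to the speed limit.
    step = max(-max_step_deg, min(max_step_deg, gain * gap))
    return displayed_deg + step, True
```

Calling this once per frame, starting from the camera direction, walks the displayed gaze smoothly out to the real gaze instead of jumping there in a single frame.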

Handling eye invisibility

There are times when a person’s eyes can be completely or partially obscured due to blinking, movement, or a dynamic environment. For example, a person’s hands or other objects can block the eyes from the camera view.

Our eye contact pipeline is capable of detecting and preserving eye blinks. The algorithm also turns off the gaze redirection effect after detecting an occlusion indicated by the low confidence of landmark estimation.
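The occlusion check can be pictured as a gate driven by the landmark confidence. The sketch below adds hysteresis (two thresholds) so the effect does not flicker when the confidence hovers near a single cutoff. This is an assumption made for the example: the post does not state how the threshold is applied, and the class name `OcclusionGate` and both threshold values are hypothetical. Blink handling is not covered here.

```python
class OcclusionGate:
    """Enable or disable redirection from per-frame landmark confidence."""

    def __init__(self, off_below: float = 0.4, on_above: float = 0.6) -> None:
        # Illustrative thresholds, not SDK values.
        self.off_below = off_below
        self.on_above = on_above
        self.enabled = True

    def update(self, confidence: float) -> bool:
        """Feed one frame's confidence; return whether to redirect."""
        if self.enabled and confidence < self.off_below:
            self.enabled = False  # eyes likely occluded
        elif not self.enabled and confidence > self.on_above:
            self.enabled = True   # landmarks are trustworthy again
        return self.enabled
```

For a confidence sequence of 0.9, 0.5, 0.3, 0.5, 0.7, the gate stays on for the first two frames, switches off at 0.3, stays off at 0.5, and switches back on at 0.7.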

Optimizing performance

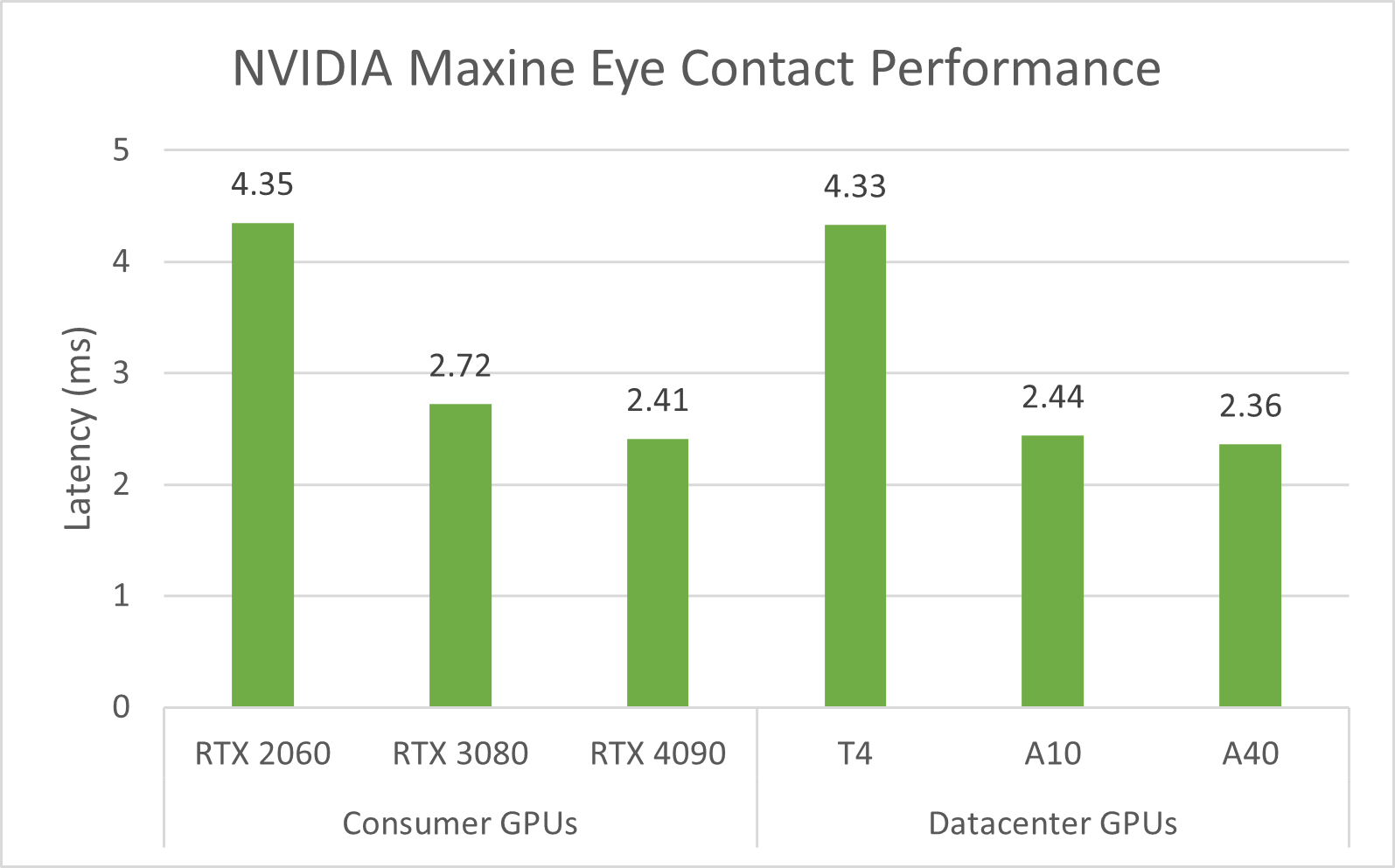

The pipeline is GPU accelerated using TensorRT. Our implementation performs real-time inference on NVIDIA GPUs with less than 5 ms latency per frame. It is performance optimized and supports the simultaneous execution of multiple stream instances for data center use cases, in addition to NVIDIA RTX desktops and notebooks.

Download now

SDK for developers

NVIDIA Maxine Eye Contact is available for download in the AR SDK for both Windows and Linux. The SDK includes documentation on API usage and a sample app to help you get started with integration into any application. Parameters such as temporal filtering and eye size sensitivity are controllable through the SDK API.

NVIDIA UCF Microservice for developers

NVIDIA Maxine Eye Contact will also be available as part of the Video Effects Microservice in the NGC registry. The microservice is NVIDIA UCF compliant and can be combined with other microservices to build multimodal AI applications.

NVIDIA Broadcast App for consumers

For those who do not want to build custom applications but would like to access this feature, it is now available in the NVIDIA Broadcast App. It can be enabled in video conferencing and video broadcast applications by selecting the NVIDIA Broadcast Camera.

Contribute to our growth

As we continue to improve this feature in future releases, you can help us by contributing to NVIDIA Maxine and the NVIDIA Broadcast App.