When healthcare and life science data is analyzed far from where it is collected, bandwidth congestion, unreliable networks, and latency can degrade outcomes, which matters most when clinical decisions are time-sensitive. To address these concerns, forward-thinking healthcare organizations are adopting edge computing, in which data is analyzed and acted upon at the point of collection.

AI-powered instruments, medical devices, and technologies at the edge are transforming healthcare and life sciences by bringing data processing and storage closer to the source and providing real-time insights to clinical and research teams. This is especially important for healthcare delivery, where on-demand insights help teams make urgent decisions about patients.

Throughout the hospital, AI-powered edge technologies are already making a difference by helping to make surgeries less invasive, reducing radiation exposure from X-ray machines, and monitoring patients at risk of falling.

According to Deloitte and MIT Technology Review, 30% of all globally stored data comes from healthcare and life sciences. Given that volume, it is becoming imperative to collect data and derive insights in real time to support faster clinical decisions.

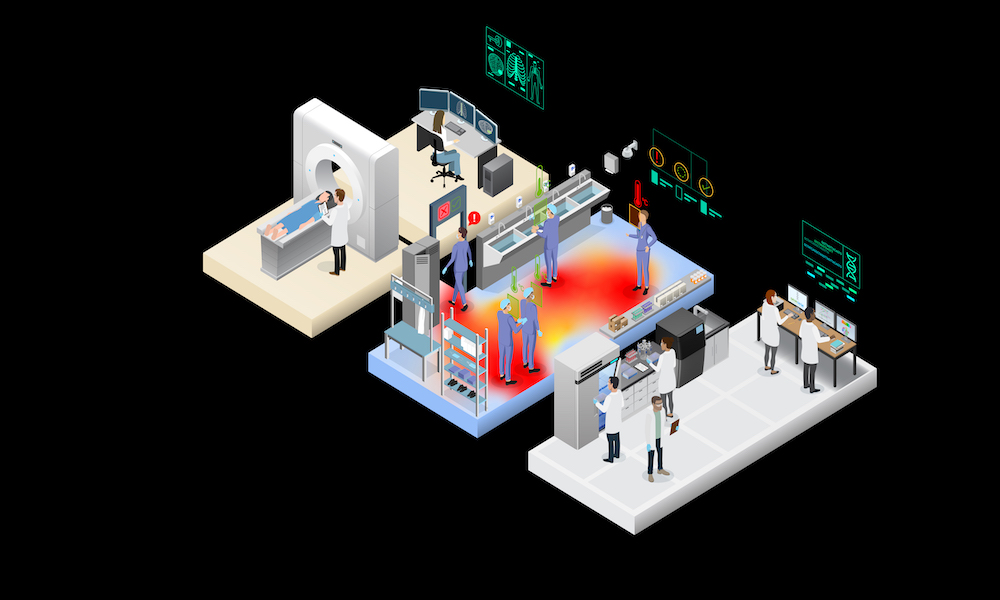

In US hospitals today, 10-15 edge devices are connected to each hospital bed, monitoring the patient’s status in real time. By 2025, it is estimated that 75% of medical data will be generated at the edge. Additionally, the global market for connected medical devices is expected to grow to $158 billion in 2022, up from $41 billion in 2017.

To scale virtual patient services, manage medical devices, and support smart hospital technologies, health systems must now process massive amounts of data closer to the devices that gather it, reducing latency and enabling real-time decision-making. By bringing AI workflows closer to the source, edge computing provides many advantages to healthcare:

- Robust infrastructure: Processing data onsite through edge devices allows healthcare institutions to keep their processes moving without disruption, even during network outages.

- Ultra-low latency processing: High throughput and real-time insights, such as support for hand-eye coordination or alerts about where critical organs are during a procedure, are important for ensuring safer surgeries. Processing data at the edge provides near-instantaneous feedback.

- Enhanced security: Keeping data on the device and running inference at the edge means that protected health information (PHI) stays local and is less vulnerable to attacks and data breaches.

- Bandwidth savings: AI processing at the edge reduces the need to send high-bandwidth data such as video streams across the network or off-premises.

- Harnessing of operational technology domain knowledge: Empowering domain experts to control the data processing AI parameters enables them to create a highly adaptive and outcome-focused solution.
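The bandwidth-savings point above can be illustrated with a rough back-of-the-envelope calculation. This is only a sketch: the bed count, per-stream video bitrate, alert rate, and alert size below are assumed, illustrative values, not figures from any real deployment, and the function names are hypothetical.

```python
def streaming_mbps(num_beds: int, mbps_per_stream: float) -> float:
    """Sustained uplink needed to send every bedside video stream off-premises."""
    return num_beds * mbps_per_stream


def edge_alert_mbps(num_beds: int, alerts_per_bed_per_hour: float,
                    bytes_per_alert: int) -> float:
    """Average uplink needed when only small alert messages leave the edge device."""
    bits_per_hour = num_beds * alerts_per_bed_per_hour * bytes_per_alert * 8
    return bits_per_hour / 3600 / 1_000_000


# Assumed, illustrative numbers (not measurements).
BEDS = 1000             # monitored beds
VIDEO_MBPS = 4.0        # bitrate of one compressed video stream
ALERTS_PER_HOUR = 60    # alert or status messages per bed per hour
ALERT_BYTES = 1000      # size of one alert message

cloud = streaming_mbps(BEDS, VIDEO_MBPS)
edge = edge_alert_mbps(BEDS, ALERTS_PER_HOUR, ALERT_BYTES)
print(f"stream everything: {cloud:.0f} Mbps, alerts only: {edge:.3f} Mbps")
```

Under these assumptions the video-streaming approach needs several orders of magnitude more uplink capacity than sending only the results of edge inference. The exact ratio depends entirely on the chosen inputs.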

Medical devices and instruments

Modern medical instruments at the edge are becoming AI-enabled, with accelerated computing built into regulatory-approved devices. The resulting capabilities include improved medical image acquisition and reconstruction, workflow optimizations for diagnosis and therapy planning, measurements of organs and tumors, surgical therapy guidance, and real-time visualizations and monitoring.

The modern operating room is a complex environment that requires teams to process, coordinate, and act upon several information sources at one time.

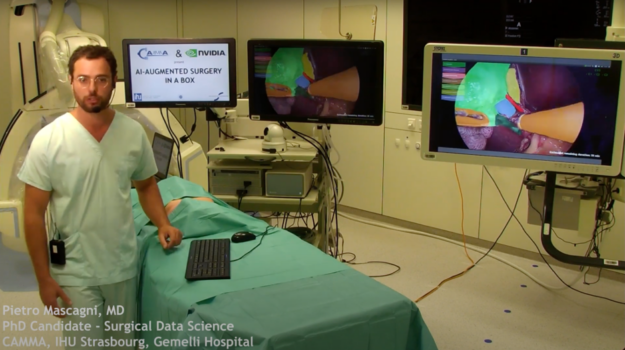

An example of a surgery where edge AI tools are helping is laparoscopy. A laparoscopy is a minimally invasive surgery performed in the abdomen or pelvis using small incisions, surgical instruments, and a laparoscope. A laparoscope is a small tube that has a light source and a camera, which relays images of the inside of the abdomen or pelvis to a television monitor.

Ultra-low latency streaming of surgical video into AI-powered data processing workflows helps surgeons find anomalies that need to be removed, make automatic measurements, track surgical tools, monitor organs that need to be preserved, and detect bleeding in real time.

AI-augmented medical devices bring surgeons data-driven insights on-demand. These insights can help make procedures as minimally invasive as possible and improve patient recovery times. By building AI models on sensor and streaming data using development kits, teams can glean insights immediately and even manage fleets of distributed medical instruments remotely.

Outside of the operating room, many different classes of medical and life science instruments also benefit from edge computing. These include CT and MRI scanners, ultrasound devices, radiation therapy systems, cryo-electron microscopes, and DNA sequencers.

Next-generation sequencing (NGS) refers to large-scale DNA sequencing technologies that find variants in the nucleotide bases that compose our DNA. The order of these bases encodes genes, which in turn code for proteins. When bases in genes are missing or out of order, protein production can be affected, which can disrupt normal development or cause a health condition. Some NGS instruments are now the size of a printer or a handheld device and can be run in patient waiting rooms or in the field, enabling real-time sequencing at the edge to help detect these disease-causing variants in our DNA.
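As a toy illustration of what "finding variants" means, the sketch below compares a sample sequence against a reference of the same length and reports the positions where the bases differ. It is deliberately simplified and is not how production NGS pipelines work: real variant calling involves read alignment, quality scores, and statistical models. The function name and the example sequences are made up for illustration.

```python
from typing import List, Tuple


def find_substitutions(reference: str, sample: str) -> List[Tuple[int, str, str]]:
    """Return (position, reference_base, sample_base) for each mismatched base.

    Assumes both sequences are already aligned and have equal length,
    so only single-base substitutions can be detected.
    """
    if len(reference) != len(sample):
        raise ValueError("sequences must be pre-aligned and of equal length")
    return [
        (i, ref_base, sample_base)
        for i, (ref_base, sample_base) in enumerate(zip(reference, sample))
        if ref_base != sample_base
    ]


# Made-up sequences: the sample differs from the reference at index 3 (G -> A).
variants = find_substitutions("ATGGCC", "ATGACC")
print(variants)
```

Missing or inserted bases change the sequence length, so detecting them requires an alignment step that this sketch omits.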

Smart hospitals and patient monitoring

Smart hospitals are also integrating edge computing and AI workflows into technologies such as patient monitoring, patient screening, conversational AI, heart rate estimation, CT scanners, and much more. These technologies can help identify a patient who is at risk of falling out of a hospital bed and notify the nursing staff.

Human pose estimation is a popular computer vision task that estimates key points on a person’s body such as eyes, arms, and legs. This can help classify a person’s actions, such as standing, sitting, walking, or lying down. Understanding the context of what a person might be doing has broad application across a wide range of industries. In healthcare, this can be used to monitor patients and alert medical personnel if a patient needs medical attention.
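To show how key points might be turned into an action label, here is a minimal rule-based sketch. It assumes a pose estimation model has already produced 2D image coordinates (with y increasing downward) for a shoulder, hip, and knee. The key point names, the 60-degree threshold, and the 0.5 ratio are hypothetical choices for illustration. Production systems typically use trained classifiers over many more key points.

```python
import math
from typing import Dict, Tuple

Point = Tuple[float, float]  # (x, y) in image pixels, y grows downward


def torso_angle_from_vertical(shoulder: Point, hip: Point) -> float:
    """Angle in degrees between the shoulder-hip line and the vertical axis."""
    dx = hip[0] - shoulder[0]
    dy = hip[1] - shoulder[1]
    return math.degrees(math.atan2(abs(dx), abs(dy)))


def classify_posture(keypoints: Dict[str, Point]) -> str:
    """Heuristic posture label from three key points (illustrative thresholds)."""
    shoulder, hip, knee = keypoints["shoulder"], keypoints["hip"], keypoints["knee"]
    # A nearly horizontal torso suggests the person is lying down.
    if torso_angle_from_vertical(shoulder, hip) > 60.0:
        return "lying down"
    torso_len = math.hypot(hip[0] - shoulder[0], hip[1] - shoulder[1])
    # When seated, the knee sits at roughly hip height instead of well below it.
    if knee[1] - hip[1] < 0.5 * torso_len:
        return "sitting"
    return "standing"


standing = {"shoulder": (100, 100), "hip": (100, 200), "knee": (100, 280)}
sitting = {"shoulder": (100, 100), "hip": (100, 200), "knee": (160, 210)}
lying = {"shoulder": (100, 300), "hip": (200, 310), "knee": (280, 312)}
for name, kp in [("standing", standing), ("sitting", sitting), ("lying", lying)]:
    print(name, "->", classify_posture(kp))
```

A bedside monitor could feed labels like these into an alerting rule, for example flagging a patient who moves from lying down to standing without assistance.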

Live-streaming video feeds from thousands of beds to a remote data center comes with many challenges, including protecting patient confidentiality, insufficient network bandwidth, and the risk of network downtime, which can disrupt patient monitoring. Rather than streaming all of this data to a remote data center, hospitals can process it at the edge, by the bedside, where insights and alerts can be generated on demand. This provides real-time data analytics for responding faster to patients in distress and ensures a robust, fault-tolerant capability.
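One way to picture the fault-tolerance argument is a store-and-forward pattern: the bedside device always raises the alert locally, and forwards a copy to a central system whenever the network is available. The sketch below is a hypothetical illustration of that pattern, not a description of any specific product. The class name, callbacks, and alert strings are all assumptions.

```python
from collections import deque
from typing import Callable, Deque, List


class EdgeAlertBuffer:
    """Hypothetical store-and-forward buffer for bedside alerts."""

    def __init__(self, notify_local: Callable[[str], None],
                 send_upstream: Callable[[str], bool]) -> None:
        self.notify_local = notify_local    # e.g. trigger a nurse-station alarm
        self.send_upstream = send_upstream  # returns False if the network is down
        self.pending: Deque[str] = deque()

    def raise_alert(self, alert: str) -> None:
        # Local notification never depends on the network.
        self.notify_local(alert)
        self.pending.append(alert)
        self.flush()

    def flush(self) -> None:
        # Forward queued alerts in order; stop at the first failure and retry later.
        while self.pending:
            if not self.send_upstream(self.pending[0]):
                break
            self.pending.popleft()


# Simulated environment: the network is down for the first alert.
network_up = False
local_log: List[str] = []
upstream_log: List[str] = []


def fake_upstream(alert: str) -> bool:
    if network_up:
        upstream_log.append(alert)
    return network_up


alerts = EdgeAlertBuffer(local_log.append, fake_upstream)
alerts.raise_alert("bed 12: fall risk")  # delivered locally, queued for upstream
network_up = True
alerts.raise_alert("bed 7: fall risk")   # both alerts now reach upstream, in order
```

In this simulation the nursing staff is notified immediately in both cases, and the central record catches up once connectivity returns.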

Healthcare companies are automating their processes with IoT sensors, which generate vast amounts of data, and then adding AI tools to extract insights for better clinical decision-making. Computing at the edge of the network is essential for speed, scale, reliability, security, performance, and real-time insights.

Learn more about NVIDIA healthcare solutions and about the NVIDIA Clara AGX developer kit for developing AI models on sensor and streaming data for medical instruments and devices.