NVIDIA GTC starts on April 12th with more than 1,400 sessions, including sessions on the latest deep learning technologies in conversational AI, recommender systems, computer vision, and video streaming.

Here’s a preview of some of the top AI/DL sessions at GTC.

- Building and Deploying a Custom Conversational AI App with NVIDIA Transfer Learning Toolkit and Riva

Tailoring the deep learning models in a conversational AI pipeline to the needs of your enterprise is time-consuming. Deployment for a domain-specific application typically requires several cycles of re-training, fine-tuning, and deploying the model until it satisfies the requirements. In this session, we will walk you through the process of customizing ASR and NLP pipelines to build a truly customized, production-ready conversational AI application that is fine-tuned to your domain.

Arun Venkatesan, Product Manager, Deep Learning Software, NVIDIA

Nikhil Srihari, Deep Learning Software Technical Marketing Engineer, NVIDIA

- Accelerated ETL, Training and Inference of Recommender Systems on the GPU with Merlin, HugeCTR, NVTabular, and Triton

In this talk we’ll share the Merlin framework, consisting of NVTabular for ETL, HugeCTR for training, and Triton for inference serving. Merlin accelerates recommender systems on GPU, speeding up common ETL tasks, training of models, and inference serving by ~10x over commonly used methods. Beyond providing better performance, these libraries are also designed to be easy to use and integrate with existing recommendation pipelines.

Even Oldridge, Senior Manager, Recommender Systems Framework Team, NVIDIA

- Accelerating AI Workflows with NGC

This session will walk through building a conversational AI solution using the artifacts from the NGC catalog, including a Jupyter notebook, so the process can be repeated offline. It will also cover the benefits of using NGC software throughout the AI development journey.

Adel El Hallak, Director of Product Management for NGC, NVIDIA

Chris Parsons, Product Manager, NGC, NVIDIA

- NVIDIA Maxine: An Accelerated Platform SDK for Developers of Video Conferencing Services

Connect with experts in this session to learn about the latest updates to NVIDIA Maxine, such as how applications based on Maxine can use AI video compression to cut video bandwidth to one-tenth of what H.264 requires. Also see the latest innovations from NVIDIA research, such as face alignment, gaze correction, face re-lighting, and real-time translation, in addition to capabilities such as super-resolution, noise removal, closed captioning, and virtual assistants.

Davide Onofrio, Technical Marketing Engineer Lead, NVIDIA

Abhijit Patait, Director, System Software, NVIDIA

Abhishek Sawarkar, Deep Learning Software Technical Marketing Engineer, NVIDIA

Tanay Varshney, Technical Marketing Engineer, Deep Learning, NVIDIA

Alex Qi, Product Manager, AI Software, NVIDIA

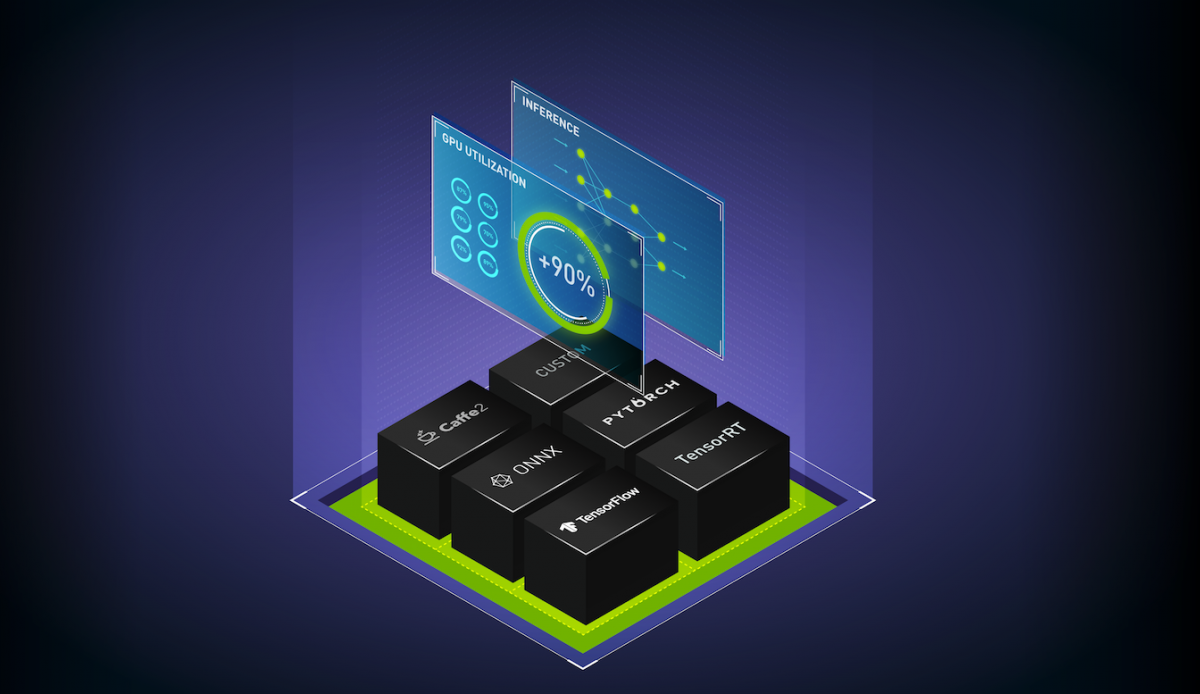

- Easily Deploy AI Deep Learning Models at Scale with Triton Inference Server

Triton Inference Server is open-source model serving software that simplifies the deployment of AI models at scale in production. It lets teams deploy trained AI models from any framework on any GPU- or CPU-based infrastructure. Learn about high-performance inference serving with Triton’s concurrent execution, dynamic batching, and integrations with Kubernetes and other tools.

Mahan Salehi, Deep Learning Software Product Manager, NVIDIA

Register today for GTC or explore more deep learning sessions to learn about the latest breakthroughs in AI applications for computer vision, conversational AI, and recommender systems.