To make Siri great, Apple hired several artificial intelligence experts three years ago to apply deep learning to its intelligent mobile assistant.

The team began training a neural net to replace the original Siri. “We have the biggest and baddest GPU farm cranking all the time,” says Alex Acero, who heads the speech team.

“The error rate has been cut by a factor of two in all the languages, more than a factor of two in many cases,” says Acero. “That’s mostly due to deep learning and the way we have optimized it.”

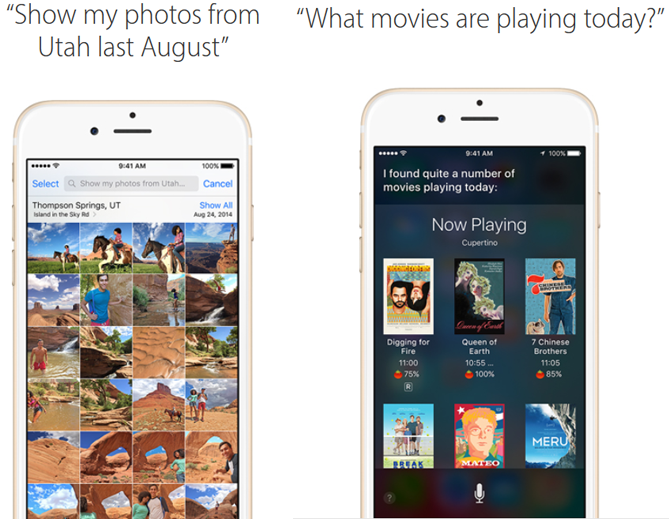

Besides Siri, deep learning and neural nets are now found throughout Apple’s products and services, including detecting fraud on the Apple store, recognizing faces and locations in your photos, and identifying the most useful feedback among thousands of reports from beta testers.

“The typical customer is going to experience deep learning on a day-to-day level that [exemplifies] what you love about an Apple product,” says Phil Schiller, senior vice president of worldwide marketing at Apple. “The most exciting [instances] are so subtle that you don’t even think about it until the third time you see it, and then you stop and say, How is this happening?”
