The adoption of AI in hospitals is accelerating rapidly. As computational capability keeps increasing even with Moore’s law broken, models that save lives and make clinicians more efficient and effective are becoming the norm. Within the next five years, we will see the rise of the “smart hospital,” augmented by workflows incorporating thousands of AI models.

Smart hospitals adopting AI applications face big challenges in IT and infrastructure. Healthcare places specific restrictions on how data is transmitted, and respecting patient data privacy is paramount. Flexible compute with a “write once, run anywhere” model makes it possible to deploy state-of-the-art applications at the edge in hospitals. Each application demands different compute capabilities for HPC, AI, and visualization.

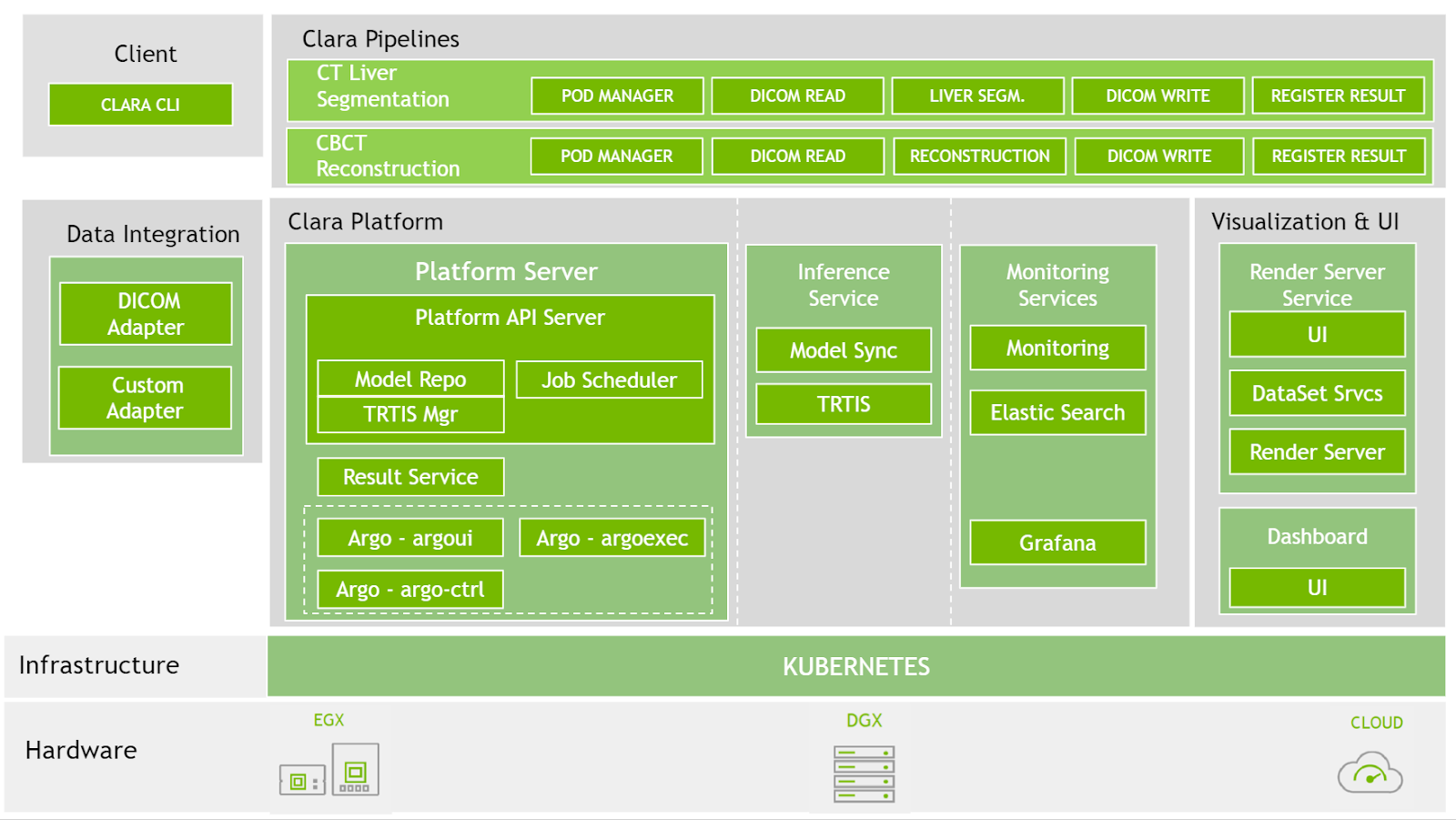

The NVIDIA Clara Deploy SDK answers this call by providing a reference framework for the deployment of multi-AI, multi-modality workflows in smart hospitals: one architecture orchestrating and scaling imaging, genomics and video processing workloads.

The most pressing problem in deploying AI models is architecting an inference platform that can keep pace with the rapidly changing AI ecosystem: a growing number of processing requests, massive healthcare datasets, and diverse processing pipelines that run in a heterogeneous computing environment.

During GTC Digital 2020, we made available the release candidate for the latest version of the Clara Deploy SDK. It includes platform features and reference applications that give developers and data scientists a unified foundation for delivering intelligent workloads and realizing the vision of the smart hospital. Figure 2 shows the Clara Deploy SDK technology stack.

Platform features

The latest capabilities of the Clara Deploy SDK include the following:

- Strongly typed operator interface

- Scheduler

- Model repository

- CLI load generator

- EGX support

- Fast I/O integrated with the Clara Platform driver

- Distribution of Clara Deploy in NGC

Strongly typed operator interface

In a Clara Deploy SDK pipeline, operators are used to perform each operation. To simplify development and eliminate the guesswork in connecting operators to one another, these operators are strongly typed. You can be confident that what you build fits together seamlessly.

The Clara Deploy SDK supports pipeline composition using operators that conform to a signature, or well-defined interface. This enables the following functionality:

- Pre-runtime validation of pipelines

- Compatibility of concatenated operators in terms of data type (where specified)

- Allocation of memory for the pipeline using Fast I/O through the CPDriver
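To illustrate what pre-runtime validation of a typed pipeline can look like, here is a minimal sketch in Python. It is not the Clara Deploy operator interface: the `Operator` class, the `validate_pipeline` function, and the type strings are all hypothetical names chosen for this example. It only shows the idea of checking that each operator's declared output type matches the next operator's declared input type, and skipping the check where a type is not specified.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Operator:
    """Hypothetical operator signature: a name plus declared input/output types."""
    name: str
    input_type: Optional[str]    # None means "type not specified"
    output_type: Optional[str]


def validate_pipeline(operators: List[Operator]) -> List[str]:
    """Return the type mismatches between consecutive operators.

    A connection is checked only when both sides declare a type.
    """
    errors = []
    for upstream, downstream in zip(operators, operators[1:]):
        out_t, in_t = upstream.output_type, downstream.input_type
        if out_t is not None and in_t is not None and out_t != in_t:
            errors.append(
                f"{upstream.name} -> {downstream.name}: "
                f"produces {out_t}, expects {in_t}"
            )
    return errors


# Illustrative operators; the names and type strings are made up.
reader = Operator("dicom-reader", None, "volume/float32")
segmenter = Operator("segmenter", "volume/float32", "mask/uint8")
writer = Operator("dicom-writer", "volume/float32", None)

good = validate_pipeline([reader, segmenter])          # no mismatches
bad = validate_pipeline([reader, segmenter, writer])   # mask fed to a volume input
```

Because the check runs on the declared signatures alone, a mismatch such as the one in `bad` can be reported before any job is started.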

Scheduler

Hospitals use priorities to triage patients appropriately based on severity of symptoms. This concept has been introduced as an alpha feature in the Clara Deploy SDK, so that studies of higher urgency can be processed ahead of other studies. Queuing gives the Clara Deploy SDK the resiliency necessary for you to build fault-tolerant hospital-grade systems that meet the needs of future AI.

The Clara platform has a scheduler that is responsible for managing resources allocated to the platform for executing pipeline jobs, and other resources such as render servers. It is responsible for queuing and scheduling pipeline job requests based on available resources. When the system doesn’t have resources to fulfill the resource requirements of a queued job, the scheduler retains the pending job until enough resources become available.
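The queuing behavior described above can be sketched with a priority queue that holds jobs until enough resources are free. This is an illustration only, not the Clara scheduler: the `Scheduler` and `Job` classes, the use of GPU count as the only resource, and the convention that a lower number means higher urgency are all assumptions made for the example.

```python
import heapq
from dataclasses import dataclass, field
from typing import List


@dataclass(order=True)
class Job:
    priority: int                      # assumption: lower value = more urgent
    sequence: int                      # tie-breaker: submission order
    name: str = field(compare=False)
    gpus: int = field(compare=False)   # assumption: GPUs are the only resource


class Scheduler:
    """Hypothetical resource-aware priority scheduler."""

    def __init__(self, total_gpus: int) -> None:
        self.free_gpus = total_gpus
        self._queue: List[Job] = []
        self._sequence = 0

    def submit(self, name: str, priority: int, gpus: int) -> None:
        heapq.heappush(self._queue, Job(priority, self._sequence, name, gpus))
        self._sequence += 1

    def dispatch(self) -> List[str]:
        """Start queued jobs in priority order while resources allow.

        The most urgent job stays at the head of the queue until it fits.
        """
        started = []
        while self._queue and self._queue[0].gpus <= self.free_gpus:
            job = heapq.heappop(self._queue)
            self.free_gpus -= job.gpus
            started.append(job.name)
        return started

    def release(self, gpus: int) -> None:
        """Return resources to the pool when a job finishes."""
        self.free_gpus += gpus


scheduler = Scheduler(total_gpus=2)
scheduler.submit("routine-ct", priority=5, gpus=1)
scheduler.submit("stroke-ct", priority=1, gpus=2)
scheduler.submit("routine-mr", priority=5, gpus=1)

first = scheduler.dispatch()    # the urgent study takes both GPUs
scheduler.release(2)            # the urgent study finishes
second = scheduler.dispatch()   # pending jobs run in submission order
```

This sketch never lets a lower-priority job overtake a pending urgent one, even if the smaller job would fit. A real scheduler may choose a different trade-off between utilization and strict ordering.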

Model repository

Managing AI models has been a manual process. With the rise of AI, it may only get more tedious. Not only are there different models for different purposes, but there are also multiple model versions that must be maintained over time.

The Clara Deploy SDK now offers management of AI models for instances of NVIDIA Triton Inference Server. The following aspects of model management are available:

- The ability to store and manage models locally through user inputs

- The ability to pull models in from external stores such as NGC

- The ability to create and manage model catalogs
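As a rough picture of what these capabilities involve, the following sketch tracks several versions per model and groups models into named catalogs. It is a toy in-memory structure, not the Clara Deploy model repository or the Triton API. The `ModelStore` class, its methods, and the example paths are all hypothetical.

```python
from collections import defaultdict
from typing import Dict, List, Set, Tuple


class ModelStore:
    """Hypothetical in-memory model store with versions and catalogs."""

    def __init__(self) -> None:
        # model name -> {version number -> storage location}
        self._versions: Dict[str, Dict[int, str]] = defaultdict(dict)
        # catalog name -> set of model names
        self._catalogs: Dict[str, Set[str]] = defaultdict(set)

    def add_model(self, name: str, version: int, location: str) -> None:
        self._versions[name][version] = location

    def latest(self, name: str) -> Tuple[int, str]:
        """Return (version, location) for the highest registered version."""
        versions = self._versions.get(name)
        if not versions:
            raise KeyError(f"unknown model: {name}")
        newest = max(versions)
        return newest, versions[newest]

    def add_to_catalog(self, catalog: str, name: str) -> None:
        if name not in self._versions:
            raise KeyError(f"unknown model: {name}")
        self._catalogs[catalog].add(name)

    def list_catalog(self, catalog: str) -> List[str]:
        return sorted(self._catalogs.get(catalog, set()))


store = ModelStore()
store.add_model("liver-seg", 1, "/models/liver-seg/1")   # made-up paths
store.add_model("liver-seg", 2, "/models/liver-seg/2")
store.add_model("spleen-seg", 1, "/models/spleen-seg/1")
store.add_to_catalog("abdomen", "liver-seg")
store.add_to_catalog("abdomen", "spleen-seg")

newest_liver = store.latest("liver-seg")
abdomen_models = store.list_catalog("abdomen")
```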

CLI load generator

When developing application pipelines, it is important to be able to simulate expected load. Doing so gives you confidence that your hardware and software architecture can support the estimated load.

The Clara CLI load generator helps simulate hospital workloads by feeding a serial workload to the Clara platform. It enables you to specify the pipeline used to create the jobs, the datasets used as input for the jobs, and the following options:

- The number of jobs to create

- The frequency at which to create them

- The type of dataset (sequential or nonsequential)

- The job priority
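The options above can be pictured as the inputs to a simple job plan. The sketch below does not call the Clara CLI and does not reproduce its flags. The `plan_load` function, its parameter names, and the priority string are hypothetical, and it assumes that "sequential" means datasets are used in order while "nonsequential" means they are picked at random.

```python
import itertools
import random
from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class PlannedJob:
    submit_at: float    # seconds from the start of the run
    pipeline_id: str
    dataset: str
    priority: str


def plan_load(
    pipeline_id: str,
    datasets: Sequence[str],
    num_jobs: int,
    jobs_per_second: float,
    sequential: bool = True,
    priority: str = "normal",   # hypothetical priority label
    seed: int = 0,
) -> List[PlannedJob]:
    """Build a serial submission plan; nothing is actually submitted."""
    if jobs_per_second <= 0:
        raise ValueError("jobs_per_second must be positive")
    rng = random.Random(seed)            # seeded so runs are repeatable
    in_order = itertools.cycle(datasets)
    interval = 1.0 / jobs_per_second
    plan = []
    for i in range(num_jobs):
        dataset = next(in_order) if sequential else rng.choice(datasets)
        plan.append(PlannedJob(i * interval, pipeline_id, dataset, priority))
    return plan


plan = plan_load("example-pipeline", ["study-a", "study-b"], num_jobs=4,
                 jobs_per_second=2.0)
submit_times = [job.submit_at for job in plan]
dataset_order = [job.dataset for job in plan]
```

A driver loop could then sleep until each `submit_at` offset and create the job, which keeps the timing logic separate from the submission mechanism.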

EGX support

Clara is deployable on EGX-managed edge devices for single-node deployments. Using Clara containers and Helm charts hosted in NGC, a Clara Deploy environment can be quickly provisioned.

Fast I/O integrated with the Clara Platform driver

The integrated Fast I/O feature from the Clara Deploy SDK provides an interface to memory resources that are accessible by all operators running in the same pipeline. These memory resources can be used for efficient, zero-copy sharing and passing of data between operators.

Fast I/O allocations can optionally be assigned metadata that describes the resource, such as data type and array size. This metadata and the allocations that it describes can be easily passed between operators using string identifiers.
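The pattern of named allocations that carry metadata can be sketched as follows. This is not the Fast I/O API: the `SharedPool` and `Allocation` classes and the `"volume"` key are hypothetical, and the sketch lives inside a single Python process rather than spanning operator containers. It shows a producer writing into a buffer in place and a consumer finding the same buffer, with its data type and shape, by string identifier.

```python
from dataclasses import dataclass
from typing import Dict, Tuple


@dataclass
class Allocation:
    buffer: bytearray
    dtype: str                 # metadata: element type
    shape: Tuple[int, ...]     # metadata: array size


class SharedPool:
    """Hypothetical registry of named allocations shared by operators."""

    def __init__(self) -> None:
        self._allocations: Dict[str, Allocation] = {}

    def allocate(self, key: str, dtype: str, shape: Tuple[int, ...],
                 itemsize: int) -> Allocation:
        count = 1
        for dim in shape:
            count *= dim
        allocation = Allocation(bytearray(count * itemsize), dtype, shape)
        self._allocations[key] = allocation
        return allocation

    def get(self, key: str) -> Allocation:
        return self._allocations[key]


def producer(pool: SharedPool) -> str:
    allocation = pool.allocate("volume", "uint8", (2, 3), itemsize=1)
    view = memoryview(allocation.buffer)   # a view, not a copy
    view[0] = 42                           # write in place
    return "volume"                        # only the identifier is passed on


def consumer(pool: SharedPool, key: str) -> Tuple[int, str, Tuple[int, ...]]:
    allocation = pool.get(key)
    return allocation.buffer[0], allocation.dtype, allocation.shape


pool = SharedPool()
key = producer(pool)
first_voxel, dtype, shape = consumer(pool, key)
```

Only the string `key` travels between the two functions. The voxel data itself is never copied.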

Distribution of Clara Deploy in NGC

Getting started with the Clara Deploy SDK has never been easier. The SDK can now be installed from NGC, which allows flexible installation options. After the core components are installed, you can pick and choose from over twenty reference pipelines and install them with the Clara CLI.

Reference application pipelines

To help you get started quickly, the Clara Deploy SDK comes with new reference application pipelines that you can use as starting points for your own AI workflows:

- Prostate segmentation pipeline

- Multi-AI pipeline

- 3D image processing pipeline using shared memory

- DeepStream batch pipeline

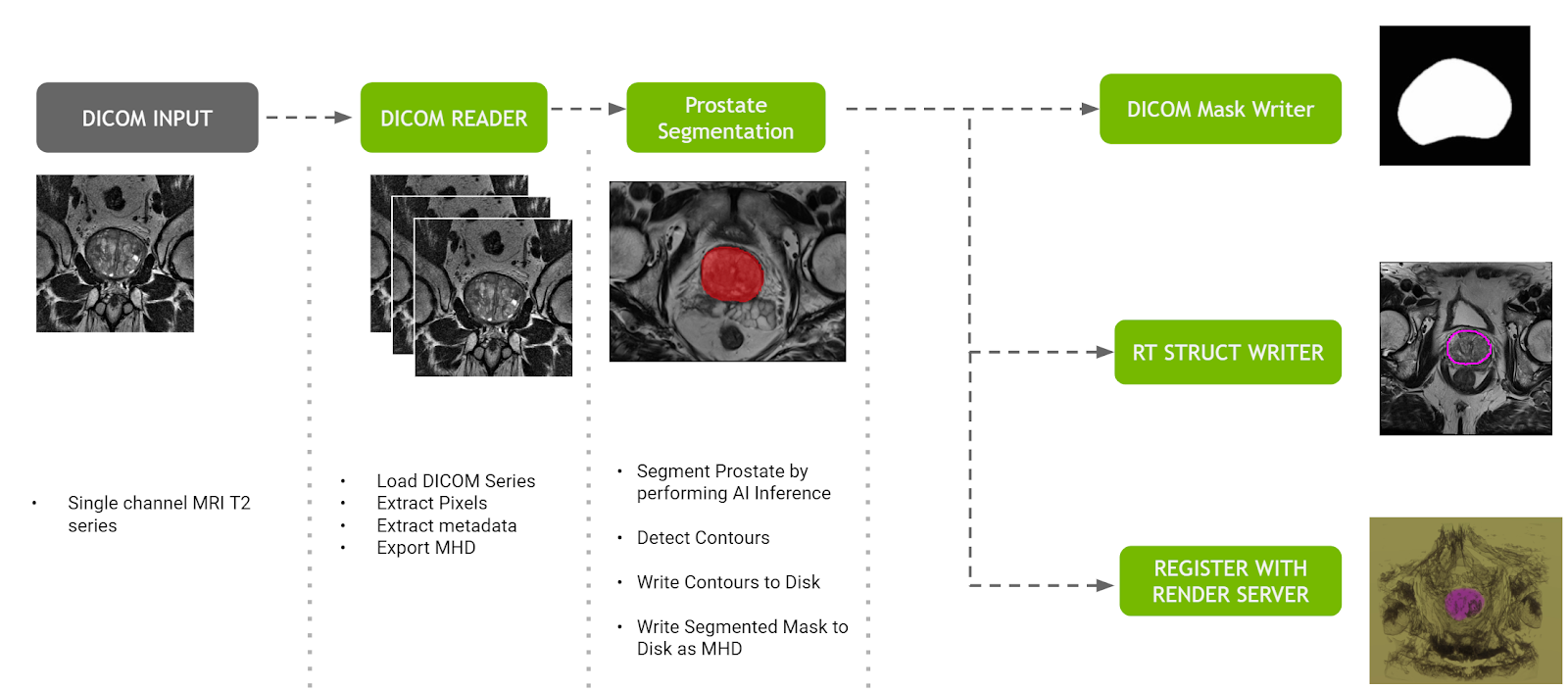

Prostate segmentation pipeline

The prostate segmentation pipeline ingests a single-channel MR dataset of the prostate and provides segmentation of prostate anatomy. The pipeline generates three outputs:

- A DICOM RT Structure Set instance in a new series of the original study, optionally sent to a configurable DICOM device.

- A binary mask in a new DICOM series of the original study, optionally sent to the same DICOM device.

- The original and segmented volumes in MetaImage format, sent to the Clara Deploy Render Server for visualization on the Clara dashboard.

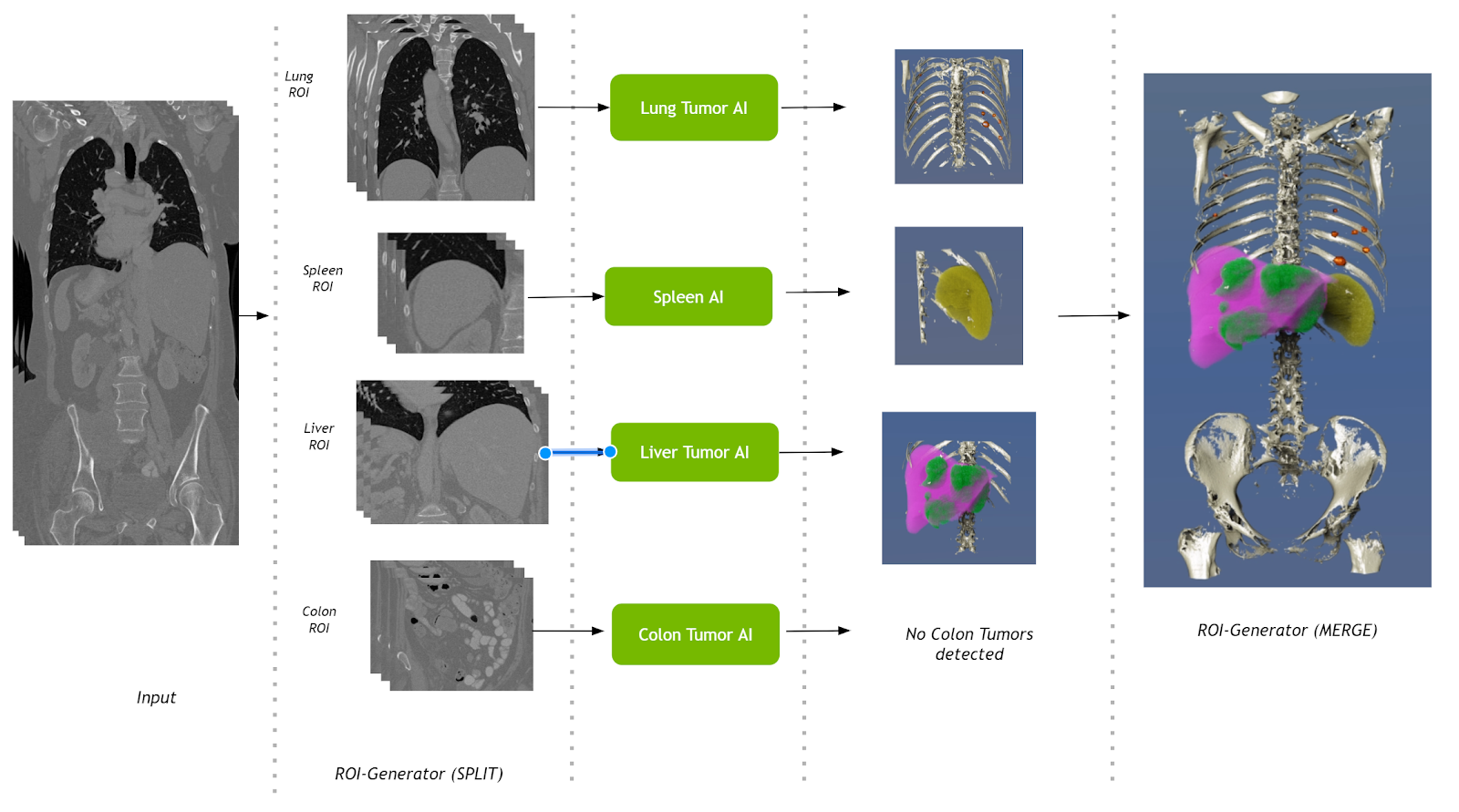

Multi-AI pipeline

This pipeline takes a single CT volumetric dataset as input and splits it into multiple regions of interest (ROIs). These ROIs are then fed into their respective AI operators. Results from the AI operators are finally merged into a single volume. Operators for segmenting liver tumors, lung tumors, colon tumors, and the spleen are used in this pipeline.
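The split, infer, and merge flow can be sketched in a few lines. The example below is a toy: it uses nested Python lists instead of real CT volumes, defines ROIs as slice ranges along one axis, and stands in for each AI operator with a simple threshold. The function names, ROI ranges, thresholds, and label values are all made up for illustration.

```python
from typing import Callable, Dict, List, Tuple

Volume = List[List[int]]    # volume[z] is one flattened slice
Roi = Tuple[int, int]       # (z_start, z_stop), stop exclusive


def split_rois(volume: Volume, rois: Dict[str, Roi]) -> Dict[str, Volume]:
    """Cut the input volume into one sub-volume per region of interest."""
    return {name: volume[start:stop] for name, (start, stop) in rois.items()}


def threshold_operator(label: int, threshold: int) -> Callable[[Volume], Volume]:
    """Stand-in for an AI operator: labels voxels brighter than a threshold."""
    def run(roi: Volume) -> Volume:
        return [[label if v > threshold else 0 for v in sl] for sl in roi]
    return run


def merge(volume: Volume, rois: Dict[str, Roi],
          results: Dict[str, Volume]) -> Volume:
    """Write each operator's labels back into one full-size label volume."""
    merged = [[0] * len(sl) for sl in volume]
    for name, (start, _stop) in rois.items():
        for offset, mask_slice in enumerate(results[name]):
            for i, label in enumerate(mask_slice):
                if label:
                    merged[start + offset][i] = label
    return merged


volume = [[0, 150, 0], [120, 0, 0], [0, 60, 0], [0, 0, 40]]
rois = {"liver": (0, 2), "spleen": (2, 4)}
operators = {
    "liver": threshold_operator(label=1, threshold=100),
    "spleen": threshold_operator(label=2, threshold=50),
}

sub_volumes = split_rois(volume, rois)
results = {name: operators[name](sub) for name, sub in sub_volumes.items()}
merged = merge(volume, rois, results)
```

Each operator sees only its own region, so operators can run independently before the merge step combines their outputs.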

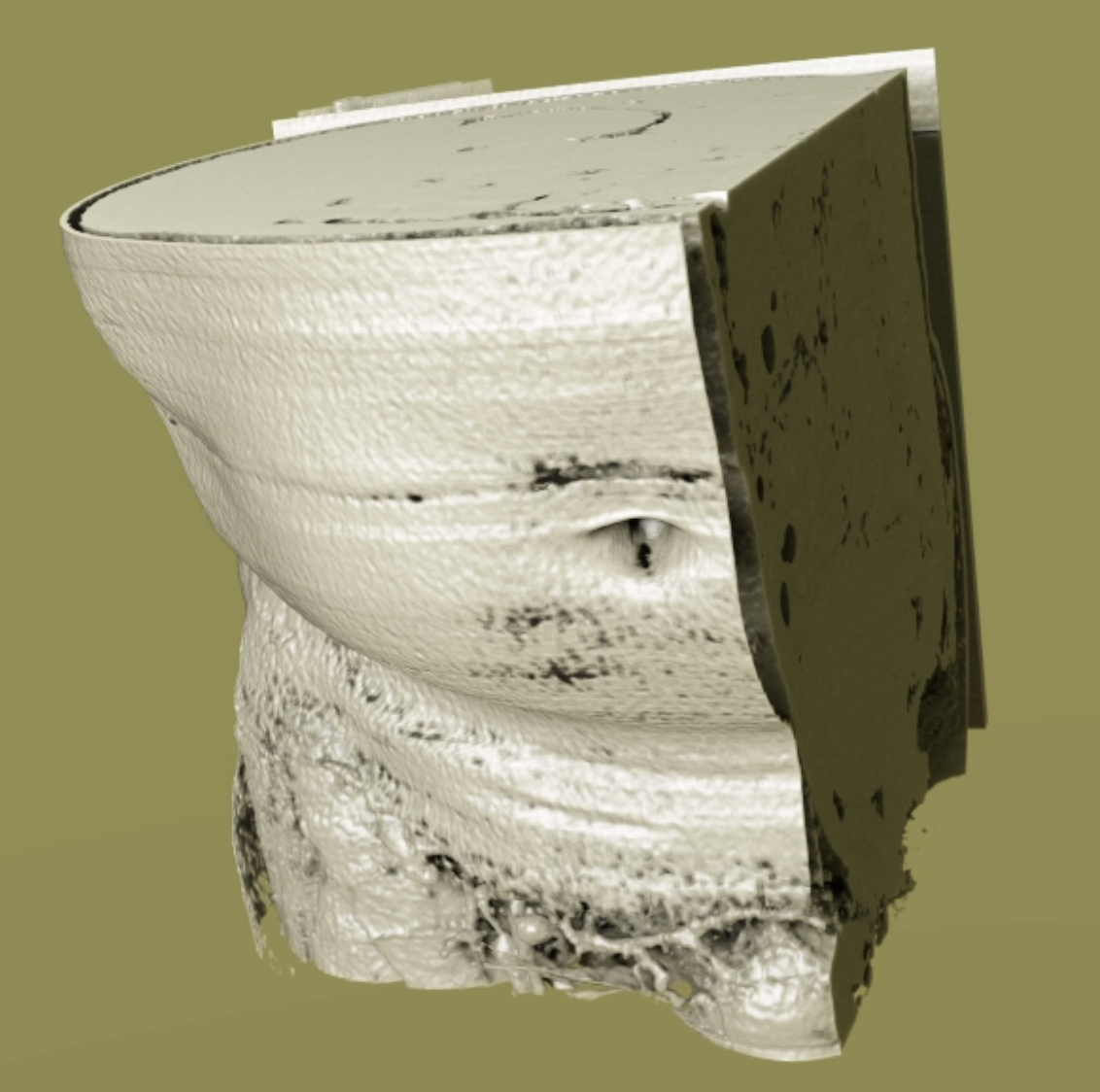

3D image processing pipeline using shared memory

To accelerate the processing of AI pipelines, it is of the utmost importance to keep processes and data in memory, and not cache to disk. Swapping data to and from disk reduces performance and ultimately reduces the number of studies that can be processed at any given time. The Clara Deploy SDK provides a reference application pipeline that demonstrates how to leverage shared memory.

The 3D image processing pipeline accepts a volume image in MetaImage format, and optionally accepts parameters for cropping. The output is the cropped volume image, which is published to the Render Server so that it can be viewed in a web browser. The pipeline uses shared memory among all operators to pass voxel data.
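The general technique of passing voxel data through shared memory can be shown with the Python standard library. This sketch is not the reference pipeline and does not use Fast I/O. It uses `multiprocessing.shared_memory` (Python 3.8 or later), with hypothetical helper names `publish_volume` and `crop_slices`, and a toy 12-byte "volume" in place of a MetaImage file. For brevity both steps run in one process, but the second step attaches to the block by name, exactly as a separate process would.

```python
from multiprocessing import shared_memory


def publish_volume(voxels: bytes) -> shared_memory.SharedMemory:
    """Copy voxel data into a new shared-memory block (done once, by the producer)."""
    shm = shared_memory.SharedMemory(create=True, size=len(voxels))
    shm.buf[:len(voxels)] = voxels
    return shm


def crop_slices(shm_name: str, slice_size: int, z_start: int, z_stop: int) -> bytes:
    """Attach to the block by name and return slices [z_start, z_stop)."""
    shm = shared_memory.SharedMemory(name=shm_name)
    try:
        # The memoryview slice reads shared memory directly; only the
        # cropped region is copied out, by bytes().
        with shm.buf[z_start * slice_size:z_stop * slice_size] as view:
            return bytes(view)
    finally:
        shm.close()


# A toy "volume": 3 slices of 4 one-byte voxels each.
volume = publish_volume(bytes(range(12)))
try:
    cropped = crop_slices(volume.name, slice_size=4, z_start=1, z_stop=3)
finally:
    volume.close()
    volume.unlink()   # free the block once every consumer is done
```

The full volume is written once and never serialized to disk. The cropping step receives only the block name and the crop parameters.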

DeepStream batch pipeline

The Clara Deploy SDK can be used with both medical images and video.

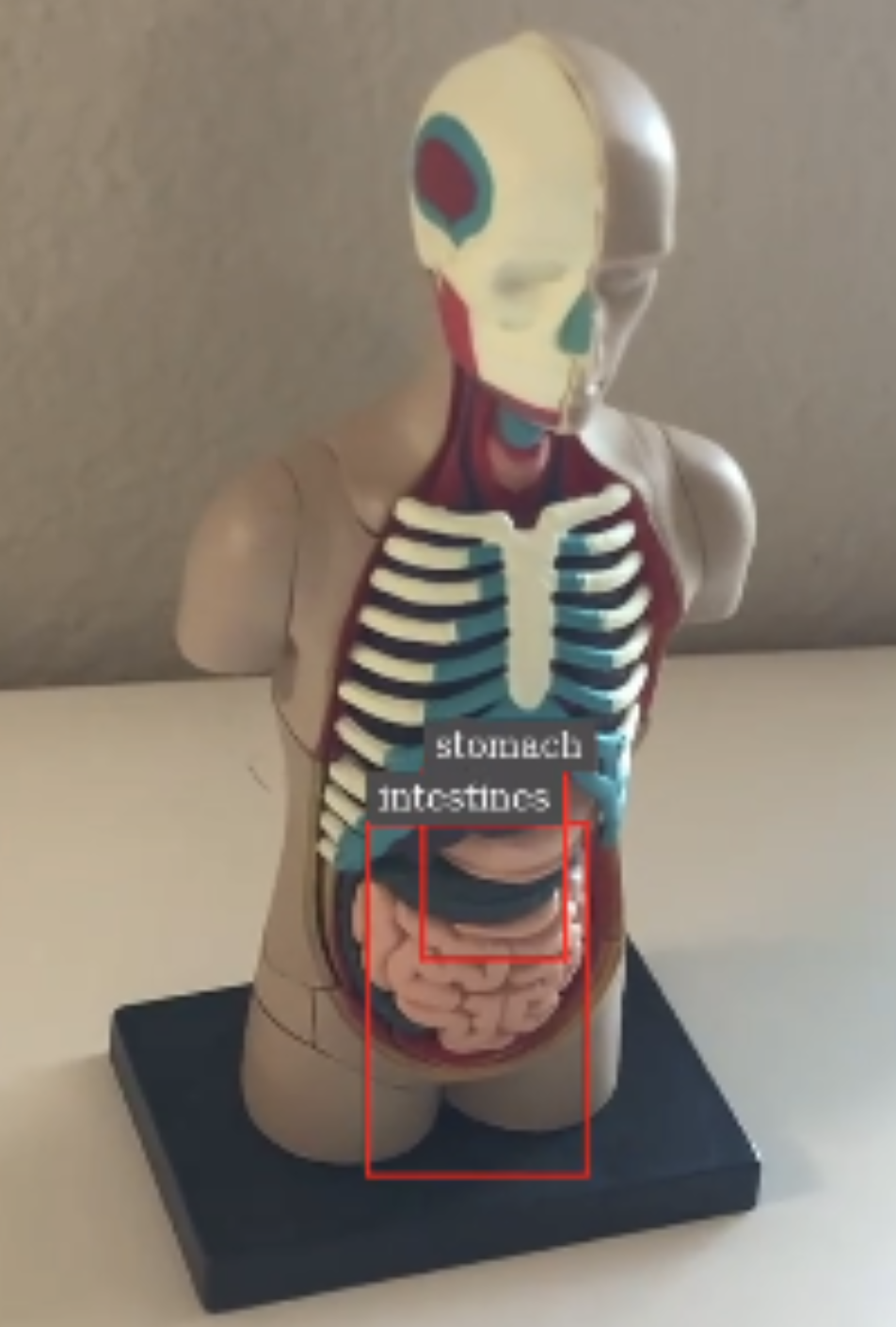

The DeepStream batch pipeline uses an organ detection model running on top of the DeepStream SDK, which provides a reference application. It accepts an MP4 file in H.264 format and detects the stomach and intestines in the input video. The pipeline outputs a rendered video in H.264 format (output.mp4), with labeled bounding boxes overlaid on the original video, as well as the primary detector output in a modified KITTI metadata format (.txt files).
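For downstream processing of the detector output, a small parser may be useful. The sketch below assumes the standard KITTI label layout, in which the first field is the object class and fields 5 through 8 are the left, top, right, and bottom pixel coordinates of the bounding box. The exact modifications made by the pipeline's format are not described here, so treat the field positions as an assumption to verify against real output. The sample line is made up.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Detection:
    label: str
    left: float
    top: float
    right: float
    bottom: float


def parse_kitti_lines(text: str) -> List[Detection]:
    """Parse KITTI-style label lines, assuming the standard field order:
    type, truncated, occluded, alpha, then bbox left/top/right/bottom."""
    detections = []
    for line in text.splitlines():
        fields = line.split()
        if len(fields) < 8:
            continue   # skip blank or incomplete lines
        left, top, right, bottom = map(float, fields[4:8])
        detections.append(Detection(fields[0], left, top, right, bottom))
    return detections


# Made-up sample in standard KITTI layout, for illustration only.
sample = (
    "stomach 0.0 0 0.0 100.0 120.0 300.0 360.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0\n"
    "\n"
    "intestine 0.0 0 0.0 50.0 400.0 250.0 600.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0\n"
)
detections = parse_kitti_lines(sample)
labels = [d.label for d in detections]
```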

New Render Server features

The Render Server, part of the Clara Deploy SDK, provides you with interactive tools to visualize what your AI pipelines are producing. In this release, several new features have been added:

- Original slice rendering

- Visualization for segmentation masks on original slices

- Oblique multiplanar reformatting

- Touch support for the Render Server

Original slice rendering

It is important to see the output of AI processing, and sometimes it is just as relevant to see the input imaging data. The Render Server can now display the original slices in addition to volume-rendered views.

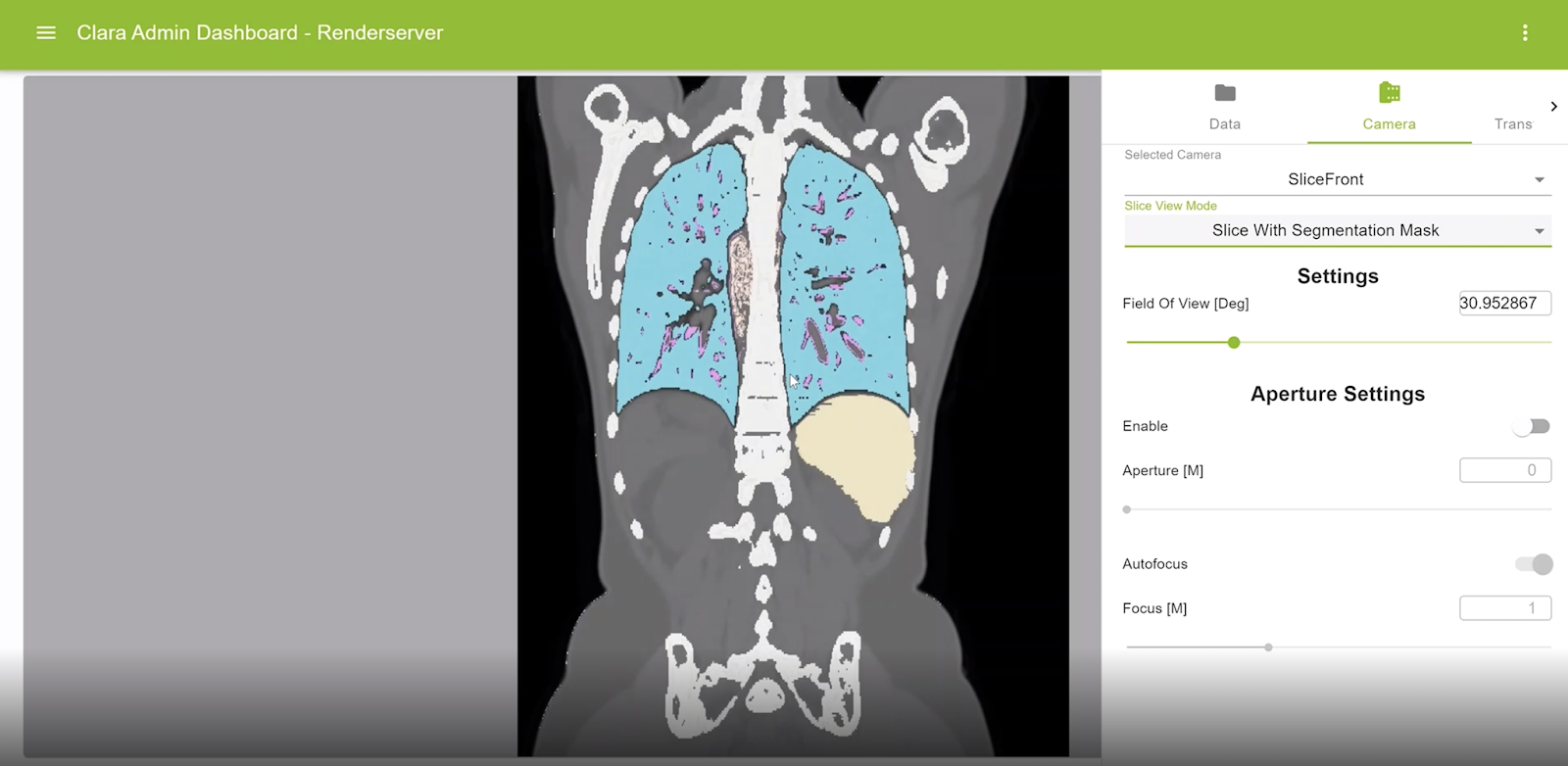

Visualization for segmentation masks on original slices

Segmentation masks can now be displayed on any rendered view of the volume. The color and opacity of such masks are controlled using the corresponding transfer functions.
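The effect of a color and opacity setting on a mask overlay can be illustrated with standard alpha blending. This sketch is not the Render Server implementation. It assumes a transfer function can be simplified to a lookup from mask label to an RGB color and an opacity between 0 and 1, and the `overlay_pixel` function and the example values are hypothetical.

```python
from typing import Dict, Tuple

Color = Tuple[int, int, int]
# Simplified transfer function: mask label -> (RGB color, opacity in [0, 1])
TransferFunction = Dict[int, Tuple[Color, float]]


def overlay_pixel(gray: int, label: int, transfer: TransferFunction) -> Color:
    """Alpha-blend a mask color over a grayscale pixel.

    Labels missing from the transfer function are fully transparent.
    """
    color, opacity = transfer.get(label, ((0, 0, 0), 0.0))
    return tuple(
        round((1.0 - opacity) * gray + opacity * channel) for channel in color
    )


transfer = {1: ((200, 0, 0), 0.5)}   # label 1: semi-transparent red

background = overlay_pixel(100, 0, transfer)   # unlabeled pixel stays gray
tumor = overlay_pixel(100, 1, transfer)        # labeled pixel is tinted red
```

Raising the opacity toward 1 makes the mask color dominate. Lowering it toward 0 lets the original slice show through.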

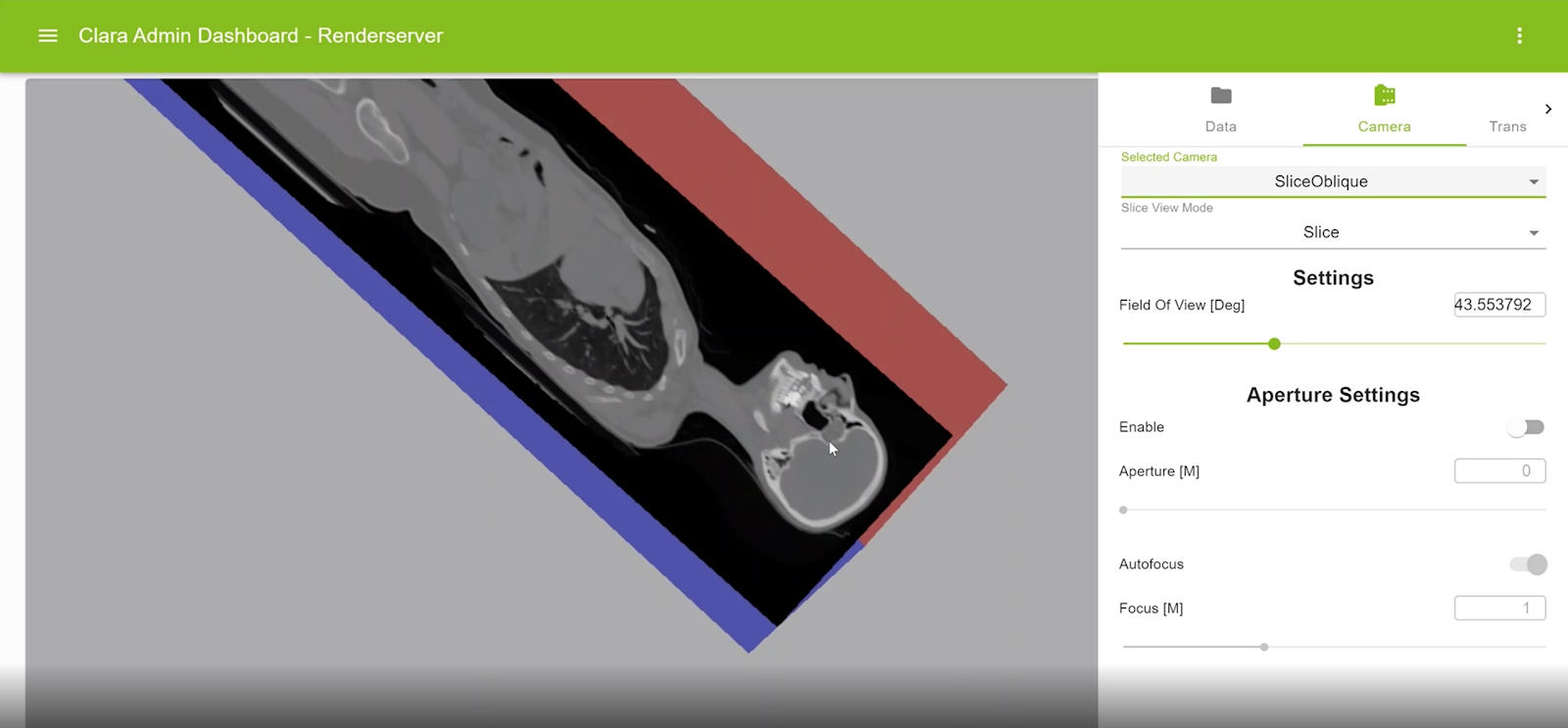

Oblique multiplanar reformatting

This feature reformats the original slices along a plane of arbitrary orientation. For example, axial slices can be reformatted into sagittal or coronal planes. An oblique slice is displayed within the context of a colored axis cube. The view can be rotated, and the displayed slice can be interactively modified.

Touch support for the Render Server

You can visualize the results of AI processes anywhere. On a touch-friendly device, you can now interact with rendered views using gestures.

Management console

A smart hospital that runs hundreds of AI models must have a robust view of all the data being processed at any given time. IT operations, PACS administrators, and even data scientists and model developers benefit from administrative views that allow them to peer into the AI “black box.”

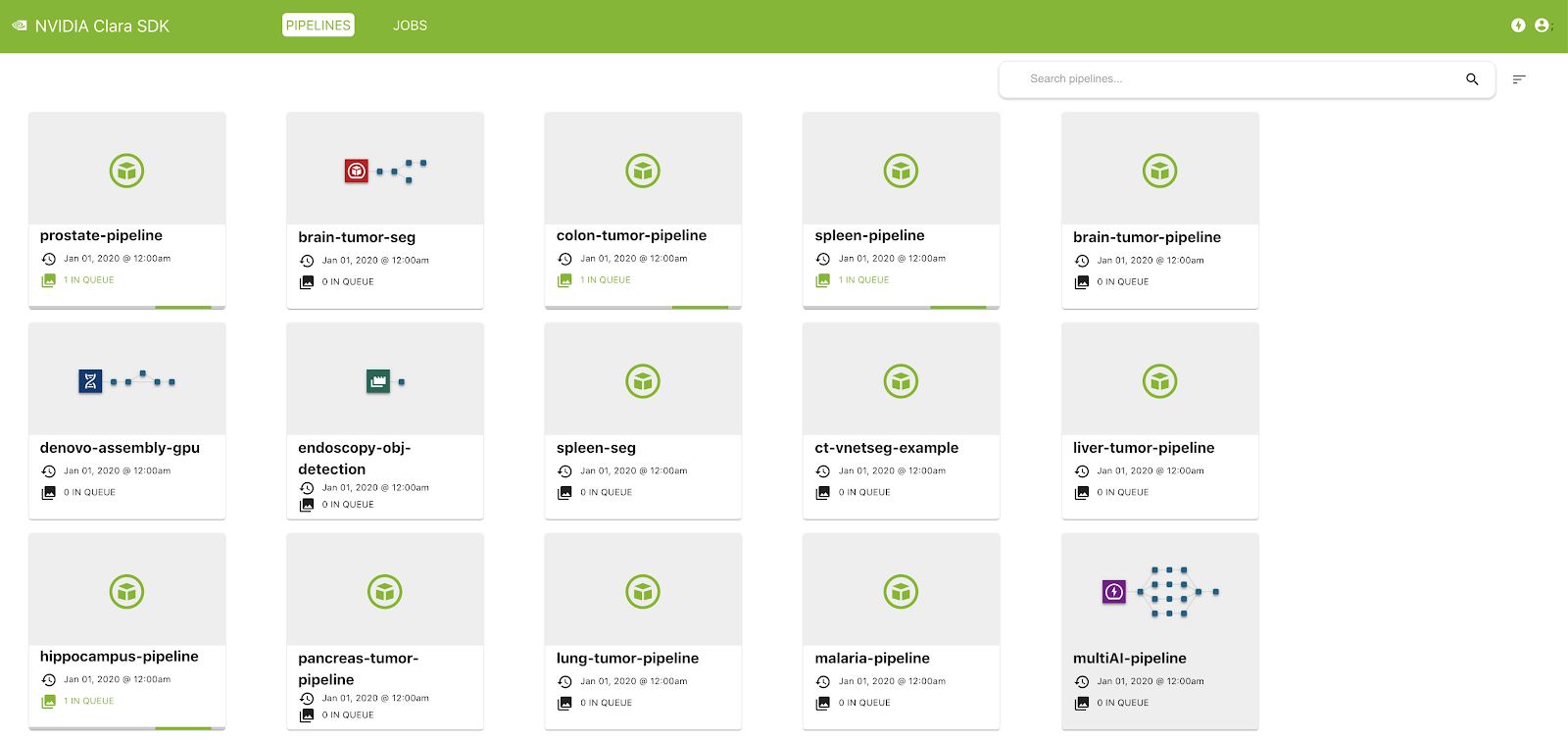

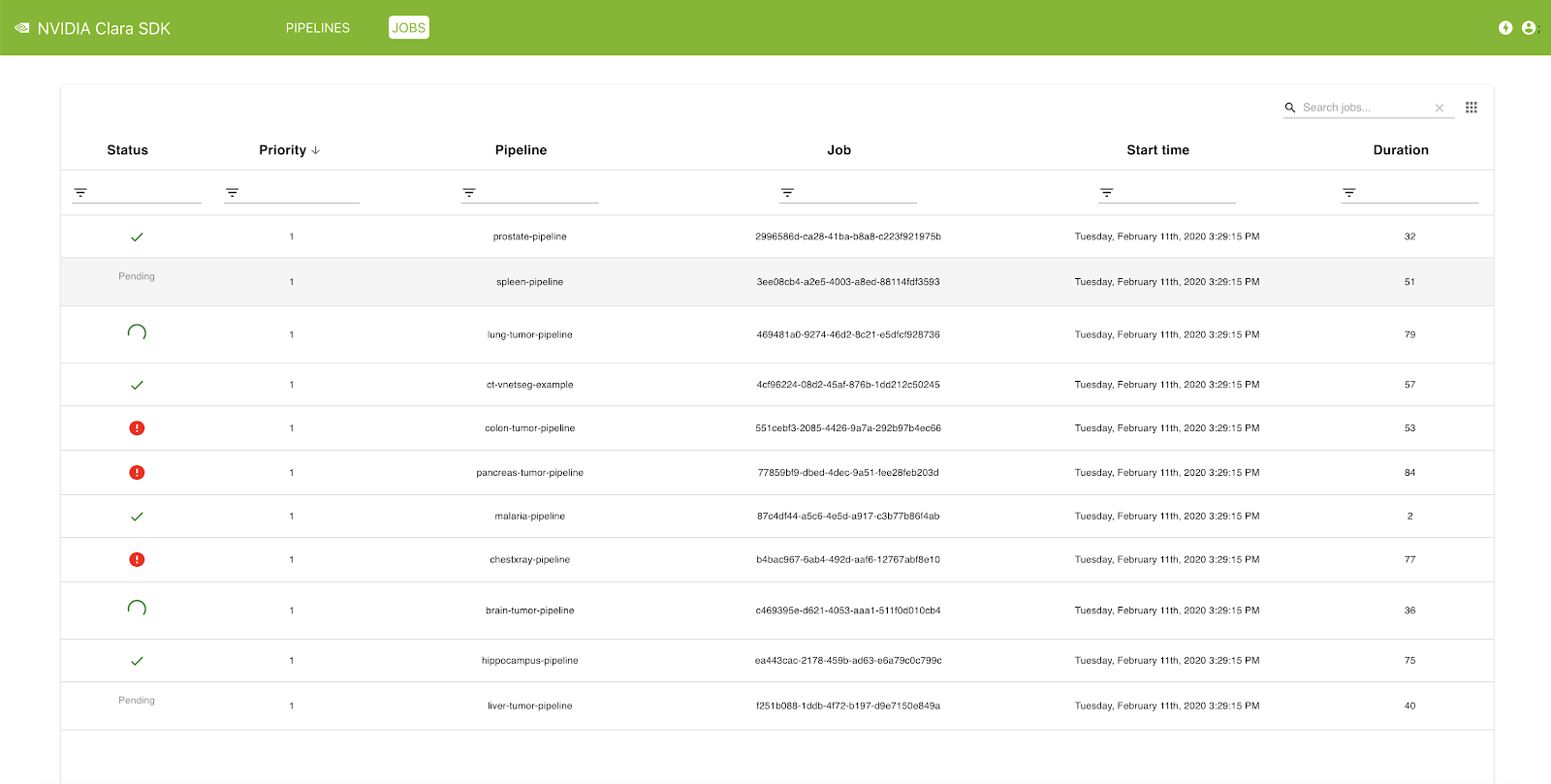

This release of the Clara Deploy SDK features a new management console that can be used to administer pipelines and jobs registered with the Clara Deploy platform. In this release, you can view a list of pipelines with information such as pipeline name, registration date, and the number of jobs queued in the system that were instantiated from this pipeline. Similarly, in the Jobs view, you can see a list of jobs with information such as status, priority, job ID, start time, duration, and so on.

Conclusion

Download the SDK release candidate, visit the NVIDIA Clara Deploy SDK User Guide, and view the installation steps. We would like to hear your feedback. To hear about the latest developments, visit the Clara Deploy SDK forum.