As large language model (LLM) inference workloads grow in complexity, a single monolithic serving process starts to hit its limits. Prefill and decode stages have fundamentally different compute profiles, yet traditional deployments force them onto the same hardware, leaving GPUs underutilized and scaling inflexible.

Disaggregated serving addresses this by splitting the inference pipeline into distinct stages such as prefill, decode, and routing, each running as an independent service that can be resourced and scaled on its own terms.

This post will give an overview of how disaggregated inference gets deployed on Kubernetes, explore different ecosystem solutions and how they execute on a cluster, and evaluate what they provide out of the box.

How do aggregated and disaggregated inference differ?

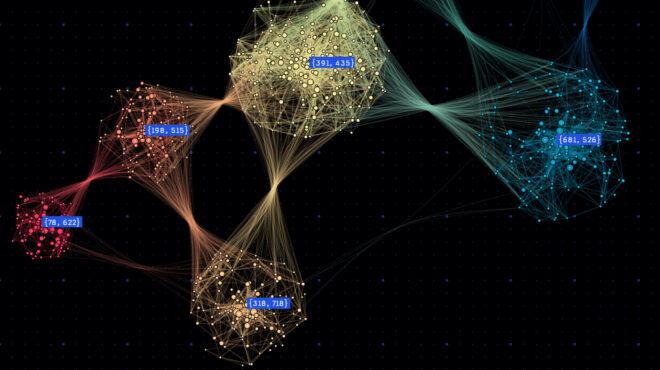

Before diving into Kubernetes manifests, it helps to understand the two inference deployment modes for LLMs: In aggregated serving, a single process (or tightly coupled group of processes) handles the entire inference lifecycle from input to output. Disaggregated serving splits the pipeline into distinct stages such as prefill, decode, and routing, each running as independent services (see Figure 1, below).

Aggregated inference

In a traditional aggregated setup, a single model server (or coordinated group of servers in a parallel configuration) handles the full request lifecycle. A user prompt comes in, the server tokenizes it, runs prefill to build context, generates output tokens autoregressively (decode), and returns the response. Everything happens in a single process or tightly coupled pod group.

This is conceptually simple and works well for many use cases. But it means your hardware alternates between two fundamentally different workloads: Prefill is compute-intensive and benefits from high floating point operations (FLOPS), while decode is memory-bandwidth-bound and benefits from large, fast memory.

Disaggregated inference

Disaggregated architectures separate these stages into distinct services:

- Prefill workers process the input prompt. This is compute-heavy. You want to maximize your GPUs for high-throughput and can parallelize aggressively.

- Decode workers generate output tokens one at a time. This is memory-bandwidth-bound because of the autoregressive nature of LLMs. You want GPUs with fast high bandwidth memory (HBM) access.

- Router/gateway directs incoming requests, manages Key-Value (KV) cache routing between prefill and decode stages, and handles load balancing of requests across your workers.

Why disaggregate? Three reasons stand out:

- Different resource and optimization profiles per stage: With disaggregation, you can match GPU resources, model sharding techniques, and batch sizes to each stage’s needs rather than compromise on a single approach.

- Independent scaling: Prefill and decode traffic patterns differ. A long-context prompt creates a large prefill burst but a steady decode stream. Scaling each stage independently lets you respond to actual demand.

- Better GPU utilization: Separating stages lets each saturate its target resource (compute for prefill, memory bandwidth for decode) rather than alternating between both.

Frameworks like NVIDIA Dynamo and llm-d, implement this pattern. The question becomes: How do you orchestrate it on Kubernetes?

Why scheduling is the key to multi-pod inference performance on Kubernetes

Deploying a multi-pod inference workload (either model-parallel aggregated models or disaggregated models) is only half the story. How the scheduler places pods across the cluster directly impacts performance; placing a Tensor Parallel (TP) group’s pods on the same rack with high-bandwidth NVIDIA NVLink interconnects can mean the difference between fast inference and a network bottleneck. Three scheduling capabilities matter the most here:

- Gang scheduling ensures all pods in a group are placed with all-or-nothing semantics, preventing partial deployments that waste GPUs.

- Hierarchical gang scheduling extends basic gang scheduling to multi-level workloads. In disaggregated inference, you need nested minimum guarantees per component or role: each Tensor Parallel group (e.g., four pods forming one decode instance) must be scheduled atomically, and the full system (at least n prefill instances + at least m decode instances + router) also needs system-level coordination. Without this, one role can consume all available GPUs while the other waits indefinitely—a partial deployment that holds resources but can’t serve requests.

- Topology-aware placement colocates tightly coupled pods on nodes with high-bandwidth interconnects, minimizing inter-node communication latency.

These three capabilities determine how an AI scheduler, such as KAI Scheduler, places pods based on the application’s scheduling constraints. Additionally, it is also important for the AI orchestration layer to determine what needs to be gang-scheduled, and when. For example, when prefill scales independently, something needs to decide that the new pods form a gang with a minimum availability guarantee, without disrupting existing decode pods. As a result, the orchestration layer and the scheduler need to work closely for the entire application lifecycle, handling multi-level auto-scaling, rolling updates, and more, to ensure optimal runtime conditions for AI workloads.

This is where higher-level workload abstractions come in. APIs like LeaderWorkerSet (LWS) and NVIDIA Grove allow users to declaratively express the structure of their inference application: which roles exist, how they relate to each other, how they should scale, and what topology constraints matter. The API’s operator translates that application-level intent into concrete scheduling constraints (including PodGroups, gang requirements, topology hints) that determine what gangs to create and when.

KAI Scheduler then plays the critical role of satisfying those constraints, solving the how: gang scheduling, hierarchical gang scheduling, and topology-aware placement. In this post, we use KAI as the scheduler, though there are other schedulers in the community that support subsets of these features. Readers can explore the broader scheduling landscape through the Cloud Native Computing Foundation (CNCF) ecosystem.

Deploying disaggregated inference

Disaggregated architectures have multiple roles each with different resource profiles and scaling needs. Since each role in a disaggregated pipeline is a distinct workload, a natural approach with LWS is to create a separate resource for each role.

Prefill workers (four replicas, 2-degree Tensor Parallelism):

apiVersion: leaderworkerset.x-k8s.io/v1

kind: LeaderWorkerSet

metadata:

name: prefill-workers

spec:

replicas: 4

leaderWorkerTemplate:

size: 2

restartPolicy: RecreateGroupOnPodRestart

leaderTemplate:

metadata:

labels:

role: prefill-leader

spec:

containers:

- name: prefill

image: <model-server-image>

args: ["--role=prefill", "--tensor-parallel-size=2"]

resources:

limits:

nvidia.com/gpu: "1"

workerTemplate:

spec:

containers:

- name: prefill

image: <model-server-image>

args: ["--role=prefill"]

resources:

limits:

nvidia.com/gpu: "1"

Decode workers (two replicas, 4-degree Tensor Parallelism):

apiVersion: leaderworkerset.x-k8s.io/v1

kind: LeaderWorkerSet

metadata:

name: decode-workers

spec:

replicas: 2

leaderWorkerTemplate:

size: 4

restartPolicy: RecreateGroupOnPodRestart

leaderTemplate:

metadata:

labels:

role: decode-leader

spec:

containers:

- name: decode

image: <model-server-image>

args: ["--role=decode", "--tensor-parallel-size=4"]

resources:

limits:

nvidia.com/gpu: "1"

workerTemplate:

spec:

containers:

- name: decode

image: <model-server-image>

args: ["--role=decode"]

resources:

limits:

nvidia.com/gpu: "1"

Router (a standard deployment—no leader-worker topology needed):

apiVersion: apps/v1

kind: Deployment

metadata:

name: router

spec:

replicas: 2

selector:

matchLabels:

app: router

template:

metadata:

labels:

app: router

spec:

containers:

- name: router

image: <router-image>

env:

- name: PREFILL_ENDPOINT

value: "prefill-workers"

- name: DECODE_ENDPOINT

value: "decode-workers"

Each role is managed as its own resource. You can scale, prefill, and decode independently, and update them on different schedules.

It’s important to note that the scheduler treats prefill workers and decode-workers as independent workloads. The scheduler will place them successfully, but it has no knowledge that they form a single inference pipeline. In practice, this means a few things:

- Topology coordination between prefill and decode (placing them on the same rack for fast KV cache transfer) requires manually adding pod affinity rules that reference labels across the two LWS resources.

- Scaling one role doesn’t automatically account for the other: If a burst of long-context requests requires more prefill capacity, you scale prefill-workers, but the new prefill pods aren’t guaranteed to land near existing decode pods unless you’ve configured affinity yourself.

- Rolling out a new model version means coordinating updates across three independent resources—LWS’s partition update mechanism supports staged rollouts per-resource, but synchronizing across resources is managed externally.

That last point is worth calling out. Inference frameworks move fast and don’t always guarantee backwards compatibility between versions, so prefill pods on the old version and decode pods on the new version may not be able to communicate. Models also take time to load, and prefill and decode workers frequently become ready at different rates. During an unsynchronized rollout, this can create a temporary imbalance, where many new decode pods are ready but very few new prefill pods are (or vice versa). This can create a bottleneck in your inference pipeline until everything catches up.

These patterns work. The coordination just happens outside of Kubernetes primitives: in the inference framework’s routing layer, in custom autoscalers, bespoke operators, or even manually. Another option would be using Grove’s API, which takes a different approach by moving that coordination into the Kubernetes resource itself.

It expresses all roles in a single PodCliqueSet:

apiVersion: grove.io/v1alpha1

kind: PodCliqueSet

metadata:

name: inference-disaggregated

spec:

replicas: 1

template:

cliqueStartupType: CliqueStartupTypeExplicit

terminationDelay: 30s

cliques:

- name: router

spec:

roleName: router

replicas: 2

podSpec:

schedulerName: kai-scheduler

containers:

- name: router

image: <router-image>

resources:

requests:

cpu: 100m

- name: prefill

spec:

roleName: prefill

replicas: 4

startsAfter: [router]

podSpec:

schedulerName: kai-scheduler

containers:

- name: prefill

image: <model-server-image>

args: ["--role=prefill", "--tensor-parallel-size=2"]

resources:

limits:

nvidia.com/gpu: "1"

autoScalingConfig:

maxReplicas: 8

metrics:

- type: Resource

resource:

name: cpu

target:

type: Utilization

averageUtilization: 70

- name: decode

spec:

roleName: decode

replicas: 2

startsAfter: [router]

podSpec:

schedulerName: kai-scheduler

containers:

- name: decode

image: <model-server-image>

args: ["--role=decode", "--tensor-parallel-size=4"]

resources:

limits:

nvidia.com/gpu: "1"

autoScalingConfig:

maxReplicas: 6

metrics:

- type: Resource

resource:

name: cpu

target:

type: Utilization

averageUtilization: 80

topologyConstraint:

packDomain: rack

The Grove operator manages PodCliques for each role and coordinates scheduling, startup, and lifecycle across all of them. A few things to note in the YAML:

startsAfter: [router]on prefill and decode tells the operator to gate their startup until the router is ready. This is expressed declaratively and enforced through init containers. When you first deploy, router pods start and become ready first, then prefill and decode pods start in parallel (since both depend on the router).autoScalingConfigon each clique lets you define per-role scaling policies. The operator creates an horizontal pod autoscaler (HPA) for each, so prefill and decode scale independently based on their own metrics.topologyConstraint with packDomain:rack tells the KAI Scheduler to pack all cliques within the same rack, optimizing KV cache transfer between prefill and decode stages over high-bandwidth interconnects.

After applying this manifest, you can inspect all the resources Grove creates:

$ kubectl get pcs,pclq,pg,pod

NAME AGE

podcliqueset.grove.io/inference-disaggregated 45s

NAME AGE

podclique.grove.io/inference-disaggregated-0-router 44s

podclique.grove.io/inference-disaggregated-0-prefill 44s

podclique.grove.io/inference-disaggregated-0-decode 44s

NAME AGE

podgang.scheduler.grove.io/inference-disaggregated-0 44s

NAME READY STATUS AGE

pod/inference-disaggregated-0-router-k8x2m 1/1 Running 44s

pod/inference-disaggregated-0-router-w9f4n 1/1 Running 44s

pod/inference-disaggregated-0-prefill-abc12 1/1 Running 44s

pod/inference-disaggregated-0-prefill-def34 1/1 Running 44s

pod/inference-disaggregated-0-prefill-ghi56 1/1 Running 44s

pod/inference-disaggregated-0-prefill-jkl78 1/1 Running 44s

pod/inference-disaggregated-0-decode-mn90p 1/1 Running 44s

pod/inference-disaggregated-0-decode-qr12s 1/1 Running 44s

One PodCliqueSet, three PodCliques (one per role), one PodGang for coordinated scheduling, and pods matching each role’s replica count. The startsAfter dependency is enforced through init containers: Prefill and decode pods wait for the router to become ready before their main containers start.

Scaling disaggregated workloads

Once a disaggregated workload is running, scaling becomes the central operational challenge. Prefill and decode have different bottlenecks; teams might want to autoscale prefill workers based on time to first token (TTFT) and decode workers based on inter-token latency (ITL) independently, to meet service level agreements (SLAs) while minimizing GPU costs.

In practice, disaggregated scaling operates at three levels:

- Per-role scaling: adding or removing pods within a single role (e.g. scaling prefill from 4 to 6 replicas)

- Per-TP-group scaling: scaling complete Tensor Parallel groups as atomic units, since you can’t add half a TP group.

- Cross-role coordination: when you add prefill capacity, you may also need to scale the router to handle increased throughput, or scale decode to consume the extra prefill output.

Different tools address different levels.

How inference frameworks coordinate scaling

Inference frameworks address scaling at the application level with custom autoscalers that have visibility into inference-specific metrics. llm-d’s workload variant autoscaler (WVA) monitors per-pod KV cache utilization and queue depth via Prometheus, using a spare-capacity model to determine when replicas should be added or removed. Rather than scaling deployments directly, WVA emits target replica counts as Prometheus metrics that standard HPA/Kubernetes-based event-driven autoscaling (KEDA) act on—keeping the scaling actuation within Kubernetes-native primitives.

The NVIDIA Dynamo planner takes a different approach: It natively understands disaggregated serving, running separate prefill and decode scaling loops that target TTFT and ITL SLAs respectively. It predicts upcoming demand using time-series models, computes replica requirements from profiled per-GPU throughput curves, and enforces a global GPU budget across both roles.

This global visibility matters because in practice there’s an optimal ratio between prefill and decode that shifts with request patterns. Scale prefill 3x without scaling decode and the extra output has nowhere to go—decode bottlenecks and KV cache transfer queues up. Application-level autoscalers handle this because they can see the full pipeline; Kubernetes-native HPA targeting individual resources doesn’t inherently maintain cross-resource ratios.

Scaling with separate LWS resources

With one LWS per role, you scale each independently:

kubectl scale lws prefill-workers --replicas=6

kubectl scale lws decode-workers --replicas=3

Standard HPA can target each LWS separately, or an external autoscaler (like the Dynamo planner or llm-d’s autoscaler) makes coordinated decisions and updates both. The coordination logic lives in the autoscaler, not in the Kubernetes resources themselves.

Scaling with Grove

Grove brings per-role scaling into a single resource. Each PodClique has its own replica count and optional autoScalingConfig, so HPAs can manage roles independently based on per-role metrics:

kubectl scale pclq inference-disaggregated-0-prefill --replicas=6

The operator creates additional prefill pods while leaving the router and decode untouched:

NAME AGE

podclique.grove.io/inference-disaggregated-0-router 5m

podclique.grove.io/inference-disaggregated-0-prefill 5m

podclique.grove.io/inference-disaggregated-0-decode 5m

NAME READY STATUS AGE

pod/inference-disaggregated-0-router-k8x2m 1/1 Running 5m

pod/inference-disaggregated-0-router-w9f4n 1/1 Running 5m

pod/inference-disaggregated-0-prefill-abc12 1/1 Running 5m

pod/inference-disaggregated-0-prefill-def34 1/1 Running 5m

pod/inference-disaggregated-0-prefill-ghi56 1/1 Running 5m

pod/inference-disaggregated-0-prefill-jkl78 1/1 Running 5m

pod/inference-disaggregated-0-prefill-tu34v 1/1 Running 12s # new

pod/inference-disaggregated-0-prefill-wx56y 1/1 Running 12s # new

pod/inference-disaggregated-0-decode-mn90p 1/1 Running 5m

pod/inference-disaggregated-0-decode-qr12s 1/1 Running 5m

Six prefill pods, two router pods, two decode pods—only prefill changed.

For roles that use multi-node Tensor Parallelism internally, PodCliqueScalingGroup ensures multiple PodCliques scale together as a unit while preserving the replica ratio between them. For example, in a configuration where each prefill instance consists of one leader pod and four worker pods:

podCliqueScalingGroups:

- name: prefill

cliqueNames: [pleader, pworker]

replicas: 2

minAvailable: 1

scaleConfig:

maxReplicas: 4

With replicas: Two, this creates two complete prefill instances: two x (one leader + four workers) = 10 pods total. The minAvailable: One guarantee means the system won’t scale below one complete Tensor Parallel group.

Scaling the group from two to three replicas adds a third complete instance while preserving the 1:4 leader-to-worker ratio:leader-to-worker ratio:

$ kubectl scale pcsg inference-disaggregated-0-prefill --replicas=3

Both the leader and worker cliques scaled together as a unit, the new replica (prefill-2) has one pleader pod and four pworker pods, matching the ratio. A new PodGang was created for the third replica to ensure it gets gang-scheduled.

NAME AGE

podcliquescalinggroup.grove.io/inference-disaggregated-0-prefill 10m

NAME AGE

podclique.grove.io/inference-disaggregated-0-prefill-0-pleader 10m

podclique.grove.io/inference-disaggregated-0-prefill-0-pworker 10m

podclique.grove.io/inference-disaggregated-0-prefill-1-pleader 10m

podclique.grove.io/inference-disaggregated-0-prefill-1-pworker 10m

podclique.grove.io/inference-disaggregated-0-prefill-2-pleader 8s # new

podclique.grove.io/inference-disaggregated-0-prefill-2-pworker 8s # new

NAME AGE

podgang.scheduler.grove.io/inference-disaggregated-0 10m

podgang.scheduler.grove.io/inference-disaggregated-0-prefill-0 10m

podgang.scheduler.grove.io/inference-disaggregated-0-prefill-1 8s # new

Getting started

Whether you’re running a single disaggregated pipeline or operating dozens across your cluster, the building blocks for this are emerging and the community is building them in the open. Each approach in this blog represents a different point on the spectrum between simplicity and integrated coordination.

The right choice depends on your workload, your team’s operational model, and how much lifecycle management you want the platform to handle versus the application layer.

Check out these resources for more information.

Join us at Kubecon EU

If you’re attending KubeCon EU 2026 in Amsterdam, drop by at booth No. 241 and join the session where we will cover an end-to-end open source AI inference stack. Explore the Grove Deployment Guide and ask questions on GitHub or Discord. We’d love to hear how you’re thinking about disaggregated inference on Kubernetes.