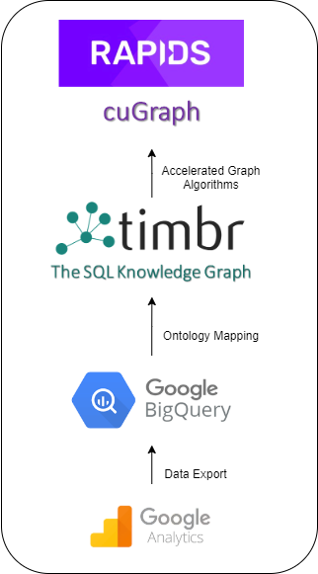

In part 1 of this series, we introduced the GA360 SQL Knowledge Graph that timbr created, which acts as a user-friendly strategic tool to shorten time-to-value. We discussed how users can conveniently connect GA360 exports to BigQuery in no time with the use of an SQL Ontology template, which enables you to understand, explore, and query the data by means of concepts, instead of dealing with many tables and columns. In addition to the many features and capabilities the GA360 SQL Knowledge Graph has to offer, we touched on the fact that the knowledge graph, queryable in SQL is empowered with graph algorithms.

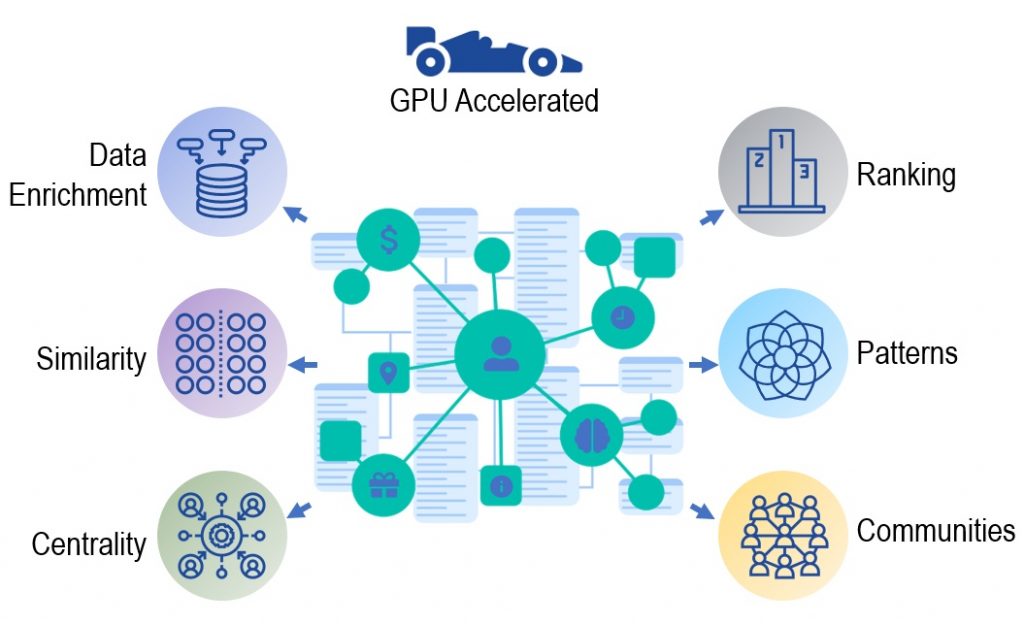

In this post, we discuss the use of graph algorithms with the GA360 SQL Knowledge Graph using RAPIDS cuGraph. Created by NVIDIA, cuGraph is a collection of powerful graph algorithms implemented over NVIDIA GPUs, analyzing data at unmatched speeds.

RAPIDS cuGraph is paving the way in the graph world with multi-GPU graph analytics, enabling you to scale to billion– and even trillion-scale graphs, with performance speeds never seen before. cuGraph is equipped with many graph algorithms, falling into the following classes:

- Centrality

- Community

- Components

- Core

- Layout

- Linear Assignment

- Link Analysis

- Link Prediction

- Traversal

- Structure

- Other unique algorithms

As there is a large rise in interest among companies to improve analytics and boost performance, many companies are turning to different graph options to get more out of their data. One of the main things holding companies back is the different implementation costs and barriers to adoption of these new technologies. Learning new languages to connect your existing data infrastructure and new graph technologies is not only a headache but also a large expense.

This is where timbr comes into the picture. Timbr dramatically reduces implementation costs, as it does not require companies to transfer data or learn new languages. Instead, timbr acts as a virtual layer over the company’s existing data infrastructure, turning simple columns and tables into an easily accessible knowledge graph empowered with tools for exploration, visualization, and querying of data using graph algorithms. This is all delivered in a semantic SQL familiar to every analyst.

In this post, we demonstrate the power of RAPIDS cuGraph combined with timbr by applying the Louvain community detection algorithm as well as the Jaccard similarity algorithm on the GA360 SQL Knowledge Graph.

Louvain community detection algorithm

The Louvain community detection algorithm is used for detecting communities in large networks with a high density of connections, helping you uncover the different connections in a network.

To understand the connections and be able to quantify their strength, you use what’s called modularity. Modularity is used to measure the strength of a partitioning of a graph into groups, clusters, or communities by constructing a score representing the modularity of a particular partitioning. The higher the modularity score, the denser the connections between the nodes in that community.

Community detection is used today in many industries for different reasons. The banking industry uses community detection for fraud analysis to find anomalies and evaluate whether a group has just a few discrete bad behaviors or is acting as a fraud ring. The health industry uses community detection to investigate different biological networks to identify various disease modules. The stock market uses community detection to build portfolios based on the correlation of stock prices.

So, with the many different uses of community detection that exist today, what can be done with community detection when it comes to Google Analytics? How will it function when used with RAPIDS cuGraph in comparison to the standard CPU in the form of NetworkX.

After connecting your Google Analytics knowledge graph to cuGraph, begin with a query requesting to see all the products purchased, allocated to their specific communities based on the customers who purchased these products. To run this query, use the gtimbr schema, which is timbr’s virtual schema for running graph algorithms. For the algorithm, use the Louvain algorithm for community detection.

Here is the exact query:

SELECT id as productsku, community FROM gtimbr.louvain( SELECT distinct productsku, info_of[hits].has_session[ga_sessions].fullvisitorid FROM dtimbr.product )

To understand the difference in performance when running this algorithm query, we tested running this query with cuGraph and then ran it again using NetworkX. Table 1 shows the performance differences.

| NetworkX | RAPIDS cuGraph | ||

| Performance speed | 5 seconds | 0.04 seconds |

Table 1. cuGraph vs. NetworkX performance speeds

After running the query, you’ll receive a list of 982 products, each product belonging to a community ID.

Having a large list of products, you must understand how many communities you’re dealing with. Run the following query:

SELECT community, count(productsku) as number_of_products FROM dtimbr.product_community GROUP BY community ORDER BY 1 asc

Table 2 shows the results.

| community | number_of_products |

| 0 | 189 |

| 1 | 113 |

| 2 | 197 |

| 3 | 166 |

| 4 | 272 |

| 5 | 45 |

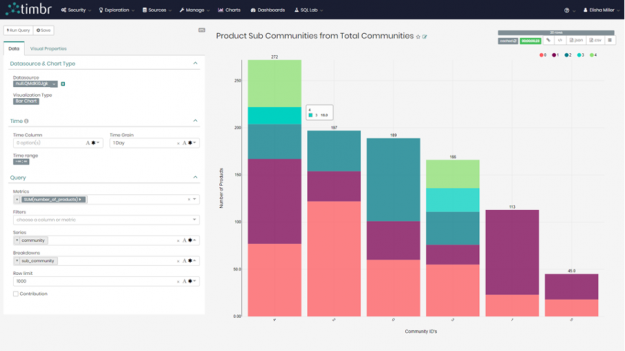

It was pretty clear from the results that having 982 products represented by just six communities makes it hard to understand the connections between the different products and customers. To resolve this, drill down into each community and create subcommunities to really highlight which products belong to which customers.

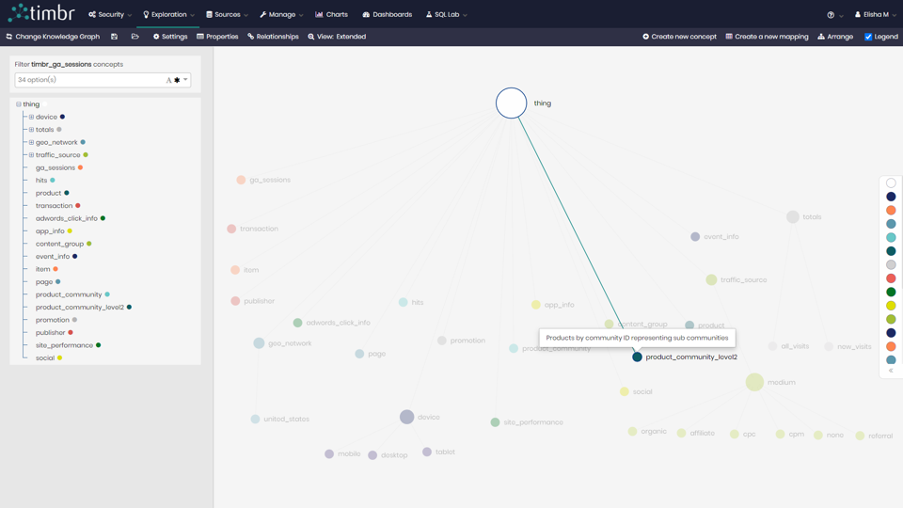

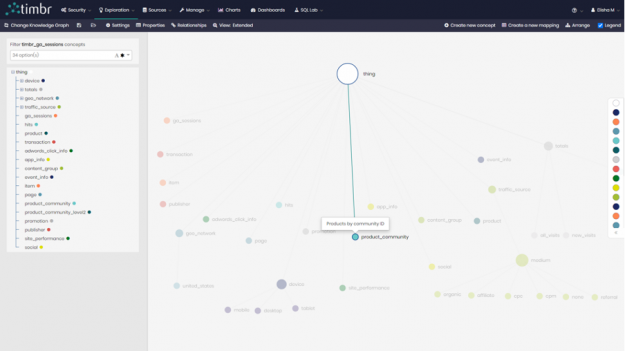

The first step was to create a new concept in the knowledge graph called product_community and map the data to it from the first query showing each of the 982 products and the community to which they belong.

Mapping the data is then performed in simple SQL, similar to the syntax used when creating and mapping data with tables and columns in relational databases:

CREATE OR REPLACE MAPPING map_product_community into product_community AS

SELECT id as productsku, community

FROM gtimbr.louvain(

SELECT distinct productsku, info_of[hits].has_session[ga_sessions].fullvisitorid

FROM dtimbr.product )Now that you have a concept representing products by community, create a second concept called product_community_level2 to represent the subcommunities of the original six communities.

To create the subcommunities for the new concept, create a new mapping for each of the six original communities. For example, here is the new mapping to present the subcommunities for community ID 0:

CREATE OR REPLACE MAPPING map_product_community_level2_0 INTO product_community_level2 AS

SELECT id AS productsku, community, 0 AS parent_community

FROM gtimbr.louvain(

SELECT distinct productsku, `info_of[hits].has_session[ga_sessions].fullvisitorid`

FROM dtimbr.product

WHERE productsku IN (SELECT distinct productsku

FROM dtimbr.product_community

WHERE community = 0))After you query and map the data of all subcommunities to the new concept, view the results in the built-in BI Tool. Figure 4 shows the breakdown of the subcommunities for each of the original six communities.

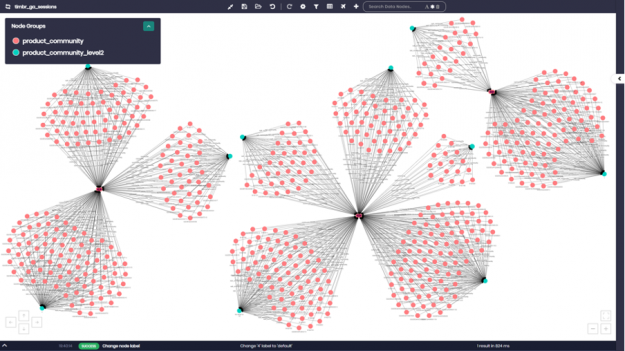

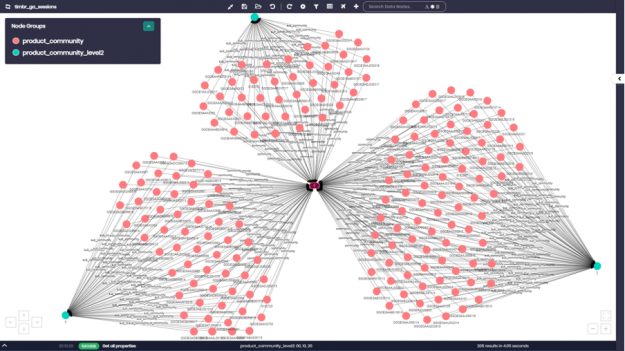

Lastly, view the communities and subcommunities with all their products using timbr’s data explorer. Enter the concepts with the specific communities that you want and ask to see their products. In this case, ask for community numbers 0,1, and 4 as well as their subcommunities. The results show you the products by subcommunity within the larger community.

If you zoom in on community ID number 0, for example, you can see all the different product numbers appearing as pink nodes. Each product number is connected to the different subcommunity to which it belongs, which appear as light blue nodes on the graph.

Link prediction using Jaccard similarity algorithm

A variety of similarity algorithms are used today, including Jaccard, Cosine, Pearson, and Overlap. In the GA Knowledge Graph, we demonstrate the use of the Jaccard similarity with an emphasis on link prediction.

The Jaccard similarity algorithm examines the similarity between different pairs and sets of items, whether they are people, products, or anything else. When using the similarity algorithm, you become exposed to connections between different pairs of people or items that you would never have been able to identify without the use of this unique algorithm.

The use of link prediction with similarity acts as a recommendation algorithm, which extends the idea of linkage measure to a recommendation in a bipartite network. In this case, a bipartite is a network of products and customers.

The similarity algorithm and link prediction are used today in many different use cases. Social networks use this algorithm for many different uses, including making recommendations to users regarding who to connect with based on similar relationships and connections and deciding what advertisements to post for which users based on common interests with other users. One current use case is offering a user a product based on the fact that the similarity algorithm matched them with a different user who bought that same product, which we cover later in this post.

Governments use similarity algorithms to compare populations. Scientists use them to discover connections between different biological components, enabling them to safely develop new drugs. Companies use them to advance machine learning efforts and link prediction analysis.

Here’s how to apply similarity and link prediction in your Google Analytics Knowledge Graph empowered by NVIDIA cuGraph.

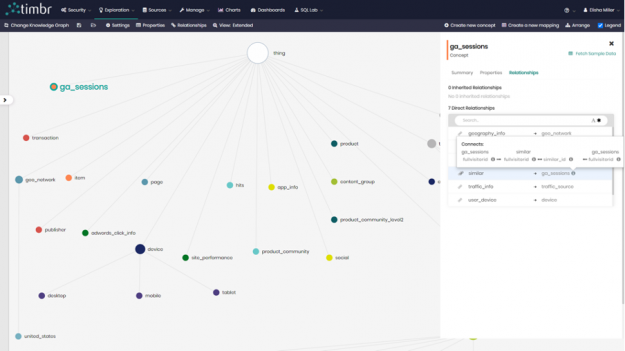

Begin by creating a relationship called similar in your concept ga_sessions, a concept that contains data about all the sessions and visits of users to your website. Unlike in the example earlier on community detection, where you created a relationship between two concepts in your knowledge graph, here you create a relationship between ga_sessions and itself, where the relationship calculates the similarity between customers that searched for more than one similar keyword.

After the relationship is created, it’s time to map the data to the relationship. timbr enables you to map data to concepts and also to map data directly to relationships and extend them with their own properties, thus building a property graph.

The query gives you the similarity between customers that searched for more than one similar keyword, which you can later map to your relationship similar in ga_session:

SELECT id AS fullvisitorid, similar_id AS similarid, similarity

FROM gtimbr.jaccard(

SELECT `has_session[ga_sessions].fullvisitorid`, keyword

FROM dtimbr.traffic_source

WHERE `has_session[ga_sessions].fullvisitorid` IN (

-- Users that searched more than 1 keyword

SELECT distinct `has_session[ga_sessions].fullvisitorid` AS id

FROM dtimbr.traffic_source

WHERE keyword IS NOT NULL AND keyword != '(not provided)'

GROUP BY `has_session[ga_sessions].fullvisitorid`

HAVING count(1) > 1)

AND keyword IS NOT NULL AND keyword != '(not provided)')Again, we compared the performance speeds running this algorithm query with cuGraph and NetworkX. Table 3shows the results.

| NetworkX | RAPIDS cuGraph | |

| Performance speed | 24 seconds | 0.04 seconds |

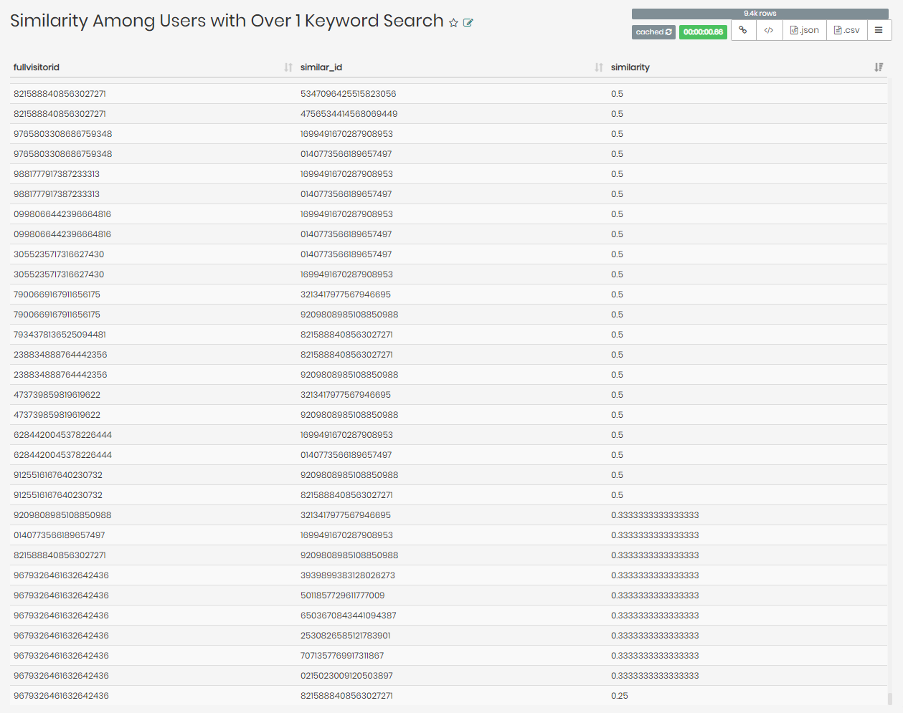

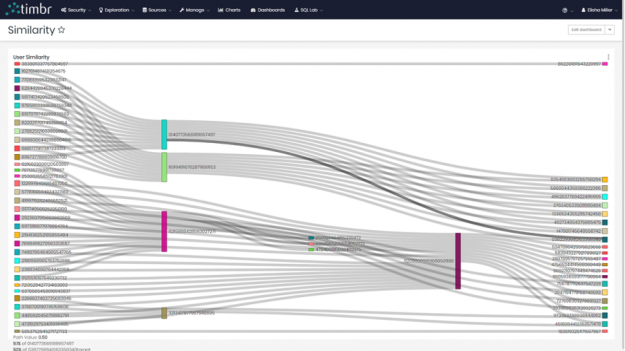

The query asks for the fullvisitorid (user ID), similar_id (IDs of similar users), and similarity (a Jaccard similarity score for each match of user IDs). Figure 8 shows the results.

Next, create a visualization using the created similar relationship. Use the following query to do so:

SELECT DISTINCT `fullvisitorid` AS user_id,

`similar[ga_sessions].fullvisitorid` AS similar_user_id,

`similar[ga_sessions]_similarity` AS similarity

FROM dtimbr.ga_sessions

WHERE `similar[ga_sessions].fullvisitorid` IS NOT NULLNow that you have all the visitor IDs and similar visitor IDs that had more than one similar keyword, as well as _similarity, where the underline before similarity represents the similarity score in the relationship, you are ready to visualize the results.

If you choose the sankey diagram to represent the findings, you see something like the following chart.

You can see the different users on the left and right connecting to their similar match in the middle. Many of these users both in the middle and on the sides are connected to multiple users.

Combining the community and similarity algorithms

In a final example, you can build a recommendation query combing the relationships you’ve created for community and similarity algorithms.

SELECT similar[ga_sessions].has_hits[hits].ecommerce_product_data[product].productsku AS productsku,

similar[ga_sessions].has_hits[hits].ecommerce_product_data[product].in_community[product_community].community AS community,

COUNT(distinct similar[ga_sessions].fullvisitorid) num_of_users

FROM dtimbr.ga_sessions

WHERE fullvisitorid = '9209808985108850988'

AND similar[ga_sessions].has_hits[hits].ecommerce_product_data[product].productsku IS NOT NULL

GROUP BY similar[ga_sessions].has_hits[hits].ecommerce_product_data[product].productsku, similar[ga_sessions].has_hits[hits].ecommerce_product_data[product].in_community[product_community].community

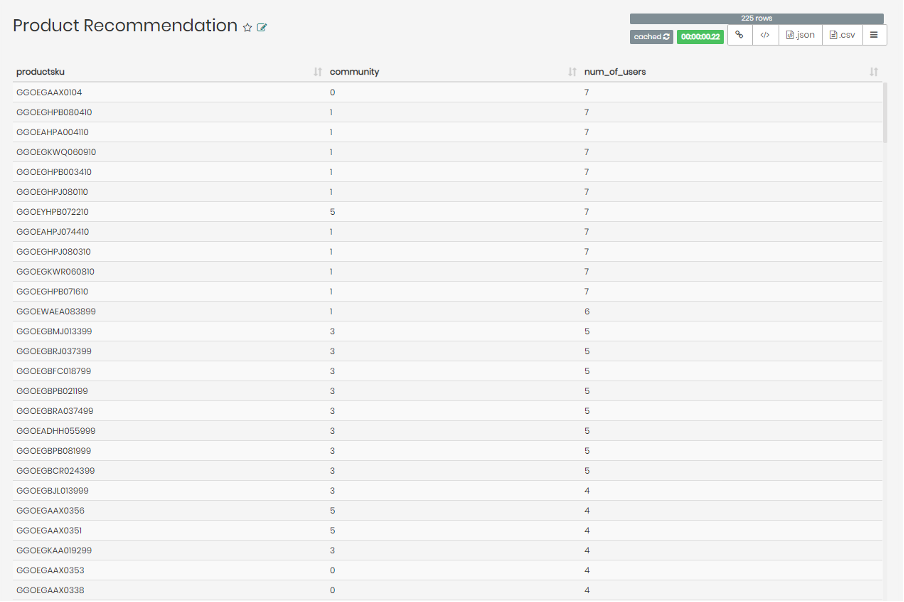

ORDER BY num_of_users DESCThis query focuses on a random visitor with ID number 9209808985108850988. It recommends products for the chosen user based on similar users who previously bought the recommended products. To gain more insight, ask for the communities that the recommended products belong to and see if there are any visible trends.

After running the query, you’ll see the following results:

You can see the list of recommended products for user ID number 9209808985108850988, and the community that these products belong to, as well as the number of similar users to user 9209808985108850988 who bought the specific product on the list.

Interestingly enough, the products recommended for user 9209808985108850988 that have the most similar users who bought these products seem to largely fall in community 1. If you were investigating further, this could direct you to check whether it’s worth recommending more products from community 1 to user 9209808985108850988.

Conclusion

In this post, we demonstrated timbr’s advanced semantic capabilities using the Google Analytics Knowledge Graph combined with Graph Algorithms all in simple SQL, enabling you to analyze, explore, and visualize your data. You can perform in-depth analysis with extremely high performance speeds, going through large amounts of data in a matter of seconds.

You can make the most of your knowledge graph by connecting with RAPIDS cuGraph and NVIDIA GPU capabilities. Using cuGraph enables you to query and analyze a mass amount of data in a fraction of the time it would have taken to do so using the standard CPU in NetworkX.

For more information about leveraging your data and performance speeds like never before, see timbr.ai.