While high-level languages for GPU programming like CUDA C offer a useful level of abstraction, convenience, and maintainability, they inherently hide some of the details of the execution on the hardware. It is sometimes helpful to dig into the underlying assembly code that the hardware is executing to explore performance problems, or to make sure the compiler is generating the code you expect. Reading assembly language is tedious and challenging; thankfully Nsight Visual Studio Edition can help by showing you the correlation between lines in your high-level source code and the executed assembly instructions.

As Mark Harris explained in the previous CUDA Pro Tip, there are two compilation stages required before a kernel written in CUDA C can be executed on the GPU. The first stage compiles the high-level C code into the PTX virtual GPU ISA. The second stage compiles PTX into the actual ISA of the hardware, called SASS (details of SASS can be found in the cuobjdump.pdf installed in the doc folder of the CUDA Toolkit). The hardware ISA generally differs between GPU architectures. To allow forward compatibility, the second compilation stage can be done either as part of the normal compilation using nvcc or at runtime using the integrated JIT compiler in the driver.

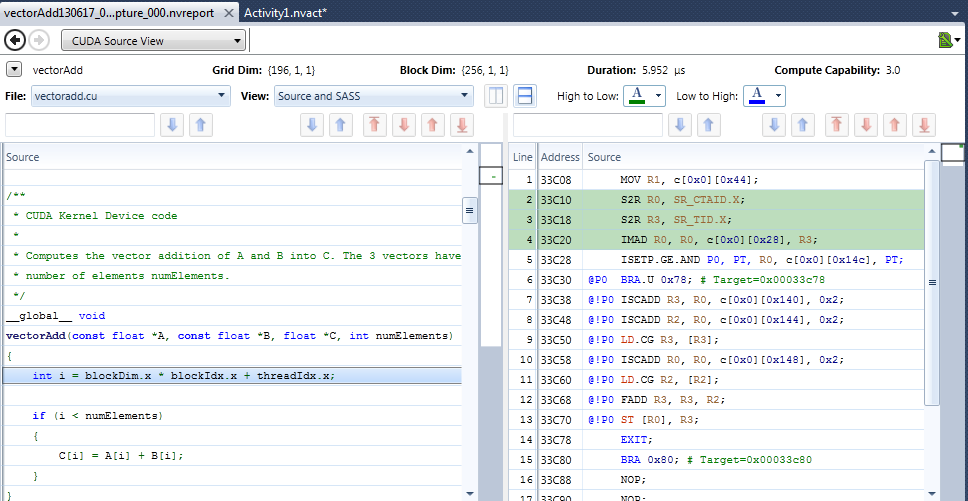

It is possible to manually extract the PTX or SASS from a cubin or executable using the cuobjdump tool included with the CUDA Toolkit. Nsight Visual Studio Edition makes it easier by showing the correlation between lines of CUDA C, PTX, and SASS.

Correlating Source Code to Assembly Instructions

The nvcc compiler is able to generate line mapping information for CUDA C code, which correlates code lines or sections across the different languages. To demonstrate a simple case, the line float d = a * b + c might map to fma.rn.ftz.f32 d, a, b, c in PTX and FMA R0, R1, R2, R3 in SASS. But it is also possible for one statement in CUDA C to map to several non-consecutive instructions in SASS.
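To try the mapping described above by hand, a minimal sketch such as the following can be compiled and inspected. The file name fma.cu, the kernel name fma_kernel, and the host helper run_fma are hypothetical names chosen for illustration. The exact PTX and SASS you see depend on the toolkit version, the target architecture, and the compiler flags, so the instructions named in the comments are what you would typically expect, not a guarantee.

```cuda
#include <cassert>
#include <cuda_runtime.h>

// Hypothetical kernel: one multiply-add per thread.
__global__ void fma_kernel(const float *a, const float *b, const float *c,
                           float *d, int n)
{
    int i = blockIdx.x * blockDim.x + threadIdx.x;
    if (i < n) {
        // Candidate for a single fused multiply-add in PTX and SASS.
        d[i] = a[i] * b[i] + c[i];
    }
}

// Hypothetical host helper: runs the kernel on one element and returns d[0].
// Managed memory keeps the sketch short; error handling is reduced to asserts.
float run_fma(float a, float b, float c)
{
    float *buf = nullptr;
    cudaError_t err = cudaMallocManaged(&buf, 4 * sizeof(float));
    assert(err == cudaSuccess);

    buf[0] = a;
    buf[1] = b;
    buf[2] = c;
    buf[3] = 0.0f;

    fma_kernel<<<1, 1>>>(buf, buf + 1, buf + 2, buf + 3, 1);
    err = cudaDeviceSynchronize();
    assert(err == cudaSuccess);

    float result = buf[3];
    cudaFree(buf);
    return result;
}

// Inspecting the generated code from the command line (file names assumed):
//   nvcc -lineinfo -ptx fma.cu -o fma.ptx        // PTX with line information
//   nvcc -lineinfo -cubin fma.cu -o fma.cubin    // device binary
//   cuobjdump -sass fma.cubin                    // SASS disassembly
```

Searching fma.ptx for an fma instruction and the SASS dump for FFMA or a similar opcode shows where the source line ended up. The surrounding address computation, loads, and store for the same source line are a small example of one statement mapping to several instructions.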

There are several reasons why looking at the line mapping might be useful even if the code is written in CUDA C.

- To find high-level code statements that generate many GPU assembly instructions. For example, if you compile without the --use_fast_math flag, you will see more generated instructions than with --use_fast_math.

- To see if a loop was unrolled or if a device function was inlined.

- To find which variables are spilled to local memory (if any). If your application has high register pressure, the compiler might decide to store (spill) variables to local memory. Knowing where and how often register spills happen can help you estimate their effects on kernel performance.

- To see dependencies between instructions. Instruction dependencies can limit instruction-level parallelism, which in turn limits instruction throughput. Finding dependencies in the GPU assembly can help you see how to rearrange code to achieve a higher instruction throughput.
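The loop unrolling and instruction dependency points above can be explored with a pair of small kernels like the ones sketched here. All names (ELEMS, sum_dependent, sum_independent, run_sum) are hypothetical. The first kernel accumulates into a single register, so each add depends on the previous one. The second uses two accumulators, which gives the hardware two independent chains. Whether the loops are actually unrolled, and how the adds are scheduled, is up to the compiler, which is exactly what the source-to-assembly correlation lets you check.

```cuda
#include <cassert>
#include <cuda_runtime.h>

constexpr int ELEMS = 8;  // elements summed per thread (assumed, must be even)

// One accumulator: every add waits for the result of the previous add.
__global__ void sum_dependent(const float *in, float *out)
{
    int t = blockIdx.x * blockDim.x + threadIdx.x;
    const float *p = in + t * ELEMS;
    float acc = 0.0f;
    #pragma unroll
    for (int k = 0; k < ELEMS; ++k) {
        acc += p[k];
    }
    out[t] = acc;
}

// Two accumulators: the two chains of adds are independent of each other.
__global__ void sum_independent(const float *in, float *out)
{
    int t = blockIdx.x * blockDim.x + threadIdx.x;
    const float *p = in + t * ELEMS;
    float acc0 = 0.0f;
    float acc1 = 0.0f;
    #pragma unroll
    for (int k = 0; k < ELEMS; k += 2) {
        acc0 += p[k];
        acc1 += p[k + 1];
    }
    out[t] = acc0 + acc1;
}

// Hypothetical host helper: sums the values 1..ELEMS with one thread.
float run_sum(bool independent)
{
    float *in = nullptr;
    float *out = nullptr;
    cudaError_t err = cudaMallocManaged(&in, ELEMS * sizeof(float));
    assert(err == cudaSuccess);
    err = cudaMallocManaged(&out, sizeof(float));
    assert(err == cudaSuccess);

    for (int k = 0; k < ELEMS; ++k) {
        in[k] = static_cast<float>(k + 1);
    }
    out[0] = 0.0f;

    if (independent) {
        sum_independent<<<1, 1>>>(in, out);
    } else {
        sum_dependent<<<1, 1>>>(in, out);
    }
    err = cudaDeviceSynchronize();
    assert(err == cudaSuccess);

    float result = out[0];
    cudaFree(in);
    cudaFree(out);
    return result;
}
```

Compiled with line information, the SASS for sum_dependent would be expected to show a series of adds that each read the register written by the one before, while sum_independent should show adds alternating between two registers. If the loop was unrolled, there is no backward branch for the loop in either kernel.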

How to View Code Correlation in Nsight Visual Studio Edition

There are only a few steps required to enable source correlation once you have your CUDA C program running in Nsight Visual Studio Edition.

- Enable line information generation in your project.

  - Click on the Project > Properties menu.

  - Go to CUDA C/C++ > Device and enable Generate Line Number Information.

  For debug builds, line information is automatically generated. In release builds this flag is required to get source code correlation.

- Use Nsight > Start Performance Analysis… and select Profile CUDA Application.

- In Experiment Settings, enable the option Collect Information for CUDA Source View.

- Press Launch, wait until your application finishes, and select CUDA Source View in the top left navigation control of the created report.

More Information

You can find more information about the usage and features of the CUDA Source View in the Nsight Visual Studio Edition documentation. It is also possible to combine the view with profiling and show source-correlated statistics about memory transactions, branches, and instructions.