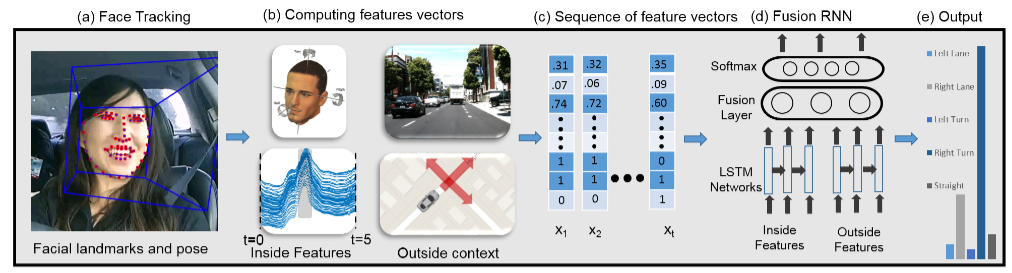

Through a project called Brain4Cars, Stanford and Cornell researchers released a new architecture built from Recurrent Neural Networks (RNNs) with Long Short-Term Memory (LSTM) units that predicts driving maneuvers several seconds in advance. This lets assistive cars alert drivers before they make a dangerous maneuver. Maneuver anticipation complements existing Advanced Driver Assistance Systems (ADAS) by giving drivers more time to react to road situations, which can help prevent many accidents.

Using a Tesla K40, the researchers trained their deep learning architecture in a sequence-to-sequence prediction manner, so that it explicitly learns to predict the future given only a partial temporal context. They also introduce a novel loss layer for anticipation that prevents over-fitting and encourages early anticipation. They use the architecture to anticipate driving maneuvers several seconds before they happen on a natural driving data set of 1180 miles. The context for maneuver anticipation comes from multiple sensors installed on the vehicle. The approach improves significantly on the state of the art in maneuver anticipation, raising precision from 77.4% to 90.5% and recall from 71.2% to 87.4%.
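The article does not spell out the form of the anticipation loss. As a rough illustration of how a loss can "encourage early anticipation", the sketch below assumes a time-weighted negative log-likelihood in which the weight grows exponentially toward the end of the sequence: mistakes made with little context are penalized lightly, and mistakes made close to the maneuver are penalized heavily. The function name `anticipation_loss`, the weighting `exp(-(T - t))`, and the toy probabilities are assumptions for illustration, not the researchers' confirmed implementation.

```python
import math

def anticipation_loss(step_probs, true_class):
    """Time-weighted negative log-likelihood for one driving sequence.

    step_probs: list with one entry per time step; each entry is a list of
                predicted probabilities over maneuver classes.
    true_class: index of the maneuver that actually happened.

    The exponential weighting is an assumed form, used only to show the idea.
    """
    T = len(step_probs)
    loss = 0.0
    for t, probs in enumerate(step_probs, start=1):
        # Weight is small for early steps and reaches 1.0 at the final step.
        weight = math.exp(-(T - t))
        loss += -weight * math.log(probs[true_class])
    return loss

# Toy example with two classes; the true maneuver is class 0.
wrong_early = [[0.1, 0.9], [0.9, 0.1], [0.9, 0.1]]  # unsure at first, right later
wrong_late = [[0.9, 0.1], [0.9, 0.1], [0.1, 0.9]]   # right at first, wrong at the end

loss_wrong_early = anticipation_loss(wrong_early, true_class=0)
loss_wrong_late = anticipation_loss(wrong_late, true_class=0)
```

In this toy case the sequence that is wrong only at the first step receives a much smaller loss than the one that is wrong at the last step, yet the early error still contributes, so the model is still nudged toward committing to the correct maneuver as soon as the context allows.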

Read the entire research paper >>

AI-Generated Summary

- Through the Brain4Cars project, researchers from Stanford and Cornell developed a new architecture that uses Recurrent Neural Networks (RNNs) with Long Short-Term Memory (LSTM) units to predict driving maneuvers several seconds in advance.

- The deep learning architecture was trained on a Tesla K40 in a sequence-to-sequence prediction manner, and it introduces a novel loss layer that prevents over-fitting and encourages early anticipation.

- On a natural driving data set of 1180 miles, the Brain4Cars approach raised maneuver anticipation precision from 77.4% to 90.5% and recall from 71.2% to 87.4%, outperforming the state of the art.
