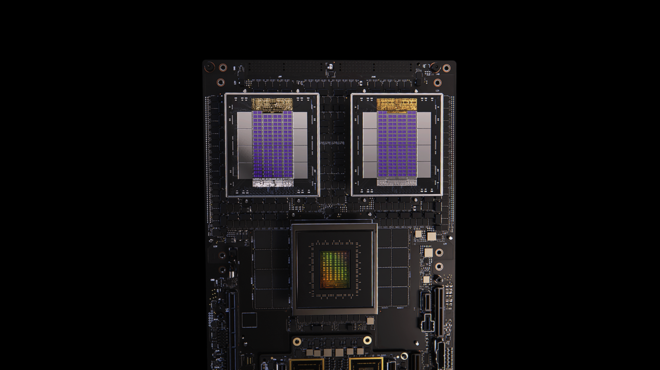

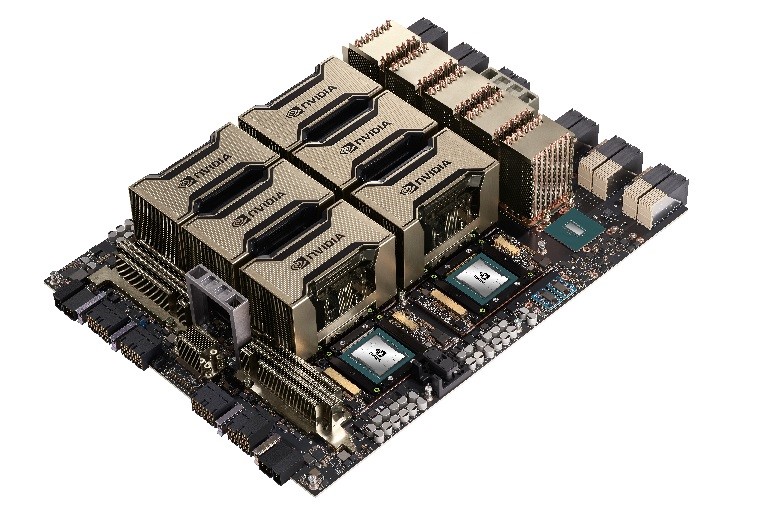

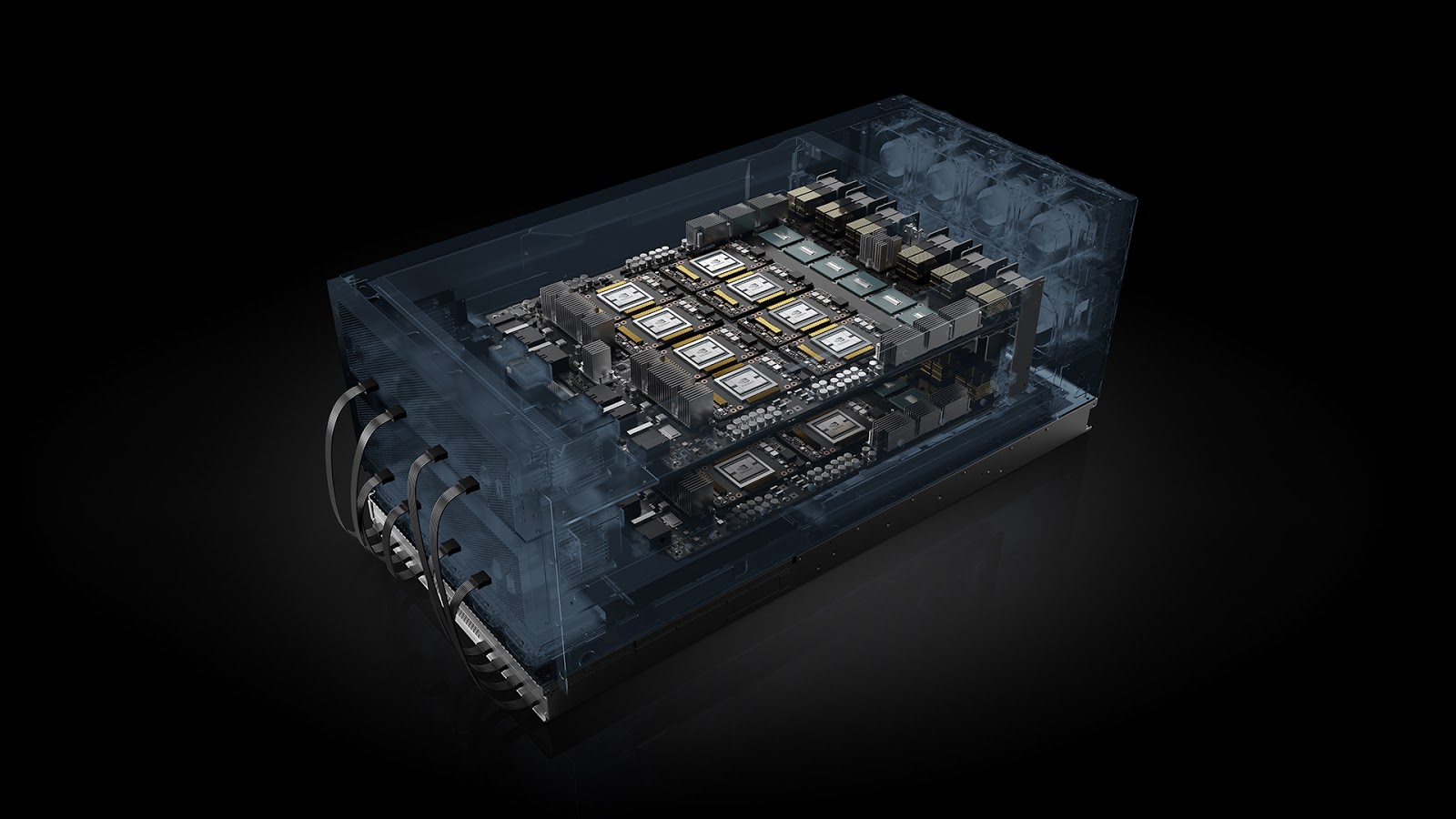

The NVIDIA GB200 NVL72 and NVIDIA GB300 NVL72 systems, featuring NVIDIA Blackwell architecture, are rack-scale supercomputers. They’re designed with 18 tightly coupled compute trays, massive GPU fabrics, and high-bandwidth networking packaged as a unit.

For AI architects and HPC platform operators, the challenge isn’t just racking and stacking hardware—it’s turning infrastructure into safe, performant, and easy-to-use resources for end users. The mismatch between rack-scale hardware topology and scheduler abstractions is where most of the operational complexity lives. Left unaddressed, schedulers operate on a flat pool of GPUs and nodes, overlooking the system’s hierarchical and topology-sensitive design.

This is the gap that a validated software stack, such as NVIDIA Mission Control, is designed to bridge. Mission Control provides rack-scale control planes for NVIDIA Grace Blackwell NVL72 systems. With a native understanding of NVIDIA NVLink and NVIDIA IMEX domains, it integrates with workload management platforms like Slurm and NVIDIA Run:ai. These capabilities will also be supported for the NVIDIA Vera Rubin platform, including for NVIDIA Rubin NVL8.

This post demonstrates how Mission Control, Slurm, and NVIDIA Run:ai turn advanced GPU architecture concepts—such as NVLink and IMEX domains—into an operational AI factory that is scalable, schedulable, and easy to manage.

The core challenge: Rack-scale topology meets AI workload scheduling

At a physical level, GB300 NVL72 and GB200 NVL72 are powerful, sophisticated systems. Each delivers a dense GPU fabric connected by NVLink switches, supports NVIDIA Multi-Node NVLink (MNNVL) within the rack, and includes IMEX-capable compute trays that enable shared GPU memory across nodes.

Schedulers, however, don’t operate at the level of switches and fabrics. They require:

- Discrete GPU resource pools they can allocate predictably.

- Clear isolation boundaries to protect workloads from one another.

- Consistent performance characteristics that match user expectations.

Under the hood, the NVLink topology of a Grace Blackwell NVL72 rack is reflected up the software stack through a pair of system-level identifiers: cluster UUID and clique ID.

These identifiers encode a GPU’s position in the NVLink fabric—across domains or racks—in a way that system software, schedulers, and higher-level tooling can reason about.

The mapping is straightforward:

- Cluster UUID corresponds to the NVLink domain.

- Clique ID corresponds to the NVLink partition.

A shared cluster UUID means that systems—and their GPUs—belong to the same NVLink domain and are connected by a common NVLink fabric. On Grace Blackwell NVL72, this UUID is consistent across the entire rack: all GPUs in the same NVL72 rack report the same cluster UUID.

The clique ID provides a finer-grained distinction. GPUs that share a clique ID belong to the same NVLink Partition within that domain. When a rack is carved into multiple NVLink partitions, the cluster UUID remains the same—because the GPUs live in the same physical NVLink domain—but the clique IDs differ to reflect the logical partitioning of the fabric.

From an operational perspective, this distinction matters:

- Cluster UUID answers: Which GPUs physically share a rack and are capable of NVLink communication?

- Clique ID answers: Which GPUs share an NVLink Partition and are intended to communicate together for a given workload or service tier?

These identifiers form the connective tissue between hardware topology and scheduling logic. They enable platforms like Slurm, Kubernetes, and NVIDIA Run:ai to align job placement, isolation, and performance guarantees with the actual structure of the NVLink fabric, without exposing that complexity directly to end users.
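The mapping above can be sketched in a few lines of Python. This is an illustrative sketch only: the `GpuFabricInfo` record, the node names, and the identifier values are hypothetical, and it assumes the cluster UUID and clique ID for each GPU have already been collected by some other means.

```python
from collections import defaultdict
from typing import NamedTuple


class GpuFabricInfo(NamedTuple):
    """Hypothetical per-GPU record; field values below are made up."""
    node: str          # hostname of the compute tray
    gpu_index: int
    cluster_uuid: str  # identifies the NVLink domain
    clique_id: int     # identifies the NVLink partition within the domain


def group_by_partition(gpus):
    """Map (cluster_uuid, clique_id) -> sorted list of nodes in that partition."""
    partitions = defaultdict(set)
    for gpu in gpus:
        partitions[(gpu.cluster_uuid, gpu.clique_id)].add(gpu.node)
    return {key: sorted(nodes) for key, nodes in partitions.items()}


def same_partition(gpus, node_a, node_b):
    """True only if both nodes are known and all their GPUs share one partition."""
    keys_a = {(g.cluster_uuid, g.clique_id) for g in gpus if g.node == node_a}
    keys_b = {(g.cluster_uuid, g.clique_id) for g in gpus if g.node == node_b}
    return len(keys_a) == 1 and keys_a == keys_b


# Hypothetical inventory: rack A is carved into two partitions, rack B is whole.
EXAMPLE_GPUS = [
    GpuFabricInfo("tray01", 0, "uuid-rack-a", 1),
    GpuFabricInfo("tray02", 0, "uuid-rack-a", 1),
    GpuFabricInfo("tray03", 0, "uuid-rack-a", 2),
    GpuFabricInfo("tray04", 0, "uuid-rack-b", 1),
]
```

With this data, `tray01` and `tray02` share a partition, `tray01` and `tray03` share only the NVLink domain, and `tray01` and `tray04` share neither, even though both report clique ID 1.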

Scheduling Multi-Node NVLink workloads with Slurm

Once you start running multi-node workloads on Blackwell-based NVL72 systems, placement becomes as important as GPU count. A 16-GPU job spread across the wrong nodes can behave very differently from the same job confined to a single NVLink fabric.

This is where Slurm’s topology/block plugin becomes essential, enabling Slurm to recognize that not all nodes are equal.

On Grace Blackwell NVL72, blocks of nodes with lower-latency connections map directly to NVLink partitions: groups of GPUs that share a high-bandwidth NVLink fabric.

Consider a simple example with two NVL72 racks. Both racks may belong to the same Slurm partition (queue), but that doesn’t mean jobs should freely span them. From a performance standpoint, each rack—or each NVLink partition carved from it—is a distinct high-bandwidth block.

By enabling the topology/block plugin and exposing NVLink partitions as blocks, Slurm gains the context it needs to make better decisions. Jobs are placed within a single NVLink partition (or block) by default, preserving MNNVL performance. Larger jobs can span blocks when necessary, but the tradeoff becomes explicit rather than accidental.

In practice, this usually takes one of two forms:

- One block/node group per rack, with Slurm QoS applied at the user or group level to manage access to the shared partition.

- Multiple blocks/node groups per rack when offering smaller, isolated high-bandwidth GPU pools. In this model, each block/node group maps to a Slurm partition, providing a service tier per partition. Users automatically land inside the intended NVLink partition through the Slurm partition—without needing to understand the underlying fabric.
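As a rough illustration of the one-block-per-rack model, the sketch below renders `topology.conf` entries in the `BlockName=`/`BlockSizes=` form used by Slurm's topology/block plugin (enabled with `TopologyPlugin=topology/block` in `slurm.conf`). The block names and node names are hypothetical, and in a Mission Control deployment this file would normally be managed for you rather than generated by hand.

```python
def render_block_topology(blocks, block_sizes):
    """Render topology.conf lines for Slurm's topology/block plugin.

    blocks: mapping of block name -> list of node names, one block per
            NVLink partition.
    block_sizes: allowed block sizes, base (smallest) size first.
    """
    base_size = block_sizes[0]
    lines = []
    for name, nodes in blocks.items():
        # Each block needs at least the base block size worth of nodes.
        if len(nodes) < base_size:
            raise ValueError(
                f"block {name!r} has {len(nodes)} nodes, fewer than base size {base_size}"
            )
        lines.append(f"BlockName={name} Nodes={','.join(nodes)}")
    lines.append("BlockSizes=" + ",".join(str(size) for size in block_sizes))
    return "\n".join(lines) + "\n"


# Hypothetical layout: two NVL72 racks, 18 compute trays each, one block per rack.
EXAMPLE_BLOCKS = {
    rack: [f"{rack}-n{i:02d}" for i in range(1, 19)]
    for rack in ("rack1", "rack2")
}
EXAMPLE_CONF = render_block_topology(EXAMPLE_BLOCKS, [18])
```

Carving a rack into smaller partitions would mean more, smaller blocks and a smaller base block size; the rendering logic stays the same.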

IMEX management with Slurm: From rack-level service to per-job isolation

For multi-node NVIDIA CUDA workloads that rely on MNNVL, IMEX enables GPUs on different compute trays to participate in a shared-memory programming model.

From an application’s point of view, using MNNVL looks deceptively simple. Under the hood, however, Mission Control ensures a few things line up when running MNNVL jobs with Slurm:

- IMEX runs on exactly the set of compute trays participating in the job.

- Those trays belong to a common NVLink partition.

- The IMEX lifecycle is reliable, secure, and scoped tightly enough to avoid cross-job interference.

IMEX is a system-level daemon running in the host OS of every compute tray. It provides memory-sharing and synchronization mechanisms that CUDA libraries build on. If IMEX is mis-scoped, left running too broadly, or fails noisily, multi-node workloads quickly become fragile.
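The first two conditions in the list above can be expressed as a simple check. The following is a minimal sketch, not how Mission Control implements it: the function name, the node-to-partition mapping, and the addresses are all hypothetical. It computes the peer list that a per-job IMEX instance would be scoped to and refuses jobs whose nodes do not share one NVLink partition.

```python
def imex_peers_for_job(job_nodes, node_partition, node_address):
    """Return the peer addresses for an IMEX instance scoped to one job.

    job_nodes: node names allocated to the job.
    node_partition: node name -> (cluster_uuid, clique_id).
    node_address: node name -> IP address (hypothetical values).
    Raises ValueError if the job's nodes do not share one NVLink partition.
    """
    partitions = {node_partition[node] for node in job_nodes}
    if len(partitions) != 1:
        raise ValueError(
            f"job spans {len(partitions)} NVLink partitions; expected exactly 1"
        )
    # Only the trays participating in the job appear in the peer list.
    return [node_address[node] for node in sorted(job_nodes)]


# Hypothetical cluster state.
NODE_PARTITION = {
    "tray01": ("uuid-rack-a", 1),
    "tray02": ("uuid-rack-a", 1),
    "tray03": ("uuid-rack-a", 2),
}
NODE_ADDRESS = {
    "tray01": "10.0.0.1",
    "tray02": "10.0.0.2",
    "tray03": "10.0.0.3",
}
```

A job on `tray01` and `tray02` gets a two-entry peer list, while a job that also includes `tray03` is rejected before any IMEX instance is started for it.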

Extending multi-node NVLink support to Kubernetes and NVIDIA Run:ai

Just as Slurm needs help understanding NVLink fabrics to schedule Grace Blackwell NVL72 jobs optimally, Kubernetes also lacks native awareness of rack-scale high-bandwidth interconnects like NVLink.

To address this, the NVIDIA ecosystem combines ComputeDomains (via the NVIDIA Dynamic Resource Allocation (DRA) GPU driver) and NVIDIA Run:ai integration—lifting NVLink and IMEX concepts into domain-aware, scheduler-ready primitives.

In Kubernetes, dynamic resource allocation (DRA) provides an API for flexible, fine-grained management of specialized hardware.

The NVIDIA k8s-dra-driver-gpu implements this API for GPUs and ComputeDomains. The driver includes separate kubelet plugins for GPU allocation and for ComputeDomains. When enabled, the ComputeDomain plugin manages multi-node NVLink domains, grouping GPUs across nodes into secure, high-bandwidth, memory-coherent domains for HPC and AI workloads.

Kubernetes: From flat schedulers to NVLink-aware placement

In Kubernetes, the core challenge is similar to Slurm. Kubernetes pods need to be placed on nodes that share high-bandwidth connectivity. Kubernetes by itself doesn’t understand NVLink domains, so workloads might be scattered across nodes that lack the required fabric connectivity for efficient multi-node execution.

The solution is the ComputeDomains concept provided by the NVIDIA DRA driver for GPUs. A ComputeDomain:

- Represents a set of nodes that share an NVLink/MNNVL domain.

- Is created when a distributed workload (training or inference) is submitted, and is linked to that workload through a ResourceClaim.

- Is tied to the exact set of pods participating in the workload.

- Is torn down when the workload ends.

By doing this, ComputeDomains make the high-performance fabric first-class in scheduling. ComputeDomains also ensure that underlying resources like IMEX channels are properly instantiated and scoped for the workload.

---
# ComputeDomain spanning 18 nodes (one per compute tray in the rack)
apiVersion: resource.nvidia.com/v1beta1
kind: ComputeDomain
metadata:
  name: compute-domain
spec:
  numNodes: 18
  channel:
    resourceClaimTemplate:
      name: compute-domain-resource-claim
---
# Workload whose pods join the ComputeDomain through the ResourceClaim template
apiVersion: apps/v1
kind: Deployment
metadata:
  name: test-workload
  labels:
    app: test-workload
spec:
  replicas: 18
  selector:
    matchLabels:
      app: test-workload
  template:
    metadata:
      labels:
        app: test-workload
    spec:
      containers:
      - name: ctr
        image: ubuntu:22.04
        command: ["test-workload"]
        resources:
          limits:
            nvidia.com/gpu: 4
          claims:
          - name: compute-domain
      resourceClaims:
      - name: compute-domain
        resourceClaimTemplateName: compute-domain-resource-claim
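The two objects in this manifest have to agree on several values: the ResourceClaim template name, the claim name referenced by the container, and the node and replica counts. One way to keep them in sync is to generate both from a single set of parameters. The helper below is a hypothetical sketch that mirrors the fields shown above. It emits plain dictionaries that can be serialized with `json.dumps`, since Kubernetes accepts JSON manifests as well as YAML.

```python
import json


def build_compute_domain_workload(name, num_nodes, gpus_per_node, image, command):
    """Build a ComputeDomain and a matching Deployment as plain dicts."""
    claim_template = f"{name}-resource-claim"
    labels = {"app": f"{name}-workload"}

    compute_domain = {
        "apiVersion": "resource.nvidia.com/v1beta1",
        "kind": "ComputeDomain",
        "metadata": {"name": name},
        "spec": {
            "numNodes": num_nodes,
            "channel": {"resourceClaimTemplate": {"name": claim_template}},
        },
    }

    deployment = {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": labels["app"], "labels": labels},
        "spec": {
            "replicas": num_nodes,  # kept equal to numNodes, as in the manifest
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    "containers": [{
                        "name": "ctr",
                        "image": image,
                        "command": command,
                        "resources": {
                            "limits": {"nvidia.com/gpu": gpus_per_node},
                            # Must match the pod-level resourceClaims entry.
                            "claims": [{"name": name}],
                        },
                    }],
                    "resourceClaims": [{
                        "name": name,
                        "resourceClaimTemplateName": claim_template,
                    }],
                },
            },
        },
    }
    return compute_domain, deployment


domain, workload = build_compute_domain_workload(
    "compute-domain", 18, 4, "ubuntu:22.04", ["test-workload"]
)
manifest_json = json.dumps([domain, workload], indent=2)
```

Generating both objects together makes a mismatched claim name, which would leave pods waiting on a claim that never resolves, much harder to introduce by accident.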

How NVIDIA Run:ai simplifies distributed workloads on NVLink domains

NVIDIA Run:ai builds on Kubernetes and ComputeDomains to make Grace Blackwell NVL72 systems usable without exposing users to NVLink topology, IMEX domains, or low-level scheduling mechanics. Users request distributed GPUs. NVIDIA Run:ai handles the rest.

Under the hood, NVIDIA Run:ai automates several critical pieces:

- Automatic detection and labeling: GB200 NVL72 nodes are identified and labeled based on their NVLink/MNNVL domain membership. These labels form the foundation for NVLink-aware placement.

- ComputeDomain-backed workload placement: When a job is submitted, NVIDIA Run:ai automatically creates and attaches a ComputeDomain. Pods are placed on nodes that share NVLink connectivity and a correctly scoped IMEX domain.

- Topology-aware scheduling: When a network topology is defined for a node pool, NVIDIA Run:ai applies topology-aware scheduling preferences to keep all pods in a distributed workload as close as possible, only expanding outward when necessary. This reduces latency and avoids accidental cross-domain placement.
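The "as close as possible, expanding outward only when necessary" preference can be illustrated with a toy placement function. This is not the NVIDIA Run:ai scheduler, whose algorithm is not described here. It is a simplified sketch with a single topology level, one pod per node, and hypothetical domain and node names.

```python
def place_pods(num_pods, free_nodes_by_domain):
    """Pick nodes for num_pods pods, preferring a single NVLink domain.

    free_nodes_by_domain: domain name -> list of free node names.
    Returns a list of (domain, node) pairs, one per pod.
    """
    # 1. Prefer the tightest single domain that fits the whole workload.
    fitting = [
        domain for domain, nodes in free_nodes_by_domain.items()
        if len(nodes) >= num_pods
    ]
    if fitting:
        best = min(fitting, key=lambda d: (len(free_nodes_by_domain[d]), d))
        return [(best, node) for node in free_nodes_by_domain[best][:num_pods]]

    # 2. Otherwise expand outward, filling the largest domains first so the
    #    workload spans as few domains as possible.
    placement = []
    by_size = sorted(
        free_nodes_by_domain,
        key=lambda d: (-len(free_nodes_by_domain[d]), d),
    )
    for domain in by_size:
        for node in free_nodes_by_domain[domain]:
            if len(placement) == num_pods:
                return placement
            placement.append((domain, node))
    if len(placement) < num_pods:
        raise ValueError("not enough free nodes for the workload")
    return placement


# Hypothetical free capacity in two NVLink domains.
FREE = {"rack-a": ["a1", "a2", "a3"], "rack-b": ["b1", "b2"]}
```

A two-pod workload lands entirely in `rack-b`, leaving the larger domain free for bigger jobs. A four-pod workload cannot fit in either domain, so it spans both, and that cross-domain placement is an explicit fallback rather than an accident.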

Automatic topology detection with Topograph

All of these approaches rely on accurate knowledge of the underlying hardware and network topology. For platform engineers, manually defining NVLink domains, rack boundaries, or network hierarchies does not scale in large or frequently changing environments. This is where Topograph, an open source NVIDIA tool, fits into the picture.

Topograph automatically discovers cluster topology by collecting node-level and infrastructure metadata and translating it into scheduler-consumable representations. It can detect how nodes are connected—across racks, switches, or fabric hierarchies—and reveals this structure through APIs that higher-level systems can consume. Instead of assuming a flat cluster, schedulers gain a concrete view of proximity and bandwidth relationships.

When combined with Slurm and Kubernetes—along with ComputeDomains and NVIDIA Run:ai—Topograph enables a fully automated flow. Topology is discovered rather than hand-modeled, nodes are labeled consistently, and topology-aware placement decisions can be made with minimal operator intervention. This closes the gap between physical infrastructure and logical scheduling abstractions.

Learn more about advanced AI operations

Check out the Mission Control Administrator Guide or User Guide to learn more about implementation.

Watch an on-demand NVIDIA GTC 2026 session with Eli Lilly & Company and learn how they went from rack-scale hardware to schedulable AI infrastructure with powerful, intelligent software.