VR head-mounted displays (HMDs) continue to dramatically improve with each generation. Resolution, refresh rate, field of view, and other features bring unique challenges to the table. The NVIDIA VRWorks Graphics SDK has been offering various rendering technologies to tackle the challenges brought forth by the increasing capabilities of these new HMDs. NVIDIA Turing introduced Variable Rate Shading (VRS), which offers the ability to change the shading rate within a frame.

Eye tracking, shown in Figure 1, represents another key addition in the new generation of VR HMD hardware. The commercial availability of VR HMDs with integrated eye tracking, such as the new HTC VIVE Pro Eye, provides further motivation for VRS integration, as shading resources can be focused in the foveated region. This post focuses on a simplified way to integrate foveated VRS, with the introduction of the VRS Wrapper NVAPIs.

For an introduction to the basics of VRS, check out the “Turing Variable Rate Shading in VRWorks” post.

Variable Rate Shading in Turing

NVIDIA introduced Variable Rate Shading (VRS) with the Turing GPU architecture. VRS balances rendering performance and quality by varying the amount of processing power applied to different areas of the image. The new shading technology works by altering the number of pixels that are processed by a single pixel shader operation; these operations can now be applied to blocks of pixels, allowing applications to effectively vary the shading quality in different areas of the screen. Variable Rate Shading can be used to render VR more efficiently by rendering to a surface closely approximating the lens-corrected image output to the headset display. This avoids rendering pixels that would otherwise be discarded before the image is output to the VR headset. VRS can also be paired with eye tracking to optimize rendering quality to match the user’s gaze.

Our previous post shines more light on the basics of VRS. This time we focus on a new VRS Wrapper that includes VRS Helper and Gaze Handler APIs, designed for ease of integration for foveated use cases.

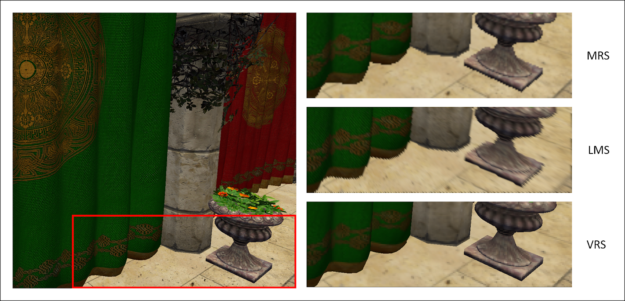

NVIDIA introduced VR rendering optimization features with Maxwell and Pascal, including Multi-Res Shading (MRS) and Lens-Matched Shading (LMS). VRS offers much better edge retention compared to these earlier methods, as well as an overall simpler implementation. Figure 2 below shows a visual comparison between those older methods and VRS, to better illustrate VRS’s advantages:

Foveated Rendering

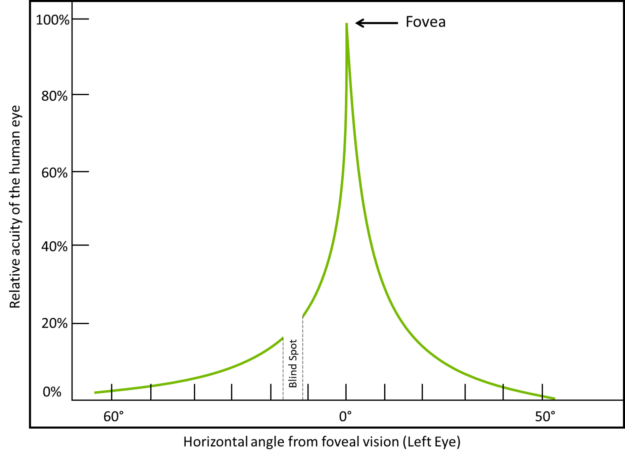

Each new increase in the resolution of head-mounted displays (HMDs) means an increase in the number of pixels that the GPU must render. To maintain a fluid VR experience, we must continue to find new ways to optimize VR rendering performance. The emergence of HMDs with integrated eye tracking offers a new way of improving both perceived visual quality and performance, since the human eye perceives visual quality differently across the field of vision. Based on the number of cone cells present, the acuity or the resolving power of the eye changes as graphed in Figure 3.

The human eye resolves maximum detail only within a very narrow central region of the retina, called the fovea. The peripheral vision around the fovea is typically blurred, containing much less detail. VRS foveated rendering takes advantage of this quirk of human perception to render at higher quality at the gaze location while rendering at reduced quality in the peripheral region. Foveated rendering improves perceived visual quality in HMDs while maintaining high frame rates. VRS foveated rendering allows developers to change the shading rate based on the gaze input from modern HMDs.

VRS offers a number of tools to help developers implement foveated rendering. However, the low-level NVAPI support for VRS still requires substantial heavy lifting on the part of developers to implement foveated rendering effectively. The latest NVAPI release offers tools to simplify the implementation of VRS foveated rendering.

Introducing VRS Helper and Gaze Handler

The initially released low-level VRS NVAPIs provide fully customizable functionality for VR rendering. The newly introduced VRS Wrapper NVAPIs assist developers in integrating foveated rendering within their application more easily, with less code.

VRS Wrapper consists of the following two interfaces:

- VRS Helper. Used to control the foveated rendering parameters.

- Gaze Handler. Gathers and manages the eye-tracking data.

Table 1 highlights the advantages of implementing foveated rendering using these wrappers compared to the low-level VRS APIs.

| Step | Low-level VRS NVAPIs | VRS Helpers Wrapper NVAPIs |
|---|---|---|
| 1 | Create render target(s) for the scene | Create render target(s) for the scene |
| 2 | Query support for VRS | Query support for VRS and initialize VRS Helper and optionally Gaze Handler interface. |
| 3 | Track the base render targets and create Shading Rate Resource texture(s) as per the render target dimensions | Not required |
| 4 | Create Shading Rate Resource View(s) (SRRVs) and Unordered Access View(s) (UAVs) from Shading Rate Resource texture(s) | Not required |
| 5 | Devise the algorithm and create the compute shader, constant buffer for populating the SRRVs | Not required |
| 6 | Create pixel shaders with appropriate NVAPI flags if VRS supersampling is to be implemented | Not required |
| 7 | Loop | |
| 7a | Track the render targets and create additional SRRVs as per need (e.g. change of resolution scale). | Not required |
| 7b | Gather gaze data from eye-tracker runtime | Required only if eye-tracker runtime does not implement Gaze Handler. Call UpdateGazeData() with the data. Note: This can be done in a parallel thread. |
| 7c | Unset the SRRV if already set | Not required |
| 7d | Set the Shading Rate Surface UAV and use the gaze data via a constant buffer to populate the correct SRRV texture | Not required |
| 7e | Set the correct SRRV using NVAPI | Not required |
| 7f | N/A | VRSHelper->LatchGaze() |
| 7g | Program the shading rate look-up table as per the values populated in the SRRV | Not required |
| 7h | Enable VRS for appropriate viewports using NVAPI | VRSHelper->Enable() |
| 7i | Perform draw calls as per scene requirement | Perform draw calls as per scene requirement |
| 7j | Unset the SRRV | Not required |
| 7k | Disable VRS for appropriate viewports using NVAPI | VRSHelper->Disable() |
| 7l | Release the SRRV before releasing the Shading Rate Surface texture | Not required |

The steps mentioned as “Not Required” above are handled by the backend of the VRS Wrapper NVAPIs included in the display drivers.

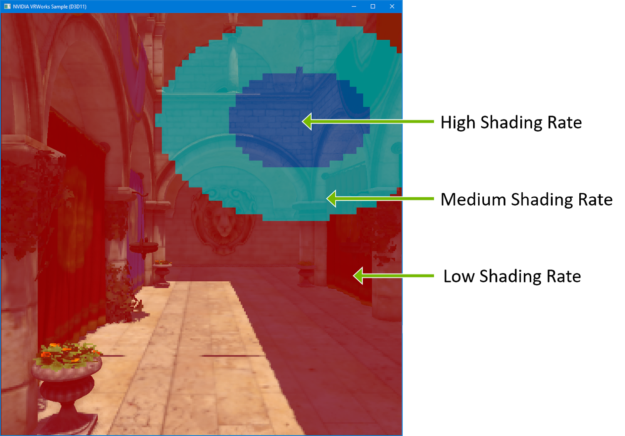

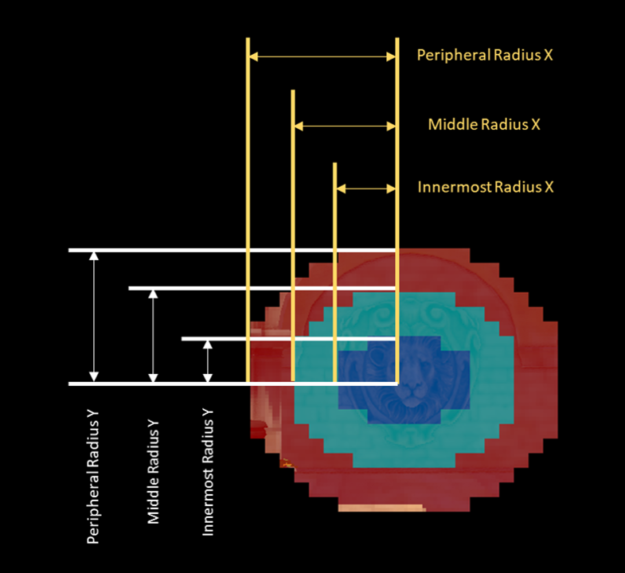

VRS Regions

Before diving into the specifics of setting up VRS Helper, let’s take a closer look at a key concept: VRS regions. Figure 4 shows how the shading rate can vary depending on the gaze. Shading rate refers to the number of shader samples in a block of 4×4 raster pixels. Varying the shading rate within a frame means we’re varying the sampling at different regions within the viewing area. Figure 4 highlights these shading regions.

The dark blue area in Figure 4 corresponds to the foveal region, or the focus point of the eye. This central blue region gets the highest shading rate, followed by the medium shading rate in the cyan region, and the low shading rate in the peripheral red region. This allows developers to maintain a high level of perceived visual quality without adversely affecting frame rate. VRS allows developers to bake these different shading rates into their applications. However, that can require significant coding effort. This is where VRS Helper comes in.

Easy to Use Presets and Customization with VRS Helper

VRS Helper simplifies the implementation of foveated rendering, without giving up flexibility. VRS Helper allows you to perform foveated rendering by choosing from the following.

- Easy-to-use built-in presets. These provide the quickest way to integrate foveated rendering without diving too deep into numerical parameters, while still allowing some flexibility.

- Fully customizable preset. With the custom preset, you can customize most of the internal parameters to perform foveated rendering exactly the way you want.

The choice of presets is made in the Enable() call itself, so the application can change presets dynamically based on any game-dependent factor. These presets can also be explored in the VRWorks Graphics SDK sample application.

Built-in Shading Rates Presets

The shading rates used for foveated rendering can be set using one of the five built-in presets, shown in Table 2. Here, a shading rate such as 2×2 indicates that a single shade evaluated by one pixel shader invocation is replicated across a region of 2×2 pixels, thus achieving coarse shading. A shading rate such as 4×SS (4× supersampling) indicates that the pixel shader is invoked up to 4 times, evaluating up to 4 unique shades for the samples within a single pixel.

| Preset | Shading Rate: Innermost Ellipse | Shading Rate: Middle Region | Shading Rate: Peripheral Region | Render Target MSAA |
|---|---|---|---|---|
| Highest Performance | 1×1 | 2×2 | 4×4 | 1× |
| High Performance | 1×1 | 2×2 | 2×2 | 1× |
| Balanced | 4×SS | 1×1 | 2×2 | 4× |
| High Quality | 4×SS | 2×SS | 1×1 | 4× |
| Highest Quality | 8×SS | 4×SS | 2×SS | 8× |
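To make the shading rates in Table 2 more concrete, the following sketch estimates an upper bound on pixel shader invocations per pixel for a given combination of rates. The `Rate` enum, `ShadingRates` struct, and both functions are hypothetical names for illustration and are not part of NVAPI. The area fractions passed in are assumed inputs, since the actual size of each region depends on the foveation pattern in use.

```cpp
#include <cassert>

// Hypothetical names for illustration; these are not NVAPI enums.
enum class Rate { Coarse4x4, Coarse2x2, Full1x1, Super2x, Super4x, Super8x };

// Upper bound on pixel shader invocations per pixel for a shading rate.
double invocationsPerPixel(Rate rate) {
    switch (rate) {
        case Rate::Coarse4x4: return 1.0 / 16.0;  // one shade per 4x4 pixels
        case Rate::Coarse2x2: return 1.0 / 4.0;   // one shade per 2x2 pixels
        case Rate::Full1x1:   return 1.0;         // one shade per pixel
        case Rate::Super2x:   return 2.0;         // up to 2 shades per pixel
        case Rate::Super4x:   return 4.0;         // up to 4 shades per pixel
        case Rate::Super8x:   return 8.0;         // up to 8 shades per pixel
    }
    return 1.0;  // not reached for valid enum values
}

struct ShadingRates { Rate inner; Rate middle; Rate periphery; };

// Area-weighted invocations per pixel. innerArea and middleArea are the
// fractions of the render target covered by those regions (assumed inputs);
// the remainder is treated as periphery.
double averageInvocations(ShadingRates rates, double innerArea, double middleArea) {
    assert(innerArea >= 0.0 && middleArea >= 0.0 && innerArea + middleArea <= 1.0);
    const double peripheryArea = 1.0 - innerArea - middleArea;
    return innerArea * invocationsPerPixel(rates.inner)
         + middleArea * invocationsPerPixel(rates.middle)
         + peripheryArea * invocationsPerPixel(rates.periphery);
}
```

As an illustration only, with made-up area fractions of 0.125 (innermost) and 0.375 (middle), the Balanced rates average 1.0 invocation per pixel, compared with up to 4.0 for supersampling the whole target at 4×. This is a rough model that ignores geometry, overdraw, and fixed per-frame costs.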

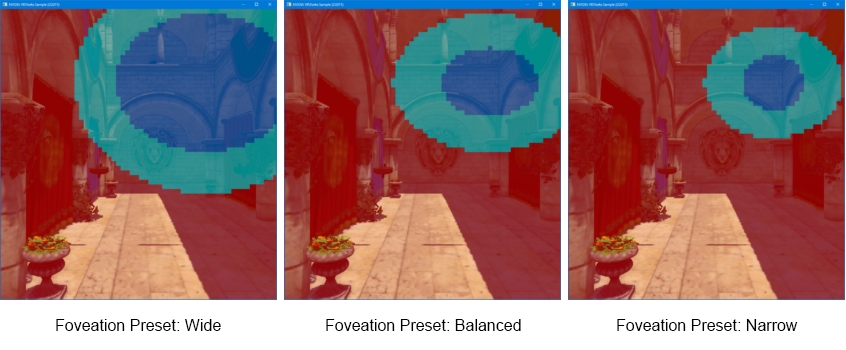

Built-in Foveation Pattern Presets

The foveation pattern can be selected from one of the three built-in presets shown in Figure 4.

Custom Presets

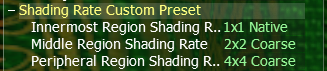

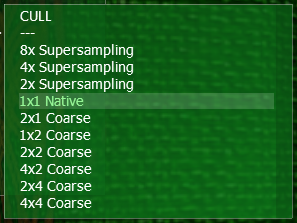

Both the shading rate and the foveation pattern can be fully customized as well. This can be done by choosing the “Custom” preset in the individual settings. Choosing the “Custom” shading rate preset allows you to customize the shading rate of each of the foveation regions. Figures 5 and 6 show the basic menu choices.

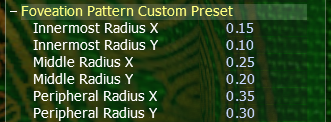

Choosing the “Custom” foveation pattern preset allows you to specify the radii of the three concentric ellipses, as shown in Figure 7. These radii values are on a relative scale from 0.0 to 1.0. For an ellipse placed in the center of the window, a radius of 1.0 would span the width (or height) of the window. Radii of (1.0, 1.0) always represent a perfect circle fitting in the render target, just touching the two longer sides in the case of a rectangle.

Figure 8 depicts the custom preset parameters.
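The relative radii can be illustrated with a small point-in-ellipse test. This is a minimal sketch and not the driver's algorithm: the `Region`, `Radii`, `insideEllipse`, and `classify` names are hypothetical, coordinates are assumed to be normalized to the range -0.5 to +0.5 across the window, and a relative radius of 1.0 is assumed to correspond to a semi-axis of 0.5 in those units so that the ellipse spans the full window. For brevity it uses only two boundaries (innermost and middle) and treats everything outside them as periphery.

```cpp
#include <cassert>

// Hypothetical types for illustration; not part of NVAPI.
enum class Region { Innermost, Middle, Peripheral };

struct Radii { float x; float y; };  // relative scale, 0.0 to 1.0

// Returns true if the normalized point (px, py) lies inside an ellipse
// centered on the gaze location (gx, gy). A relative radius of 1.0 is
// assumed to span the full window, i.e. a semi-axis of 0.5.
bool insideEllipse(float px, float py, float gx, float gy, Radii r) {
    assert(r.x > 0.0f && r.y > 0.0f);
    const float dx = (px - gx) / (0.5f * r.x);
    const float dy = (py - gy) / (0.5f * r.y);
    return dx * dx + dy * dy <= 1.0f;
}

// Picks the region for a point by testing the ellipses from the inside out.
Region classify(float px, float py, float gx, float gy, Radii inner, Radii middle) {
    if (insideEllipse(px, py, gx, gy, inner))  return Region::Innermost;
    if (insideEllipse(px, py, gx, gy, middle)) return Region::Middle;
    return Region::Peripheral;
}
```

Because the ellipses are centered on the gaze location rather than the window center, the same pixel can move between regions from frame to frame as the user's gaze shifts.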

Balanced Preset: Improving Quality while Minimizing Performance Impact

One additional challenge VR introduces over regular desktop rendering is that VR displays tend to have fewer pixels per degree than a desktop monitor, so VR rendering is much more susceptible to aliasing artifacts. Furthermore, bringing as much detail and realism as possible to the rendering is critical to an immersive experience. Developers need to tackle various rendering artifacts, aliasing in particular. This can be addressed by using multi-sampled render targets (MSAA) to soften the objects’ edges.

If the application is using a multi-sampled render target (MSAA), performing supersampling can further enhance the quality of the rendering. Supersampling enables increased sharpness and better anti-aliasing on high-frequency textures, which is not possible with MSAA alone. When supersampling, the pixel shader runs at every sample location within a pixel instead of just once per pixel.

Without VRS, the application would have to perform supersampling on the entire render target. That makes supersampling extremely taxing on the GPU, since the pixel shader load increases. The VRS Helper allows the application to improve the quality while minimizing the performance impact since it can choose to perform supersampling only within the foveal region.

In addition, the “Balanced” shading rate preset performs 2×2 coarse shading in the extreme periphery. This in turn helps regain some of the GPU cycles consumed in performing the central supersampling.

This Balanced preset is achieved by combining the settings as follows:

- Shading Rate Balanced Preset. Performs 4× supersampling in the foveal region, 1×1 shading in the middle region, and 2×2 coarse shading at the periphery.

- Foveation Pattern Balanced Preset. Keeps the foveal region just large enough so that the periphery is outside the instantaneous field of view for most users.

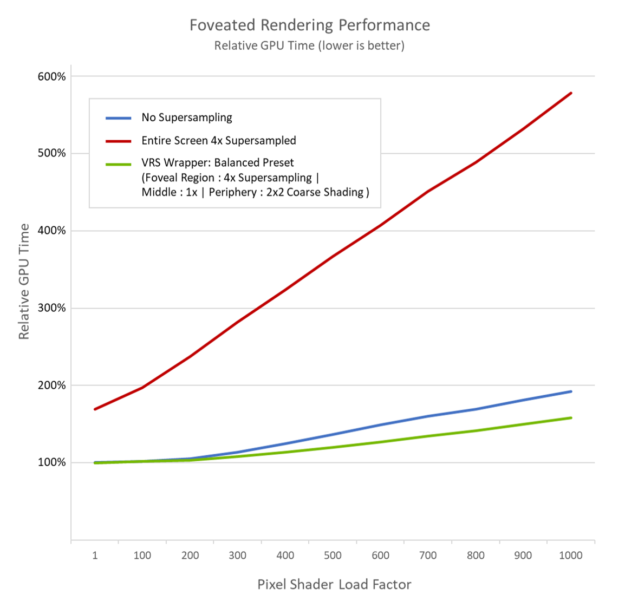

The performance benefits of foveated rendering using VRS Wrappers with Balanced presets versus full-screen supersampling become quite apparent with pixel-heavy rendering, as demonstrated by the performance graph in Figure 9.

* Test environment: RTX 2080 Ti + Core i7 8700K, with Resolution Scale = 2.0 on top of 1920 × 1080 and 4× MSAA. The graph compares VRS Wrapper Balanced preset performance under a simulated pixel shader load. These values were captured from the VRWorks SDK VRS Helper sample. The pixel shader load factor in the graph denotes the lighting loop count in the base pass pixel shader, which controls the load.

The graph shows that using the Balanced foveation pattern and shading rate presets helps improve quality in the foveal region while maintaining performance under heavier loads.

Programming VRS Wrapper APIs

VRS Wrapper APIs allow for programming foveated rendering using VRS Helper and Gaze Handler interfaces.

Initializing VRS Helper

VRS Helper needs to be initialized to perform further operations. This process returns an interface object that can be used for calling other crucial functions.

ID3DNvVRSHelper *m_pVRSHelper = NULL;

NV_VRS_HELPER_INIT_PARAMS vrsHelperInitParams;

...

vrsHelperInitParams.ppVRSHelper = &m_pVRSHelper;

NvAPI_Status NvStatus = NvAPI_D3D_InitializeVRSHelper(m_pDevice, &vrsHelperInitParams);

Initializing the Gaze Handler

If the eye-tracker runtime or VR runtime does not implement Gaze Handler, the application is responsible for initializing it and for updating the gaze data whenever the eye-tracker runtime acquires new data.

ID3DNvGazeHandler *m_pGazeHandler = NULL;

NV_GAZE_HANDLER_INIT_PARAMS gazeHandlerInitParams;

...

gazeHandlerInitParams.GazeDataDeviceId = 0;

gazeHandlerInitParams.GazeDataType = NV_GAZE_DATA_STEREO;

gazeHandlerInitParams.fHorizontalFOV = 110.0;

gazeHandlerInitParams.fVericalFOV = 110.0;

gazeHandlerInitParams.ppNvGazeHandler = &m_pGazeHandler;

NvAPI_Status NvStatus = NvAPI_D3D_InitializeNvGazeHandler(m_pDevice, &gazeHandlerInitParams);

Updating the gaze data

The application can keep updating the gaze data in a parallel thread as follows:

NV_FOVEATED_RENDERING_UPDATE_GAZE_DATA_PARAMS m_gazeDataParams;

m_gazeDataParams.Timestamp = m_gazeTimestamp++; // Or as fetched from the eye tracker hardware

...

m_gazeDataParams.sStereoData.sLeftEye.fGazeNormalizedLocation[0] = ... // ranges from -0.5 to +0.5

m_gazeDataParams.sStereoData.sLeftEye.fGazeNormalizedLocation[1] = ... // ranges from -0.5 to +0.5

m_gazeDataParams.sStereoData.sLeftEye.GazeDataValidityFlags = NV_GAZE_LOCATION_VALID;

...

m_gazeDataParams.sStereoData.sRightEye.fGazeNormalizedLocation[0] = ... // ranges from -0.5 to +0.5

m_gazeDataParams.sStereoData.sRightEye.fGazeNormalizedLocation[1] = ... // ranges from -0.5 to +0.5

m_gazeDataParams.sStereoData.sRightEye.GazeDataValidityFlags = NV_GAZE_LOCATION_VALID;

NvAPI_Status NvStatus = m_pGazeHandler->UpdateGazeData(m_pCtx, &m_gazeDataParams);
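The gaze fields above expect locations in the range -0.5 to +0.5. Eye-tracker runtimes report gaze in different conventions, so a conversion step is often needed. The sketch below assumes the tracker reports a gaze point in pixels with the origin at the top-left corner of the eye's image; `NormalizedGaze` and `normalizeGaze` are hypothetical names, and the axis orientation expected by the Gaze Handler should be confirmed against the programming guide rather than taken from this example.

```cpp
#include <algorithm>
#include <cassert>

// Hypothetical helper type for illustration; not part of NVAPI.
struct NormalizedGaze { float x; float y; };

// Maps a gaze point in pixels to the range [-0.5, +0.5] on each axis, with
// (0, 0) at the center of the image. Out-of-range input is clamped so that
// tracker noise near the edges never produces values outside the range.
NormalizedGaze normalizeGaze(float px, float py, float width, float height) {
    assert(width > 0.0f && height > 0.0f);
    const float nx = std::max(-0.5f, std::min(0.5f, px / width - 0.5f));
    const float ny = std::max(-0.5f, std::min(0.5f, py / height - 0.5f));
    return NormalizedGaze{nx, ny};
}
```

The two returned values would then be written to `fGazeNormalizedLocation[0]` and `fGazeNormalizedLocation[1]` for the corresponding eye.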

Latching the gaze

VRS Helper needs the application to mark the start of the frame. This is the point at which the driver latches the latest gaze and uses it for the rest of the draw calls within that frame.

NV_VRS_HELPER_LATCH_GAZE_PARAMS latchGazeParams;

...

NvAPI_Status NvStatus = m_pVRSHelper->LatchGaze(m_pCtx, &latchGazeParams);
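The idea behind latching can be modeled in a few lines: gaze samples arrive continuously on one thread, while the renderer takes a single snapshot at the start of each frame and uses it for every draw call in that frame. The class below is only a conceptual model with hypothetical names (`GazeSample`, `GazeLatch`); how the driver implements latching internally is not described in this post.

```cpp
#include <cassert>
#include <cstdint>
#include <mutex>

// Hypothetical types for illustration; not part of NVAPI.
struct GazeSample { std::uint64_t timestamp; float x; float y; };

class GazeLatch {
public:
    // Called from the eye-tracker thread whenever a new sample arrives.
    void update(const GazeSample& sample) {
        // Gaze locations are expected in the normalized range [-0.5, +0.5].
        assert(sample.x >= -0.5f && sample.x <= 0.5f);
        assert(sample.y >= -0.5f && sample.y <= 0.5f);
        std::lock_guard<std::mutex> lock(mutex_);
        latest_ = sample;
    }

    // Called once by the render thread at the start of a frame.
    void latchForFrame() {
        std::lock_guard<std::mutex> lock(mutex_);
        latched_ = latest_;
    }

    // The gaze used for all draw calls in the current frame. It does not
    // change until latchForFrame() is called again.
    GazeSample latched() const {
        std::lock_guard<std::mutex> lock(mutex_);
        return latched_;
    }

private:
    mutable std::mutex mutex_;
    GazeSample latest_{0, 0.0f, 0.0f};
    GazeSample latched_{0, 0.0f, 0.0f};
};
```

Keeping the latched value stable for the whole frame means every draw call sees the same foveal center, even if newer samples arrive while the frame is being rendered.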

Enabling foveated rendering with balanced presets

The application then needs to enable foveated rendering with the desired configuration before commencing the draw calls. Foveated rendering remains enabled until the application disables it.

NV_VRS_HELPER_ENABLE_PARAMS m_enableParams;

m_enableParams.RenderMode = NV_VRS_RENDER_MODE_STEREO;

...

m_enableParams.ContentType = NV_VRS_CONTENT_TYPE_FOVEATED_RENDERING;

m_enableParams.sFoveatedRenderingDesc.ShadingRatePreset = NV_FOVEATED_RENDERING_SHADING_RATE_PRESET_BALANCED;

m_enableParams.sFoveatedRenderingDesc.FoveationPatternPreset = NV_FOVEATED_RENDERING_FOVEATION_PATTERN_PRESET_BALANCED;

...

NvAPI_Status NvStatus = m_pVRSHelper->Enable(m_pCtx, &m_enableParams);

Disabling foveated rendering

Once all the draw calls required for foveated rendering have completed, the application should disable foveated rendering. The application would then typically perform post-processing and other rendering tasks, such as offscreen rendering, for which foveated rendering should be disabled.

NV_VRS_HELPER_DISABLE_PARAMS disableParams;

...

NvAPI_Status NvStatus = m_pVRSHelper->Disable(m_pCtx, &disableParams);
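Because foveated rendering stays enabled until it is explicitly disabled, one option is to tie the Enable and Disable calls to a C++ scope so that an early return cannot leave it switched on. The guard below is a generic sketch, not part of NVAPI: `ScopedFoveation` is a hypothetical name, and the two callbacks stand in for whatever the application does to enable and disable foveated rendering.

```cpp
#include <cassert>
#include <functional>
#include <utility>

// Hypothetical scope guard for illustration; not part of NVAPI.
// Runs `enable` on construction and `disable` on destruction, but only if
// `enable` reported success.
class ScopedFoveation {
public:
    ScopedFoveation(const std::function<bool()>& enable, std::function<void()> disable)
        : disable_(std::move(disable)), active_(enable()) {
        assert(static_cast<bool>(disable_));  // a disable callback is required
    }

    ~ScopedFoveation() {
        if (active_) disable_();
    }

    // Non-copyable, so that disable runs exactly once.
    ScopedFoveation(const ScopedFoveation&) = delete;
    ScopedFoveation& operator=(const ScopedFoveation&) = delete;

    bool active() const { return active_; }

private:
    std::function<void()> disable_;
    bool active_;
};
```

In an application, the enable callback would wrap the `m_pVRSHelper->Enable(m_pCtx, &m_enableParams)` call and return whether it succeeded, and the disable callback would wrap `m_pVRSHelper->Disable(m_pCtx, &disableParams)`. The foveated draw calls then go inside the guard's scope, with post-processing placed after it.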

Please refer to the NVIDIA Variable Rate Shading Helpers Programming Guide PDF provided with the VRWorks SDK package for further information about the NVAPIs.

Availability

VRS Wrapper APIs (VRS Helper and Gaze Handler) are available as a part of NVIDIA VRWorks Graphics 3.2 SDK. Developers can try out the included sample and implement VRS Wrapper in their own applications.

The new HTC VIVE Pro Eye — the first commercially available HMD with integrated gaze tracking — has launched. With the launch of the VIVE Pro Eye, HTC has created a Unity plugin, which has the VRS Wrapper NVAPIs integrated. The Unity plugin allows developers to integrate VRS foveated rendering with just a few button presses.