Cloud technologies are increasingly taking over the worldwide IT infrastructure market. With offerings that include elastic compute, storage, and networking, cloud service providers (CSPs) allow customers to rapidly scale their IT infrastructure up and down without having to build and manage it on their own. The increasing demand for differentiated and cost-effective cloud products and services is rapidly translating into growth opportunities for CSPs. All this is driving CSPs toward adopting software-defined data center principles to deploy, operate, and scale their massive infrastructures with the greatest possible agility. However, a purely software-defined solution doesn’t provide the necessary level of performance and efficiency. Only the combination of software-defined, hardware-accelerated cloud infrastructures enables CSPs to prevail in this competitive market.

NVIDIA is building data center–scale computing platforms that transform cloud computing with new levels of performance and efficiency. A key component of this effort is the new NVIDIA BlueField-2 Data Processing Unit, or DPU. BlueField-2 DPUs deliver a range of software-defined and hardware-accelerated infrastructure services including networking, storage, security, and manageability.

In this post, we show you how BlueField-2 DPUs allow cloud service providers, telecom operators, and enterprises to build high-performing, efficient, and secure cloud infrastructures.

Introducing the NVIDIA BlueField-2 DPU

The NVIDIA BlueField-2 DPU thrives in data-centric, I/O-intensive environments. It transforms the way high-performance and efficient clouds are built, from the ground up. BlueField-2 includes a range of purpose-built networking, storage, and security acceleration engines, together with an array of software-programmable Arm cores that enable advanced functionalities. Those functionalities were previously either unachievable or too expensive to use broadly.

BlueField-2 DPUs provide up to 200 Gb/s of Ethernet or InfiniBand connectivity and ample processing capacity. They include all the advanced networking capabilities of the NVIDIA ConnectX-6 Dx SmartNIC, and their Arm cores run a full-fledged Linux OS and applications, including software-defined networking agents, NVMe drivers, security or firewall functions, and more.

This extreme level of software programmability, coupled with the broad Arm ecosystem, makes BlueField-2 a powerful data processing platform that unlocks countless applications and services across cloud, on-premises, and edge environments.

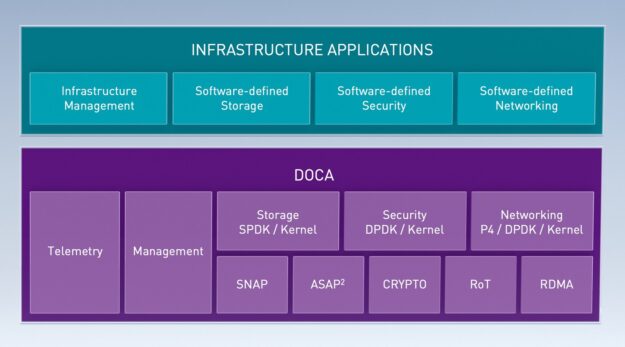

While the first generation of the BlueField DPU was positioned mainly for bare-metal clouds and storage applications, BlueField-2 delivers much broader capabilities across a greater range of scenarios, including virtualized clouds, SDN/NFV, cybersecurity, and AI. By building on the DOCA SDK, you can innovate faster, creating advanced solutions with greater agility and flexibility. The Linux OS and Arm ecosystem make porting applications to the BlueField-2 DPU straightforward, and applications that already support ConnectX accelerations seamlessly support the same features on BlueField-2.

Accelerated cloud networking

On their journey to build elastic, multi-tenant cloud platforms, CSPs deploy software-defined networking to provide the necessary isolation between customer organizations or tenants. Each tenant needs to have its own virtual private cloud (VPC), including its own VPN, firewall, automation framework, and storage. NVIDIA is a leading provider of SDN acceleration technologies with its flagship ASAP2 advanced switching and packet processing technology built into the DPU.
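
The post doesn’t say which overlay protocol carries tenant traffic. As an illustration only, assuming VXLAN (RFC 7348), a common SDN overlay, each tenant’s VPC can be mapped to its own 24-bit VXLAN Network Identifier (VNI), and the receiving side delivers a decapsulated frame only to the tenant that owns the VNI in its header. The helper names and the VNI-to-tenant mapping below are hypothetical, not part of any NVIDIA API.

```python
import struct
from typing import Dict, Optional

# Assumption: VXLAN (RFC 7348) is used here only as an example overlay.
VXLAN_FLAG_VNI_VALID = 0x08  # "I" flag: the VNI field is valid
VXLAN_HEADER_LEN = 8


def vxlan_header(vni: int) -> bytes:
    """Build an 8-byte VXLAN header: flags, 3 reserved bytes, 24-bit VNI, 1 reserved byte."""
    if not 0 <= vni < 2 ** 24:
        raise ValueError("VNI must fit in 24 bits")
    # The VNI occupies the upper 24 bits of the second 32-bit word.
    return struct.pack("!B3xI", VXLAN_FLAG_VNI_VALID, vni << 8)


def parse_vni(packet: bytes) -> int:
    """Extract the VNI from the start of a VXLAN-encapsulated payload."""
    flags, word = struct.unpack("!B3xI", packet[:VXLAN_HEADER_LEN])
    if not flags & VXLAN_FLAG_VNI_VALID:
        raise ValueError("VNI flag not set")
    return word >> 8


def tenant_for(packet: bytes, vni_to_tenant: Dict[int, str]) -> Optional[str]:
    """Return the owning tenant, or None (drop) for an unknown VNI."""
    return vni_to_tenant.get(parse_vni(packet))


# Hypothetical tenants, each with its own VNI.
vni_to_tenant = {5001: "tenant-a", 5002: "tenant-b"}
packet = vxlan_header(5001) + b"inner Ethernet frame"
print(tenant_for(packet, vni_to_tenant))  # tenant-a
```

Because isolation reduces to a header lookup and an encap/decap step per packet, it is the kind of repetitive work that benefits from being moved off the host CPU.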

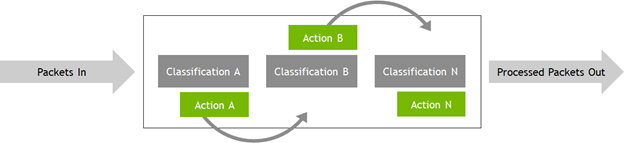

At the heart of ASAP2, which delivers breakthrough cloud-networking performance, is the eSwitch, an ASIC-embedded switch. The eSwitch lets the DPU handle a large portion of packet-processing operations in hardware, freeing up the host CPU and providing higher network throughput. Nearly all traffic into or out of the server, and even traffic between VMs or containers on the same server, can be processed quickly by the eSwitch on the DPU. ASAP2 uses the eSwitch to deliver the best of both worlds: the performance and efficiency of bare-metal server networking with the flexibility of SDN. BlueField-2 DPUs also offload the SDN control-plane software to the programmable Arm cores. This results in additional CPU savings along with better control and enhanced security for CSPs in bare-metal, virtualized, and containerized environments.
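
The split between SDN software and the eSwitch can be pictured as a flow cache: the first packet of a flow misses the hardware table and is handed to software, which decides an action and installs a matching rule, so every later packet of that flow is handled in hardware. The toy model below is a minimal sketch of that idea only; the class, the 5-tuple key, and the `policy` function are hypothetical stand-ins, not the ASAP2 or OVS API.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Tuple

# 5-tuple flow key: (src_ip, dst_ip, protocol, src_port, dst_port)
FlowKey = Tuple[str, str, str, int, int]


@dataclass
class OffloadedSwitch:
    """Toy match-action table with a software slow path (hypothetical model).

    `slow_path` stands in for the SDN software (for example, an OVS policy
    lookup); `hw_table` stands in for rules installed in the eSwitch.
    """
    slow_path: Callable[[FlowKey], str]
    hw_table: Dict[FlowKey, str] = field(default_factory=dict)
    hw_hits: int = 0
    sw_misses: int = 0

    def process(self, key: FlowKey) -> str:
        action = self.hw_table.get(key)
        if action is not None:
            self.hw_hits += 1  # fast path: matched in the modeled hardware table
            return action
        self.sw_misses += 1  # first packet of a flow: ask the software policy
        action = self.slow_path(key)
        self.hw_table[key] = action  # install the rule so later packets stay in hardware
        return action


def policy(key: FlowKey) -> str:
    # Hypothetical policy: forward HTTPS to a virtual function, drop everything else.
    return "forward:vf1" if key[4] == 443 else "drop"


sw = OffloadedSwitch(slow_path=policy)
flow = ("10.0.0.1", "10.0.0.2", "tcp", 40000, 443)
for _ in range(1000):
    sw.process(flow)
print(sw.sw_misses, sw.hw_hits)  # 1 999
```

Only one packet in a thousand-packet flow touches software in this model, which is why offloading the per-packet work frees the host CPU.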

The ASAP2 technology is integrated upstream in the Linux kernel and in a range of leading SDN stacks, including Open vSwitch (OVS) and Open Virtual Network (OVN). This integration enables cloud builders to provision virtual private cloud networks with unparalleled efficiency in their choice of enterprise and telco-grade environments.

Enabling composable block storage

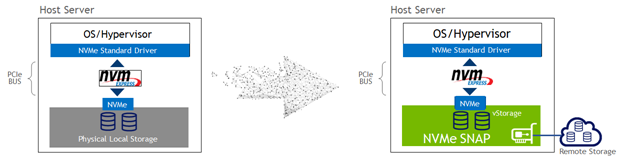

In high-performance cloud environments, CSPs usually install storage media on every host to deliver the best application performance. However, installing local storage at massive scale has many operational drawbacks: it limits the ability to elastically provision and reallocate storage as needed, and it makes these local storage resources harder to maintain and protect across a fleet of nodes. When you’re designing a high-performance cloud environment, there’s a conflict between what is best for application performance, which is over-provisioned local storage, and what is most easily composable and protectable for the CSP, which is thinly provisioned networked storage.

With BlueField-2 NVMe SNAP technology, you can now provision networked, elastic block storage to each server with little to no impact on application performance. SNAP presents the networked storage to the host as a local NVMe PCIe device, which creates a win-win situation. Compute nodes continue to use their standard operating system’s NVMe PCIe driver with little to no performance degradation, while the CSP gains the efficiency of networked storage: it is virtualized, thinly provisioned, and backed up, and it can be migrated between servers as needed, saving both capex and opex.
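
Thin provisioning means a volume can advertise more capacity than it physically consumes, because blocks are allocated only when first written. The toy volume below is a minimal sketch of that concept, assuming a simple allocate-on-write map and 4,096-byte blocks; `ThinVolume` is hypothetical and is neither the NVMe SNAP API nor NVIDIA’s backend implementation.

```python
from typing import Dict

BLOCK_SIZE = 4096  # assumption: 4-KB logical blocks


class ThinVolume:
    """Toy thin-provisioned block volume (hypothetical, for illustration).

    Blocks are allocated on first write; reading a never-written block
    returns zeros without consuming any backing space.
    """

    def __init__(self, num_blocks: int) -> None:
        self.num_blocks = num_blocks
        self._blocks: Dict[int, bytes] = {}  # LBA -> data, only for written blocks

    def _check(self, lba: int) -> None:
        if not 0 <= lba < self.num_blocks:
            raise IndexError(f"LBA {lba} out of range")

    def write(self, lba: int, data: bytes) -> None:
        self._check(lba)
        if len(data) != BLOCK_SIZE:
            raise ValueError("writes must be exactly one block")
        self._blocks[lba] = bytes(data)

    def read(self, lba: int) -> bytes:
        self._check(lba)
        return self._blocks.get(lba, bytes(BLOCK_SIZE))

    @property
    def logical_bytes(self) -> int:
        return self.num_blocks * BLOCK_SIZE  # capacity advertised to the host

    @property
    def allocated_bytes(self) -> int:
        return len(self._blocks) * BLOCK_SIZE  # space actually consumed


vol = ThinVolume(num_blocks=262_144)  # 1 GiB advertised
vol.write(0, b"\x01" * BLOCK_SIZE)
vol.write(1000, b"\x02" * BLOCK_SIZE)
print(vol.logical_bytes, vol.allocated_bytes)  # 1073741824 8192
```

Because the host sees only an NVMe device, the backing map can live on networked storage and be resized, backed up, or moved without the host noticing.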

Transforming cloud security

The cloud computing era has opened a host of cybersecurity challenges. The economic and operational nature of cloud platforms brings together many organizations, teams, and application workloads. These highly distributed, multi-tenant, and dynamic computing environments are driving the demand for new breeds of data center and cloud security solutions.

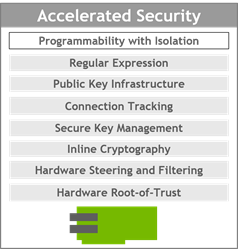

BlueField-2 DPUs transform cloud security by introducing innovative hardware engines that accelerate security across the entire stack, for every host. These engines protect cloud infrastructures and are geared towards the following functions:

- Enhancing platform security

- Accelerating encryption and decryption at line speed

- Enforcing software-defined security policies in hardware

- Performing stateful packet filtering

- Storing and managing keys in hardware and accelerating PKI exchanges

- Detecting malicious code and mitigating attacks
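
Stateful packet filtering, one of the functions listed above, means the filter remembers connections rather than judging each packet in isolation: hosts on the inside may open connections, and inbound packets are accepted only if they are replies to a tracked connection. The sketch below is a minimal illustration of that technique; the class name and addresses are hypothetical, and a real connection tracker also follows TCP state transitions and expires idle entries, which this sketch omits.

```python
import ipaddress
from typing import Set, Tuple

# (src_ip, src_port, dst_ip, dst_port, proto)
Conn = Tuple[str, int, str, int, str]


class StatefulFilter:
    """Minimal connection-tracking filter (hypothetical illustration).

    Outbound packets from the protected network are allowed and recorded;
    inbound packets are allowed only if they reverse a recorded connection.
    """

    def __init__(self, inside_network: str) -> None:
        self.inside = ipaddress.ip_network(inside_network)
        self.established: Set[Conn] = set()

    def allow(self, src_ip: str, src_port: int, dst_ip: str, dst_port: int, proto: str) -> bool:
        if ipaddress.ip_address(src_ip) in self.inside:
            # Outbound: remember the connection so replies can be matched.
            self.established.add((src_ip, src_port, dst_ip, dst_port, proto))
            return True
        # Inbound: accept only if the reversed tuple is a tracked connection.
        return (dst_ip, dst_port, src_ip, src_port, proto) in self.established


fw = StatefulFilter("10.0.0.0/24")
print(fw.allow("10.0.0.5", 51000, "198.51.100.7", 443, "tcp"))   # True (outbound)
print(fw.allow("198.51.100.7", 443, "10.0.0.5", 51000, "tcp"))   # True (reply)
print(fw.allow("203.0.113.9", 12345, "10.0.0.5", 22, "tcp"))     # False (unsolicited)
```

The per-packet set lookup is simple but runs for every packet on the link, which is why doing it in hardware matters at line rate.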

The BlueField-2 DPU enables security functions to run on its Arm cores, fully isolated from the host’s CPU and operating system. This isolation makes BlueField-2 well suited for zero-trust security solutions: it separates the security functions from the host while still delivering unmatched performance.

If a host is compromised, the separation between the security functions and the compromised host helps stop the attack from spreading further.

Accelerating cloud-native AI workloads

Kubernetes plays an important role in the AI ecosystem, as new applications are built as microservices that run in a highly distributed environment. The widespread use of GPUs for ML and AI workloads makes advanced networking a crucial component in these scale-out Kubernetes clusters.

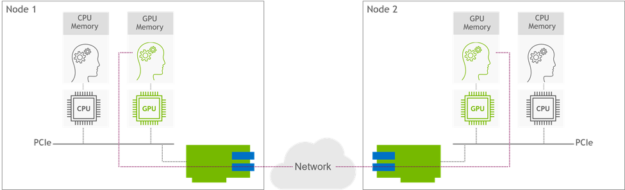

BlueField-2 DPUs provide hardware acceleration for remote direct memory access (RDMA) and RDMA over Converged Ethernet (RoCE) communications. The GPUDirect RDMA technology featured in BlueField-2 unlocks high-throughput, low-latency network connectivity in data center–scale computing clusters. GPUDirect RDMA enables efficient, zero-copy data transfers between GPUs across the network by using the hardware engines in the network ASIC, so data doesn’t need to be staged through host memory. That accelerates cloud-native AI workloads by orders of magnitude.

BlueField-2 DPU performance

The following data points are a few examples of BlueField-2 DPU performance in networking, storage, and security, respectively:

- BlueField-2 delivers line-rate connectivity at 100 Gb/s with zero host CPU utilization for cloud overlay networking, where two VMs of the same tenant communicate across the network.

- BlueField-2 NVMe SNAP technology provides 2.5M+ IOPS of read/write access, which is line-rate performance at 100 Gb/s with 4-KB block sizes. For comparison, a modern NVMe storage card provides about 300K 4-KB IOPS. BlueField-2 enables software-defined, composable storage with performance comparable to several local NVMe SSDs.

- BlueField-2 DPUs seamlessly add IPsec encryption and decryption at 100 Gb/s. The host is completely unaware that the traffic is being encrypted or decrypted, so the DPU enables end-to-end encryption and decryption without consuming any host CPU cycles.
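
The storage figures above can be sanity-checked with simple arithmetic. The sketch below assumes a 4-KB block means 4,096 bytes and ignores framing and protocol overhead, so it gives only the payload bound; the function names are illustrative.

```python
def iops_at_line_rate(link_gbps: float, block_bytes: int) -> float:
    """Upper bound on IOPS if every bit on the link were block payload (no overhead)."""
    return link_gbps * 1e9 / (block_bytes * 8)


def payload_gbps(iops: float, block_bytes: int) -> float:
    """Payload throughput carried by a given IOPS rate."""
    return iops * block_bytes * 8 / 1e9


def ssd_equivalents(iops: float, iops_per_ssd: float) -> float:
    """How many drives of a given IOPS rating the same rate represents."""
    return iops / iops_per_ssd


print(f"{iops_at_line_rate(100, 4096):,.0f}")   # 3,051,758
print(f"{payload_gbps(2.5e6, 4096):.2f}")       # 81.92
print(f"{ssd_equivalents(2.5e6, 300e3):.1f}")   # 8.3
```

Under these assumptions, 2.5M 4-KB IOPS carries about 82 Gb/s of pure payload, which is plausibly close to a 100 Gb/s line once headers are counted, and corresponds to roughly eight NVMe drives rated at 300K IOPS each.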

Enabling data center–scale cloud computing

BlueField-2 DPUs are revolutionizing the way cloud service providers, telco operators, and enterprises build data center–scale computing platforms by taking advantage of best-in-class hardware acceleration engines and cloud-native software.

For more information about NVIDIA BlueField-2 DPU features and capabilities, see the following resources: