AI is impacting every industry, from improving customer service and streamlining supply chains to accelerating cancer research. As enterprises invest in AI to stay ahead of the competition, they often struggle with finding the strategy and infrastructure for success. Many AI projects are rapidly evolving, which makes production at scale especially challenging.

We believe in developing product-grade AI for scale.

Think MLOps first

MLOps is the combination of AI-enabling tools and a set of best practices for automating, streamlining, scaling, and monitoring ML models from training to deployment.

Best practices to develop an efficient MLOps platform

An ideal MLOps platform is a comprehensive solution that supports the entire machine learning lifecycle, from data preparation and model development to model deployment and monitoring. It should provide a seamless integration of tools and technologies that can enable organizations to build, deploy, and manage machine learning models with ease.

Developing an MLOps platform for AI development and deployment at scale involves several key steps:

- Define the objectives.

- Identify the tools and technologies.

- Establish a model development workflow.

- Automate the pipeline.

- Monitor and manage models.

- Implement security and governance.

- Test and refine the platform.

- Continuously monitor the performance and accuracy of models in production.

Define the objectives

Clearly define what you want to achieve with your MLOps platform. This could include improving model development workflows, ensuring model quality, automating model deployment and management, or a combination of these.

Identify the tools and technologies

Determine the tools and technologies to be used for different stages of the MLOps pipeline: version control, continuous integration, continuous delivery, and monitoring.

Establish a model development workflow

Define the model development process and create a workflow that integrates the tools and technologies you have chosen. The model development workflow includes stages such as data preprocessing, model training, testing, and validation.

Automate the pipeline

Automating the model development pipeline using tools such as Jenkins, Travis CI, or CircleCI makes it easier to reproduce the model development process, reduce the time and effort required to deploy models, and help ensure consistency and quality.

Monitor and manage models

Implement a monitoring and management system for your models with logging and monitoring of model performance, version control for model artifacts, and a system for rolling out and updating models.

Implement security and governance

Implement security measures to ensure that sensitive data is protected and that models are developed, deployed, and managed in accordance with regulations and policies.

Test and refine the platform

Test the MLOps platform to ensure it is working as expected and refine it based on feedback from users. Continuously monitor and evaluate the platform to ensure it continues to meet the needs of your organization.

Continuously monitor the performance and accuracy of models in production

Continuously monitor and evaluate the performance and accuracy of models in production to make improvements to the model development process and the MLOps platform.

True MLOps

With a true MLOps platform, enterprises have the foundation to streamline AI development to deployment at scale.

A complete, integrated MLOps platform for any enterprise or organization should enable various personas contributing as data scientists, ML engineers, DevOps, AI practitioners, product managers, compliance, security, and many more to collaborate efficiently.

Accelerate MLOps at scale

Despite the many benefits and growing need for an end-to-end MLOps platform, there are challenges to deploying MLOps at scale. The MLOps ecosystem is a continuously evolving segment consisting of multiple independent software vendors and building your own MLOps infrastructure can be daunting.

NVIDIA and MLOps

NVIDIA is partnering with leading MLOps solution providers to simplify the development and deployment of accelerated AI with certification and integration with NVIDIA AI solutions.

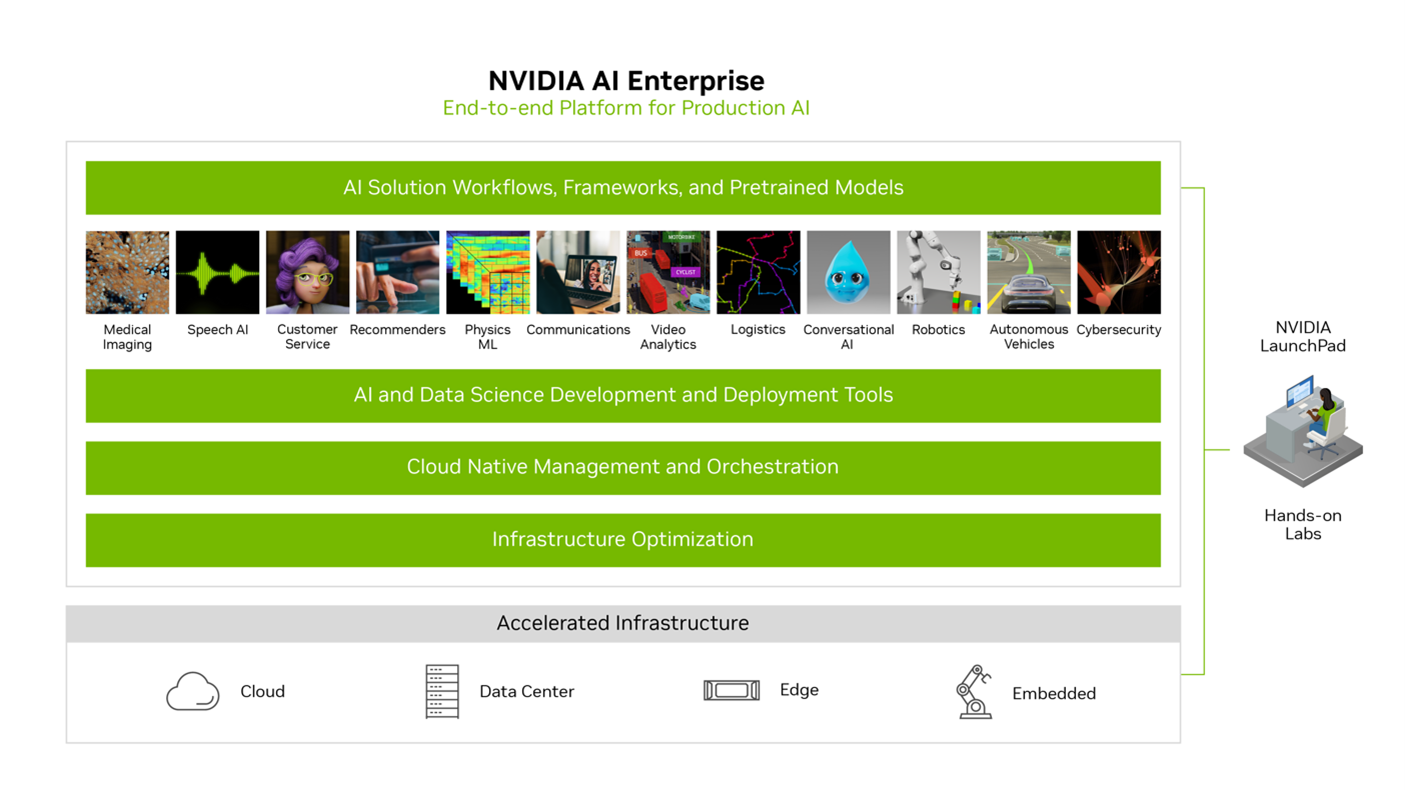

NVIDIA accelerated computing solutions for MLOps includes NVIDIA DGX systems, a portfolio of purpose-built AI infrastructure, and NVIDIA AI Enterprise, end-to-end, secure, cloud-native suite of AI software, optimized, validated, and supported for every organization to excel at AI, as well as an extensive library of full-stack software including AI solution workflows, frameworks, pretrained models, and infrastructure optimization.

At GTC 2023, learn how NVIDIA partners with leading MLOps solution providers to ensure reliable, high-performance end-to-end AI solutions accelerated by the NVIDIA AI platform.

How to Develop AI Workflows and MLOps Infrastructure at Scale

In this session, a panel of experts discusses fundamentals to rapidly build AI-enabled applications, respective workflows, and full-stack MLOps infrastructure.

- Manish Harsh, Global DevRel, MLOps Integrations and Partners, NVIDIA

- Yaron Haviv, co-founder and CTO, Iguazio

- Aparna Dhinakaran, co-founder and chief product officer, Arize AI

- Shelbee Eigenbrode, principal ML specialist solution architect, Amazon Web Services (AWS)

- Tina Naro, director of product marketing, ClearML