Participants in the “Learning to Run” competition at the Neural Information Processing Systems (NIPS) conference are vying for the chance to win an NVIDIA DGX Station, the fastest personal supercomputer for researchers and data scientists.

Using deep reinforcement learning and open-source tools, competitors must develop a controller that enables a physiologically based human model to navigate a complex obstacle course as quickly as possible. A human musculoskeletal model and a physics-based simulation environment are provided to synthesize physically and physiologically accurate motion. Obstacles may be external, such as steps or a slippery floor, or internal, such as muscle weakness or motor noise. Submissions are scored on the distance traveled through the obstacle course in a set amount of time.

Łukasz Kidziński, a postdoctoral fellow in bioengineering at Stanford, dreamed up the contest as a way to better understand how people with cerebral palsy will respond to muscle-relaxing surgery. Often, doctors resort to surgery to improve a patient’s gait, but it doesn’t always work.

“The key question is how to predict how patients will walk after surgery,” said Kidziński. “That’s a big question, which is extremely difficult to approach.”

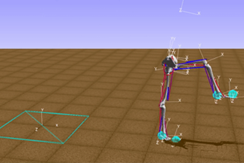

A competition submission of a virtual skeleton learning to walk.

So far, 361 participants have made 1,244 submissions to the competition, which is one of five official challenges in the NIPS 2017 Competition Track. The winner will receive an NVIDIA DGX Station, and the second- and third-place finishers will each receive an NVIDIA TITAN Xp GPU. Amazon has also donated $30,000 worth of Amazon Web Services (AWS) credits to support participants, and the top 100 performers will each receive $300 in AWS credits.

The “Learning to Run” competition is open to anyone and ends October 31, 2017.