NVIDIA researchers collaborated with ETH Zurich and Disney Research|Studios on a new paper, “NeRF-Tex: Neural Reflectance Field Textures,” which will be presented at the Eurographics Symposium on Rendering (EGSR), June 29 – July 2, 2021. The paper introduces a new modeling primitive: neural reflectance field textures, or NeRF-Tex for short. NeRF-Tex is inspired by recent techniques for representing real and virtual scenes using neural networks. Its main distinction is that it does not attempt to completely replace classical graphics modeling, as many neural approaches do; instead, it complements classical modeling in situations where it struggles.

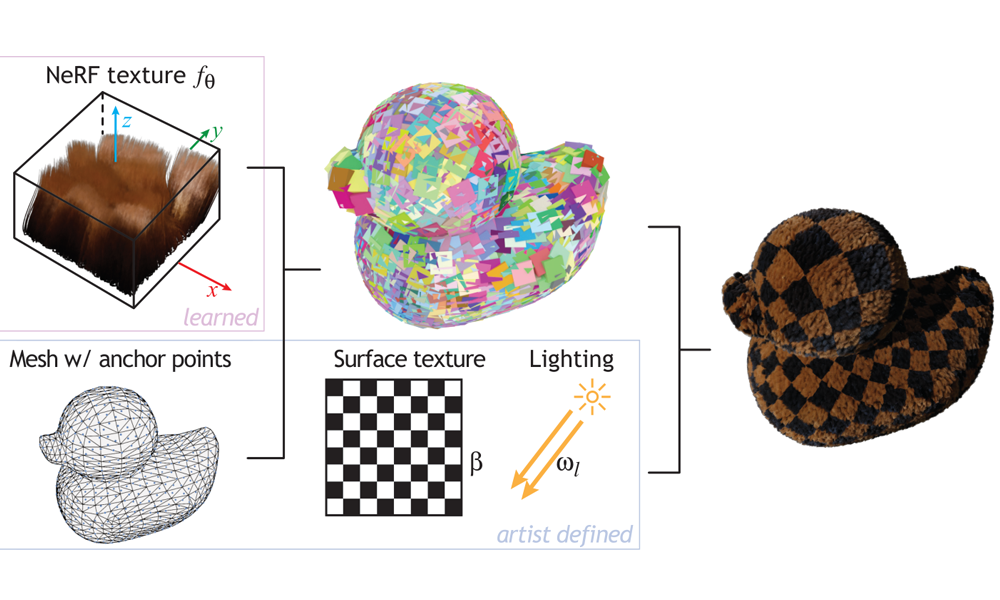

Modeling complex materials, such as fur, grass, or fabrics, is one such challenging scenario. NeRF-Tex addresses this challenge by using a neural network to represent a small piece of fuzzy geometry. To create an object, an artist models a base triangle mesh and instantiates the NeRF texture over its surface, producing a volumetric layer with the desired structure, for example to dress a triangle mesh of a cat with fur.

Classical textures have traditionally been used to handle surface appearance. In contrast, NeRF-Tex handles entire classes of volumetric appearance. For instance, a single NeRF texture could handle cases ranging from short, dark fur to bright, curly fibers. The spatial variations of the material are encoded using classical textures, which are thereby elevated from describing the surface to controlling the volumetric structure above it.
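To make the idea of a classical texture steering a volumetric layer more concrete, here is a minimal Python sketch. It is not the method from the paper: the parameter layout (`fiber_length`, `brightness`), the function names, and the linear density falloff in `toy_field` are all assumptions made for illustration, and `toy_field` is a hand-written stand-in for what would be a trained neural network.

```python
from typing import List, Tuple

# Hypothetical per-texel parameters: (fiber_length, brightness), both in [0, 1].
Params = Tuple[float, float]


def sample_texture(texture: List[List[Params]], u: float, v: float) -> Params:
    """Nearest-neighbor lookup of a classical 2D parameter texture at (u, v) in [0, 1]."""
    rows, cols = len(texture), len(texture[0])
    row = min(int(v * rows), rows - 1)
    col = min(int(u * cols), cols - 1)
    return texture[row][col]


def toy_field(height: float, params: Params) -> Tuple[float, float]:
    """Stand-in for the trained network: returns (density, brightness).

    Density falls off linearly from the base surface (height 0) to the fiber
    tip. This formula is illustrative only, not the model from the paper.
    """
    fiber_length, brightness = params
    if fiber_length <= 0.0 or height >= fiber_length:
        return 0.0, brightness
    return 1.0 - height / fiber_length, brightness


def query_layer(
    texture: List[List[Params]], u: float, v: float, height: float
) -> Tuple[float, float]:
    """Query the volumetric layer at surface position (u, v) and a height above it."""
    return toy_field(height, sample_texture(texture, u, v))


# A 2x2 parameter texture: short, dark fibers in the top row,
# long, bright fibers in the bottom row.
texture = [
    [(0.2, 0.1), (0.2, 0.1)],
    [(0.8, 0.9), (0.8, 0.9)],
]

short_dark = query_layer(texture, 0.25, 0.25, 0.5)   # above the short fibers
long_bright = query_layer(texture, 0.25, 0.75, 0.4)  # inside the long fibers
```

In this toy setup, the same field function produces empty space or dense material at similar heights depending only on what the 2D texture stores at that surface location, which is the role the post describes for classical textures in NeRF-Tex.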

Another great advantage of using neural networks for representing complex materials is the ability to perform level-of-detail rendering. The neural network is trained to reproduce filtered results to combat aliasing artifacts that classical primitives, like curves and triangles, are susceptible to.

The development of NeRF-Tex has been spearheaded by Hendrik Baatz and was done in collaboration with ETH Zurich and Disney Research|Studios. We are all excited about the potential of neural primitives for material modeling. More research and development is needed, but they could become powerful tools in offline and real-time production for creating photorealistic appearances in the future.

To learn more, view the project website.