Training Script now Available on GitHub and NGC Script Section

Late last year, we described how teams at NVIDIA had achieved a 4X speedup on the Bidirectional Encoder Representations from Transformers (BERT) network, a cutting-edge natural language processing (NLP) network. Since then, we’ve further refined this accelerated implementation, and will be releasing a script to both GitHub and the NGC software hub under the Model Scripts section. The script will enable researchers in the deep learning community to take advantage of this acceleration, and can be fine-tuned for NLP, recommender and question-answer applications. More specifically, this TensorFlow script supports Squad QA fine-tuning, and supports both DGX-1V and DGX-2 server configurations, and takes advantage of the new TensorFlow Automatic Mixed Precision feature.

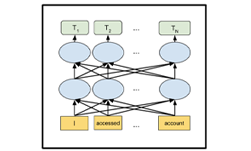

BERT represents a state-of-the-art NLP network, capable of achieving super-human levels of predictive accuracy. One of the key innovations is the “B” in BERT: bidirectional. Previous NLP models generally used unidirectional scans (left-to-right, right-to-left, or both) to recognize words, both in context-free and contextual representations. In addition, BERT can analyze entire sentences to more accurately learn the context of a given word based on its surroundings in both directions. While this approach produces a more accurate contextual model that is considered state of the art, it also has significantly higher computational demands.

To train BERT from scratch, you start with a large dataset like Wikipedia or combining multiple datasets. From there, you can add 1-2 layers at the end to customize it for a particular task, such as sentence classification or question-answer. Since these new layers add extra parameters, you need to train them with a specific dataset for that problem, say, Squad for Q&A for example. For optimal results, you will not only train the parameters in those small additional layers, but also fine-tune the training of the entire BERT model as well. For this step you can start with BERT parameters that you’ve trained, or use the pretrained weights released by Google. You can then use this updated BERT model, now fine-tune trained for a specific task, and use it for inference on your specific task, such as Q&A.

The work done here at NVIDIA used the Large version of BERT (called bert-large), which has 340 million parameters. Our initial acceleration findings were reported based on testing on a single GPU. The updated scripts we are publishing support both 8-GPU DGX-1V and 16-GPU DGX-2 systems.

While the scripts don’t specifically support an inference use case, they do report out the prediction speed achieved while evaluating the test dataset. These scripts could be easily modified to support a direct inferencing use case.

You can download the scripts to train BERT from GitHub or the NGC Scripts Repository. BERT represents a major step forward for NLP, and NVIDIA continues to add acceleration to the latest networks for all deep learning usages from images to NLP to recommender systems. This resources are continuously updated at NGC, as well as our GitHub page.

![]() By Dave Salvator, Senior Manager, Product Marketing

By Dave Salvator, Senior Manager, Product Marketing