Large language models (LLMs) are powerful and capable of answering complex questions, performing feats of creative writing, developing and debugging source code, and much more. You can build sophisticated LLM applications by connecting them to external tools, for example by reading data from a real-time source or by enabling an LLM to decide what action to take given a user’s request. Yet, building these LLM applications in a safe and secure manner is challenging.

NeMo Guardrails is an open-source toolkit for easily developing safe and trustworthy LLM conversational systems. Because safety in generative AI is an industry-wide concern, NVIDIA designed NeMo Guardrails to work with all LLMs, including OpenAI’s ChatGPT.

This toolkit is powered by community-built toolkits such as LangChain, which gathered ~30K stars on GitHub within just a few months. These toolkits provide composable, easy-to-use templates and patterns to build LLM-powered applications by gluing together LLMs, APIs, and other software packages.

Building trustworthy, safe, and secure LLM conversational systems

NeMo Guardrails enables developers to guide chatbots easily by adding programmable rules to define desired user interactions within an application. It natively supports LangChain, adding a layer of safety, security, and trustworthiness to LLM-based conversational applications. Developers can define user interactions and easily integrate these guardrails into any application using a Python library.

Adding guardrails to conversational systems

Guardrails are a set of programmable constraints or rules that sit in between a user and an LLM. These guardrails monitor, affect, and dictate a user’s interactions, like guardrails on a highway that define the width of a road and keep vehicles from veering off into unwanted territory.

A user sends queries to a bot, and the bot returns responses. The full set of queries and responses together is a chat. To respond to a user query, the bot creates corresponding prompts, which are either intermediary steps or actions to execute. The bot then uses these prompts to make calls to an LLM, and the LLM returns information that forms the bot response.

Sometimes, the bot uses relevant information from a knowledge base that contains documents. The bot retrieves chunks from the knowledge base and adds them as context in the prompt.
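The retrieval step can be sketched in a few lines of Python. This is a minimal illustration only: it assumes a toy word-overlap score in place of a real embedding search, and the names `KNOWLEDGE_BASE`, `retrieve_chunks`, and `build_prompt` are hypothetical, not part of NeMo Guardrails.

```python
import re

# Hypothetical knowledge base: each entry is one chunk of a document.
KNOWLEDGE_BASE = [
    "The warranty covers hardware defects for two years.",
    "Returns are accepted within 30 days of purchase.",
    "Support is available by email on weekdays.",
]


def tokenize(text):
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve_chunks(query, chunks, top_n=2):
    """Return up to top_n chunks that share at least one word with the query."""
    query_words = tokenize(query)
    scored = [(len(query_words & tokenize(chunk)), chunk) for chunk in chunks]
    # Highest overlap first; the sort is stable, so ties keep their order.
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [chunk for score, chunk in scored[:top_n] if score > 0]


def build_prompt(query, chunks):
    """Place the retrieved chunks ahead of the user query as context."""
    context = "\n".join(f"- {chunk}" for chunk in chunks)
    return f"Context:\n{context}\n\nUser: {query}\nBot:"


query = "How long is the warranty?"
prompt = build_prompt(query, retrieve_chunks(query, KNOWLEDGE_BASE))
print(prompt)
```

A production system would likely rank chunks by embedding similarity rather than shared words, but the shape is the same: retrieve, then prepend to the prompt.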

NeMo Guardrails is built on Colang, a modeling language and runtime developed by NVIDIA for conversational AI. The goal of Colang is to provide a readable and extensible interface for users to define or control the behavior of conversational bots with natural language.

Interacting with this system is like interacting with a traditional dialog manager. You create guardrails by defining flows in a Colang file that contains these key concepts:

- Canonical form

- Messages

- Flows

A canonical form is a simplified paraphrase of an utterance. This is used to reason about what a conversation is about and to match rules to utterances.

Messages are a shorthand for classifying user intent by generating a canonical form, a lightweight representation in natural language. Under the hood, these messages are indexed and stored in an in-memory vector store. When activated, the top N most similar messages from the vector store are retrieved and sent to the LLM to generate similar canonical forms. For example:

```
define user express greeting
  "Hi"
  "Hello!"
  "Hey there!"
```

This example represents the shorthand for a greeting. It defines a new canonical form, express greeting, and provides some natural language prompts, or messages, as examples. Any prompt similar to the given set of prompts is understood as the user expressing a greeting.
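The similarity lookup can be illustrated with a small Python sketch. It assumes a bag-of-words cosine similarity as a stand-in for the embedding-based vector store, and the names `EXAMPLES`, `embed`, and `top_n_matches` are hypothetical, not NeMo Guardrails APIs.

```python
import math
import re
from collections import Counter

# Example messages grouped by canonical form. "express greeting" comes from
# the text above; "ask about weather" is a made-up second form for contrast.
EXAMPLES = {
    "express greeting": ["Hi", "Hello!", "Hey there!"],
    "ask about weather": ["What is the weather today?", "Will it rain?"],
}


def embed(text):
    """Toy embedding: a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[word] * b[word] for word in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def top_n_matches(utterance, examples, n=3):
    """Return the n most similar (score, canonical_form, message) triples."""
    query = embed(utterance)
    scored = [
        (cosine(query, embed(message)), form, message)
        for form, messages in examples.items()
        for message in messages
    ]
    return sorted(scored, key=lambda item: item[0], reverse=True)[:n]


matches = top_n_matches("hello there", EXAMPLES)
best_form = matches[0][1]
print(best_form)
```

In this sketch the best match is taken directly. In the system described above, the top N matches are instead passed to the LLM, which generates the canonical form.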

Flows are composed of a set of messages and actions and define the structure or flow of user interactions. Flows can also be thought of as a tree or a graph of interactions between the user and the bot.

```
define flow greeting
  user express greeting
  bot express greeting
  bot ask how are you
```

When a greeting flow is triggered, the user and the bot are instructed to exchange greetings. The bot messages used in the flow, such as express greeting and ask how are you, are defined with the same syntax as user messages.
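One way to picture a flow is as an ordered list of steps. The following Python sketch is only an illustration of that idea, not how the Colang runtime is implemented; `FLOWS` and `next_bot_steps` are hypothetical names.

```python
# Each flow is an ordered list of (speaker, canonical_form) steps.
# "greeting" mirrors the Colang flow above.
FLOWS = {
    "greeting": [
        ("user", "express greeting"),
        ("bot", "express greeting"),
        ("bot", "ask how are you"),
    ],
}


def next_bot_steps(user_form, flows):
    """Return the bot steps that follow user_form in the first matching flow."""
    for steps in flows.values():
        for index, (speaker, form) in enumerate(steps):
            if speaker == "user" and form == user_form:
                bot_steps = []
                # Collect consecutive bot steps until the next user turn.
                for next_speaker, next_form in steps[index + 1:]:
                    if next_speaker != "bot":
                        break
                    bot_steps.append(next_form)
                return bot_steps
    # No flow mentions this user message.
    return []


print(next_bot_steps("express greeting", FLOWS))
```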

Guardrail techniques

Once defined, guardrails sit between the user and an AI application. They monitor communication in both directions and take appropriate actions to ensure safety and security, keeping the models within the application’s desired domain. You programmatically define guardrails using a simple and intuitive syntax.

Today, NeMo Guardrails supports three broad categories of guardrails:

- Topical

- Safety

- Security

Topical guardrails

Topical guardrails are designed to ensure that conversations stay focused on a particular topic and prevent them from veering off into undesired areas.

They serve as a mechanism to detect when a person or a bot engages in conversations that fall outside of the topical range. These topical guardrails can handle the situation and steer the conversations back to the intended topics. For example, if a customer service bot is intended to answer questions about products, it should recognize that a question is outside of the scope and answer accordingly.
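As a rough sketch of the customer service case, a topical check can be modeled as a scope test on the user's canonical form. The set `ON_TOPIC_FORMS`, the redirect message, and the stand-in `fake_llm` function are all assumptions made for illustration.

```python
# Hypothetical canonical forms that the bot is allowed to answer.
ON_TOPIC_FORMS = {"ask about product", "ask about pricing"}
REDIRECT_MESSAGE = "I can only help with questions about our products."


def apply_topical_rail(user_form, answer_fn):
    """Call answer_fn only for in-scope forms; otherwise steer back."""
    if user_form in ON_TOPIC_FORMS:
        return answer_fn(user_form)
    return REDIRECT_MESSAGE


def fake_llm(user_form):
    """Stand-in for a real LLM call."""
    return f"[LLM answer for: {user_form}]"


print(apply_topical_rail("ask about product", fake_llm))
print(apply_topical_rail("ask about politics", fake_llm))
```

The key design point is that the out-of-scope request never reaches the LLM, so the reply is fixed rather than generated.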

Safety guardrails

Safety guardrails ensure that interactions with an LLM do not result in misinformation, toxic responses, or inappropriate content. LLMs are known to make up plausible-sounding answers. Safety guardrails can help detect and enforce policies to deliver appropriate responses.

Other important aspects of safety guardrails are ensuring that the model’s responses are factual and supported by credible sources, preventing humans from hacking the AI systems to provide inappropriate answers, and mitigating biases.
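A minimal sketch of an output check follows. It assumes a simple keyword list as a stand-in for a real moderation or fact-checking model; `BLOCKED_TERMS`, `FALLBACK`, and `check_output` are hypothetical names.

```python
import re

# Hypothetical policy list; a real system would use a moderation model.
BLOCKED_TERMS = {"idiot", "stupid"}
FALLBACK = "I'm sorry, I can't respond to that."


def check_output(response):
    """Return the response if it passes the policy check, else a fallback."""
    words = set(re.findall(r"[a-z']+", response.lower()))
    if words & BLOCKED_TERMS:
        return FALLBACK
    return response


print(check_output("Happy to help!"))
print(check_output("That is a stupid question."))
```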

Security guardrails

Security guardrails prevent an LLM from executing malicious code or calls to an external application in a way that poses security risks.

LLM applications are an attractive attack surface when they are allowed to access external systems, and they pose significant cybersecurity risks. Security guardrails help provide a robust security model and mitigate LLM-based attacks as they are discovered.
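One common way to limit what an LLM can trigger is an allowlist of actions. The sketch below assumes that pattern; `get_order_status`, `ALLOWED_ACTIONS`, and `execute_action` are hypothetical and do not describe how NeMo Guardrails implements its security model.

```python
def get_order_status(order_id):
    """Hypothetical read-only action."""
    return f"Order {order_id} is in transit."


# Only actions registered here may be invoked on behalf of the LLM.
ALLOWED_ACTIONS = {"get_order_status": get_order_status}


def execute_action(name, **kwargs):
    """Run an LLM-requested action only if it is on the allowlist."""
    action = ALLOWED_ACTIONS.get(name)
    if action is None:
        raise PermissionError(f"Action {name!r} is not allowed")
    return action(**kwargs)


print(execute_action("get_order_status", order_id="42"))
```

Requesting any name that is not registered, such as a made-up `delete_all_orders`, raises `PermissionError` instead of reaching an external system.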

Guardrails workflow

NeMo Guardrails is fully programmable. Both the way a guardrail is applied and the set of actions that it triggers are easy to customize and improve over time. For critical cases, a new rule can be added with just a few lines of code.

This approach is highly complementary to techniques such as reinforcement learning from human feedback (RLHF), where the model is aligned to human intentions through a model-training process. NeMo Guardrails can help developers harden their AI applications, making them more deterministic and reliable.

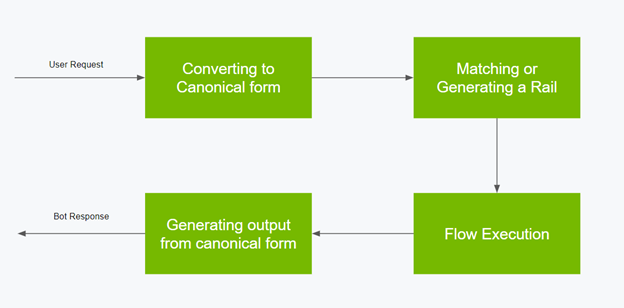

Here’s the workflow of a guardrail flow:

- Convert user input to a canonical form.

- Match or generate a guardrail based on the canonical form, using Colang.

- Plan and dictate the next step for the bot to execute actions.

- Generate the final output from the canonical form or generated context.
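The steps above can be sketched end to end in Python. This is a simplified illustration under stated assumptions: plain lookup tables stand in for the LLM and the vector store, unmatched input falls back to a fixed reply rather than a generated guardrail, and every name here is hypothetical.

```python
# Hypothetical lookup tables standing in for the LLM and the vector store.
CANONICAL_FORMS = {"hi": "express greeting", "hello": "express greeting"}
FLOWS = {"express greeting": ["express greeting", "ask how are you"]}
BOT_MESSAGES = {
    "express greeting": "Hello there!",
    "ask how are you": "How are you doing?",
}


def to_canonical_form(user_input):
    """Step 1: convert user input to a canonical form."""
    key = user_input.lower().strip(" !.?")
    return CANONICAL_FORMS.get(key, "unknown")


def match_flow(user_form):
    """Step 2: match a guardrail flow for the canonical form."""
    return FLOWS.get(user_form, ["inform cannot answer"])


def render(bot_forms):
    """Steps 3 and 4: walk the planned bot steps and generate the output."""
    return " ".join(
        BOT_MESSAGES.get(form, "Sorry, I can't help with that.")
        for form in bot_forms
    )


def respond(user_input):
    """Run the full workflow for one user turn."""
    return render(match_flow(to_canonical_form(user_input)))


print(respond("Hello!"))
print(respond("Tell me a secret"))
```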

For examples that address common patterns for implementing guardrails, see the NeMo Guardrails GitHub repo. They make up a great set of recipes to draw from when building your own safe and secure LLM-powered conversational systems in NeMo Guardrails.

Summary

NVIDIA is incorporating NeMo Guardrails into the NeMo framework, which includes everything you need for training and tuning language models using your company’s domain expertise and datasets.

NeMo is also available as a service. It’s part of NVIDIA AI Foundations, a family of cloud services for businesses that want to create and run custom generative AI models based on their own datasets and domain knowledge.

NVIDIA looks forward to working with the AI community to continue making the power of trustworthy, safe, and secure LLMs accessible to everyone. Explore NeMo Guardrails today.