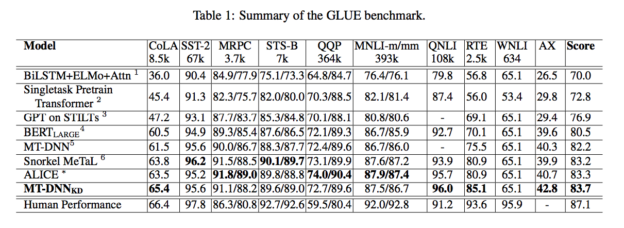

Microsoft AI Research just announced a breakthrough in conversational AI that sets new records on seven of the nine natural language processing tasks in the General Language Understanding Evaluation (GLUE) benchmark.

Microsoft’s natural language processing algorithm, called Multi-Task DNN (MT-DNN), was first released in January and updated this month. It incorporates Google’s BERT NLP model to achieve these results.

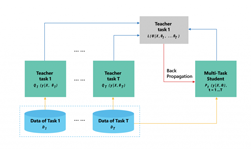

“For each task, we train an ensemble of different MT-DNNs (teacher) that outperforms any single model, and then train a single MT-DNN (student) via multi-task learning to distill knowledge from these ensemble teachers,” reads a summary of the paper “Improving Multi-Task Deep Neural Networks via Knowledge Distillation for Natural Language Understanding.”
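The teacher-student idea in that summary can be illustrated with a minimal sketch. This is not the authors' implementation: it assumes the ensemble's soft targets are the average of each teacher's class probabilities, and the function names and the `alpha` weight that mixes soft and hard targets are hypothetical choices made here for illustration.

```python
import math


def softmax(logits):
    """Convert raw scores into probabilities (numerically stable)."""
    peak = max(logits)
    exps = [math.exp(z - peak) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def ensemble_soft_targets(teacher_logits):
    """Average the class probabilities predicted by each teacher model."""
    probs = [softmax(logits) for logits in teacher_logits]
    num_classes = len(probs[0])
    return [sum(p[i] for p in probs) / len(probs) for i in range(num_classes)]


def cross_entropy(target_probs, student_logits):
    """Cross-entropy between a target distribution and the student's prediction."""
    student_probs = softmax(student_logits)
    return -sum(t * math.log(s) for t, s in zip(target_probs, student_probs) if t > 0)


def distillation_loss(student_logits, teacher_logits, hard_label, alpha=0.5):
    """Blend the soft-target loss with the usual hard-label loss.

    alpha is an assumed mixing weight, not a value taken from the paper.
    """
    soft = ensemble_soft_targets(teacher_logits)
    hard = [1.0 if i == hard_label else 0.0 for i in range(len(student_logits))]
    soft_loss = cross_entropy(soft, student_logits)
    hard_loss = cross_entropy(hard, student_logits)
    return alpha * soft_loss + (1.0 - alpha) * hard_loss


# Two hypothetical teachers scoring one example over three classes.
teachers = [[2.0, 0.5, -1.0], [1.5, 1.0, -0.5]]
loss_agree = distillation_loss([2.0, 0.5, -1.0], teachers, hard_label=0)
loss_disagree = distillation_loss([-1.0, 0.5, 2.0], teachers, hard_label=0)
print(loss_agree < loss_disagree)
```

A student whose predictions match the ensemble gets a lower loss than one that contradicts it, which is the signal that pushes a single model toward the ensemble's behavior.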

The new records are central to research in the AI speech community on improving conversational AI systems, which consist of neural networks capable of speech recognition, natural language understanding, and text-to-speech.

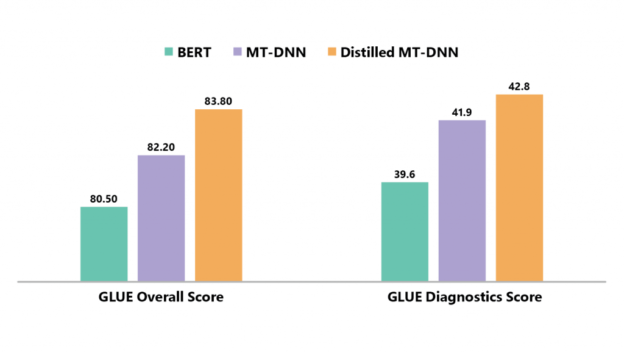

“This distilled model improves the GLUE benchmark (single model) to 83.7%, amounting to 3.2% absolute improvement over BERT and 1.5% absolute improvement over the previous state of the art model based on the GLUE leaderboard as of April 1, 2019,” the researchers stated.

Training and testing were performed on NVIDIA Tesla V100 GPUs with the cuDNN-accelerated PyTorch deep learning framework.

“We ran experiments on four NVIDIA V100 GPUs for base MT-DNN models,” the team wrote in a GitHub post. To reproduce the GLUE results with MTL refinement, the team ran the experiments on eight NVIDIA V100 GPUs.

Updated results and code to replicate the results will be published on GitHub in June.

The GLUE benchmark consists of nine natural language understanding (NLU) tasks, including question answering, sentiment analysis, text similarity, and textual entailment.

The Microsoft work was published on Thursday on the Microsoft Research Blog.