NVIDIA and Open Robotics, working in collaboration since October 2021, are introducing two important changes in the ROS 2 Humble release that improve performance on compute platforms with hardware accelerators.

The new ROS 2 Humble hardware-acceleration features are called type adaptation and type negotiation. NVIDIA will release a software package implementing type adaptation and type negotiation in the next NVIDIA Isaac ROS release (late June 2022).

These simple but powerful additions to the framework will significantly increase performance for developers seeking to incorporate AI/machine learning and computer vision functionality into their ROS-based applications.

“As ROS developers add more autonomy to their robot applications, the on-robot computers are becoming much more powerful. We have been working to evolve the ROS framework to make sure that it can take advantage of high-performance hardware resources in these edge computers,” said Brian Gerkey, CEO of Open Robotics.

“Working closely with the NVIDIA robotics team, we are excited to share new features (type adaptation and negotiation) in the Humble release that will benefit the entire ROS community’s efforts to embrace hardware acceleration.”

Eliminating overhead of hardware acceleration

Type adaptation

Hardware accelerators commonly require a different data format to deliver optimal performance. Type adaptation (REP-2007) lets ROS nodes work in the format best suited to the hardware. Using the adapted type, processing pipelines can eliminate memory copies between the CPU and the hardware accelerator. Unnecessary memory copies consume CPU compute, waste power, and slow down performance, especially as image sizes increase.
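The idea can be illustrated with a minimal sketch in plain Python. It is a conceptual model only, not the ROS 2 API: `ImageMsg`, `DeviceImage`, `Topic`, `to_message`, and `to_custom` are hypothetical names invented for this example, and a `bytearray` stands in for accelerator memory. Subscribers that accept the adapted type receive the publisher's object as-is, while the conversion to the standard message (the copy) happens only when a subscriber needs that message.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class ImageMsg:
    """Stand-in for a standard image message with pixels in CPU memory."""
    width: int
    height: int
    data: bytes


@dataclass
class DeviceImage:
    """Stand-in for an accelerator-friendly image (hypothetical type)."""
    width: int
    height: int
    buffer: bytearray  # pretend this buffer lives in accelerator memory


def to_message(image: DeviceImage) -> ImageMsg:
    """Convert the adapted type to the standard message (copies the pixels)."""
    return ImageMsg(image.width, image.height, bytes(image.buffer))


def to_custom(msg: ImageMsg) -> DeviceImage:
    """Convert the standard message to the adapted type (copies the pixels)."""
    return DeviceImage(msg.width, msg.height, bytearray(msg.data))


class Topic:
    """Toy in-process topic that converts only when a subscriber requires it."""

    def __init__(self) -> None:
        self.adapted_subs: List[Callable[[DeviceImage], None]] = []
        self.message_subs: List[Callable[[ImageMsg], None]] = []
        self.conversions = 0  # number of copies made while publishing

    def publish(self, image: DeviceImage) -> None:
        # Subscribers using the adapted type get the same object: no copy.
        for callback in self.adapted_subs:
            callback(image)
        # Subscribers using the standard message trigger one conversion.
        if self.message_subs:
            msg = to_message(image)
            self.conversions += 1
            for callback in self.message_subs:
                callback(msg)
```

In this model, a pipeline in which every node subscribes with the adapted type never calls `to_message`, which is the copy the paragraph above describes as avoidable.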

Type negotiation

The second addition is type negotiation (REP-2009). Different ROS nodes in a processing pipeline can advertise their supported types, so that the format yielding the best performance is chosen. The ROS framework performs this negotiation process and maintains compatibility with legacy nodes that don’t support negotiation.
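A rough sketch of the selection step, in plain Python, may help make this concrete. It is a deliberate simplification and not the algorithm specified in REP-2009: the `negotiate` function, the weight dictionaries, and the format names `"gpu_image"` and `"sensor_msgs/Image"` as used here are assumptions made for illustration. Each node advertises the formats it supports with a preference weight, the format with the highest combined weight among those common to all nodes wins, and any legacy node (modeled as `None`) forces the fallback format.

```python
from typing import Dict, List, Optional

# Hypothetical fallback format that every node, including legacy ones, understands.
FALLBACK_FORMAT = "sensor_msgs/Image"


def negotiate(
    publisher: Dict[str, float],
    subscribers: List[Optional[Dict[str, float]]],
    fallback: str = FALLBACK_FORMAT,
) -> str:
    """Pick one format for a publisher and its subscribers.

    Each dict maps a format name to a preference weight (higher is better).
    A subscriber given as None models a legacy node that does not negotiate.
    """
    # A legacy node cannot negotiate, so the link falls back to the standard format.
    if any(prefs is None for prefs in subscribers):
        return fallback

    # Only formats supported by every participant are candidates.
    common = set(publisher)
    for prefs in subscribers:
        common &= set(prefs)
    if not common:
        return fallback

    # Choose the candidate with the highest combined preference.
    # Sorting first makes tie-breaking deterministic.
    def score(fmt: str) -> float:
        return publisher[fmt] + sum(prefs[fmt] for prefs in subscribers)

    return max(sorted(common), key=score)
```

For example, a publisher and a subscriber that both weight `"gpu_image"` highest would settle on it, while adding a legacy subscriber to the same call would return the fallback format instead.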

Accelerating processing pipelines with type adaptation and negotiation makes zero-copy hardware acceleration possible. This reduces software/CPU overhead and unlocks the potential of the underlying hardware. As roboticists migrate to more powerful compute platforms like NVIDIA Jetson Orin, they can expect to realize more of the performance gains enabled by the hardware.

These changes are implemented entirely within ROS 2, which ensures compatibility with existing tools, workflows, and codebases.

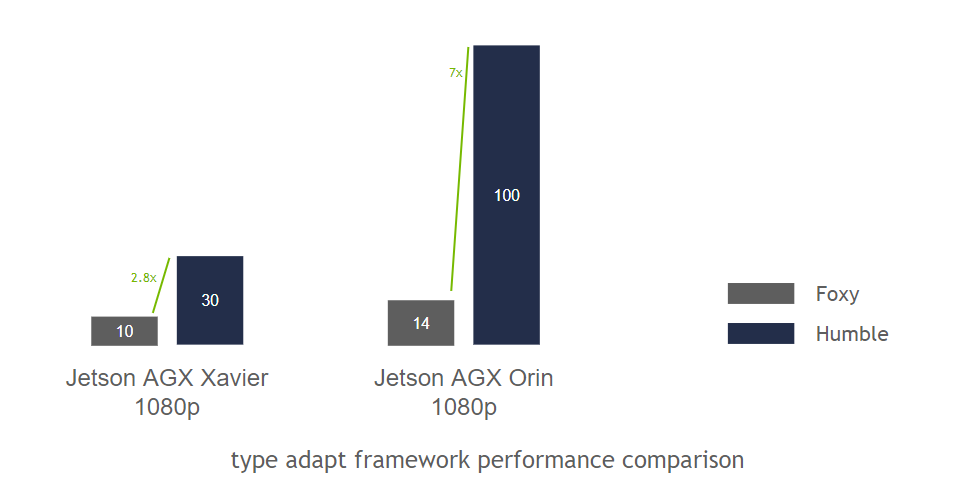

Type adaptation and negotiation have shown promising results. A benchmark consisting of a graph of ROS nodes, with minimal compute in each node, was run on ROS 2 Foxy and ROS 2 Humble so that we could observe the underlying framework performance. We ran this benchmark on Jetson AGX Xavier and the new Jetson AGX Orin. We observed a 3x improvement on Xavier and an impressive 7x improvement on Orin.

Introducing NVIDIA Isaac Transport for ROS

The NVIDIA implementation of type adaptation and negotiation is called NITROS (NVIDIA Isaac Transport for ROS). NITROS pipelines are ROS processing pipelines made up of Isaac ROS hardware-accelerated modules (also known as GEMs). These pipelines will be available in the Isaac ROS Developer Preview (DP), scheduled for late June 2022. The first release of NITROS will include three pipelines, and more are planned for later in the year.

| NITROS Pipeline | ROS 2 Nodes in Pipeline |
|---|---|
| AprilTag Detection Pipeline | ArgusCameraMono (Raw Image) – Rectify – (Rectified Image) – AprilTag (AprilTag Detection) |
| Stereo Disparity Pipeline | ArgusCameraStereo (Raw Image) – Rectify – (Rectified Image) – ESSDisparity (DNN Inference) – PointCloud (Point Cloud Output) |
| Image Segmentation Pipeline | ArgusCameraMono (Raw Image) – Rectify – (Rectified Image) – DNNImageEncode (DNN Pre-Processed Tensors) – Triton (DNN Inference) – UNetDecode (Segmentation Image) |

Powerful new GEMs aid robotics perception

In addition to the NITROS accelerated pipelines, the Isaac ROS DP release contains two new DNN-based GEMs designed to help roboticists with common perception tasks.

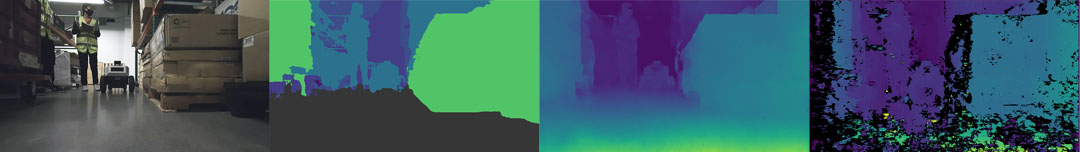

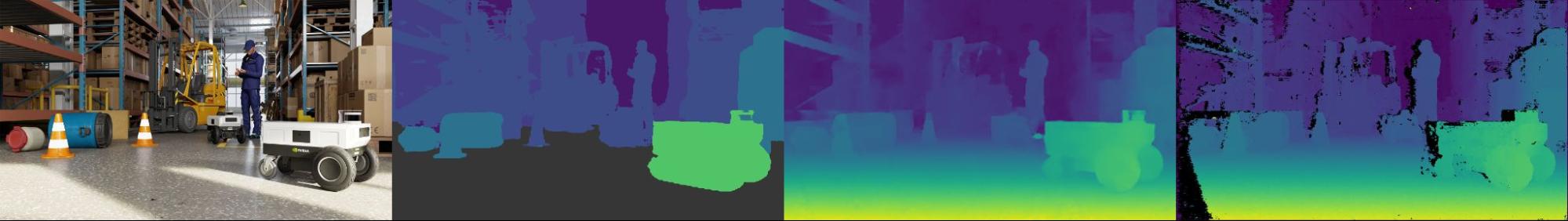

The first GEM, ESS, is a DNN for stereo camera disparity prediction. The network provides vision-based continuous depth perception for robotics applications.

The other GEM, Bi3D, is a DNN for vision-based obstacle prediction. The DNN, based on groundbreaking work from NVIDIA Research, is enhanced to detect free space and predict obstacles simultaneously. Using stereo camera input, the network predicts whether an obstacle is within one of four programmable proximity fields.

Bi3D is optimized to run on NVIDIA DLA hardware. Running it on the DLA preserves both GPU and CPU compute resources.

Both Bi3D and ESS are pretrained for robotics applications using synthetic and real data and are intended for commercial use. These two new Isaac ROS GEMs join stereo_image_proc, a classic computer vision stereo depth disparity routine previously released, to offer three diverse, independent functions for stereo camera depth perception.

| Isaac ROS 2 GEM | Description |
|---|---|
| Image Pipeline | Camera Image Processing |
| NVBlox | 3D Scene Reconstruction |
| Visual SLAM | VSLAM and Stereo Odometry |
| AprilTags | AprilTag Detection and Pose Estimation |
| Pose Estimation | 3D Object Pose Estimation |
| Image Segmentation | Semantic Image Segmentation |
| Object Detection | DNN for Object Detection using DetectNet |
| DNN Inference | DNN Node for using Triton/TensorRT |
| Argus Camera | CSI/GMSL Camera Support |

Getting started

ROS developers interested in integrating NVIDIA AI perception into their products should get started today with Isaac ROS.