In the context of global warming, NVIDIA Earth-2 has emerged as a pivotal platform for climate tech, generating actionable insights in the face of increasingly disastrous extreme weather impacts amplified by climate change.

With Earth-2, insights into weather and climate are no longer confined to experts in atmospheric physics or oceanic dynamics. You can now harness advanced technologies to navigate the complexities of our changing climate with foresight and precision, guiding companies, organizations, and nations to anticipate unprecedented extreme weather risks and mitigate their impacts.

This post spotlights the comprehensive NVIDIA Earth-2 suite of tools designed for AI model training and inference, with an emphasis on downscaling using generative AI.

Downscaling, akin to the concept of super-resolution in image processing, involves generating higher-resolution data or predictions from lower-resolution input data. Our focus extends to generative AI for kilometer-scale (km-scale) weather predictions, encompassing everything from training global AI weather models to inferencing and generating km-scale predictions.

Finally, this post highlights the software tools driving this Earth digital twin revolution, helping you leverage generative AI techniques to realize accurate and cost-effective weather forecasts using Earth-2 AI tools.

Solving the need for cost-effective, km-scale weather predictions

With the AI-driven advancement of NVIDIA Earth-2, the landscape of climate simulation has shifted dramatically, democratizing access to weather and climate information.

Earth-2 will catalyze proactive decision-making, helping companies, organizations, and nations explore what-if scenarios and anticipate unprecedented weather, with actionable outcomes spanning policy formulation, urban development, and infrastructure planning.

Predicting impending weather hazards accurately requires costly simulations at km-scale resolutions. The same is true of predicting future climate hazards.

Using traditional simulation methods to reach km-scale makes models too large, complex, and computationally expensive. Furthermore, weather and climate are chaotic systems that are inherently uncertain and require a large set of forecasts, called ensembles, to predict probabilities of future outcomes.

Simulation resolution trades off against ensemble size. This limits the range of hazards that can be sampled to inform planning. However, a cost-effective solution lies in stochastic AI downscaling models.
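To make the trade-off concrete, here is a back-of-the-envelope sketch. It assumes an explicit solver whose time step shrinks in proportion to the grid spacing (a CFL-type constraint) and a fixed vertical grid, so cost grows roughly with the cube of the horizontal refinement factor. The function names and the budget figure are illustrative assumptions, not Earth-2 numbers.

```python
def relative_cost(coarse_km: float, fine_km: float) -> float:
    """Approximate cost multiplier for refining the horizontal grid spacing.

    Assumption: cost ~ (points in x) * (points in y) * (number of time steps),
    with the time step shrinking linearly with the grid spacing.
    """
    refinement = coarse_km / fine_km
    return refinement ** 3


def affordable_members(budget: float, coarse_km: float, fine_km: float) -> int:
    """Ensemble members affordable at fine_km, given a compute budget
    expressed in units of one forecast at coarse_km."""
    return int(budget // relative_cost(coarse_km, fine_km))


factor = relative_cost(25.0, 2.0)
print(f"25 km -> 2 km costs about {factor:.0f}x per forecast")

# Hypothetical budget: enough compute for 10,000 forecasts at 25 km
print(affordable_members(10_000, 25.0, 2.0), "members affordable at 2 km")
```

Under these assumptions, the same budget that pays for a very large coarse ensemble pays for only a handful of km-scale members, which is the gap that stochastic downscaling aims to close.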

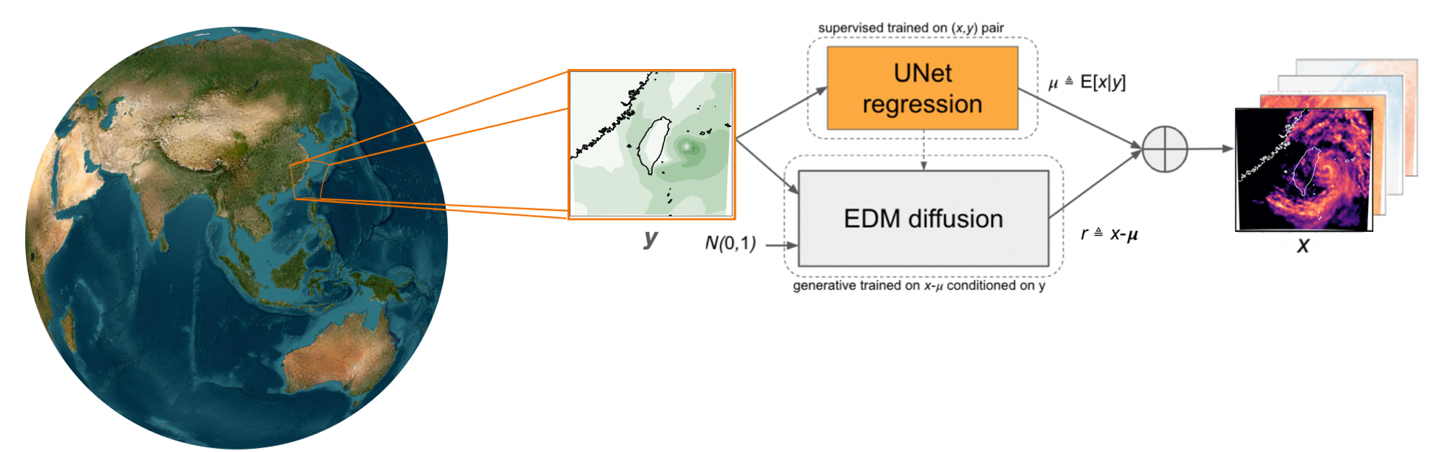

At the heart of the Earth-2 platform is CorrDiff, a novel, two-step generative AI approach based on diffusion modeling for downscaling weather data with high fidelity.

Predicting fine-scale weather details with CorrDiff

Developed by pioneering NVIDIA research and developer technology teams, CorrDiff introduces a corrector diffusion model approach that promises to redefine weather prediction at km-scale resolutions.

At its core, CorrDiff harnesses the power of generative learning to address the challenge of predicting fine-scale details of extreme weather phenomena with unprecedented accuracy and efficiency.

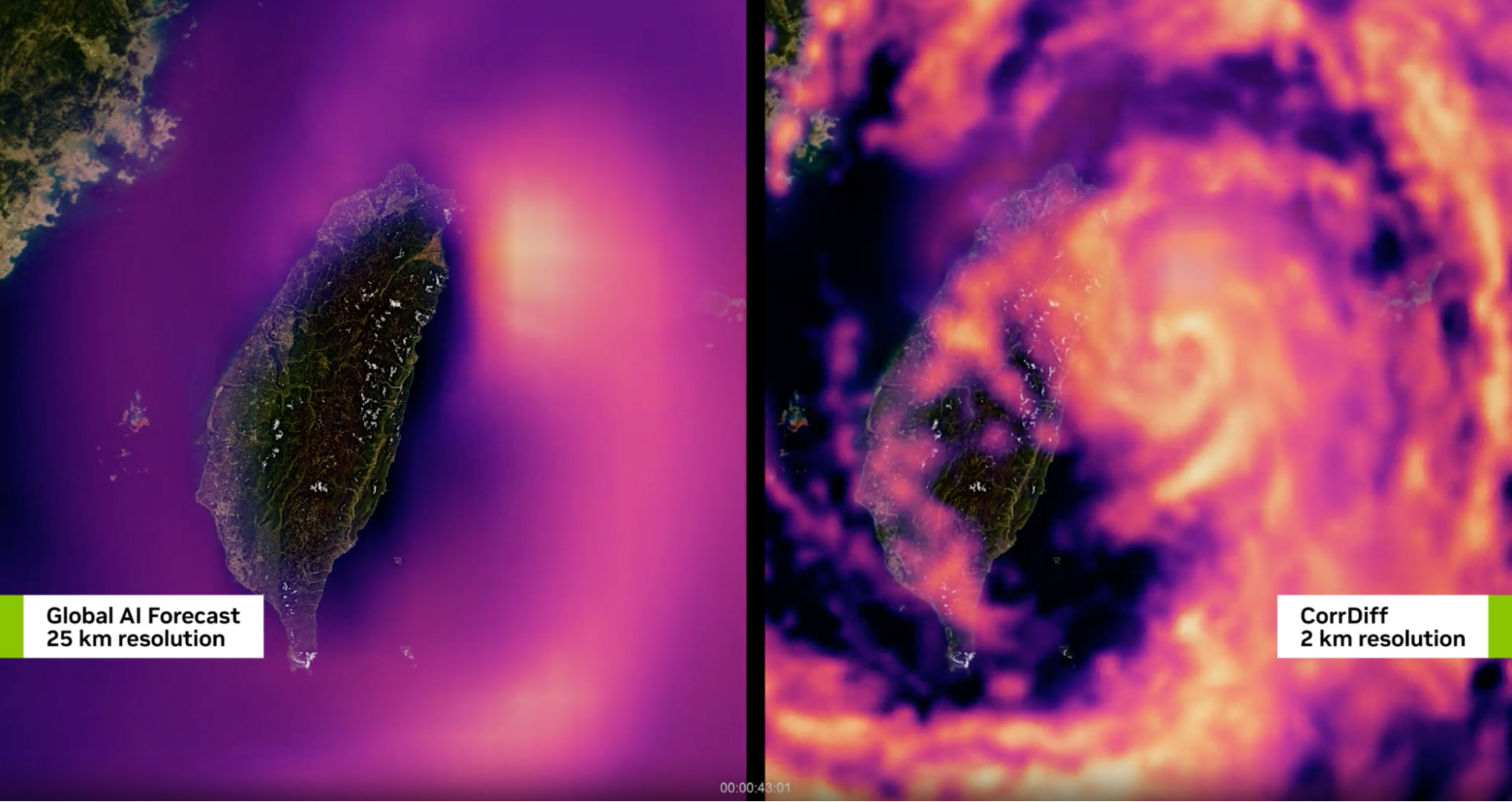

The CorrDiff method effectively isolates generative learning to shorter length scales, enabling skillful predictions of fine-scale details of extreme weather phenomena. For instance, you might start with forecasts at a 25-km resolution and want to generate forecasts at a 2-km resolution.

The key challenge is to predict the details missing in the coarse-resolution forecasts to make them more accurate and detailed, resembling the finer-resolution data and including the coherent spatial structure of weather extremes.
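A naive baseline helps show why this is hard. The sketch below upsamples a synthetic 25-km field to a 2-km grid with bilinear interpolation in NumPy. The grid size and the random field are made up for illustration. The point is that interpolation only blends neighboring coarse values, so it cannot create new fine-scale extremes, which is the gap a generative model is meant to fill.

```python
import numpy as np


def bilinear_upsample(field: np.ndarray, coarse_km: float, fine_km: float) -> np.ndarray:
    """Bilinearly interpolate a 2D field from coarse_km to fine_km spacing."""
    ny, nx = field.shape
    # Physical coordinates (km) of the coarse grid points
    y_coarse = np.arange(ny) * coarse_km
    x_coarse = np.arange(nx) * coarse_km
    # Fine grid covering the same extent
    y_fine = np.arange(0.0, y_coarse[-1] + 1e-9, fine_km)
    x_fine = np.arange(0.0, x_coarse[-1] + 1e-9, fine_km)
    # Interpolate along x for every coarse row, then along y for every fine column
    rows = np.stack([np.interp(x_fine, x_coarse, row) for row in field])
    return np.stack([np.interp(y_fine, y_coarse, col) for col in rows.T], axis=1)


rng = np.random.default_rng(0)
coarse = rng.normal(size=(9, 9))  # synthetic 25-km field spanning 200 km x 200 km
fine = bilinear_upsample(coarse, 25.0, 2.0)

print(coarse.shape, "->", fine.shape)
# Interpolated values never exceed the coarse maximum (up to rounding)
print(fine.max() <= coarse.max() + 1e-12)
```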

CorrDiff works in two main steps. First, a regression model predicts the mean of the fine-resolution field. Second, a diffusion model refines this initial guess by adding the missing details that the mean prediction did not capture, so that the result better matches reality.
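The two steps can be sketched in a toy 1D setting. This is not the CorrDiff implementation: a least-squares linear fit stands in for the regression network, and Gaussian noise scaled to the training residuals stands in for the diffusion model. All names and the synthetic data are assumptions for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic training pairs: a coarse predictor and a fine-scale target
coarse = rng.uniform(0.0, 10.0, size=2000)
fine = 1.5 * coarse + 2.0 + rng.normal(scale=0.8, size=coarse.size)

# Step 1: regression predicts the conditional mean of the fine field.
# (Stand-in: linear least squares instead of a neural network.)
design = np.column_stack([coarse, np.ones_like(coarse)])
coeffs, *_ = np.linalg.lstsq(design, fine, rcond=None)


def predict_mean(x: np.ndarray) -> np.ndarray:
    """Mean prediction of the fine-scale value for each coarse input."""
    return coeffs[0] * x + coeffs[1]


# Step 2: a generative model captures the distribution of the residual.
# (Stand-in: a Gaussian with the empirical residual spread, not a diffusion model.)
residual_std = np.std(fine - predict_mean(coarse))


def sample_fine(x: np.ndarray, n_samples: int) -> np.ndarray:
    """Draw n_samples fine-scale realizations for each coarse input."""
    mean = predict_mean(x)
    noise = rng.normal(scale=residual_std, size=(n_samples, x.size))
    return mean[None, :] + noise


samples = sample_fine(np.array([4.0]), n_samples=500)
print("mean prediction:", predict_mean(np.array([4.0]))[0])
print("sample spread:  ", samples.std())
```

Each call to `sample_fine` returns many plausible fine-scale values for the same coarse input, which mirrors how the generative step yields a distribution instead of a single answer.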

Crucially, because this is a generative AI approach, CorrDiff can synthesize new fine-resolution fields that are not present in the coarse-resolution input data. The inherently stochastic sampling of diffusion models also enables the generation of many possible fine-resolution states corresponding to a single coarse-resolution input, providing a distribution of outcomes and a measure of uncertainty.
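Once an ensemble of fine-resolution samples exists, summarizing it is straightforward. The sketch below uses random numbers as a stand-in for generated wind-speed fields and computes a per-grid-point mean, spread, and probability of exceeding a threshold. The array shape, the gamma distribution, and the 25 m/s threshold are illustrative assumptions.

```python
import numpy as np

rng = np.random.default_rng(42)

# Stand-in for generated samples: (members, ny, nx) wind speed in m/s
n_members, ny, nx = 64, 32, 32
ensemble = rng.gamma(shape=4.0, scale=4.0, size=(n_members, ny, nx))

ens_mean = ensemble.mean(axis=0)                   # central estimate per grid point
ens_spread = ensemble.std(axis=0, ddof=1)          # member-to-member uncertainty
threshold = 25.0                                   # hypothetical hazard threshold (m/s)
prob_exceed = (ensemble > threshold).mean(axis=0)  # fraction of members above it

print(ens_mean.shape, ens_spread.shape, prob_exceed.shape)
print("max exceedance probability:", prob_exceed.max())
```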

The idea behind CorrDiff is that it’s easier to learn the corrections needed to improve an initial guess than to directly learn the fine-resolution details from scratch. By breaking down the problem into the regression and diffusion steps, CorrDiff can effectively leverage the information available in the coarse-resolution forecasts to generate more accurate and detailed predictions at the finer resolution.
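One way to see why learning the correction is easier is through the law of total variance. The notation is an assumption introduced here for illustration: y is the coarse-resolution input, x is the fine-resolution target, and r is the residual left after subtracting the conditional mean, which the regression step is assumed to recover exactly.

```latex
\begin{aligned}
x &= \mathbb{E}[x \mid y] + r, \qquad \mathbb{E}[r \mid y] = 0, \\
\operatorname{Var}(x) &= \operatorname{Var}\big(\mathbb{E}[x \mid y]\big) + \mathbb{E}\big[\operatorname{Var}(x \mid y)\big], \\
\operatorname{Var}(r) &= \mathbb{E}\big[\operatorname{Var}(x \mid y)\big] \le \operatorname{Var}(x).
\end{aligned}
```

The more variability the regression step explains, the less variance remains for the diffusion step to model.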

Overall, CorrDiff offers a practical way to improve the resolution of weather forecasts, synthesize new variables, and provide an ensemble of states. It leverages existing coarse-resolution data and models to produce more detailed and accurate predictions for a specific region, which in the example covered later in this post is Taiwan. This practicality is underscored by its efficiency: CorrDiff is orders of magnitude faster and more energy-efficient than conventional methods.

CorrDiff can also synthesize outputs that may not be available in the input vector but are hypothesized to correlate with inputs. This means that users with their own datasets can train customized CorrDiff equivalents for their own use cases, expanding the applicability and utility of CorrDiff across diverse datasets and scenarios.

NVIDIA Earth-2 software toolkits for AI weather models

NVIDIA Earth-2 offers a suite of powerful climate tech tools for training and inference of AI weather models, including NVIDIA PhysicsNeMo and Earth2Studio.

Training AI weather models in NVIDIA PhysicsNeMo

To train AI weather models, you can use NVIDIA PhysicsNeMo, an open-source physics-informed machine learning (physics-ML) platform.

NVIDIA PhysicsNeMo empowers engineers to construct AI surrogate models whose realistic predictions rival, and can surpass, those of physics-based prediction models. PhysicsNeMo provides trainable architectures of the leading global AI weather forecasting models, diagnostic models to derive further variables from a forecast, and CorrDiff for downscaling.

PhysicsNeMo also includes functionality for building training pipelines, like data loaders for the most common data types. These features are combined in example workflows for training AI models for the atmosphere. The components of PhysicsNeMo are designed from the ground up to optimally leverage GPUs and scale to large training settings.

For each atmospheric AI model, PhysicsNeMo includes an example of a workflow to train the respective model on a suitable data set. Currently, PhysicsNeMo implements several forecasting models:

- FourCastNet (FCN), our in-house AI-based global weather model based on the Spherical Fourier Neural Operator architecture.

- A hierarchical, icosahedral graph neural network (GNN) analogous to GraphCast.

- DLWP-HEALPix, a physically inspired AI prediction method with an equal-area grid, based on DLWP, which was the first global forecast model to demonstrate competitive sub-seasonal to seasonal prediction skill.

- Other models like Pangu-Weather, FengWu, and SwinVRNN, built on vision transformer architectures, will follow.

For more information and access, see the /NVIDIA/physicsnemo GitHub repo.

Such global weather forecast models predict a set of variables on surface and pressure levels that are required to progress the atmospheric state in time.

Often, you might be interested in variables that are not included in the global model, such as precipitation or other variables from custom data streams hypothesized to be predictable from the global weather model backbone.

Diagnostic models do not advance the atmospheric state in time but derive desired quantities based on existing ones at the same time. By combining a diagnostic and a forecast model, you can forecast additional variables without retraining the latter.
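The composition can be sketched with plain Python callables. Both models here are toy stand-ins with made-up dynamics and variable names (`t2m`, `tcwv`, `precip`). Real forecast and diagnostic models are neural networks, but the control flow is the same: the forecast model advances the state, and the diagnostic model is applied to each state without feeding back into it.

```python
from typing import Callable, Dict, List

State = Dict[str, float]


def toy_forecast_step(state: State) -> State:
    """Stand-in forecast model: advances the state by one time step."""
    return {"t2m": state["t2m"] - 0.1, "tcwv": state["tcwv"] * 1.02}


def toy_precip_diagnostic(state: State) -> State:
    """Stand-in diagnostic model: derives a new variable at the same time."""
    return {"precip": max(0.0, 0.05 * (state["tcwv"] - 30.0))}


def rollout(
    initial: State,
    n_steps: int,
    forecast: Callable[[State], State],
    diagnostic: Callable[[State], State],
) -> List[State]:
    """Run the forecast model and attach diagnosed variables at every step."""
    outputs = []
    state = initial
    for _ in range(n_steps):
        state = forecast(state)                         # advances in time
        outputs.append({**state, **diagnostic(state)})  # same-time derivation
    return outputs


trajectory = rollout(
    {"t2m": 290.0, "tcwv": 35.0}, 3, toy_forecast_step, toy_precip_diagnostic
)
for step, out in enumerate(trajectory, start=1):
    print(step, out)
```

Because the diagnosed variable never re-enters the forecast state, the diagnostic can be swapped or retrained independently of the forecast model.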

PhysicsNeMo also includes methods to train diagnostic models on the set of variables required for your use case. To try out diagnostic models, see the checkpoint for a model predicting precipitation.

AI-weather model inference through Earth2Studio

To help developers and researchers experiment with, build, and customize AI weather and climate inference workflows, NVIDIA Earth-2 has developed an open-source Python package, Earth2Studio. This package is now available in the NVIDIA PhysicsNeMo 24.04 release.

Earth2Studio aims to provide the commonly used models, data sources, perturbation methods, IO modules, and other components required for a wide variety of inference workflows, while keeping extension and customization easy.

You have out-of-the-box access to APIs for common public online data sources.

A collection of pretrained models is accessible in Earth2Studio, with more to follow, as a starting point for the integration of AI weather models for your use case:

- FourCastNet

- PhysicsNeMo GraphCast

- Pangu-Weather

- DLWP

Checkpoints for some models, such as FCN, are readily available in the NGC Catalog, and Earth2Studio includes routines for automatically downloading and caching them to your device. Other models are loaded from external sources, such as the Pangu-Weather checkpoints hosted at ECMWF.

Earth2Studio aims to enable you to build your own custom workflows for inferencing forecasting and diagnostic models with little effort. It includes a set of common workflows for deterministic and ensemble-based prediction and validation out of the box with just a few lines of code.

The following code example shows how to produce a deterministic forecast with just eight lines of code leveraging the FourCastNet model through Earth2Studio.

```python
from earth2studio.models.px import FCN
from earth2studio.data import GFS
from earth2studio.io import ZarrBackend
import earth2studio.run as run

# Load FourCastNet pretrained model
model = FCN.load_model(FCN.load_default_package())

# Create the data source
data = GFS()

# Create the Zarr IO
io = ZarrBackend()

# Run 20 steps of inference
output_datastore = run.deterministic(["2024-01-01"], 20, model, data, io)
```

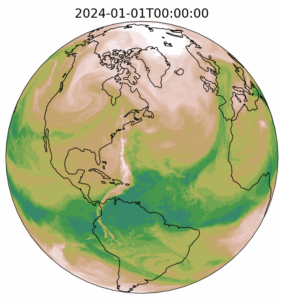

Figure 1 shows the total column water vapor field produced at the last timestep of the forecast.

Training CorrDiff in NVIDIA PhysicsNeMo

This example demonstrates super-resolution and new channel synthesis, training CorrDiff to convert ERA5 data at 25 km to 2-km data around Taiwan.

The high-resolution target data was generated by the Central Weather Administration (CWA) in Taiwan using a high-resolution regional numerical weather prediction model. The dataset is available for non-commercial use under the CC BY-NC-ND 4.0 license and can be downloaded from NGC. For specific instructions on training the model, see the Getting Started section in the /NVIDIA/physicsnemo GitHub repo.

A key benefit of NVIDIA PhysicsNeMo, besides the ease of use, is performance optimization. Training CorrDiff currently requires 2–3K GPU hours on NVIDIA A100 or NVIDIA H100 GPUs, and the CorrDiff team is working on optimizing the training procedure further. One super-resolution sample can be generated on similar hardware in a matter of seconds.
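As a rough guide to what that budget means in practice, GPU hours can be converted to wall-clock time for a given cluster size. The sketch assumes ideal linear scaling by default, which real multi-GPU training rarely achieves, and the 64-GPU cluster is a hypothetical example.

```python
def wall_clock_hours(gpu_hours: float, n_gpus: int, efficiency: float = 1.0) -> float:
    """Convert a GPU-hour budget to wall-clock hours on n_gpus devices.

    efficiency=1.0 assumes ideal linear scaling (an optimistic assumption).
    """
    if n_gpus < 1:
        raise ValueError("n_gpus must be at least 1")
    if not 0.0 < efficiency <= 1.0:
        raise ValueError("efficiency must be in (0, 1]")
    return gpu_hours / (n_gpus * efficiency)


n_gpus = 64  # hypothetical cluster size
for budget in (2_000, 3_000):
    hours = wall_clock_hours(budget, n_gpus)
    print(f"{budget} GPU hours on {n_gpus} GPUs: about {hours:.1f} h wall clock")
```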

Inferencing CorrDiff through PhysicsNeMo

(Source: Residual Diffusion Modeling for Km-Scale Atmospheric Downscaling)

The same CorrDiff example also contains inference scripts to generate conditional samples of high-resolution weather based on low-resolution ERA5 conditioning (inputs at 25 km).

For instructions on generating samples and saving them to a NetCDF file, see the /NVIDIA/physicsnemo GitHub repo. Running inference requires PhysicsNeMo checkpoints for both the regression model and the diffusion model. These checkpoints are saved as part of the training pipeline.

For more information and access, see the CorrDiff inference package in the NGC Catalog.

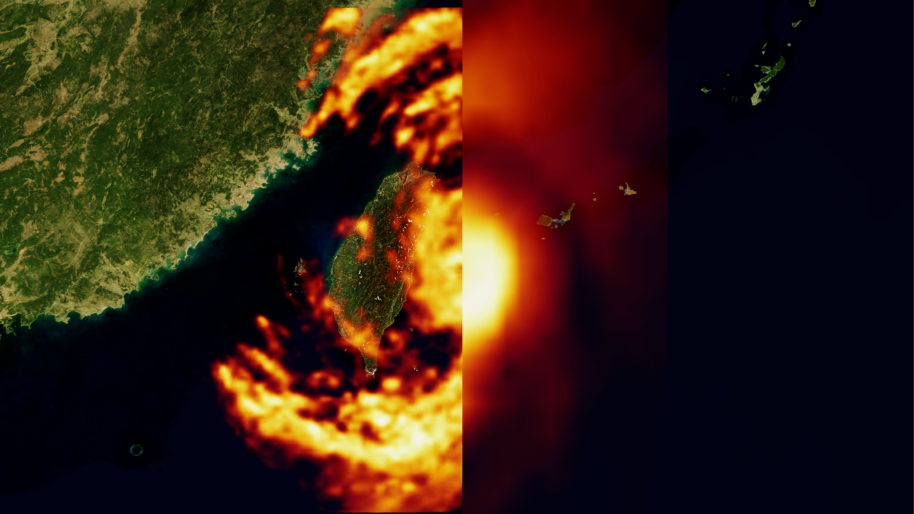

Storm tracking over Taiwan

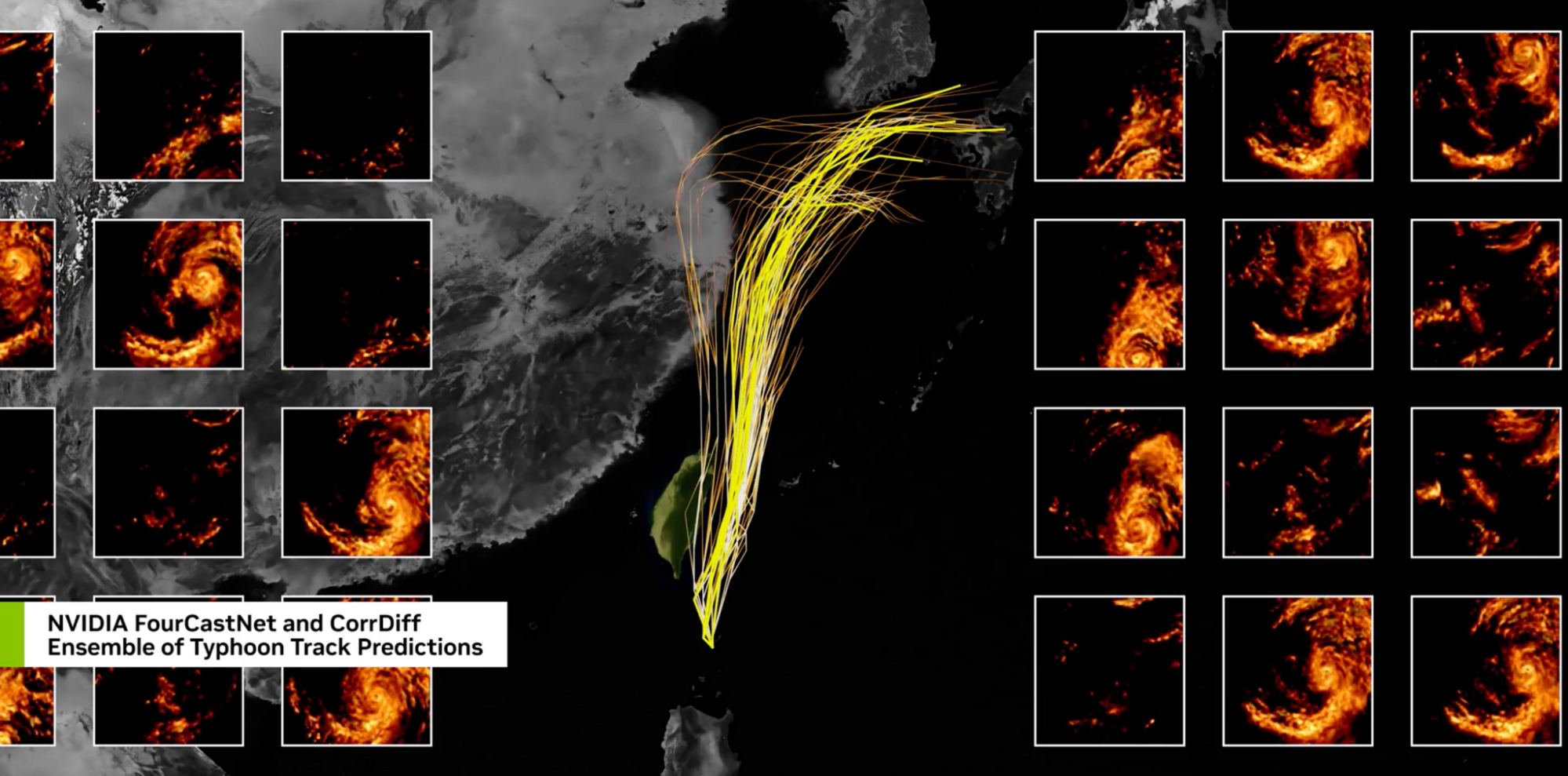

As an example of CorrDiff’s use cases for extreme weather problems, we present the challenges of storm tracking over Taiwan.

Although global AI forecast models excel in predicting storm tracks, their effectiveness is hindered by their limited resolution of 25 km, which cannot capture the fine-scale details that often contain the strongest winds and precipitation critical to storm-related damage.

At a resolution of 25 km, the structure of typhoons in ERA5 input data is often poorly resolved, resulting in inaccurate representations of their size and intensity. Key spatial details in the eyewall and rainbands, which relate to physical hazards, are also missing.

Taiwan is one of the wettest locations in the world, with an annual rainfall of 2,600 mm (approximately 3x the worldwide average), and faces an average annual disaster cost of $650M. This financial burden is caused by seasonal typhoons that deposit substantial rainfall on the island, resulting in extensive flooding that endangers lives, damages property, and requires large-scale evacuation efforts.

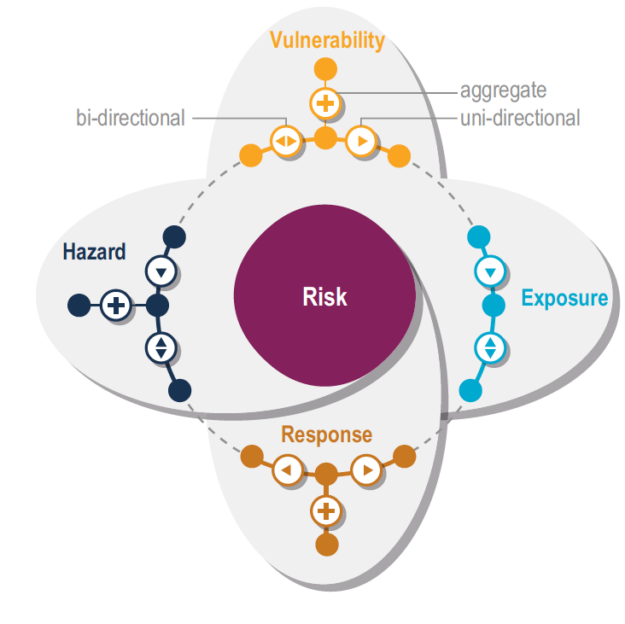

Disaster risk is the combination of the severity and frequency of a hazard, the number of people and assets exposed to the hazard, and their vulnerability to damage. Figure 3 shows a schematic from the IPCC 2022 Sixth Assessment Report on impacts, adaptation, and vulnerability.

(Source: IPCC AR6, WG2, Chapter 1, pages 146-147)

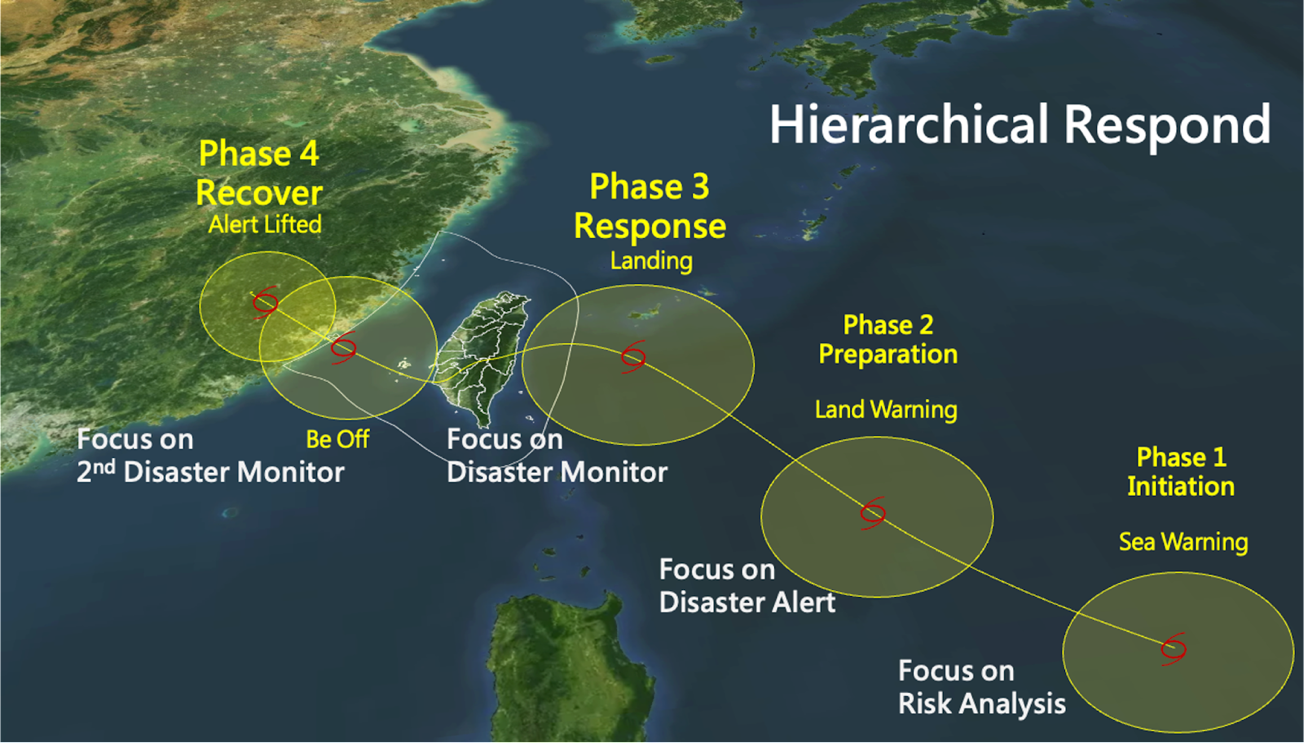

The Taiwanese National Science and Technology Center for Disaster Reduction (NCDR) outlines a four-phase approach for typhoon response (Figure 4).

The first two phases, Initiation and Preparation, concentrate on analyzing risks and issuing disaster alerts. Phases 3 and 4, Response and Recovery, are dedicated to monitoring the disaster and implementing response measures.

NVIDIA technology can address these challenges.

Enhancements from AI weather forecasting have the potential to improve the risk analysis during phases 1 and 2. By improving weather forecasting technology, specifically through higher resolution and larger ensembles, we can more comprehensively assess exposure risks.

CorrDiff, the groundbreaking NVIDIA generative AI diffusion model, is trained on high-resolution, radar-incorporated WRF data provided by the Taiwan CWA and ERA5 reanalysis data sourced from the European Centre for Medium-Range Weather Forecasts.

Through CorrDiff, predictions of extreme weather phenomena such as typhoons can be significantly enhanced from a 25-km resolution to a 2-km resolution.

In this post, we’ve demonstrated that, by downscaling ERA5 from 25 km to 2 km, we can explore many more local forecast scenarios, with the aim of providing a clear picture of the best-case, worst-case, and most likely impacts of a storm.

Assessing uncertainty is crucial. Yet, there’s a compromise between the number of ensemble forecast members and their resolution, given finite computational resources. NCDR produces forecasts that comprise approximately 200 ensemble members with varying resolutions.

The introduction of cutting-edge AI technologies, like CorrDiff, marks a significant transformation. It offers the ability to scale up to thousands of members for ensemble forecasting in near real-time on just a single GPU node.
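A short calculation illustrates why ensemble size matters for rare hazards. Assuming members are independent draws and a given outcome occurs with probability p in each, the chance that at least one of N members captures it is 1 - (1 - p)^N. The probability used below is an arbitrary example, not a figure from NCDR or CWA.

```python
def prob_at_least_one(p: float, n_members: int) -> float:
    """Probability that at least one of n independent members samples an
    outcome that each member produces with probability p."""
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must be between 0 and 1")
    return 1.0 - (1.0 - p) ** n_members


p_rare = 0.001  # hypothetical 1-in-1000 outcome
for n in (200, 2000):
    print(f"{n:>5} members: {prob_at_least_one(p_rare, n):.2f}")
```

Under these assumptions, a few hundred members usually miss such an outcome entirely, while a few thousand are likely to sample it at least once.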

Citing the transformative potential of the NVIDIA generative AI CorrDiff model, Chia-Ping Cheng, administrator of the Taiwan CWA, emphasizes its ability to revolutionize weather forecasting. Cheng highlights how CorrDiff enables the generation of kilometer-scale weather forecasts, empowering society to anticipate detailed features of extreme weather events with unprecedented accuracy, thus aiding in disaster mitigation efforts.

Echoing this, Hongey Chen, director of the National Science and Technology Center for Disaster Reduction in Taiwan, underscores CorrDiff’s significance in addressing the diverse and unprecedented impacts of natural disasters. Chen highlights CorrDiff as a creative solution for ensuring public safety.

Democratizing AI-weather and enabling climate tech

In summary, NVIDIA Earth-2 democratizes access to weather information and embodies a modern initiative to extend the reach of climate science beyond academia, making it easily accessible to policymakers, businesses, journalists, and the general public.

CorrDiff, the cutting-edge NVIDIA generative AI-based downscaling model, holds promise for various sectors:

- In financial services, CorrDiff can empower users to make informed decisions regarding risk assessment and asset management.

- In the energy sector, CorrDiff’s precise downscaling capabilities can enable better resource allocation and infrastructure planning, crucial for optimizing energy production and distribution.

- Government agencies can benefit from CorrDiff to enhance disaster preparedness and response efforts.

- Individual users can feel CorrDiff’s impact through more accurate and localized weather forecasts for everyday planning and safety.

With its remarkable adaptability and efficiency, CorrDiff can deliver actionable insights and precise forecasts to empower us all in building a more resilient world.