In the world of machine learning, models are trained using existing data sets and then deployed to do inference on new data. In a previous post, Simplifying and Scaling Inference Serving with NVIDIA Triton 2.3, we discussed inference workflow and the need for an efficient inference serving solution. In that post, we introduced Triton Inference Server and its benefits and looked at the new features in version 2.3. Because model deployment is critical to the success of AI in the organization, we revisit the key benefits of using Triton Inference Server in this post.

Triton is designed as an enterprise class software that is also open source. It supports the following features:

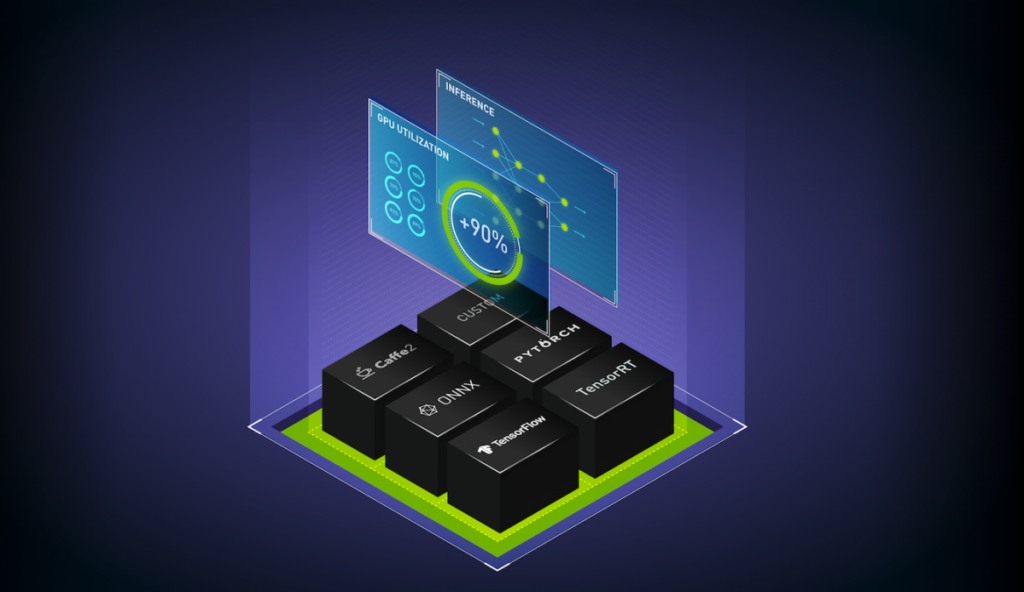

- Multiple frameworks: Developers and ML engineers can run inference on models from any framework such as TensorFlow, PyTorch, ONNX, TensorRT, and even custom framework backends. Triton has standard HTTP/gRPC communication endpoints to communicate with the AI application. It provides flexibility to the engineers and standardized deployment to DevOps and MLOps.

- Dynamic batching and concurrent execution to maximize throughput: Triton provides concurrent model execution on GPUs and CPUs for high throughput and utilization. This enables you to load multiple models, or multiple copies of the same model, on a single GPU or CPU to be executed simultaneously. It also supports dynamic batching on both CPUs and GPUs to increase throughput under tight latency constraints.

- Inference on GPU and CPU: As alluded to earlier, Triton serves inferences from models on both GPU and CPU. Triton can be used in public clouds, on-premises, data centers, and on the edge. Triton can run as a Docker container, on bare metal, or inside a virtual machine in a virtualized environment.

- Different types of inferences: Triton supports a variety of input requests: real-time, batch, and streaming inputs. It also has a model ensemble feature to create a pipeline of different models and pre- or post-processing operations to handle a variety of different workloads.

- Kubernetes integration: It can be deployed as a scalable microservice container in Kubernetes. It uses Prometheus to export metrics for automatic scaling. A Helm chart from NVIDIA GPU Cloud (NGC) can be used for fast deployment. Triton also can be used in KFServing, which is used for serverless inferencing on Kubernetes.

- MLOps: Its model management functionality helps with dynamic model loading and unloading and live model updates in production. The Triton Model Analyzer helps characterize a model’s performance and memory utilization on a GPU ahead of production deployment. Triton is integrated in Seldon, Google CAIP custom container workflow, and in Azure ML (public preview). It exports several metrics like GPU utilization and memory, inference load, and latency metrics for performance monitoring and automatic scaling.

- Open source and customizable: Triton is completely open source, enabling organizations to inspect for InfoSec compliance, customize Triton for their situation, and extend its functionality for the community. As a container, it can be deployed in modular fashion. Start with the minimal base container that has only the TensorRT backend and add framework backends as you grow to maintain optimal memory footprint on the device.

Triton is used by many organizations for their production inference service including Microsoft, American Express, and Naver, in both on-premises data centers and on public clouds. Triton is also included in the NVIDIA enterprise support service on NGC-Ready systems.

Modern AI applications in a data center or cloud are deployed as a coordinated set of microservices. The advantage of using microservices architecture with Triton is that models can be deployed faster, scale more efficiently, and be updated live without restarting the server.

Here are some new Triton features that we did not cover in the earlier post.

- Triton on DeepStream:

Triton is natively integrated in NVIDIA DeepStream to support video analytics inference workflows. Video inference serving is needed for use cases such as optical inspection in manufacturing, smart checkout, retail analytics, and others. Triton on DeepStream can run inference on the edge or on the cloud with Kubernetes. For embedded applications, support for DeepStream on Jetson uses Triton’s C API to directly link Triton into the application. Triton brings features such as dynamic batching and concurrent model execution of multiple models from different frameworks to DeepStream. Triton can be used to serve smaller models on CPUs when GPU accelerated models cannot fit in the limited Jetson memory.

- Python pip client:

The Triton Python client library is now a pip package available from the NVIDIA PyPi index. The GRPC and HTTP client libraries are available as a Python package that can be installed using a recent version of pip.

- Support for Ubuntu 20.04:

Triton provides support for the latest version of Ubuntu, which comes with additional security updates.

- Model Analyzer:

The Model Analyzer is a suite of tools that helps users select the optimal model configuration that maximizes performance in Triton. The Model Analyzer benchmarks model performance by measuring throughput (inferences/second) and latency under varying client loads. It can then generate performance charts to help users make tradeoff decisions around throughput and latency, as well as identify the optimal batch size and concurrency values to use for the best performance.

By measuring GPU memory utilization, the Model Analyzer also optimizes hardware usage by informing how many models can be loaded onto a GPU and the exact amount of hardware needed to run your models based on performance or memory requirements.

Triton allows you to customize inference serving for your specific workloads. You can use the Triton Backend API to execute Python or C++ code for any type of logic, such as pre- and post-processing operations around your models. The Backend API can also be used to create your own custom backend in Triton. Custom backends that are integrated into Triton can take advantage of all of Triton’s features such as standardized gRPC/HTTP interfaces, dynamic batching, concurrent execution, and others.

Customer use cases

Here’s how Tencent, Kingsoft, and Fermilabs used Triton’s custom backend functionality to integrate their own deep learning backend for inference.

Tencent TNN backend

Tencent YouTu Lab, Tencent’s R&D organization, developed a new open-source high performance framework called TNN. They were able to easily customize Triton by integrating TNN as a Triton custom backend. Triton was selected as Tencent’s choice of inference server due to its product maturity, dynamic batching, and concurrent model execution capabilities. Tencent YouTu Lab’s goal is to standardize high performance inferencing for all AI developers with TNN and Triton.

Kingsoft

Kingsoft is a leading cloud services provider in China. They use Triton’s dynamic batching and concurrent model execution on T4 GPUs to enable 15 real-time services that require inferencing with low latency. They use custom framework backends and achieved 50% higher query per second (QPS) with Triton and 4-5x higher QPS with Triton and TensorRT/TVM. The support for multiple frameworks enabled their developers to deploy models from different frameworks easily without code changes.

Fermilab

Fermilab is a United States Department of Energy national laboratory specializing in high-energy particle physics. They use Triton on T4 GPU to serve image processing models for experiments. Their biggest requirement is to cost effectively scale inference on GPU for thousands of client applications running on different servers, which need access to GPU inference at different times. They integrated Triton with Kubernetes and using a custom orchestration service, they enabled inference as a service for the client applications. The applications requesting inference did not have to manage different model frameworks, input batching, or multi-GPU servers. Models could be updated independently, and in future other GPUs can be used.

Conclusion

Triton Inference Server simplifies the deployment of deep learning models at scale in production. It supports all major AI frameworks, runs multiple models concurrently to increase throughput and utilization, and integrates with Kubernetes ecosystem for a streamlined production pipeline that’s easy to set up. These capabilities bring data scientists, developers, and IT operators together to accelerate AI development and simplify production deployment.

Try Triton Inference Server today on GPU, CPU, or both. The Triton container can be downloaded on NGC and its source code is available on GitHub. For documentation, see Triton Inference Server on GitHub.