University of California, Irvine researchers developed a deep learning-based approach to accelerate drug discovery and cancer research.

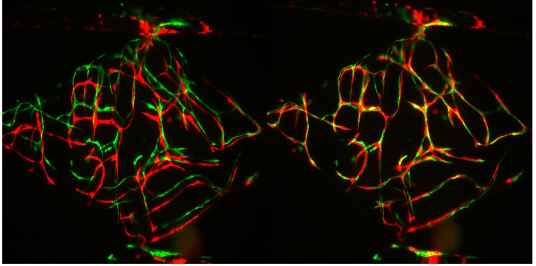

“We have developed a convolutional neural network to improve the data analysis processes for high-throughput drug screening using our microphysiological system (MPS),” the researchers stated in their paper.

A microphysiological system is an interconnected set of 2D or 3D cellular constructs, frequently referred to as organs-on-chips or in vitro organ constructs.

“This network can classify new images near instantaneously and surpasses human accuracy on this task,” the team said. “The accuracy of our best model is significantly better than our minimally-trained human raters and requires no human intervention to operate. This model is a first step toward automation of data analysis for high-throughput drug screening.”

Using NVIDIA TITAN Xp GPUs and the cuDNN-accelerated Keras deep learning framework, the researchers trained their convolutional neural network on thousands of blood vessel images, including some images obtained with data augmentation.
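The data augmentation mentioned above is described later in the article as randomly transforming images during each training pass. A minimal sketch of that idea follows, in plain Python so it runs without a deep learning framework. The specific transforms (flips and 90-degree rotations) and the helper names `random_transform` and `augmented_passes` are assumptions for illustration; the article does not say which transforms the researchers used.

```python
import random


def random_transform(image, rng):
    """Return a randomly flipped and rotated copy of a 2D image (list of rows).

    The choice of flips and 90-degree rotations is an illustrative assumption,
    not the transform set reported by the researchers.
    """
    out = [row[:] for row in image]  # copy so the original is untouched
    if rng.random() < 0.5:
        out = [row[::-1] for row in out]  # horizontal flip
    if rng.random() < 0.5:
        out = out[::-1]  # vertical flip
    for _ in range(rng.randrange(4)):  # rotate by 0, 90, 180, or 270 degrees
        out = [list(col) for col in zip(*out[::-1])]  # 90 degrees clockwise
    return out


def augmented_passes(images, labels, passes, seed=0):
    """Yield one freshly transformed copy of the dataset per training pass."""
    rng = random.Random(seed)
    for _ in range(passes):
        yield [(random_transform(img, rng), lab)
               for img, lab in zip(images, labels)]


if __name__ == "__main__":
    toy_image = [[1, 2], [3, 4]]  # stand-in for a vessel image
    for pass_data in augmented_passes([toy_image], [1], passes=3):
        print(pass_data)
```

Because the transforms are drawn again on every pass, the model rarely sees the same pixels twice, while the label attached to each image stays the same.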

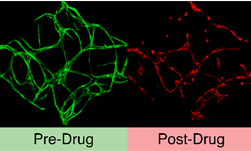

Once trained, the system distinguishes between effective and ineffective drug compounds through automatic analysis of vascularization images.

For inference, the team used the same GPUs used during training.

“The success of this convolutional model is driven in part by carefully tuning our loss function to discourage false negatives but also by the steps taken to control overfitting in the model,” the team said. “One regularization strategy was to augment our limited training dataset to virtually infinite size via randomly transforming images during each training pass.”
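One common way to make a loss function discourage false negatives is to weight errors on the positive class more heavily in binary cross-entropy. The sketch below illustrates that general technique. It is not the researchers' actual loss, which the article does not specify. The function name, the weight `fn_weight=5.0`, and the choice of which class counts as positive (label `1`) are all assumptions.

```python
import math


def weighted_binary_cross_entropy(y_true, y_pred, fn_weight=5.0, eps=1e-7):
    """Mean binary cross-entropy with extra weight on positive examples.

    y_true: iterable of 0/1 labels (1 is the assumed positive class).
    y_pred: iterable of predicted probabilities for class 1.
    fn_weight: hypothetical multiplier on the positive-class term; values
               above 1 penalize missed positives (false negatives) more.
    """
    total = 0.0
    count = 0
    for t, p in zip(y_true, y_pred):
        p = min(max(p, eps), 1.0 - eps)  # clip to avoid log(0)
        total += -(fn_weight * t * math.log(p) + (1 - t) * math.log(1.0 - p))
        count += 1
    return total / count


if __name__ == "__main__":
    missed_positive = weighted_binary_cross_entropy([1], [0.1])
    false_alarm = weighted_binary_cross_entropy([0], [0.9])
    print(missed_positive, false_alarm)
```

With `fn_weight` above 1, confidently missing a positive example costs more than raising an equally confident false alarm, which pushes the trained model toward fewer false negatives at the expense of more false positives.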

A pre-print version of the research was recently published in the journal IEEE/ACM Transactions on Computational Biology and Bioinformatics.


AI Helps Accelerate the Drug Development Process

Jun 12, 2018

