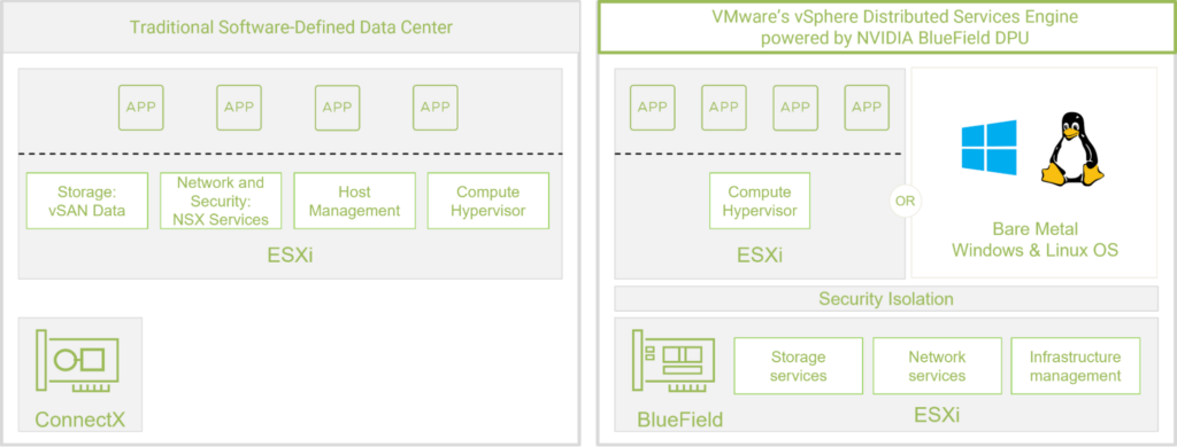

A shift to modern distributed workloads, along with higher networking speeds, has increased the overhead of infrastructure services. There are fewer CPU cycles available for the applications that power businesses. Deploying data processing units (DPUs) to offload and accelerate these infrastructure services delivers faster performance, lower CPU utilization, and better energy efficiency.

Many modern workloads are distributed, meaning they no longer fit within just one server. Instead, they run simultaneously across multiple servers to achieve greater scalability and availability. Such workloads include web and e-commerce applications, NoSQL databases, analytics, AI, and key-value stores such as Redis.

Many companies run these distributed workloads on the vSphere enterprise workload platform. With different parts of the applications communicating across VMs and hosts, vSphere must devote an increasing amount of CPU power to managing data movement and infrastructure workloads such as networking.

Moving networking and security infrastructure services off the CPU and onto the DPU frees up CPU cores for business applications. It also drastically reduces issues such as CPU cache pollution and context switches, resulting in a highly efficient system.

vSphere

vSphere on DPUs (formerly Project Monterey) was released with vSphere 8. Along with an NVIDIA BlueField DPU, it provides the ability for application workload traffic to take the networking fast path through the hypervisor. Running the BlueField DPU in passthrough mode enables offloading and isolating network processing to the DPU. This results in a significant application performance boost.

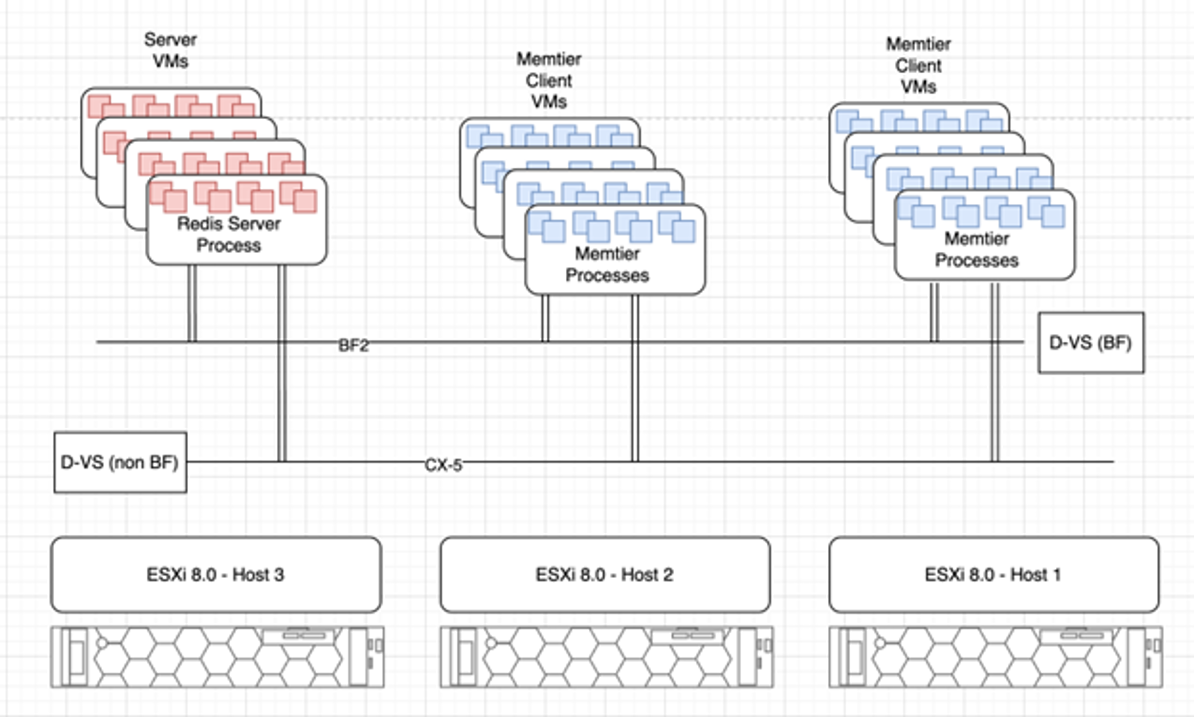

To test this theory, NVIDIA and VMware joined forces to demonstrate how vSphere 8 running on a DPU could improve scalability, efficiency, and performance.

Redis key-value store database

Because Redis is popular as a multi-model NoSQL database server and caching engine, engineering experts from both companies chose it as the workload to test on vSphere 8 with the BlueField DPU in an NVIDIA lab.

Redis, which stands for Remote Dictionary Server, is a fast, open-source, in-memory, key-value data store. Redis goes beyond other NoSQL databases to provide advanced capabilities that modern applications need, including a variety of data structures with built-in replication, the ability to deliver high availability through Redis Sentinel, and automatic partitioning with Redis Cluster.

The tested metrics included the following:

- Redis transactions per second (TPS)

- Average application latency

- Network throughput

- Server CPU utilization for networking

- Energy efficiency

Benchmarking Redis

The testing included running multiple workloads, and the network setup used Geneve overlay networking with VMware NSX and the NSX distributed firewall. The testing compared three networking options:

- Enhanced datapath (EDP) standard with a regular NIC and no DPU offload

- EDP standard with partial DPU offload (the default mode)

- EDP standard with full DPU offload and acceleration

The DPU offloads and isolates network processing, resulting in networking processes using the accelerators and caches on the DPU. This frees the caches on the host for application logic, resulting in significant application performance boosts in terms of throughput and latency. There are two ways of using the DPU for this:

- Accelerated mode (UPTv2): Achieves the best results, delivering SR-IOV-like networking performance without losing the workload mobility services that vSphere enables.

- Default mode: Provides DPU-based offload and acceleration for network processing but still incurs some CPU overhead on the host. It does not free up as many cores as accelerated (UPTv2) mode.

Benchmark results

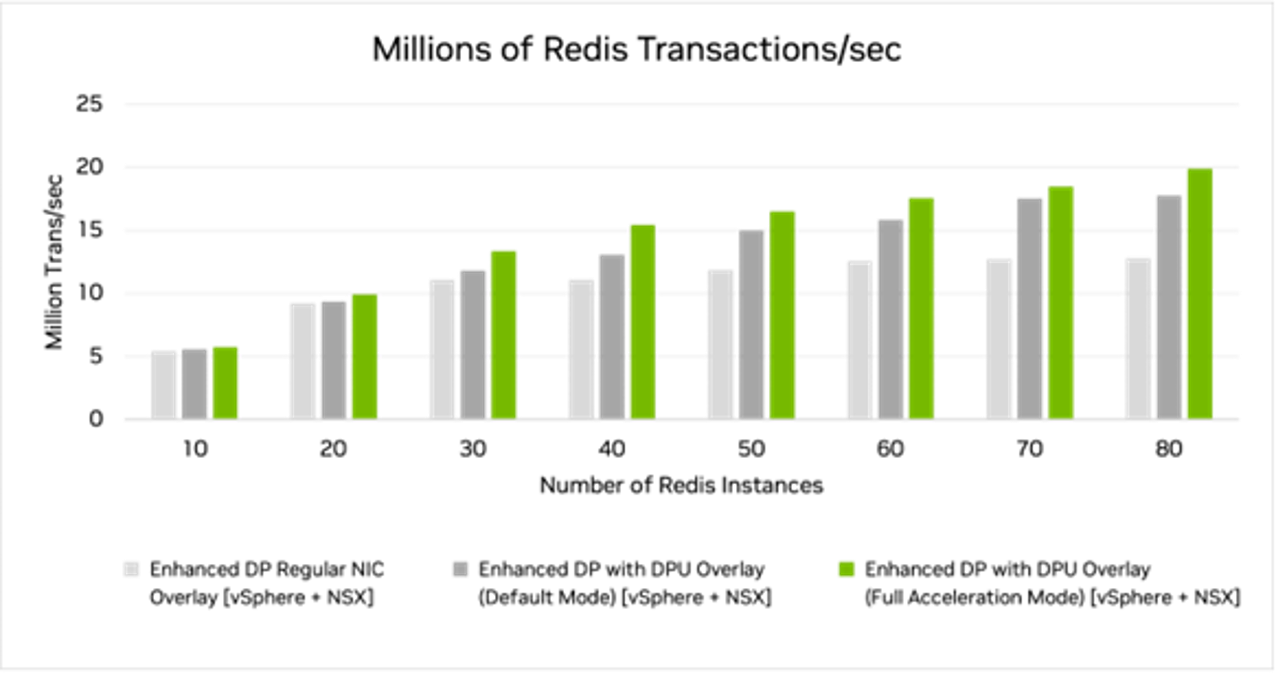

The testing conducted for the whitepaper used network acceleration on NSX using overlay networking with an L4 distributed firewall. The results achieved almost 20M TPS using full DPU acceleration (EDP standard with UPTv2) with 80 Redis instances.

We also achieved a significant fraction of that (17.74M TPS) using the default DPU offload mode. Using the standard ConnectX-5 NIC, without any DPU offload or acceleration, we peaked around 12.75M TPS while running only 30 Redis instances.
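To put these peaks side by side, the following minimal Python sketch computes the relative gain of each DPU mode over the regular NIC. It uses only the TPS figures quoted above. The dictionary keys and the `percent_gain` helper are illustrative names, and "almost 20M" is treated as 20.0, so the full-acceleration figure is approximate. Note that the peaks were reached at different instance counts (30 for the regular NIC, 80 for full acceleration).

```python
# Peak Redis TPS values as quoted in the text, in millions.
# "almost 20M" is rounded up to 20.0 here, so that result is approximate.
PEAK_MTPS = {
    "regular_nic": 12.75,
    "dpu_default_offload": 17.74,
    "dpu_full_acceleration": 20.0,
}


def percent_gain(new: float, baseline: float) -> float:
    """Return the relative improvement of `new` over `baseline`, in percent."""
    return (new - baseline) / baseline * 100.0


baseline = PEAK_MTPS["regular_nic"]
gains = {
    mode: percent_gain(value, baseline)
    for mode, value in PEAK_MTPS.items()
    if mode != "regular_nic"
}

for mode, gain in gains.items():
    print(f"{mode}: {gain:.1f}% higher peak TPS than the regular NIC")
```

Under these inputs, the default offload mode comes out roughly 39% ahead of the regular NIC and full acceleration roughly 57% ahead.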

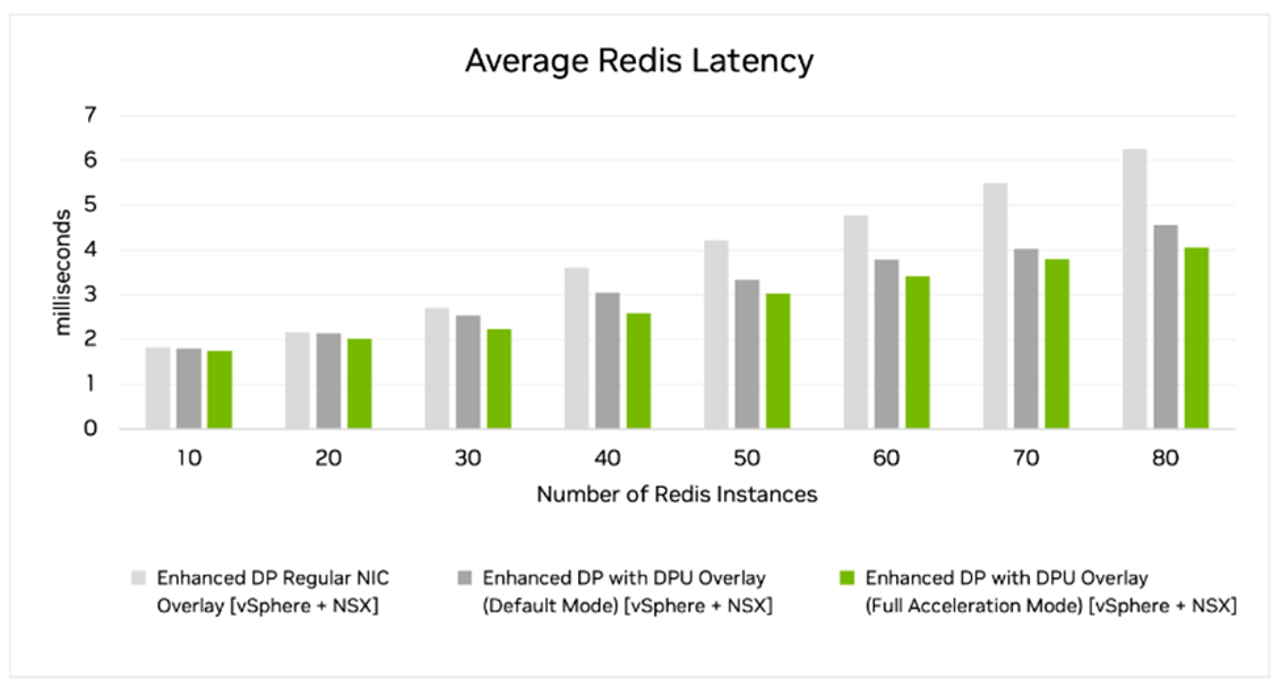

Using the DPU to offload and accelerate VMware ESXi networking also resulted in significantly lower application latency than using a regular NIC, in both the default offload and full acceleration modes. The latency advantage of the DPU grows as the number of Redis instances increases.

Looking at throughput and bandwidth, we saw higher throughput when using the DPU offload than a standard NIC. DPU full acceleration showed the highest throughput. The standard NIC throughput plateaued at 30 instances as the CPU cores could not handle any additional networking tasks. The DPU offload and full acceleration modes continued to increase throughput as the number of Redis instances increased.

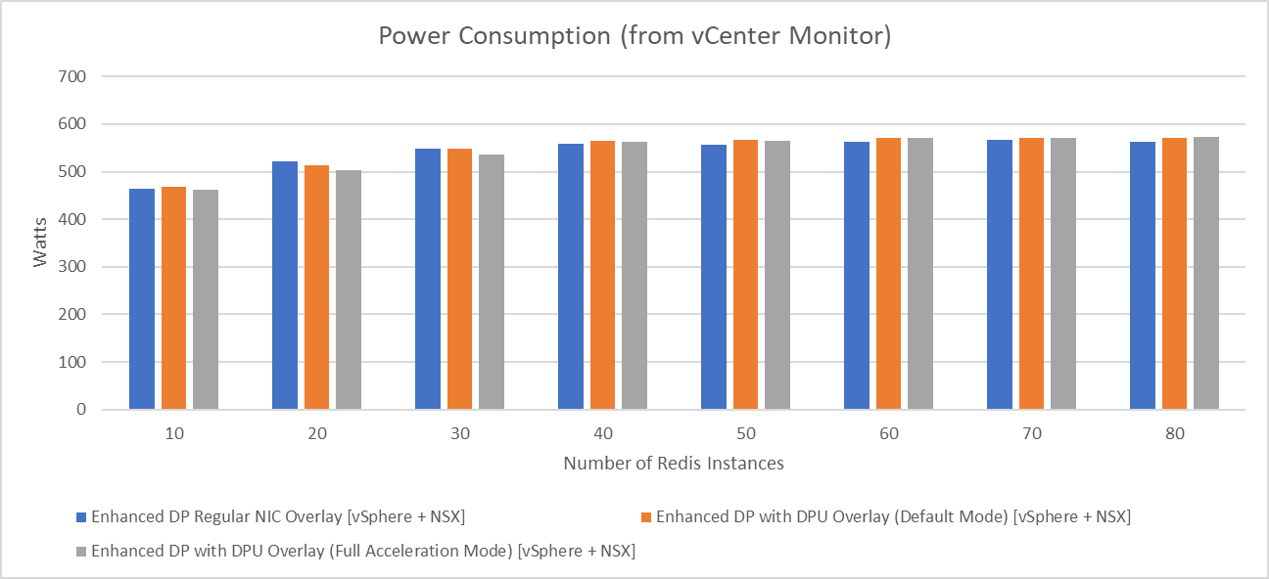

Power consumption with the DPU is slightly lower for 10-30 instances and marginally higher for 40-80 instances. However, the server is accomplishing considerably more work with the DPU, resulting in greater power efficiency.

Using DPU offloading still consumed some x86 processing cycles, but to a much smaller degree, as part of the network processing was moved from the CPU to the DPU. This resulted in much better energy efficiency. When using overlay networks and EDP standard, full DPU acceleration used 6%-40% fewer watts per million TPS than a regular NIC.
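The efficiency metric used here, watts per million TPS, can be sketched as follows. The TPS values come from the results above, but the wattage values are hypothetical placeholders chosen only to illustrate the calculation; they are not measurements from the whitepaper. The function names are likewise illustrative.

```python
def watts_per_million_tps(power_watts: float, tps: float) -> float:
    """Server power draw divided by throughput expressed in millions of TPS."""
    return power_watts / (tps / 1_000_000)


def percent_fewer(new: float, baseline: float) -> float:
    """How much smaller `new` is than `baseline`, in percent."""
    return (baseline - new) / baseline * 100.0


# TPS figures are taken from the text.
# The wattages below are HYPOTHETICAL placeholders, not measured values.
nic = watts_per_million_tps(power_watts=700.0, tps=12.75e6)
dpu = watts_per_million_tps(power_watts=720.0, tps=17.74e6)
saving = percent_fewer(dpu, nic)

print(f"Regular NIC: {nic:.1f} W per million TPS")
print(f"DPU offload: {dpu:.1f} W per million TPS")
print(f"DPU uses {saving:.1f}% fewer watts per million TPS")
```

The sketch shows why a server can draw slightly more power in absolute terms and still be more efficient: the metric divides power by the work accomplished.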

By reducing the number of CPU cores needed for ESXi networking, the DPU frees up those cores to run additional VMs and applications. That enables more workloads to run on the same number of servers. You could also use fewer servers to support the same workloads that were running without the DPU offloads.

Value proposition

The benchmark results determined that a BlueField DPU-enabled host could achieve better transaction latencies than a non-DPU–enabled host while also using 20% fewer CPU cores. The DPU-enabled host achieved >30% better throughput with a >25% reduction in transaction latency.

DPU full acceleration also increased energy efficiency, reducing watts per transaction by up to 35% and increasing performance per watt by up to 50%. The benchmark showed that running vSphere Distributed Services Engine on a BlueField DPU enables data centers to reduce the number of Redis servers by 14–18%.
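Watts per transaction and performance per watt are reciprocals of each other, so a reduction in one implies a specific gain in the other. The short sketch below shows that conversion. The function name is illustrative, and the reported "up to" figures may come from different test points, so they need not match this conversion exactly.

```python
def perf_per_watt_gain(watts_per_txn_reduction_pct: float) -> float:
    """Convert a percent reduction in watts per transaction into the
    equivalent percent gain in performance per watt (its reciprocal)."""
    r = watts_per_txn_reduction_pct / 100.0
    if not 0.0 <= r < 1.0:
        raise ValueError("reduction must be in the range [0, 100) percent")
    return (1.0 / (1.0 - r) - 1.0) * 100.0


# A 35% reduction in watts per transaction, as quoted in the text.
gain_from_35 = perf_per_watt_gain(35.0)
print(f"35% fewer watts per transaction is about {gain_from_35:.1f}% more performance per watt")
```

By this conversion, a one-third reduction in watts per transaction corresponds to a 50% gain in performance per watt, which is in the same range as the rounded figures quoted above.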

Maximizing the ROI

Because of the CPU cores saved in DPU acceleration (UPTv2) mode, and to a lesser extent in DPU offload (default) mode, you need 4–15 fewer CPU cores to support the same Redis workload. This enables you to reduce the number of servers by 14–18%, assuming a workload of 30–80 Redis instances per ESX host. The result is CapEx savings from purchasing fewer servers and paying for less data center infrastructure. It also results in OpEx savings, because fewer servers consume less electricity, both directly and for the related power distribution and cooling.

For a Redis-on-vSphere deployment that initially required 10K servers, a simple TCO analysis where the BlueField full acceleration mode reduces the number of required servers by 14-18% would save $8.3M to $10.6M over a 3-year period. Approximately half of that is in CapEx savings (fewer servers) and half in OpEx savings (reduced consumption of electricity along with related reduction in cooling and power distribution costs).
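The arithmetic behind this estimate can be unpacked with a short Python sketch. It uses only the figures quoted above and assumes that the low end of the savings range pairs with the low end of the server reduction range (and likewise for the high ends). That pairing, the even CapEx/OpEx split per server, and the variable names are assumptions for illustration.

```python
INITIAL_SERVERS = 10_000
REDUCTION_RANGE = (0.14, 0.18)      # 14% to 18% fewer servers (from the text)
SAVINGS_RANGE_USD = (8.3e6, 10.6e6)  # 3-year savings (from the text)

results = []
for reduction, savings in zip(REDUCTION_RANGE, SAVINGS_RANGE_USD):
    # Assumption: low savings pairs with low reduction, high with high.
    servers_avoided = round(INITIAL_SERVERS * reduction)
    per_server = savings / servers_avoided
    results.append((servers_avoided, per_server))
    print(
        f"{reduction:.0%} reduction: {servers_avoided} servers avoided, "
        f"about ${per_server:,.0f} saved per avoided server over 3 years "
        f"(roughly ${per_server / 2:,.0f} each in CapEx and OpEx)"
    )
```

Under these assumptions, the quoted range works out to 1,400 to 1,800 servers avoided and a little under $6,000 saved per avoided server over three years.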

If you are deploying only a few ESX hosts, Redis servers still benefit from the increased application performance. The accelerated servers will likely avoid future costs by delaying the purchase or upgrade of servers as application needs grow over time.

These results and cost savings are specific to the 25G DPU used in the tests. At the maximum scale tested here, accelerated mode was limited by the line rate of the DPU.

Accelerating Redis performance using VMware vSphere 8 and NVIDIA BlueField DPU

The Accelerating Redis performance using VMware vSphere 8 and NVIDIA BlueField DPU whitepaper documents the testing and results. The paper reveals how using vSphere with hardware-accelerated networking offloads from a BlueField DPU can significantly increase application performance, provide higher throughput, and enable faster response times.

It also shows how offloading to the DPU can free server CPU cores to run applications and increase operational efficiency. DPU offload and acceleration also reduce the power used per application transaction, resulting in a more efficient data center and significant cost savings from reduced electricity consumption.

Experience VMware on BlueField DPUs through NVIDIA LaunchPad

To experience the BlueField DPU advantages, NVIDIA offers LaunchPad, a demo area well-suited for showcasing the benefits. You can apply to test various applications and libraries running on vSphere and BlueField without needing to purchase and deploy hardware in your data center.

LaunchPad includes several curated labs that walk you through deployment and performance benchmarks running in several use cases, including Redis on vSphere with BlueField DPUs.

This lab guides you through a step-by-step process of installing, configuring, and deploying Redis in a vSphere 8 environment. It enables you to compare Redis testing with and without BlueField DPU acceleration to verify performance gains.

LaunchPad provides developers, designers, and IT professionals with rapid access to the hardware and tools needed to familiarize themselves with new technologies and determine how they can benefit from DPU acceleration. Enterprise teams can use LaunchPad to speed up creating and deploying modern, data-intensive applications. After quick testing and prototyping sessions on LaunchPad, the same complete stack can be deployed for their production workflows.

Summary

DPUs are already broadly deployed in hyperscalers to tackle infrastructure functions and free up CPU cycles for revenue-generating workloads. Every node that has vSphere Distributed Services Engine installed and a BlueField DPU can use DPU offloads to improve performance. This combination gives enterprises an effective way to address the strain that new workloads place on servers.

Based on the results from NVIDIA testing with VMware and the NVIDIA LaunchPad labs, adding DPUs to VMware servers drives down TCO while improving overall workload processing. Offloading the infrastructure processes to the DPU also improves overall security by adding isolation between the CPU and the infrastructure.

For more information, see the following resources:

- vSphere on DPUs Behind the Scenes: A Technical Deep Dive (GTC session)

- Redis on VMware with BlueField DPU (whitepaper)

- Benchmarking Redis Workloads Lab | VMware vSphere on NVIDIA BlueField DPU (video)

- vSphere on DPUs

Join us for the Hybrid Cloud Architecture with VMware vSphere on NVIDIA BlueField DPU webinar (on-demand).