The NVIDIA BlueField-2 data processing unit (DPU) delivers unmatched software-defined networking (SDN) performance, programmability, and scalability. It integrates eight Arm CPU cores, the secure and advanced ConnectX-6 Dx cloud network interface, and hardware accelerators that together offload, accelerate, and isolate SDN functions, performing connection tracking, flow matching, and advanced packet processing.

This post outlines the basic tenets of an accurate SDN performance benchmark and demonstrates the actual results achievable on the NVIDIA ConnectX-6 Dx with accelerators enabled. The BlueField-2 and next-generation BlueField-3 DPUs include additional acceleration capabilities and offer higher performance for a broader range of use cases.

SDN performance benchmark best practices

Any SDN performance evaluation of the BlueField DPUs or ConnectX SmartNICs should leverage the full power of the hardware accelerators. BlueField-2’s packet processing actions are programmable through the NVIDIA ASAP² (accelerated switching and packet processing) engine. The SDN accelerators featured on both the BlueField DPUs and ConnectX SmartNICs rely on ASAP² and other programmable hardware accelerators to achieve line-rate networking performance.

NVIDIA ASAP² support has been integrated into the upstream Linux kernel and the Data Plane Development Kit (DPDK) framework, and it is readily available in a range of Linux distributions and cloud management platforms.

Connection tracking acceleration is available starting with Linux kernel 5.6. The best practice is to use a modern enterprise Linux distribution, such as Ubuntu 20.04 or Red Hat Enterprise Linux 8.4. These newer releases include inbox support for SDN with connection tracking acceleration on ConnectX-6 Dx SmartNICs and BlueField-2 DPUs. Benchmarking SDN with connection tracking on a Linux system with an outdated kernel would be misleading, because the test would not exercise the connection tracking accelerators.
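
Before benchmarking, it can help to confirm that the test host meets the kernel requirement. The following is a minimal sketch, not an official NVIDIA tool: the function names are hypothetical, and the only fact taken from this post is the 5.6 threshold. Treat the result as a first-pass heuristic, since enterprise distributions can backport features into kernels with older version numbers, so a failing check does not prove that acceleration is unavailable.

```python
import platform
import re
from typing import Tuple

# First upstream kernel release this post cites for connection tracking acceleration.
MIN_KERNEL = (5, 6)


def parse_kernel_release(release: str) -> Tuple[int, int]:
    """Extract (major, minor) from a release string such as '5.4.0-150-generic'."""
    match = re.match(r"(\d+)\.(\d+)", release)
    if match is None:
        raise ValueError(f"unrecognized kernel release: {release!r}")
    return int(match.group(1)), int(match.group(2))


def meets_minimum_kernel(release: str, minimum: Tuple[int, int] = MIN_KERNEL) -> bool:
    """Return True if the release is at or above the minimum (major, minor)."""
    return parse_kernel_release(release) >= minimum


def running_kernel_ok() -> bool:
    """Check the kernel of the machine this script runs on."""
    return meets_minimum_kernel(platform.release())
```

Calling `running_kernel_ok()` on the system under test gives a quick yes or no. When it returns `False` on an enterprise distribution, check the vendor's release notes before concluding that the kernel is unsuitable.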

Finally, for any SDN benchmark to be effective, it must be representative of the SDN pipelines implemented in real-world cloud data centers, where hundreds of thousands of connections are the norm. Both ConnectX-6 Dx SmartNICs and BlueField-2 DPUs are designed for, and deployed in, hyperscale environments, and they deliver breakthrough network performance at cloud scale.

Accelerated SDN performance

Consider the performance of the NVIDIA ConnectX-6 Dx. The following benchmarks show the throughput and latency of an SDN pipeline with connection tracking hardware acceleration enabled. We ran the tests using a system setup, testing tools, and procedures similar to those of other reported results. We used Open vSwitch (OVS) with DPDK to enable connection tracking acceleration on the ConnectX-6 Dx SmartNIC.

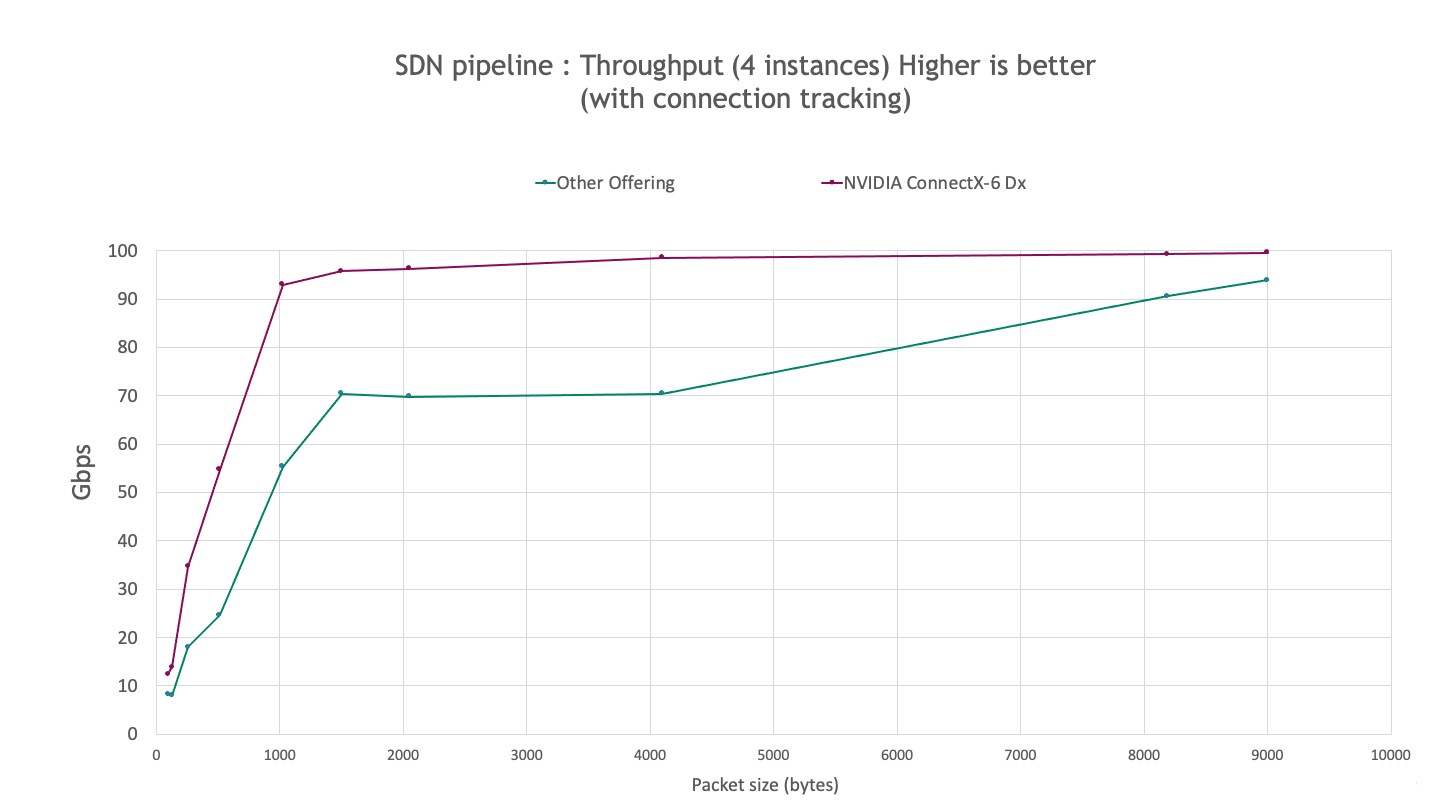

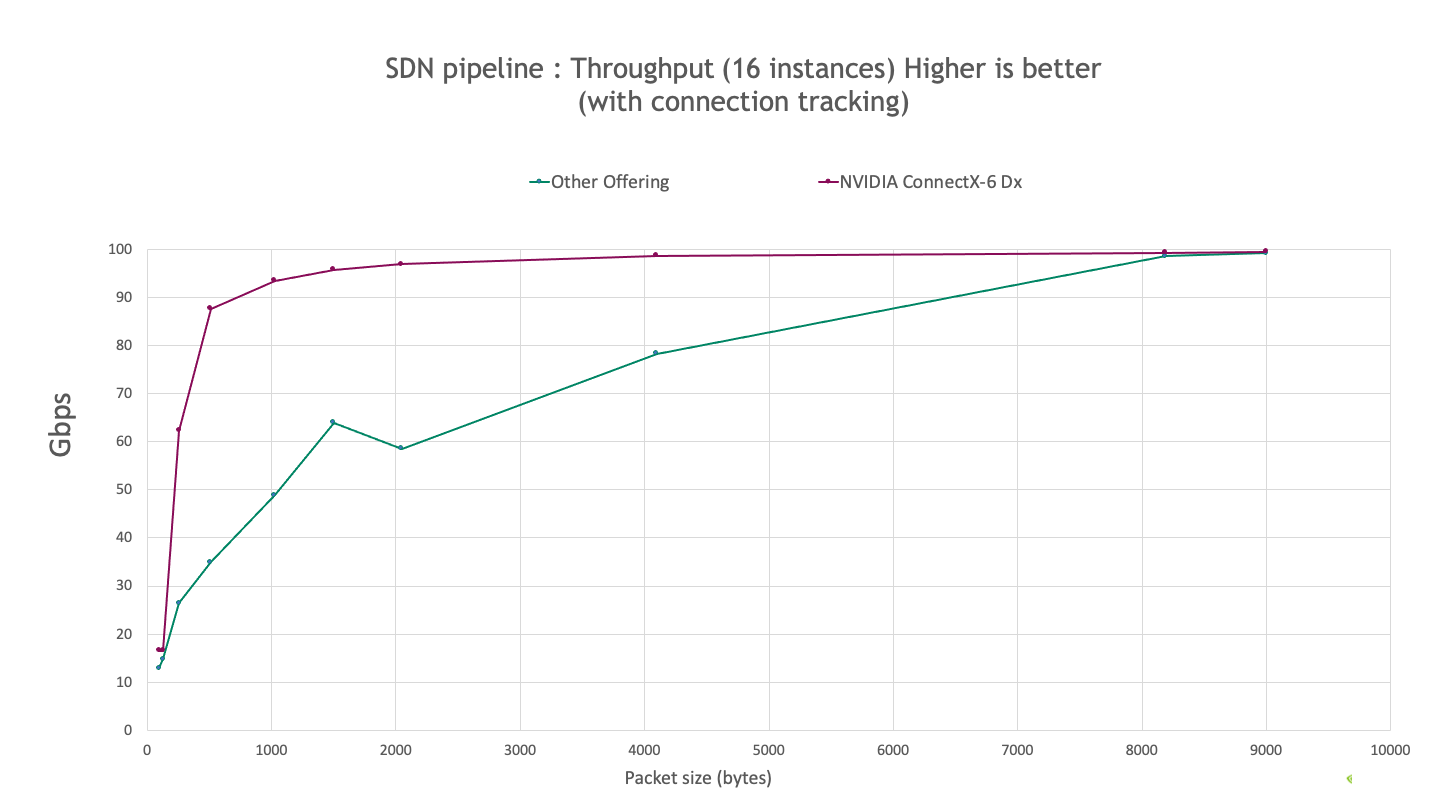

The following charts describe the SDN throughput measured with the iperf3 tool for 4 and 16 iperf3 instances, with one flow per instance.
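
To make the "N instances, one flow per instance" setup concrete, here is a minimal sketch that builds matching iperf3 server and client command lines. It is an illustration under assumptions, not the exact procedure used for these results: the server address, run duration, port layout, and optional `-l` buffer length are all hypothetical, and the post does not state how packet sizes were set.

```python
from typing import List, Optional

IPERF3_DEFAULT_PORT = 5201  # iperf3's default port; each instance gets its own port


def iperf3_server_commands(instances: int,
                           base_port: int = IPERF3_DEFAULT_PORT) -> List[List[str]]:
    """One iperf3 server per instance, each listening on a distinct port."""
    return [["iperf3", "-s", "-p", str(base_port + i)] for i in range(instances)]


def iperf3_client_commands(server: str,
                           instances: int,
                           base_port: int = IPERF3_DEFAULT_PORT,
                           duration_s: int = 30,  # hypothetical run length
                           length_bytes: Optional[int] = None) -> List[List[str]]:
    """One iperf3 client per instance, each carrying a single flow (-P 1)."""
    commands = []
    for i in range(instances):
        cmd = ["iperf3", "-c", server, "-p", str(base_port + i),
               "-t", str(duration_s), "-P", "1", "-J"]  # -J emits JSON results
        if length_bytes is not None:
            # Assumption: message size is controlled with the -l buffer length.
            cmd += ["-l", str(length_bytes)]
        commands.append(cmd)
    return commands
```

Each returned list can be passed to `subprocess.Popen` so that all instances run concurrently. Summing the per-instance throughput from the JSON output then gives the aggregate figure for a 4- or 16-instance run.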

Key findings:

- ConnectX-6 Dx delivers up to 120% higher throughput with 4 instances and up to 150% higher throughput with 16 instances, across all packet sizes tested.

- ConnectX-6 Dx achieves more than 90% of line rate for packets as small as 1 KB, whereas the other offerings need 8-KB packets to reach that level.
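
The percentages in these findings follow from two simple ratios. The sketch below shows the arithmetic only. The function names are hypothetical, and the numbers in the usage note are made-up placeholders, not measurements from these benchmarks.

```python
def percent_higher(value: float, baseline: float) -> float:
    """How much higher `value` is than `baseline`, as a percentage of the baseline."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (value - baseline) * 100.0 / baseline


def percent_of_line_rate(throughput_gbps: float, line_rate_gbps: float) -> float:
    """Measured throughput as a percentage of the link's nominal line rate."""
    if line_rate_gbps <= 0:
        raise ValueError("line rate must be positive")
    return throughput_gbps * 100.0 / line_rate_gbps
```

For example, with a placeholder baseline of 10 Gb/s, a result of 22 Gb/s is 120% higher (2.2 times the baseline) and 25 Gb/s is 150% higher (2.5 times the baseline). Note that "120% higher" is not the same as "120% of" the baseline.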

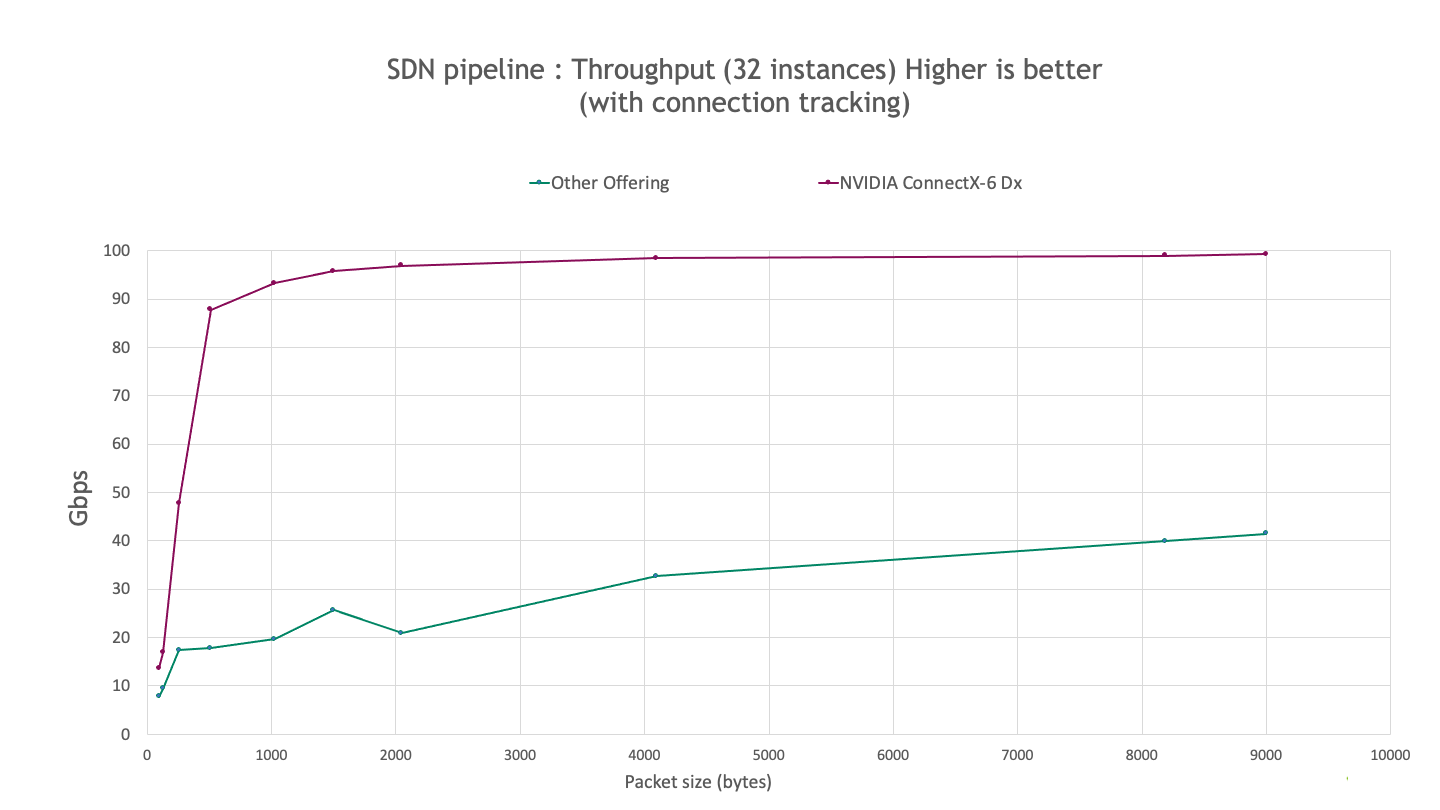

The following chart shows the observed performance for an SDN pipeline with 32 instances on the same system setup. The results show that ConnectX-6 Dx scales much better as the number of flows increases, delivering up to 4x higher throughput.

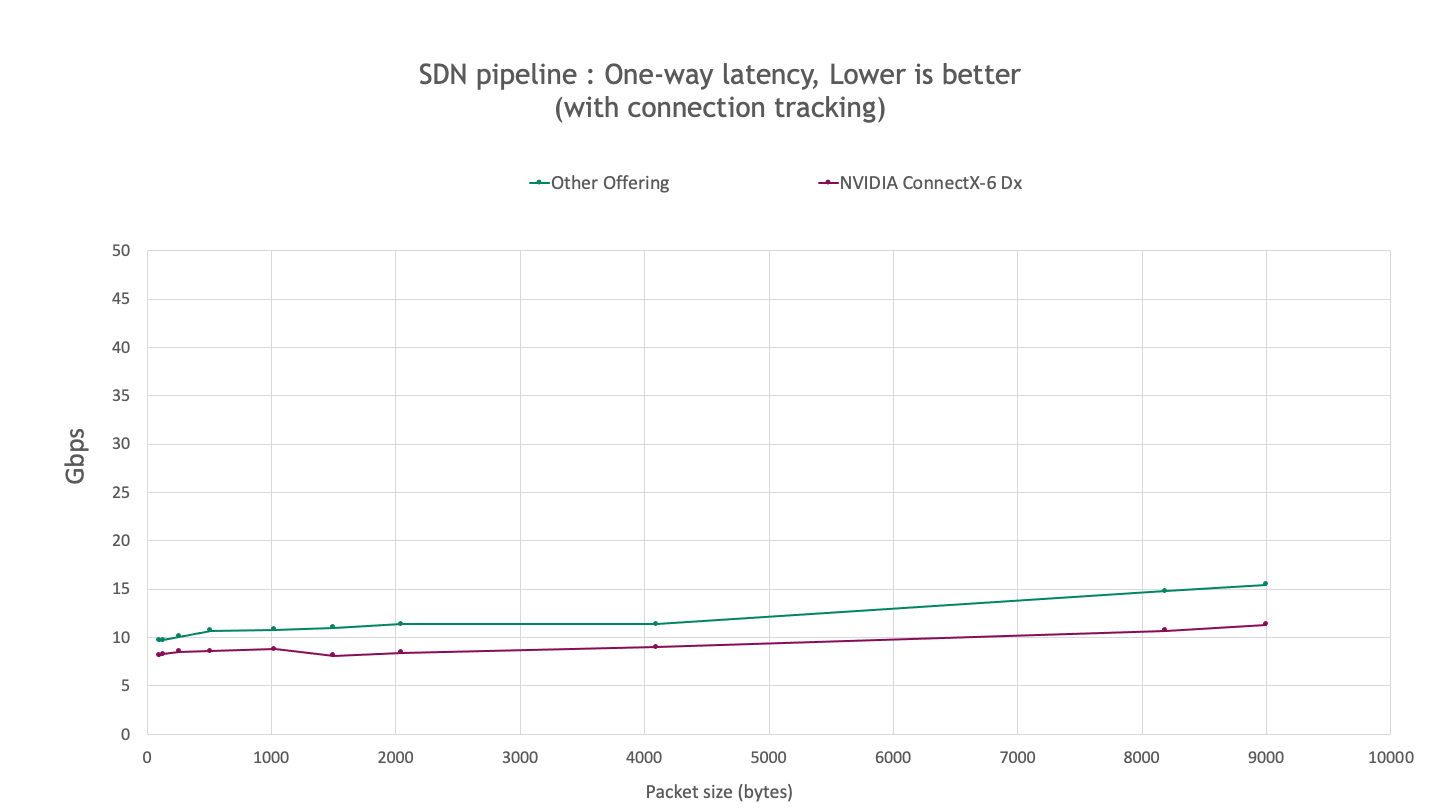

The following benchmark measures latency using sockperf. The results indicate that ConnectX-6 Dx provides roughly 20-30% lower latency than other offerings across all packet sizes tested.
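
A latency sweep of this kind can be sketched as one sockperf ping-pong run per message size, followed by a relative comparison. This is a hedged illustration: the address, port, duration, and message sizes are hypothetical, the helper names are invented for this example, and the post does not list the exact sockperf options used.

```python
from typing import List, Sequence


def sockperf_pingpong_commands(server_ip: str,
                               msg_sizes: Sequence[int],
                               port: int = 11111,      # assumed port
                               duration_s: int = 10    # hypothetical run length
                               ) -> List[List[str]]:
    """One sockperf ping-pong client command per message size."""
    return [["sockperf", "ping-pong", "-i", server_ip, "-p", str(port),
             "-m", str(size), "-t", str(duration_s)]
            for size in msg_sizes]


def percent_lower(value: float, baseline: float) -> float:
    """How much lower `value` is than `baseline`, as a percentage of the baseline."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (baseline - value) * 100.0 / baseline
```

With placeholder latencies of 7 µs against a 10 µs baseline, `percent_lower(7.0, 10.0)` reports a 30% reduction. Run the commands sequentially rather than in parallel so that the runs do not perturb each other's latency.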

Non-accelerated connection tracking implementations create bottlenecks on the host CPU. Offloading connection tracking to the on-chip accelerators means the performance achieved in these benchmarks is not strongly dependent on the host CPU or its ability to drive the test bench. These results are also indicative of the performance achievable on the BlueField-2 DPU, which integrates ConnectX-6 Dx.
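
For readers unfamiliar with what a connection tracking pipeline looks like in OVS, the sketch below builds the commands for a deliberately small one. It is an assumption-laden illustration, not the pipeline used in these benchmarks: the bridge name is hypothetical, the four OpenFlow rules are a textbook-style minimum, and production pipelines contain many more tables and rules.

```python
from typing import List

# Minimal stateful pipeline (illustrative only):
#   table 0 sends untracked IP packets through conntrack, then on to table 1
#   table 1 commits new connections and forwards established ones
CT_FLOWS = [
    "table=0,priority=100,ip,ct_state=-trk,actions=ct(table=1)",
    "table=1,priority=100,ip,ct_state=+trk+new,actions=ct(commit),NORMAL",
    "table=1,priority=100,ip,ct_state=+trk+est,actions=NORMAL",
    "table=0,priority=10,arp,actions=NORMAL",
]


def ovs_conntrack_setup_commands(bridge: str) -> List[List[str]]:
    """Commands that enable OVS hardware offload and install the flows above."""
    commands = [
        # Enabling hw-offload typically requires restarting OVS to take effect.
        ["ovs-vsctl", "set", "Open_vSwitch", ".", "other_config:hw-offload=true"],
        # Start from an empty flow table on the (hypothetical) bridge.
        ["ovs-ofctl", "del-flows", bridge],
    ]
    commands += [["ovs-ofctl", "add-flow", bridge, flow] for flow in CT_FLOWS]
    return commands
```

With offload enabled, flows for established connections become candidates for handling on the NIC rather than the host CPU. This is why the host CPU matters less in the accelerated results.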

BlueField-3 supports higher performance levels

NVIDIA welcomes the opportunity to test and showcase the performance of ConnectX-6 Dx and BlueField-2 while adhering to industry best practices and operating standards. The data shown in this post compares the benchmark results for ConnectX-6 Dx to results reported elsewhere. ConnectX-6 Dx provides up to 4x higher throughput and up to 30% lower latency than other offerings. These benchmark results demonstrate NVIDIA's leadership position in SDN acceleration technologies.

BlueField-3 is the next-generation NVIDIA DPU and integrates the advanced ConnectX-7 adapter and additional acceleration engines. With 400 Gb/s networking, more powerful Arm CPU cores, and a highly programmable Datapath Accelerator (DPA), BlueField-3 delivers even higher levels of performance and programmability to address the most demanding workloads in massive-scale data centers. Existing DPU-accelerated SDN applications built on BlueField-2 using DOCA will benefit from the performance enhancements that BlueField-3 brings, without any code changes.

Learn more about modernizing your data center infrastructure with BlueField DPUs. Stay tuned for even higher SDN performance with BlueField-3 arriving in 2022.