The release of MiniMax M2.7 builds on the popular MiniMax M2.5 model. It is built for agentic harnesses and other complex use cases in fields such as reasoning, ML research workflows, software engineering, and office work. The open weights release of MiniMax M2.7 is now available through NVIDIA and across the open source inference ecosystem.

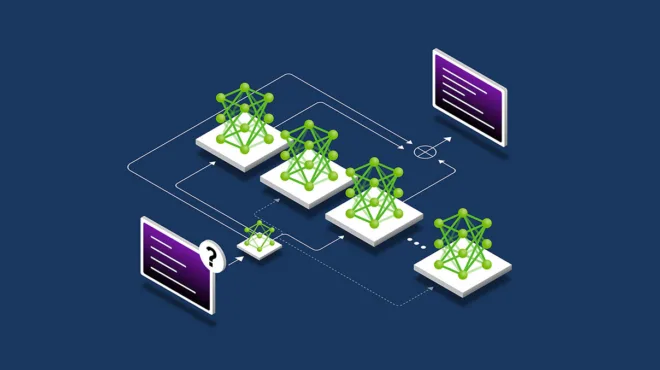

The MiniMax M2 series is a sparse mixture-of-experts (MoE) model family designed for efficiency and capability. It uses multi-head causal self-attention enhanced with Rotary Position Embeddings (RoPE) and Query-Key Root Mean Square Normalization (QK RMSNorm) for stable training at scale. A top-k expert routing mechanism activates only the highest-scoring experts for each token (8 of 256 in M2.7), so roughly 10B of the model's 230B total parameters are used per token. This keeps inference costs low while preserving the capacity of the full model. The result is an architecture tuned for coding challenges and complex agentic tasks.
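The routing idea can be illustrated with a minimal, framework-free sketch. It is a generic top-k router, not the MiniMax implementation: the source does not describe the gating function, so the softmax over the selected logits and the names `route_top_k` and `moe_forward` are assumptions made for illustration.

```python
import math

def route_top_k(router_logits, k):
    """Return (expert_index, weight) pairs for the k highest-scoring experts."""
    # Indices of the k largest logits, highest first (ties keep index order).
    top = sorted(range(len(router_logits)),
                 key=lambda i: router_logits[i], reverse=True)[:k]
    # Assumption: softmax over the selected logits only, so the weights sum to 1.
    peak = max(router_logits[i] for i in top)
    exps = [math.exp(router_logits[i] - peak) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]

def moe_forward(token, router_logits, experts, k):
    """Run only the selected experts and sum their outputs, weighted by the router."""
    output = [0.0] * len(token)
    for index, weight in route_top_k(router_logits, k):
        expert_out = experts[index](token)
        output = [o + weight * y for o, y in zip(output, expert_out)]
    return output

# Toy setup: 4 experts that each scale the input, 2 active per token.
experts = [lambda x, s=s: [s * v for v in x] for s in (1.0, 2.0, 3.0, 4.0)]
logits = [0.1, 2.0, -1.0, 2.0]
selected = route_top_k(logits, k=2)
result = moe_forward([1.0, 1.0], logits, experts, k=2)
```

In the toy setup only two of the four expert functions are ever called, which is the source of the compute savings. With the configuration listed for M2.7, the same pattern would select 8 of 256 experts for each token.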

| MiniMax M2.7 | |
| --- | --- |
| Modalities | Language |
| Total parameters | 230B |
| Active parameters | 10B |
| Activation rate | 4.3% |
| Input context length | 200K |
| Additional configuration information | |
| Experts | 256 local experts |
| Experts activated per token | 8 |
| Layers | 62 |
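As a quick check of the figures in the table, the activation rate follows directly from the active and total parameter counts. The short calculation below also derives the share of experts selected per token; the variable names are illustrative only.

```python
total_params = 230e9     # total parameters, from the table
active_params = 10e9     # active parameters per token, from the table
experts_total = 256      # local experts
experts_active = 8       # experts activated per token

# Share of all parameters used for a single token.
activation_rate = active_params / total_params
# Share of experts selected by the router for a single token.
expert_fraction = experts_active / experts_total

print(f"Active parameter share: {activation_rate:.1%}")
print(f"Experts selected per token: {expert_fraction:.3%}")
```

The parameter share comes out slightly higher than the expert share. One likely reason, not stated in the source, is that components such as attention and embeddings run for every token regardless of routing.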

Building long-running agents with NVIDIA NemoClaw

NVIDIA NemoClaw is an open source reference stack that makes it simpler and safer to run always-on OpenClaw assistants with a single command. It installs the NVIDIA OpenShell runtime, a secure environment for running autonomous agents with endpoints or open models like M2.7. Developers can get started today with this one-click launchable to provision an environment with OpenClaw and OpenShell on the NVIDIA Brev cloud AI GPU platform.

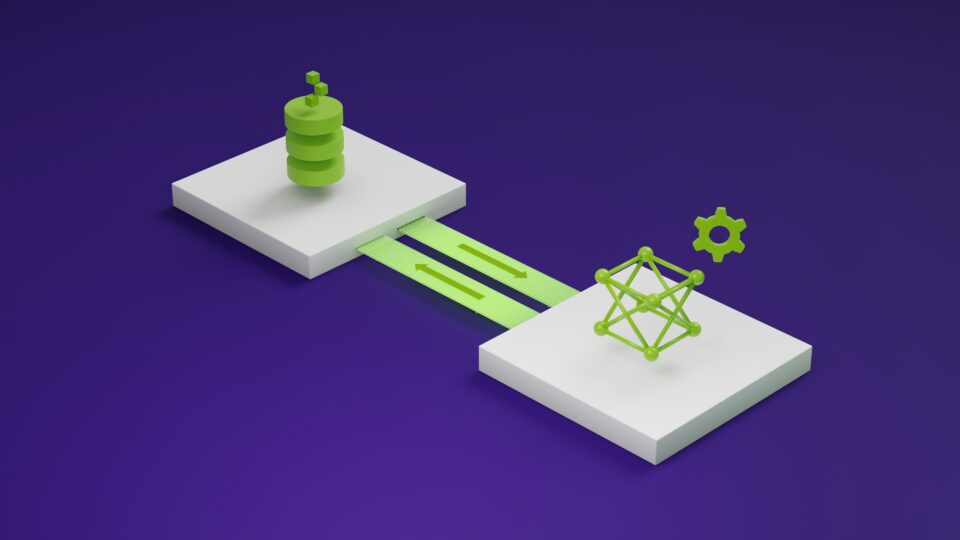

Inference optimizations with open source frameworks

To maximize performance for the MiniMax M2 series of models, NVIDIA collaborated with the open source community to integrate high-performance kernels into vLLM and SGLang. These optimizations specifically target the architectural demands of large-scale MoE models:

- QK RMSNorm kernel: This optimization fuses computation and communication operations into a single kernel that normalizes query and key together. Fusing lets the kernel overlap computation with communication and reduces kernel launch and memory read/write overhead, improving inference performance.

- FP8 MoE: This integrates the NVIDIA TensorRT-LLM FP8 MoE modular kernel, which is optimized specifically for MoE models and boosts end-to-end performance.
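For readers unfamiliar with the operation the first kernel fuses, here is a plain-Python sketch of the math behind QK RMSNorm. It shows only the arithmetic, not the fused GPU kernel or its cross-GPU communication. The epsilon value, the per-vector normalization, and the function names are assumptions rather than details from the vLLM or SGLang implementations.

```python
import math

def rms_norm(vector, weight, eps=1e-6):
    """Scale a vector to unit root-mean-square, then apply a learned gain."""
    mean_square = sum(v * v for v in vector) / len(vector)
    inv_rms = 1.0 / math.sqrt(mean_square + eps)
    return [v * inv_rms * w for v, w in zip(vector, weight)]

def qk_rms_norm(query, key, q_weight, k_weight, eps=1e-6):
    """Normalize query and key in one call, mirroring what a fused kernel
    does in a single launch instead of two separate ones."""
    return rms_norm(query, q_weight, eps), rms_norm(key, k_weight, eps)

# Toy vectors with unit gains.
q, k = qk_rms_norm([3.0, 4.0], [1.0, -1.0], [1.0, 1.0], [1.0, 1.0])
```

After normalization, both vectors have a root-mean-square of approximately 1, which keeps the attention logits in a stable range. Handling query and key in one pass avoids a second kernel launch and a second round trip through memory.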

The following vLLM result was measured on NVIDIA Blackwell Ultra GPUs with a 1K/1K ISL/OSL (input/output sequence length) dataset. The two optimizations delivered up to a 2.5x improvement in throughput within one month.

Figure 2 shows the SGLang result on NVIDIA Blackwell Ultra GPUs, using a 1K/1K ISL/OSL dataset. The two optimizations delivered up to a 2.7x improvement in throughput within one month.

Deploying with vLLM

When deploying models with the vLLM serving framework, use the following instructions. For more information, see the vLLM guide.

    $ vllm serve MiniMaxAI/MiniMax-M2.7 \
        --tensor-parallel-size 4 \
        --tool-call-parser minimax_m2 \
        --reasoning-parser minimax_m2_append_think \
        --enable-auto-tool-choice \
        --trust-remote-code \
        --enable-expert-parallel
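Once the server is running, it can be queried through vLLM's OpenAI-compatible HTTP API. The sketch below uses only the Python standard library. It assumes the server is reachable on localhost at vLLM's default port 8000 and that the model name matches the one passed to `vllm serve`. The helper names `build_chat_request` and `chat` are illustrative, not part of vLLM.

```python
import json
import urllib.request

# Assumption: the server runs locally on vLLM's default port (8000).
BASE_URL = "http://localhost:8000/v1"

def build_chat_request(prompt, model="MiniMaxAI/MiniMax-M2.7", max_tokens=256):
    """Build an OpenAI-style chat completion request for the served model."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )

def chat(prompt):
    """Send the prompt to the server and return the assistant's reply text."""
    with urllib.request.urlopen(build_chat_request(prompt)) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

Calling `chat("Write a function that reverses a linked list.")` sends one request and returns the generated text. Adjust `BASE_URL` if the server was started with a different host or port.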

Deploying with SGLang

Users deploying models with the SGLang serving framework can use the following instructions. See the SGLang documentation for more information and configuration options.

    $ sglang serve \
        --model-path MiniMaxAI/MiniMax-M2.7 \
        --tp-size 4 \
        --trust-remote-code \
        --disable-radix-cache \
        --max-running-requests 512 \
        --mem-fraction-static 0.85 \
        --cuda-graph-max-bs 512 \
        --kv-cache-dtype fp8_e4m3 \
        --quantization fp8 \
        --stream-interval 10 \
        --reasoning-parser=minimax-append-think \
        --dtype bfloat16 \
        --moe-runner-backend flashinfer_trtllm_routed \
        --fp8-gemm-backend flashinfer_trtllm \
        --enable-flashinfer-allreduce-fusion \
        --scheduler-recv-interval 10
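Because the command sets `--stream-interval`, which appears to control how many tokens are buffered per streamed chunk, clients will typically receive output in multi-token pieces when streaming is enabled. The sketch below reassembles such a stream. It assumes the server emits OpenAI-style server-sent events (`data: {...}` lines ending with `data: [DONE]`) and that lines have already been decoded to strings. The function name `iter_stream_text` is hypothetical.

```python
import json

def iter_stream_text(lines):
    """Yield text fragments from OpenAI-style server-sent event lines."""
    for raw in lines:
        line = raw.strip()
        if not line.startswith("data:"):
            continue  # Skip blank separator lines and other SSE fields.
        data = line[len("data:"):].strip()
        if data == "[DONE]":
            break  # End-of-stream sentinel.
        chunk = json.loads(data)
        for choice in chunk.get("choices", []):
            text = choice.get("delta", {}).get("content")
            if text:
                yield text

# Sample lines in the assumed format, standing in for a live response.
sample = [
    'data: {"choices": [{"delta": {"role": "assistant"}}]}',
    "",
    'data: {"choices": [{"delta": {"content": "Hello"}}]}',
    'data: {"choices": [{"delta": {"content": ", world"}}]}',
    "data: [DONE]",
]
reply = "".join(iter_stream_text(sample))
```

With a live server, the same generator can consume decoded lines from a streaming HTTP response instead of the `sample` list.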

Build with NVIDIA endpoints

Start building with MiniMax M2.7 through free, GPU‑accelerated endpoints hosted on NVIDIA GPUs. Quickly test prompts in the browser on build.nvidia.com and evaluate performance with your own data. Scale to production with NVIDIA NIM—optimized, containerized inference microservices, deployable on‑prem, in the cloud, or hybrid.

Post-training with NVIDIA NeMo Framework

To fine‑tune MiniMax M2.7, use the open source NVIDIA NeMo AutoModel library, part of the NVIDIA NeMo Framework, with the M2.7 recipe and doc on the latest checkpoints available on Hugging Face. Users can perform reinforcement learning on MiniMax M2.7 using their choice of data and the NeMo RL library, with sample recipes (8k sequence, 16k sequence) and reference accuracy validation curves.

Get started with MiniMax M2.7

From data center deployments on NVIDIA Blackwell to the fully managed enterprise NVIDIA NIM microservice to fine-tuning, NVIDIA offers solutions for integrating MiniMax M2.7. To get started, check out the MiniMax M2.7 page on Hugging Face or on build.nvidia.com.