Mixed Precision

Jan 27, 2021

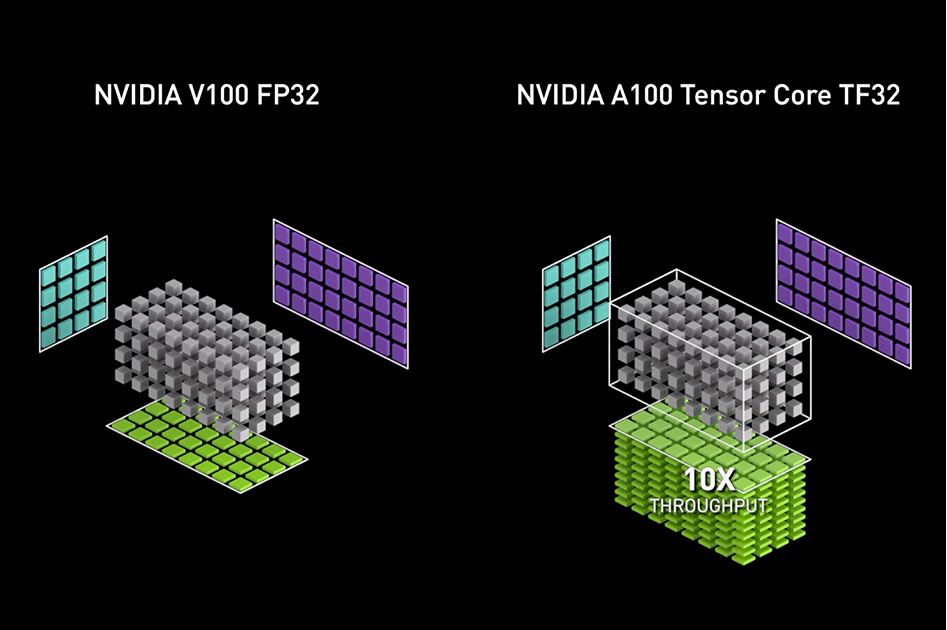

Accelerating AI Training with NVIDIA TF32 Tensor Cores

NVIDIA Ampere GPU architecture introduced the third generation of Tensor Cores, with the new TensorFloat32 (TF32) mode for accelerating FP32 convolutions and...

10 MIN READ

Jan 30, 2019

Video Series: Mixed-Precision Training Techniques Using Tensor Cores for Deep Learning

Neural networks with thousands of layers and millions of neurons demand high performance and faster training times. The complexity and size of neural networks...

5 MIN READ

Jan 23, 2019

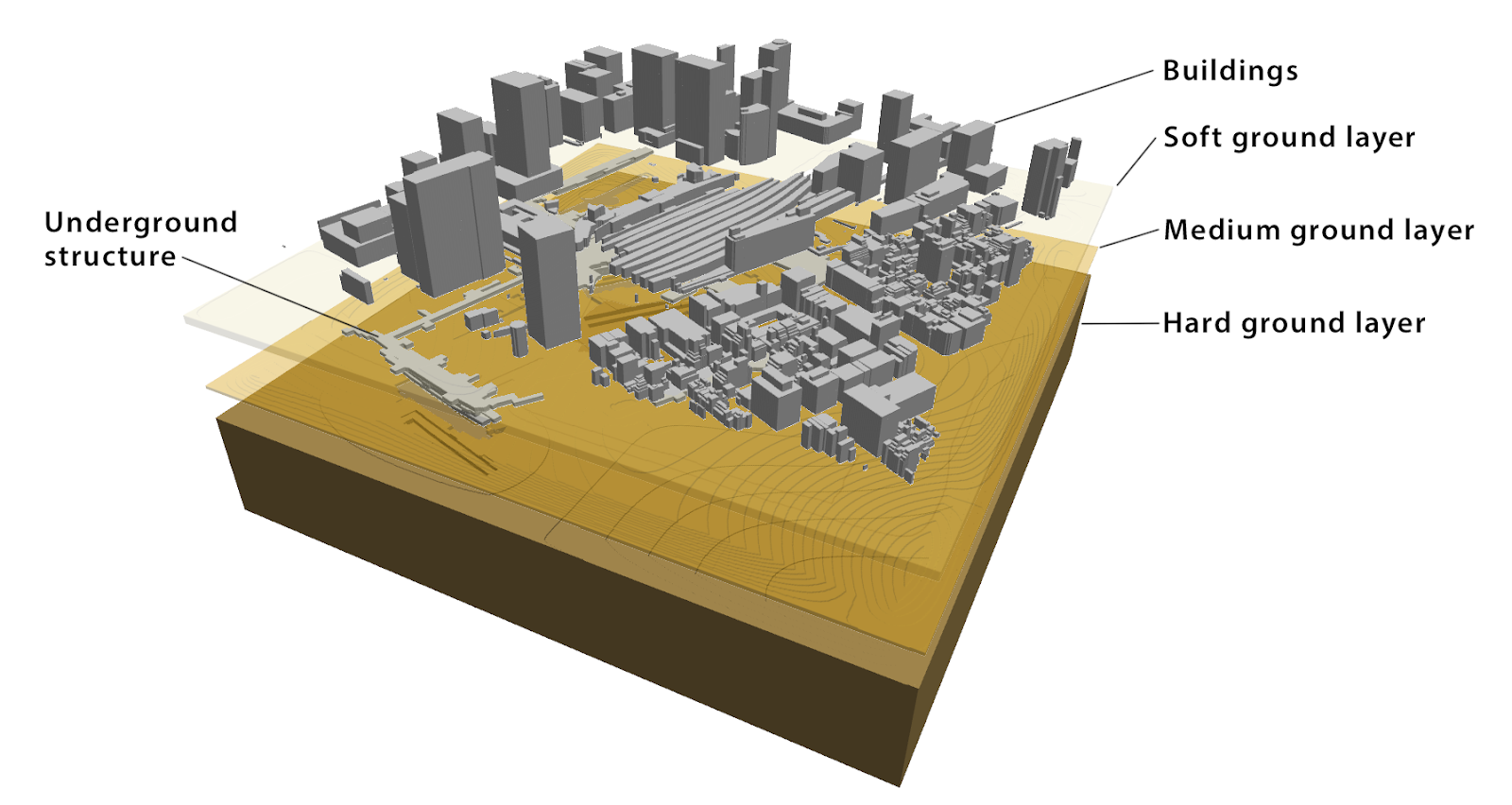

Using Tensor Cores for Mixed-Precision Scientific Computing

Double-precision floating point (FP64) has been the de facto standard for doing scientific simulation for several decades. Most numerical methods used in...

9 MIN READ

Dec 03, 2018

NVIDIA Apex: Tools for Easy Mixed-Precision Training in PyTorch

Most deep learning frameworks, including PyTorch, train using 32-bit floating point (FP32) arithmetic by default. However, using FP32 for all operations is not...

8 MIN READ

Oct 09, 2018

Mixed Precision Training for NLP and Speech Recognition with OpenSeq2Seq

The success of neural networks thus far has been built on bigger datasets, better theoretical models, and reduced training time. Sequential models, in...

11 MIN READ

Aug 20, 2018

Tensor Ops Made Easier in cuDNN

Neural network models have quickly taken advantage of NVIDIA Tensor Cores for deep learning since their introduction in the Tesla V100 GPU last year. For...

6 MIN READ

Dec 11, 2017

Fast INT8 Inference for Autonomous Vehicles with TensorRT 3

Autonomous driving demands safety, and a high-performance computing solution to process sensor data with extreme accuracy. Researchers and developers creating...

13 MIN READ

Oct 17, 2017

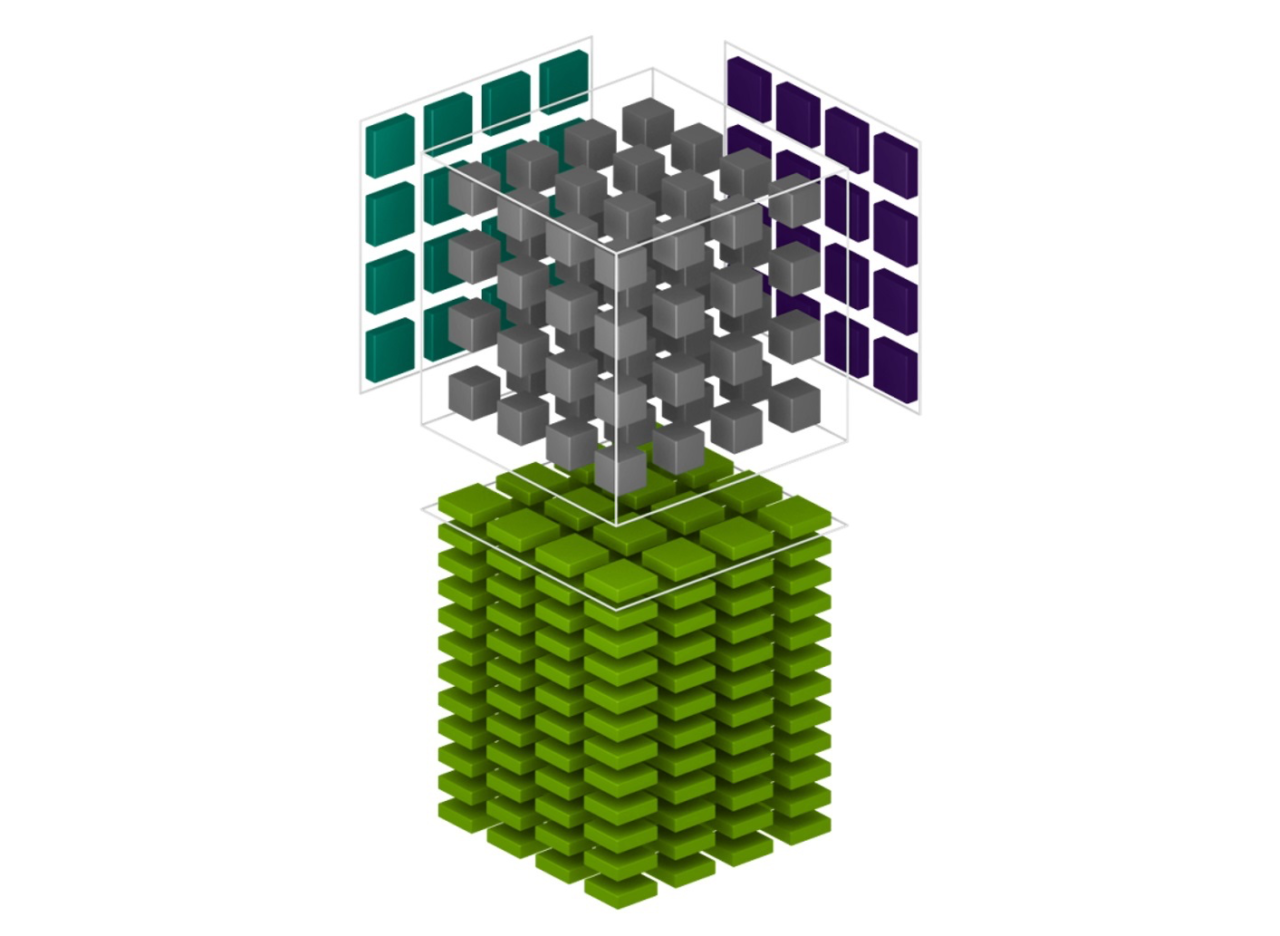

Programming Tensor Cores in CUDA 9

A defining feature of the new NVIDIA Volta GPU architecture is Tensor Cores, which give the NVIDIA V100 accelerator a peak throughput that is 12x...

16 MIN READ

Oct 11, 2017

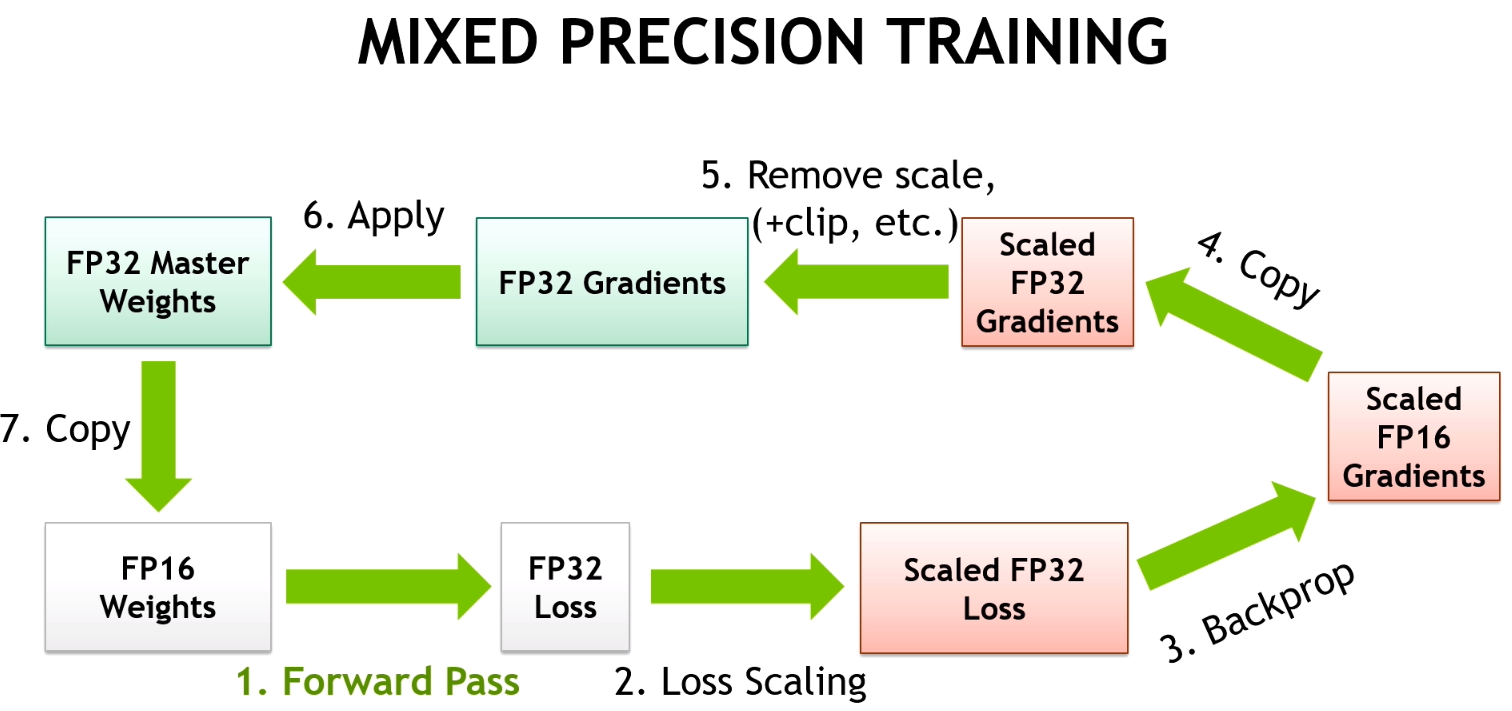

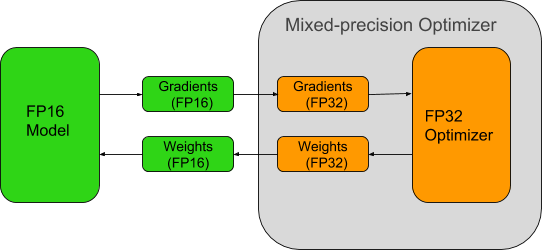

Mixed-Precision Training of Deep Neural Networks

Deep Neural Networks (DNNs) have led to breakthroughs in a number of areas, including image processing and understanding, language modeling, language...

9 MIN READ

Oct 19, 2016

Mixed-Precision Programming with CUDA 8

Update, March 25, 2019: The latest Volta and Turing GPUs now incorporate Tensor Cores, which accelerate certain types of FP16 matrix math. This enables faster...

17 MIN READ